170 results

Impact of reduced rates of tiafenacil at vegetative growth stages on rice growth and yield

- Journal: Weed Technology / Accepted manuscript
- Published online by Cambridge University Press: 03 June 2024, pp. 1-17
- Article
- Open access

Socio-ecological factors linked with changes in adults’ dietary intake in Los Angeles County during the peak of the coronavirus 2019 pandemic

- Journal: Public Health Nutrition / Volume 27 / Issue 1 / 2024
- Published online by Cambridge University Press: 07 May 2024, e133
- Article
- Open access

Organisation of paediatric echocardiography laboratories and governance of echocardiography services and training in Europe: current status, disparities and potential solutions. A survey from the Association for European Paediatric and Congenital Cardiology (AEPC) imaging working group – CORRIGENDUM

- Journal: Cardiology in the Young, First View
- Published online by Cambridge University Press: 01 April 2024, p. 1
- Article
- Open access

Organisation of paediatric echocardiography laboratories and governance of echocardiography services and training in Europe: current status, disparities, and potential solutions. A survey from the Association for European Paediatric and Congenital Cardiology (AEPC) imaging working group

- Journal: Cardiology in the Young, First View
- Published online by Cambridge University Press: 05 March 2024, pp. 1-9
- Article
- Open access

Impact of cereal rye cover crop on the fate of preemergence herbicides flumioxazin and pyroxasulfone and control of Amaranthus spp. in soybean

- Journal: Weed Science / Volume 71 / Issue 5 / September 2023
- Published online by Cambridge University Press: 18 October 2023, pp. 493-505
- Article
- Open access

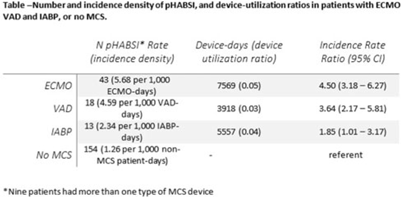

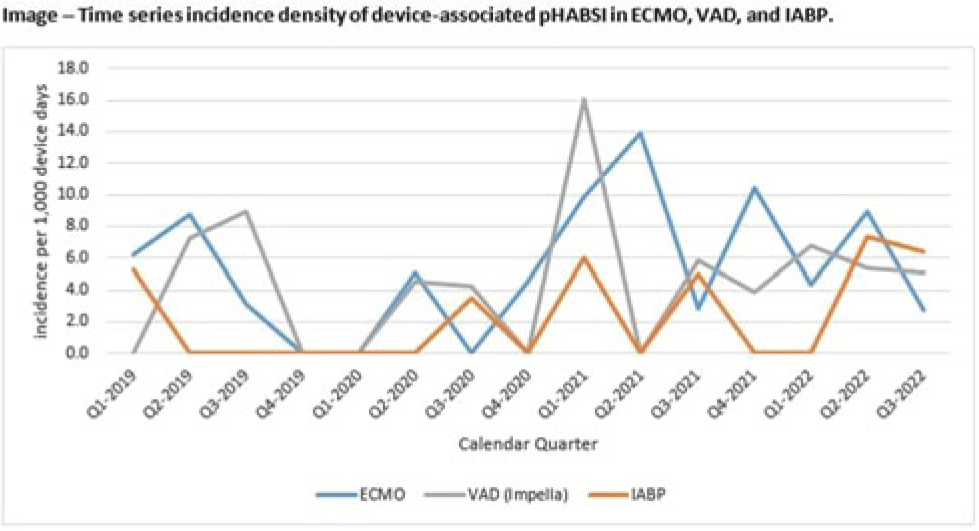

Relative risk of primary bloodstream infection in patients with mechanical circulatory support devices

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023
- Published online by Cambridge University Press: 29 September 2023, p. s6
- Article
- Open access

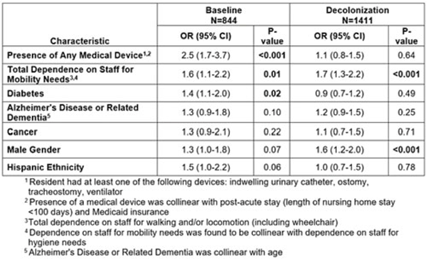

Does universal nasal/skin decolonization in nursing homes affect risk factors for MRSA carriage?

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023
- Published online by Cambridge University Press: 29 September 2023, p. s78
- Article
- Open access

Associations of alcohol and cannabis use with change in posttraumatic stress disorder and depression symptoms over time in recently trauma-exposed individuals

- Journal: Psychological Medicine / Volume 54 / Issue 2 / January 2024
- Published online by Cambridge University Press: 13 June 2023, pp. 338-349
- Article
- Open access

Development of the data registry for the Cardiac Neurodevelopmental Outcome Collaborative

- Journal: Cardiology in the Young / Volume 34 / Issue 1 / January 2024
- Published online by Cambridge University Press: 19 May 2023, pp. 79-85
- Article

Developmental care pathway for hospitalised infants with CHD: on behalf of the Cardiac Newborn Neuroprotective Network, a Special Interest Group of the Cardiac Neurodevelopmental Outcome Collaborative

- Journal: Cardiology in the Young / Volume 33 / Issue 12 / December 2023
- Published online by Cambridge University Press: 30 March 2023, pp. 2521-2538
- Article
- Open access

Can panpsychism solve thorny theological problems?

- Journal: Religious Studies, First View
- Published online by Cambridge University Press: 27 February 2023, pp. 1-4
- Article

A checklist of the bees (Hymenoptera: Apoidea) of Manitoba, Canada

- Journal: The Canadian Entomologist / Volume 155 / 2023
- Published online by Cambridge University Press: 27 January 2023, e3
- Article
- Open access

Drivers of change in Arctic fjord socio-ecological systems: Examples from the European Arctic

- Journal: Cambridge Prisms: Coastal Futures / Volume 1 / 2023
- Published online by Cambridge University Press: 13 January 2023, e13
- Article
- Open access

Criteria for performance evaluation

- Journal: Judgment and Decision Making / Volume 4 / Issue 2 / March 2009
- Published online by Cambridge University Press: 01 January 2023, pp. 164-174
- Article
- Open access

Spasticity Management Teams, Evaluations, and Tools: A Canadian Cross-Sectional Survey

- Journal: Canadian Journal of Neurological Sciences / Volume 50 / Issue 6 / November 2023
- Published online by Cambridge University Press: 21 November 2022, pp. 876-884
- Article

On minimal ideals in pseudo-finite semigroups

- Journal: Canadian Journal of Mathematics / Volume 75 / Issue 6 / December 2023
- Published online by Cambridge University Press: 15 November 2022, pp. 2007-2037
- Print publication: December 2023
- Article
- Open access

Abnormal infant neurobehavior and later neurodevelopmental delays in children with critical CHD

- Journal: Cardiology in the Young / Volume 33 / Issue 7 / July 2023
- Published online by Cambridge University Press: 14 July 2022, pp. 1102-1111
- Article
- Open access

The Effects of Remote Consultation (RC) on Outpatient Clinic Attendance Rates in City Community Mental Health Team (CMHT) and Patient Feedback on RC

- Journal: BJPsych Open / Volume 8 / Issue S1 / June 2022
- Published online by Cambridge University Press: 20 June 2022, p. S139
- Article
- Open access

Anxiety symptoms and associated functional impairment in children with CHD in a neurodevelopmental follow-up clinic

- Journal: Cardiology in the Young / Volume 33 / Issue 6 / June 2023
- Published online by Cambridge University Press: 20 June 2022, pp. 864-871
- Article
- Open access

Redefining the timing and circumstances of the chicken's introduction to Europe and north-west Africa

- Article
- Open access