757 results

The effect of older age on outcomes of rTMS treatment for treatment-resistant depression

- Journal: International Psychogeriatrics, First View

- Published online by Cambridge University Press: 25 March 2024, pp. 1-6

- Article

- Open access

Advancing health equity through action in antimicrobial stewardship and healthcare epidemiology

- Part of

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 4 / April 2024

- Published online by Cambridge University Press: 14 February 2024, pp. 412-419

- Print publication: April 2024

- Article

The impact of badmouthing of medical specialties to medical students

- Journal: Irish Journal of Psychological Medicine, First View

- Published online by Cambridge University Press: 14 February 2024, pp. 1-8

- Article

- Open access

Communicating with families of young people with hard-to-treat cancers: Healthcare professionals’ perspectives on challenges, skills, and training

- Journal: Palliative & Supportive Care, First View

- Published online by Cambridge University Press: 24 January 2024, pp. 1-7

- Article

As the crow flies: tracking policy diffusion through stakeholder networks

- Journal: Journal of Public Policy / Volume 44 / Issue 1 / March 2024

- Published online by Cambridge University Press: 04 October 2023, pp. 67-92

- Article

Changes in antibiotic prescribing by dentists in the United States, 2012–2019

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 11 / November 2023

- Published online by Cambridge University Press: 22 August 2023, pp. 1725-1730

- Print publication: November 2023

- Article

- Open access

Incidence and risk factors for clinically confirmed secondary bacterial infections in patients hospitalized for coronavirus disease 2019 (COVID-19)

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 10 / October 2023

- Published online by Cambridge University Press: 15 May 2023, pp. 1650-1656

- Print publication: October 2023

- Article

- Open access

Association of cumulative prenatal adversity with infant subcortical structure volumes and child problem behavior and its moderation by a coexpression polygenic risk score of the serotonin system

- Journal: Development and Psychopathology, First View

- Published online by Cambridge University Press: 03 April 2023, pp. 1-16

- Article

- Open access

The incidence, duration, risk factors, and age-based variation of missed opportunities to diagnose pertussis: A population-based cohort study

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 10 / October 2023

- Published online by Cambridge University Press: 15 March 2023, pp. 1629-1636

- Print publication: October 2023

- Article

- Open access

Spatial distributions of Tribrachidium, Rugoconites, and Obamus from the Ediacara Member (Rawnsley Quartzite), South Australia

- Journal: Paleobiology / Volume 49 / Issue 4 / November 2023

- Published online by Cambridge University Press: 13 March 2023, pp. 601-620

- Article

Gas exchange patterns for a small, stored-grain insect pest, Tribolium castaneum

- Journal: Bulletin of Entomological Research / Volume 113 / Issue 3 / June 2023

- Published online by Cambridge University Press: 23 February 2023, pp. 361-367

- Article

- Open access

Death by suicide among aged care recipients in Australia 2008–2017

- Journal: International Psychogeriatrics / Volume 35 / Issue 12 / December 2023

- Published online by Cambridge University Press: 20 February 2023, pp. 724-735

- Article

- Open access

Anomalous 13C enrichment in Mesozoic vertebrate enamel reflects environmental conditions in a “vanished world” and not a unique dietary physiology

- Journal: Paleobiology / Volume 49 / Issue 3 / August 2023

- Published online by Cambridge University Press: 13 January 2023, pp. 563-577

- Article

- Open access

The Right Angle: Validating a standardised protocol for the use of infra-red thermography of eye temperature as a welfare indicator

- Journal: Animal Welfare / Volume 29 / Issue 2 / May 2020

- Published online by Cambridge University Press: 01 January 2023, pp. 123-131

- Article

Hypothesized drivers of the bias blind spot—cognitive sophistication, introspection bias, and conversational processes

- Journal: Judgment and Decision Making / Volume 17 / Issue 6 / November 2022

- Published online by Cambridge University Press: 01 January 2023, pp. 1392-1421

- Article

- Open access

Structural racism in healthcare and research: A community-led model of curriculum development and implementation

- Journal: Journal of Clinical and Translational Science / Volume 7 / Issue 1 / 2023

- Published online by Cambridge University Press: 09 November 2022, e18

- Article

- Open access

The Evolutionary Map of the Universe Pilot Survey – ADDENDUM

- Journal: Publications of the Astronomical Society of Australia / Volume 39 / 2022

- Published online by Cambridge University Press: 02 November 2022, e055

- Article

The impact of a supermarket-based intervention using personalised loyalty card incentives to increase weekly purchasing of fruits and vegetables

- Journal: Proceedings of the Nutrition Society / Volume 81 / Issue OCE5 / 2022

- Published online by Cambridge University Press: 05 October 2022, E183

- Article

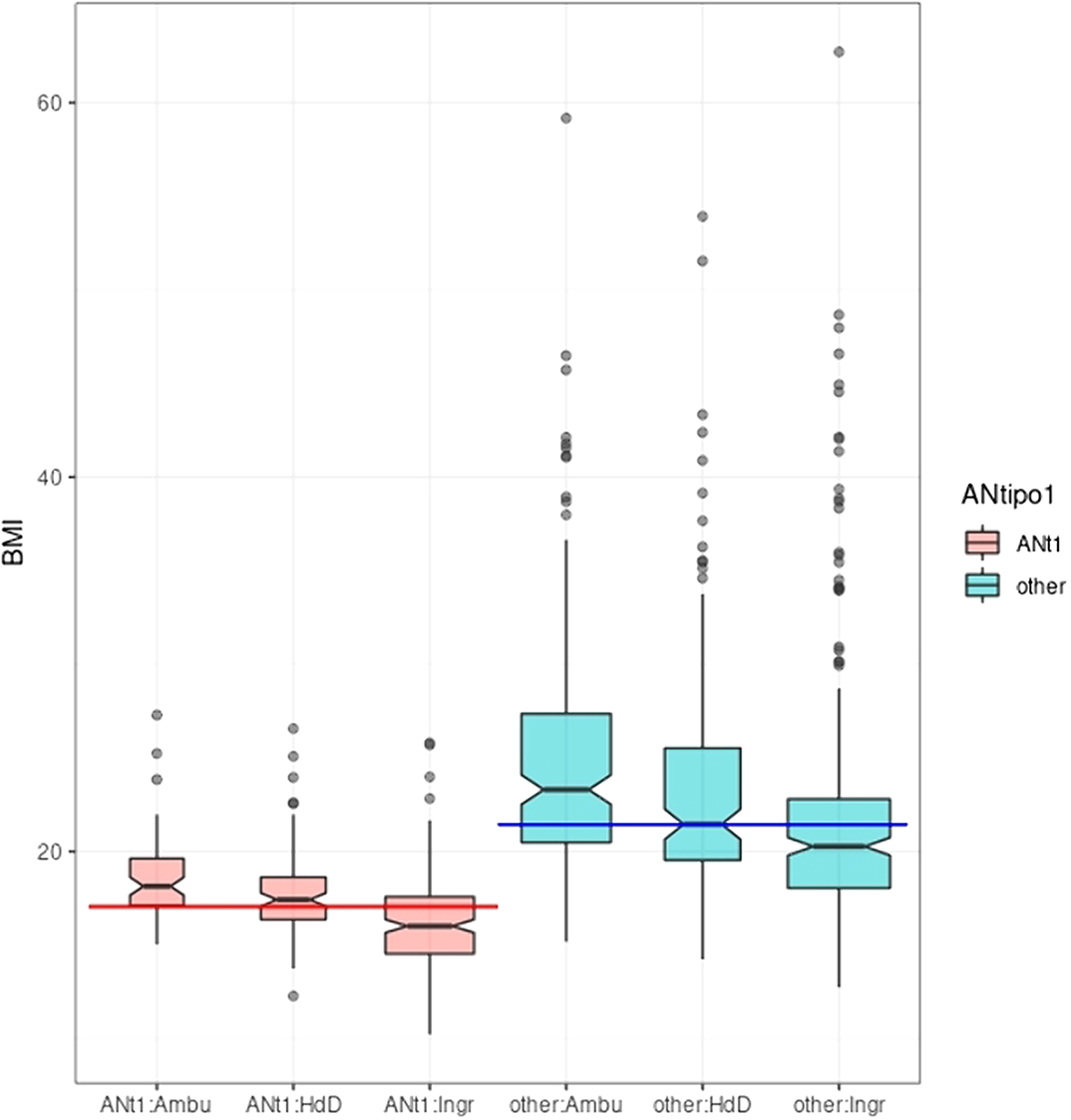

Self-report questionnaires in eating disorders: do we need to be careful interpreting self-report in conditions with self-perception issues?

- Journal: European Psychiatry / Volume 65 / Issue S1 / June 2022

- Published online by Cambridge University Press: 01 September 2022, pp. S580-S581

- Article

- Open access

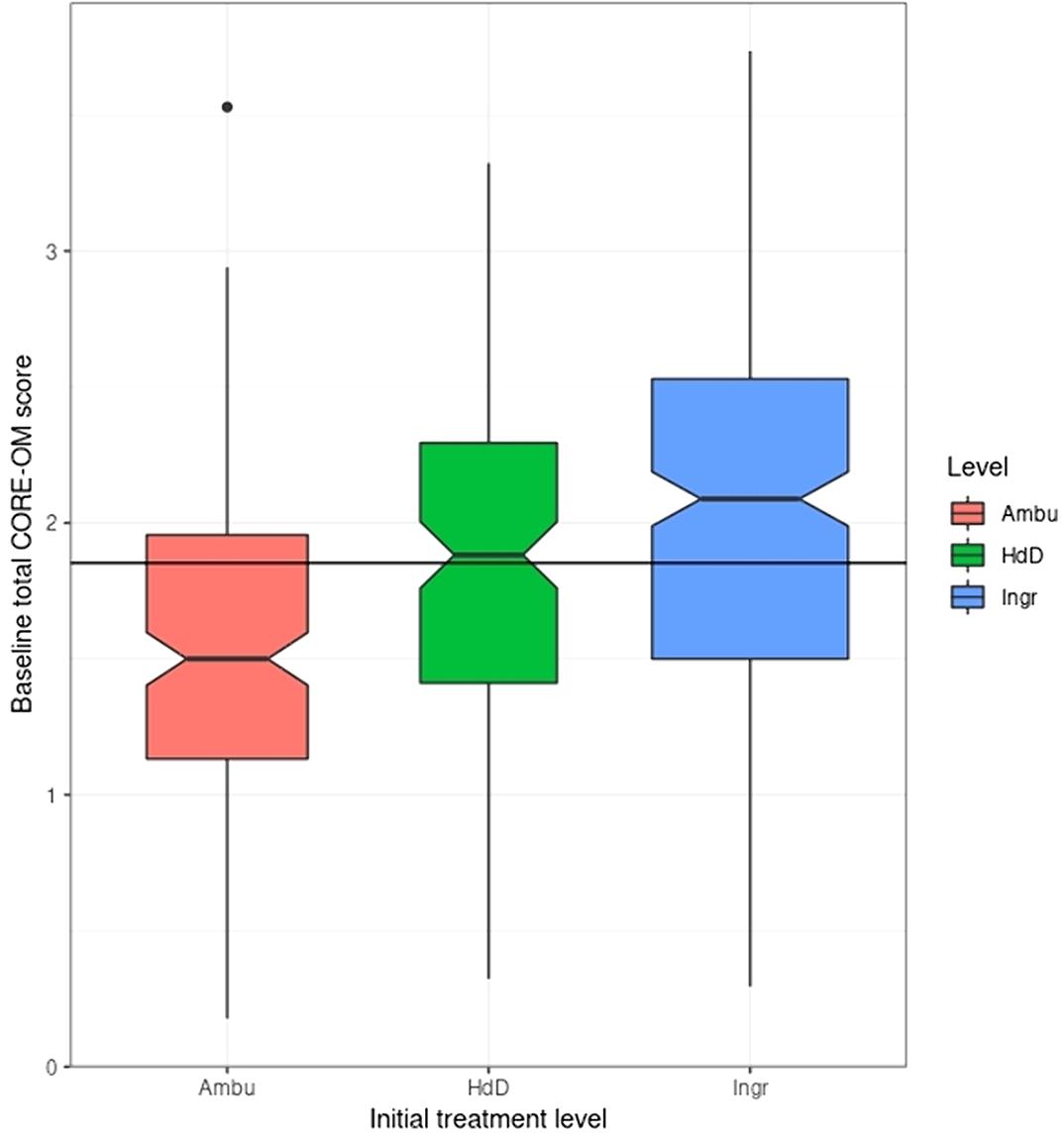

Levels of intervention and support for newly presenting clients with eating disorders

- Journal: European Psychiatry / Volume 65 / Issue S1 / June 2022

- Published online by Cambridge University Press: 01 September 2022, pp. S578-S579

- Article

- Open access