Regional variations in the demographic response to the arrival of rice farming in prehistoric Japan

- Journal: Antiquity, First View

- Published online by Cambridge University Press: 16 September 2024, pp. 1-16

- Article

- Open access

Health Outcomes and Health Services Utilization Evaluation Protocol: Assessing the Impact of the Nova Scotia Rapid Access and Stabilization Program

- Journal: European Psychiatry / Volume 67 / Issue S1 / April 2024

- Published online by Cambridge University Press: 27 August 2024, pp. S320-S321

- Article

- Open access

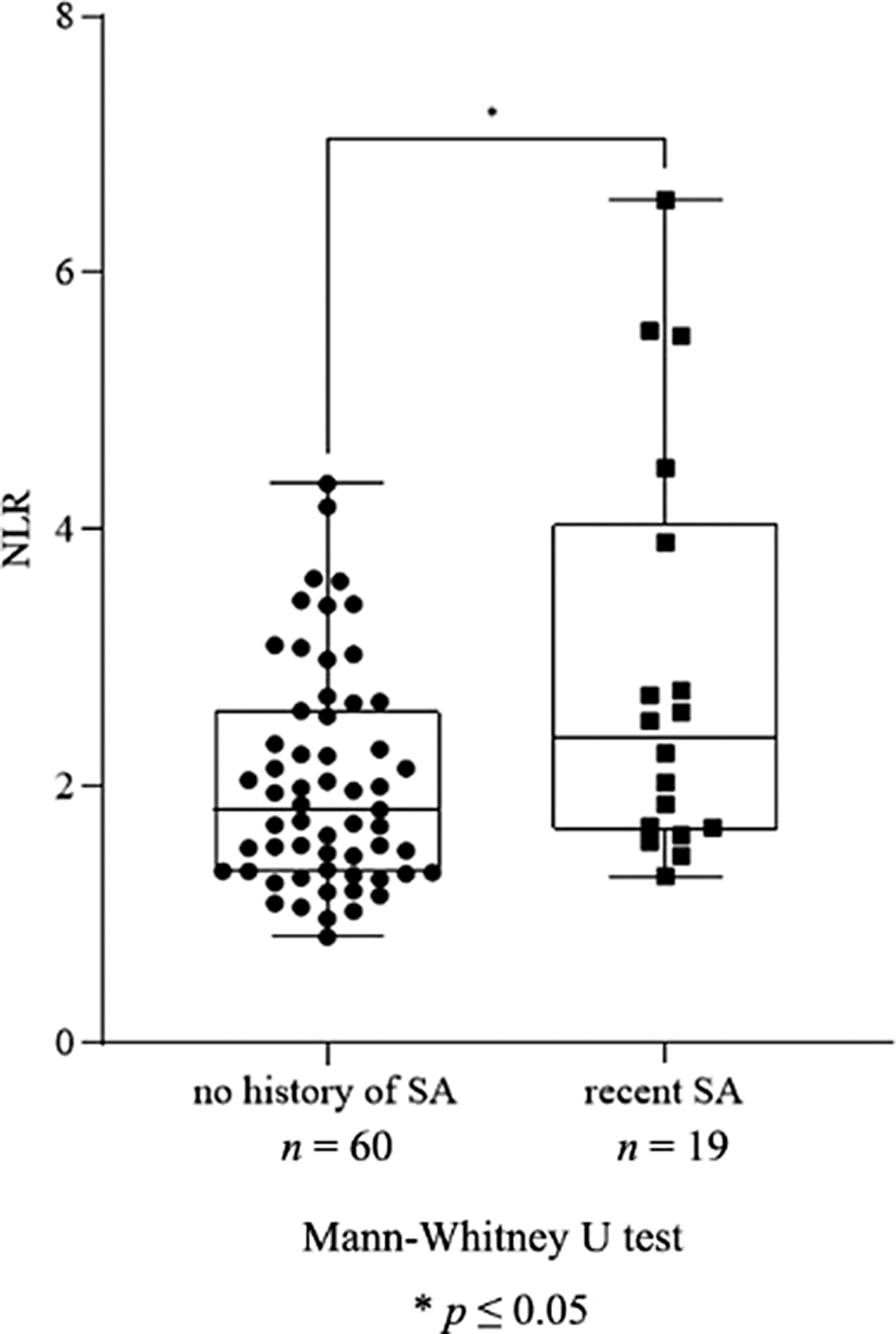

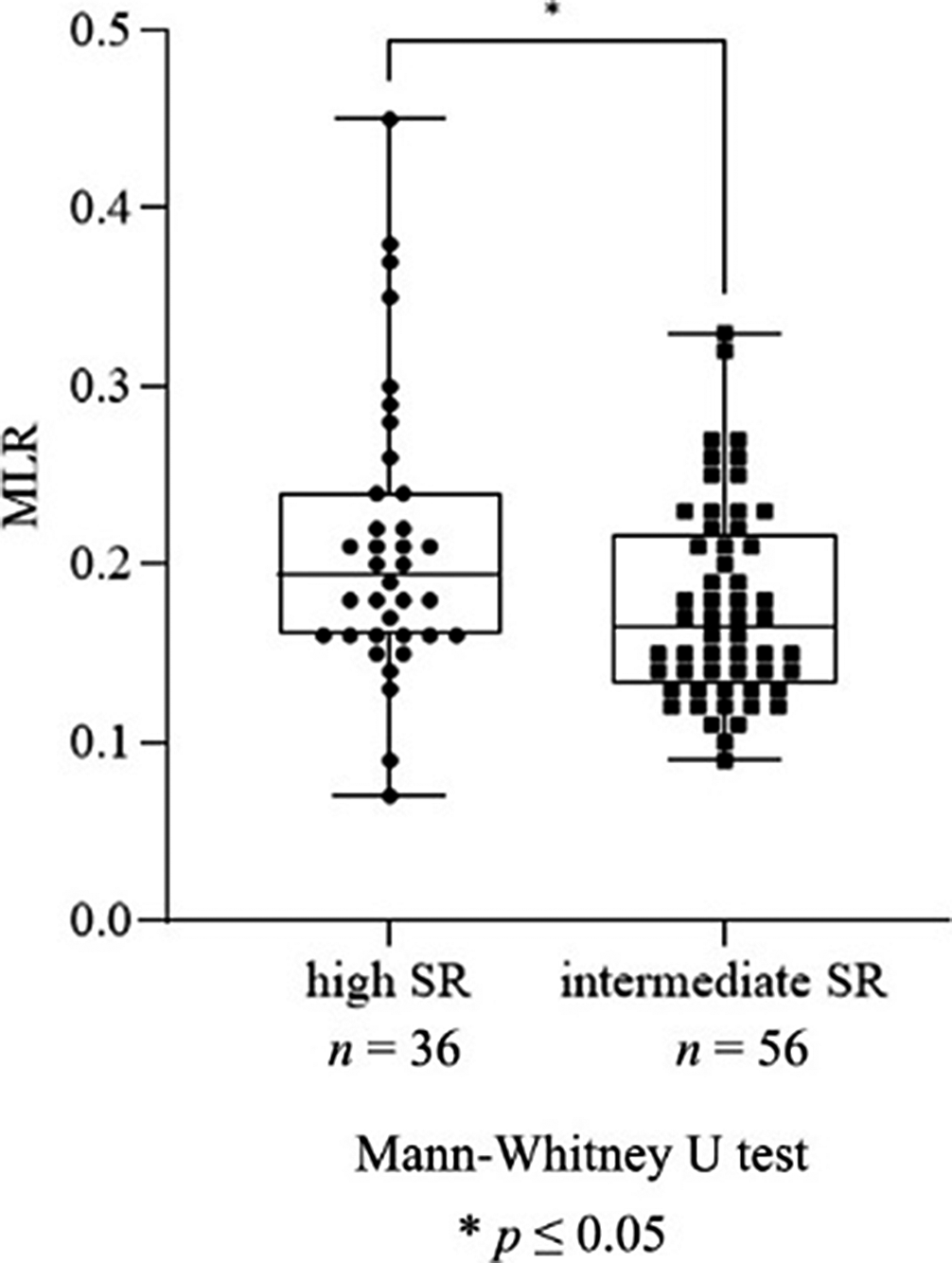

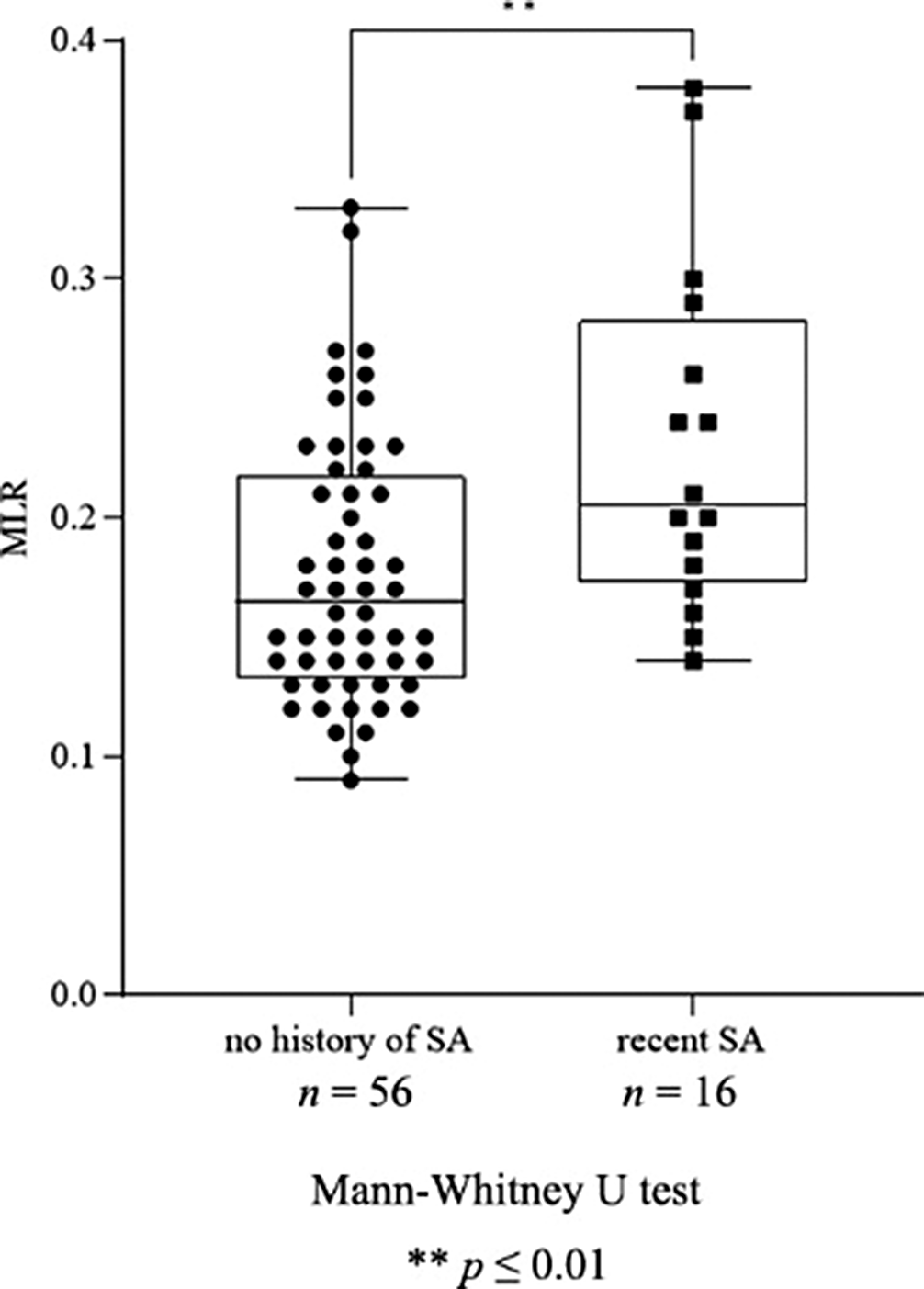

Investigation of peripheral inflammatory biomarkers in association with suicide risk in major depression

- Journal: European Psychiatry / Volume 67 / Issue S1 / April 2024

- Published online by Cambridge University Press: 27 August 2024, pp. S792-S793

- Article

- Open access

Clinical, psychological and brain imaging investigation of first episode psychosis patients treated at Semmelweis University, Department of Psychiatry and Psychotherapy, Budapest, Hungary

- Journal: European Psychiatry / Volume 67 / Issue S1 / April 2024

- Published online by Cambridge University Press: 27 August 2024, p. S294

- Article

- Open access

N-acetylcysteine counteracts increased brain excitatory/inhibitory balance following maternal high-fat diet and restores emotional and cognitive profiles in adult mouse offspring

- Journal: European Psychiatry / Volume 67 / Issue S1 / April 2024

- Published online by Cambridge University Press: 27 August 2024, p. S84

- Article

- Open access

Differences in the perception of stigma in schizophrenia between men and women: a brief qualitative approach

- Journal: European Psychiatry / Volume 67 / Issue S1 / April 2024

- Published online by Cambridge University Press: 27 August 2024, pp. S805-S806

- Article

- Open access

Prevalence of diabetes and insulin resistance in patients with diagnosis of schizophrenia or other psychotic disorders

- Journal: European Psychiatry / Volume 67 / Issue S1 / April 2024

- Published online by Cambridge University Press: 27 August 2024, p. S741

- Article

- Open access

Flow regimes in emptying–filling boxes with two buoyancy sources of differing strengths and elevations

- Journal: Journal of Fluid Mechanics / Volume 988 / 10 June 2024

- Published online by Cambridge University Press: 24 July 2024, A12

- Article

- Open access

An Optical Daytime Astronomy Pathfinder for the Huntsman Telescope

- Journal: Publications of the Astronomical Society of Australia / Accepted manuscript

- Published online by Cambridge University Press: 20 May 2024, pp. 1-19

- Article

Decreased risk of non-influenza respiratory infection after influenza B virus infection in children

- Journal: Epidemiology & Infection / Volume 152 / 2024

- Published online by Cambridge University Press: 08 April 2024, e60

- Article

- Open access

RG-CAT: Detection pipeline and catalogue of radio galaxies in the EMU pilot survey

- Journal: Publications of the Astronomical Society of Australia / Volume 41 / 2024

- Published online by Cambridge University Press: 01 April 2024, e027

- Article

- Open access

A comprehensive hierarchical comparison of structural connectomes in Major Depressive Disorder cases v. controls in two large population samples

- Journal: Psychological Medicine, First View

- Published online by Cambridge University Press: 18 March 2024, pp. 1-12

- Article

- Open access

Efficacy and safety of transcranial magnetic stimulation on cognition in mild cognitive impairment, Alzheimer’s disease, Alzheimer’s disease-related dementias, and other cognitive disorders: a systematic review and meta-analysis

- Journal: International Psychogeriatrics, First View

- Published online by Cambridge University Press: 08 February 2024, pp. 1-49

- Article

The effects of saturated fat intake from dairy on CVD markers: the role of food matrices

- Journal: Proceedings of the Nutrition Society, First View

- Published online by Cambridge University Press: 06 February 2024, pp. 1-9

- Article

- Open access

FC24: Transcranial Magnetic Stimulation (TMS) as a Treatment for Dementia due to non-Alzheimer’s disease (non-AD): What is the Evidence?

- Journal: International Psychogeriatrics / Volume 35 / Issue S1 / December 2023

- Published online by Cambridge University Press: 02 February 2024, pp. 85-86

- Article

Paediatric heart transplantation: life-saving but not yet a cure

- Journal: Cardiology in the Young / Volume 34 / Issue 2 / February 2024

- Published online by Cambridge University Press: 23 January 2024, pp. 233-237

- Article

Reward anticipation-related neural activation following cued reinforcement in adults with psychotic psychopathology and biological relatives

- Journal: Psychological Medicine / Volume 54 / Issue 7 / May 2024

- Published online by Cambridge University Press: 10 January 2024, pp. 1441-1451

- Article

Slow positron production and storage for the ASACUSA-Cusp experiment

- Journal: Journal of Plasma Physics / Volume 89 / Issue 6 / December 2023

- Published online by Cambridge University Press: 18 December 2023, 905890608

- Article

- Open access

Phone-based psychosocial counseling for people living with HIV: Feasibility, acceptability and impact on uptake of psychosocial counseling services in Malawi

- Journal: Cambridge Prisms: Global Mental Health / Volume 11 / 2024

- Published online by Cambridge University Press: 05 December 2023, e3

- Article

- Open access

Changes in audiovestibular handicap following treatment of vestibular schwannomas

- Journal: The Journal of Laryngology & Otology / Volume 138 / Issue 6 / June 2024

- Published online by Cambridge University Press: 29 November 2023, pp. 608-614

- Print publication: June 2024

- Article

- Open access