63 results

Research agenda for transmission prevention within the Veterans Health Administration, 2024–2028

- Journal: Infection Control & Hospital Epidemiology, First View

- Published online by Cambridge University Press: 11 April 2024, pp. 1-10

- Article

- Open access

They’re Still There, He’s All Gone: American Fatalities in Foreign Wars and Right-Wing Radicalization at Home

- Journal: American Political Science Review, First View

- Published online by Cambridge University Press: 19 October 2023, pp. 1-7

- Article

- Open access

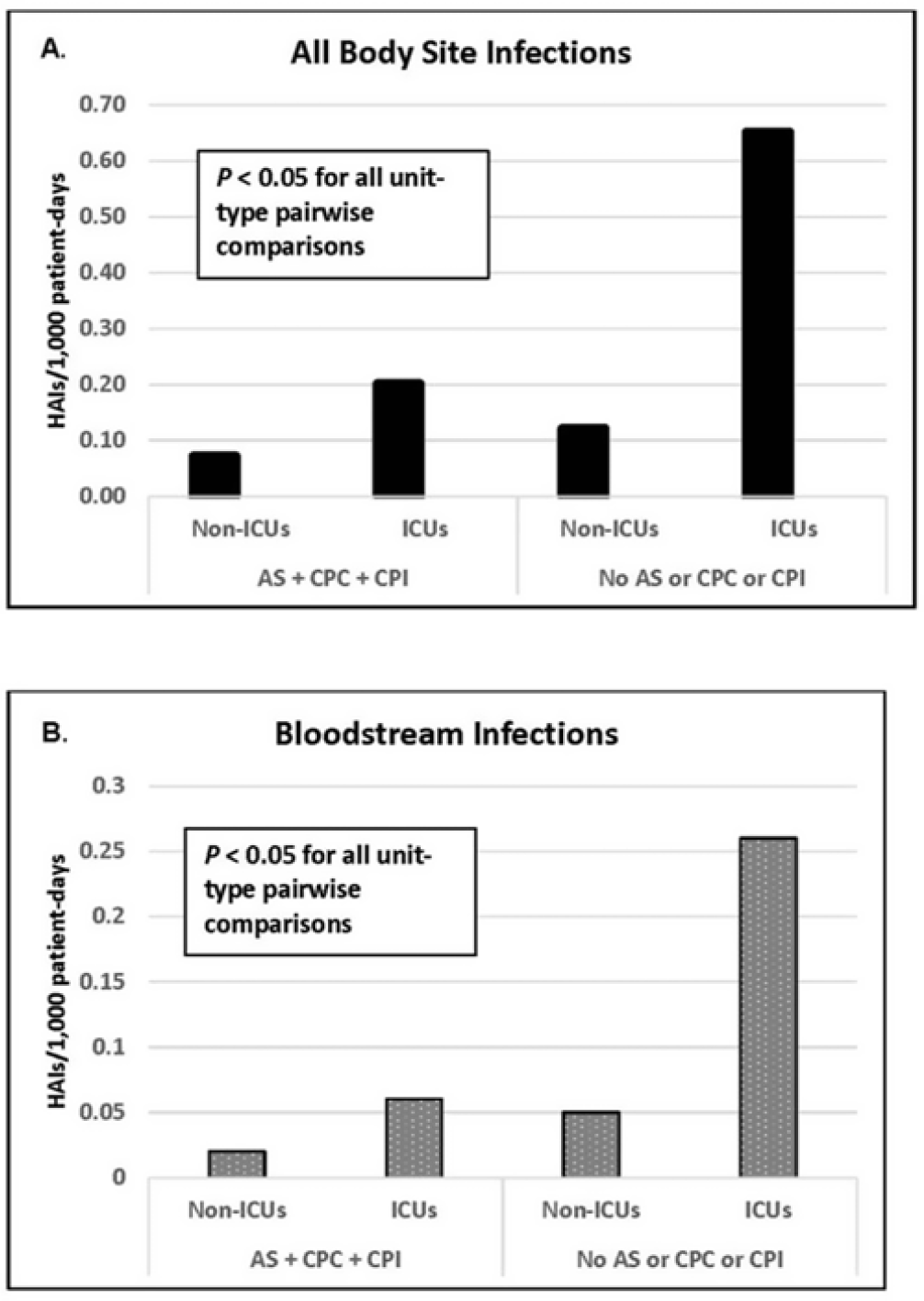

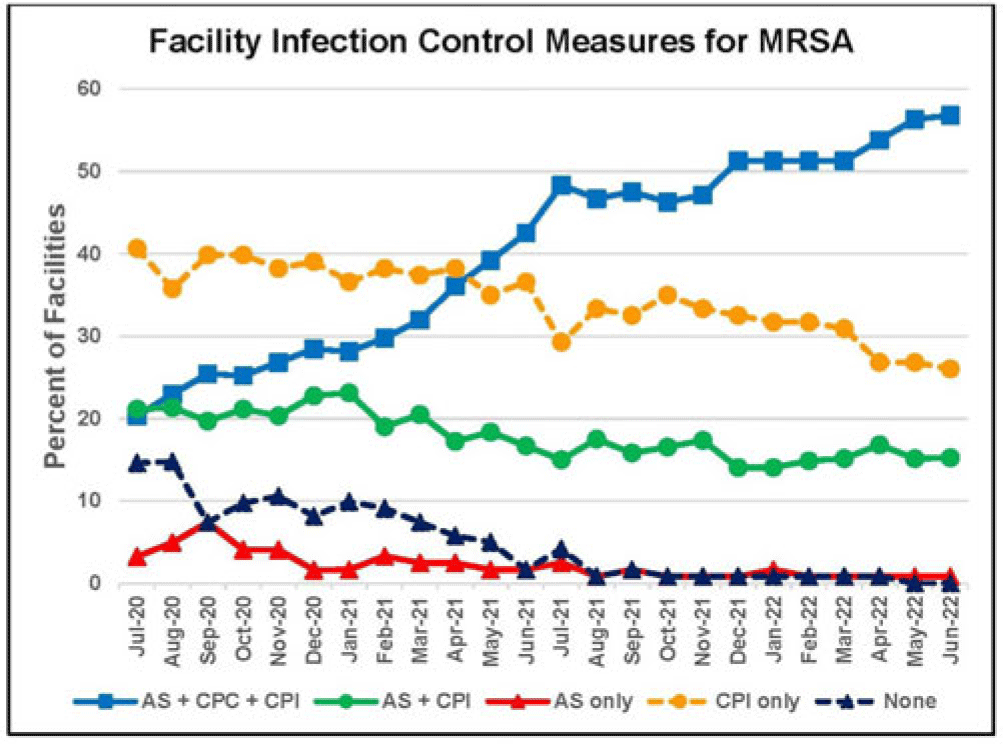

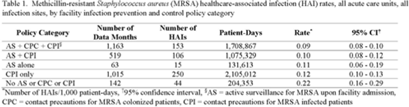

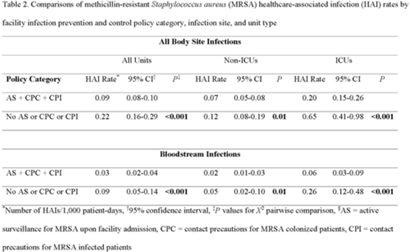

Active surveillance and contact precautions for preventing MRSA healthcare-associated infections during the COVID-19 pandemic

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press: 29 September 2023, pp. s117-s118

- Article

- Open access

Antimicrobial physician and pharmacist experience and perception of an antimicrobial Self-Stewardship Time-Out Program (SSTOP) intervention at eight Veterans’ Affairs medical centers

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 9 / September 2023

- Published online by Cambridge University Press: 24 January 2023, pp. 1511-1514

- Print publication: September 2023

- Article

Increased carbapenemase testing following implementation of national VA guidelines for carbapenem-resistant Enterobacterales (CRE)

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue 1 / 2022

- Published online by Cambridge University Press: 02 June 2022, e88

- Article

- Open access

Real World Performance of SARS-CoV-2 Antigen Rapid Diagnostic Tests in Various Clinical Settings

- Journal: Infection Control & Hospital Epidemiology / Accepted manuscript

- Published online by Cambridge University Press: 02 March 2022, pp. 1-20

- Article

The Hidden Hand: Middle East Fears of Conspiracy, Daniel Pipes, New York: St. Martin's Press, 1996, xii + 404 pp.

- Journal: Iranian Studies / Volume 30 / Issue 1-2 / Spring Winter 1997

- Published online by Cambridge University Press: 01 January 2022, pp. 177-181

- Print publication: Spring Winter 1997

- Article

Fihrist-i asnād-i qadīm-i vizārat-i umūr-i khārijah: dawrān-i Qājārīyah, 1124–1316, Tehran: Institute for Political and International Studies, 1371 Sh./1992, xiii + 598 pp., 4,500 rials.

- Journal: Iranian Studies / Volume 29 / Issue 3-4 / Summer Fall 1996

- Published online by Cambridge University Press: 01 January 2022, pp. 394-395

- Print publication: Summer Fall 1996

- Article

Stumbling through the “Open Door”: The U.S. in Persia and the Standard-Sinclair Oil Dispute, 1920–1925

- Journal: Iranian Studies / Volume 28 / Issue 3-4 / Summer Fall 1995

- Published online by Cambridge University Press: 01 January 2022, pp. 203-229

- Print publication: Summer Fall 1995

- Article

Hippocampal volume and volume asymmetry prospectively predict PTSD symptom emergence among Iraq-deployed soldiers

- Journal: Psychological Medicine / Volume 53 / Issue 5 / April 2023

- Published online by Cambridge University Press: 22 November 2021, pp. 1906-1913

- Article

- Open access

Implementation of an Antibiotic Timeout at Veterans’ Affairs Medical Centers (VAMC): COVID-19 Facilitators and Barriers

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 1 / Issue S1 / July 2021

- Published online by Cambridge University Press: 29 July 2021, p. s3

- Article

- Open access

Chapter 2 - How Much Does the Family Want to Be Involved in Decision-Making?

- Book: Shared Decision Making in Adult Critical Care

- Published online: 27 May 2021

- Print publication: 17 June 2021, pp. 7-12

- Chapter

Inpatient antibiotic utilization in the Veterans’ Health Administration during the coronavirus disease 2019 (COVID-19) pandemic

- Journal: Infection Control & Hospital Epidemiology / Volume 42 / Issue 6 / June 2021

- Published online by Cambridge University Press: 20 October 2020, pp. 751-753

- Print publication: June 2021

- Article

- Open access

Risk Factors for Carbapenemase Producing-Carbapenem Resistant Enterobacteriaceae in Those With CRE Positive Cultures

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020

- Published online by Cambridge University Press: 02 November 2020, pp. s376-s377

- Print publication: October 2020

- Article

Evaluating Healthcare Worker Movements and Patient Interactions Within ICU Rooms

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020

- Published online by Cambridge University Press: 02 November 2020, pp. s222-s224

- Print publication: October 2020

- Article

Inpatient and Discharge Fluoroquinolone Prescribing in Veterans’ Affairs Hospitals Between 2014 and 2017

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020

- Published online by Cambridge University Press: 02 November 2020, pp. s487-s488

- Print publication: October 2020

- Article

A survey of infection control strategies for carbapenem-resistant Enterobacteriaceae in Department of Veterans’ Affairs facilities

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 7 / July 2022

- Published online by Cambridge University Press: 22 September 2020, pp. 939-942

- Print publication: July 2022

- Article

Evaluation of the National Healthcare Safety Network standardized infection ratio risk adjustment for healthcare-facility-onset Clostridioides difficile infection in intensive care, oncology, and hematopoietic cell transplant units in general acute-care hospitals

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue 4 / April 2020

- Published online by Cambridge University Press: 13 February 2020, pp. 404-410

- Print publication: April 2020

- Article

Validation of the SHEA/IDSA severity criteria to predict poor outcomes among inpatients and outpatients with Clostridioides difficile infection

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue 5 / May 2020

- Published online by Cambridge University Press: 30 January 2020, pp. 510-516

- Print publication: May 2020

- Article

Determination of Death by Neurologic Criteria in the United States: The Case for Revising the Uniform Determination of Death Act

- Journal: Journal of Law, Medicine & Ethics / Volume 47 / Issue S4 / Winter 2019

- Published online by Cambridge University Press: 01 January 2021, pp. 9-24

- Print publication: Winter 2019

- Article