224 results

Gravure printing with a shear-rate-dependent ink

- Journal: Flow: Applications of Fluid Mechanics / Volume 4 / 2024
- Published online by Cambridge University Press: 17 January 2024, E1
- Article
- Open access

Mineralogical and Isotopic Record of Diagenesis from the Opalinus Clay Formation at Benken, Switzerland: Implications for the Modeling of Pore-Water Chemistry in a Clay Formation

- Journal: Clays and Clay Minerals / Volume 62 / Issue 4 / August 2014
- Published online by Cambridge University Press: 01 January 2024, pp. 286-312
- Article

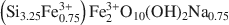

Hydrothermal synthesis, between 75 and 150°C, of high-charge, ferric nontronites

- Journal: Clays and Clay Minerals / Volume 56 / Issue 3 / June 2008
- Published online by Cambridge University Press: 01 January 2024, pp. 322-337
- Article

Strengthening self-regulation and reducing poverty to prevent adolescent depression and anxiety: Rationale, approach and methods of the ALIVE interdisciplinary research collaboration in Colombia, Nepal and South Africa

- Journal: Epidemiology and Psychiatric Sciences / Volume 32 / 2023
- Published online by Cambridge University Press: 13 December 2023, e69
- Article
- Open access

The role of primary health care in long-term care facilities during the COVID-19 pandemic in 30 European countries: a retrospective descriptive study (Eurodata study)

- Journal: Primary Health Care Research & Development / Volume 24 / 2023
- Published online by Cambridge University Press: 24 October 2023, e60
- Article
- Open access

Financial risk protection in private health insurance: empirical evidence on catastrophic and impoverishing spending from Germany's dual insurance system

- Journal: Health Economics, Policy and Law / Volume 19 / Issue 1 / January 2024
- Published online by Cambridge University Press: 07 September 2023, pp. 3-20
- Article
- Open access

IDENTIFICATION AND CLASSIFICATION OF UNCERTAINTIES AS THE FOUNDATION OF AGILE METHODS

- Journal: Proceedings of the Design Society / Volume 3 / July 2023
- Published online by Cambridge University Press: 19 June 2023, pp. 2165-2174
- Article
- Open access

Prediction of estimated risk for bipolar disorder using machine learning and structural MRI features

- Journal: Psychological Medicine / Volume 54 / Issue 2 / January 2024
- Published online by Cambridge University Press: 22 May 2023, pp. 278-288
- Article
- Open access

Concurrent analysis of hospital stay durations and mortality of emerging severe acute respiratory coronavirus virus 2 (SARS-CoV-2) variants using real-time electronic health record data at a large German university hospital

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue 1 / 2023
- Published online by Cambridge University Press: 04 May 2023, e88
- Article
- Open access

On-demand, hospital-based, severe acute respiratory coronavirus virus 2 (SARS-CoV-2) genomic epidemiology to support nosocomial outbreak investigations: A prospective molecular epidemiology study

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue 1 / 2023
- Published online by Cambridge University Press: 08 March 2023, e45
- Article
- Open access

Clinical decision-making style preferences of European psychiatrists: Results from the Ambassadors survey in 38 countries

- Journal: European Psychiatry / Volume 65 / Issue 1 / 2022
- Published online by Cambridge University Press: 21 October 2022, e75
- Article
- Open access

Point-of-Care Ultrasound to Detect Acute Large Vessel Occlusions in Stroke Patients: A Proof-of-Concept Study

- Journal: Canadian Journal of Neurological Sciences / Volume 50 / Issue 5 / September 2023
- Published online by Cambridge University Press: 25 July 2022, pp. 656-661
- Article

Chapter 19 - Qualitative Interpretation of Intracranial EEG in Insular Epilepsy

- From Section 4 - Invasive Investigation of Insular Epilepsy
- Book: Insular Epilepsies
- Published online: 09 June 2022
- Print publication: 24 March 2022, pp. 227-237
- Chapter

Argentotetrahedrite-(Zn), Ag6(Cu4Zn2)Sb4S13, a new member of the tetrahedrite group

- Journal: Mineralogical Magazine / Volume 86 / Issue 2 / April 2022
- Published online by Cambridge University Press: 07 March 2022, pp. 319-330
- Article

The support of singular stochastic partial differential equations

- Journal: Forum of Mathematics, Pi / Volume 10 / 2022
- Published online by Cambridge University Press: 14 January 2022, e1
- Article
- Open access

Integrins, cadherins and channels in cartilage mechanotransduction: perspectives for future regeneration strategies

- Journal: Expert Reviews in Molecular Medicine / Volume 23 / 2021
- Published online by Cambridge University Press: 27 October 2021, e14
- Article
- Open access

Longitudinal associations between interpersonal relationship functioning and posttraumatic stress disorder (PTSD) in recently traumatized individuals: differential findings by assessment method

- Journal: Psychological Medicine / Volume 53 / Issue 6 / April 2023
- Published online by Cambridge University Press: 08 October 2021, pp. 2205-2215
- Article

Outcome in depression (II): beyond the Hamilton Depression Rating Scale

- Journal: CNS Spectrums / Volume 26 / Issue 4 / August 2021
- Published online by Cambridge University Press: 19 May 2020, pp. 378-382
- Article

Outcome in depression (I): why symptomatic remission is not good enough

- Journal: CNS Spectrums / Volume 26 / Issue 4 / August 2021
- Published online by Cambridge University Press: 19 May 2020, pp. 393-399
- Article

Criterion validity of the interpersonal dependency inventory: a preliminary study on 621 addictive subjects

- Journal: European Psychiatry / Volume 17 / Issue 8 / December 2002
- Published online by Cambridge University Press: 16 April 2020, pp. 477-478
- Article