160 results

401 Detecting Hypofibrinolysis in Clinical Coagulation Testing

- Journal: Journal of Clinical and Translational Science / Volume 8 / Issue s1 / April 2024

- Published online by Cambridge University Press: 03 April 2024, p. 119

- Article

- Open access

Using randomized controlled trials to ask questions regarding developmental psychopathology: A tribute to Dante Cicchetti

- Journal: Development and Psychopathology, First View

- Published online by Cambridge University Press: 28 February 2024, pp. 1-10

- Article

- Open access

Changes in sleep and the prevalence of probable insomnia in undergraduate university students over the course of the COVID-19 pandemic: findings from the U-Flourish cohort study

- Journal: BJPsych Open / Volume 9 / Issue 6 / November 2023

- Published online by Cambridge University Press: 07 November 2023, e210

- Article

- Open access

When Is It Modernism? A Lesson from Indonesian Musik Kontemporer

- Journal: Twentieth-Century Music / Volume 20 / Issue 3 / October 2023

- Published online by Cambridge University Press: 10 October 2023, pp. 292-322

- Article

- Open access

Introduction to the Special Issue on Global Musical Modernisms

- Journal: Twentieth-Century Music / Volume 20 / Issue 3 / October 2023

- Published online by Cambridge University Press: 04 October 2023, pp. 274-291

- Article

Associations of alcohol and cannabis use with change in posttraumatic stress disorder and depression symptoms over time in recently trauma-exposed individuals

- Journal: Psychological Medicine / Volume 54 / Issue 2 / January 2024

- Published online by Cambridge University Press: 13 June 2023, pp. 338-349

- Article

- Open access

15 - An Investigation of Lithology, Hydrogeology, Bioturbation, and Pedogenesis in a Borehole through the Cubango Megafan, Northern Namibia

- from Part III - Applications in Other Sciences

- Book: Fluvial Megafans on Earth and Mars

- Published online: 30 April 2023

- Print publication: 18 May 2023, pp. 287-307

- Chapter

361 WDR5 represents a therapeutically exploitable target for cancer stem cells in glioblastoma

- Journal: Journal of Clinical and Translational Science / Volume 7 / Issue s1 / April 2023

- Published online by Cambridge University Press: 24 April 2023, p. 107

- Article

- Open access

271 Diagnosis and Detection of Thrombosis in PCOS

- Journal: Journal of Clinical and Translational Science / Volume 7 / Issue s1 / April 2023

- Published online by Cambridge University Press: 24 April 2023, p. 81

- Article

- Open access

Safety and efficacy of KarXT (Xanomeline Trospium) in Schizophrenia in the Phase 3, Randomized, Double-Blind, Placebo-Controlled EMERGENT-2 Trial

- Journal: CNS Spectrums / Volume 28 / Issue 2 / April 2023

- Published online by Cambridge University Press: 14 April 2023, p. 220

- Article

Longitudinal analysis of risk factors associated with severe acute respiratory coronavirus virus 2 (SARS-CoV-2) infection among hemodialysis patients and healthcare personnel in outpatient hemodialysis centers

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue 1 / 2022

- Published online by Cambridge University Press: 21 July 2022, e125

- Article

- Open access

Cerambycid pheromones affect catches of Phymatodes aeneus (Coleoptera: Cerambycidae) and Thanasimus undatulus (Coleoptera: Cleridae) in ethanol-baited multiple-funnel traps in the Pacific Northwest, United States of America

- Journal: The Canadian Entomologist / Volume 154 / Issue 1 / 2022

- Published online by Cambridge University Press: 11 July 2022, e31

- Article

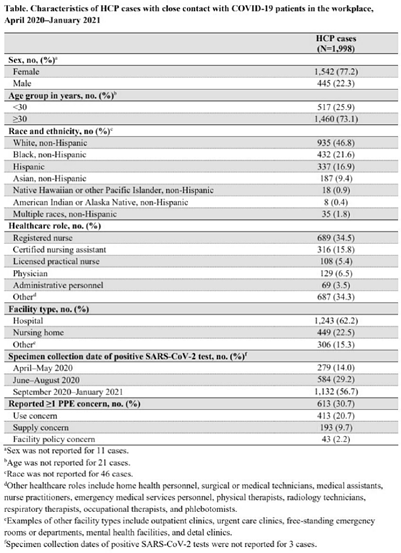

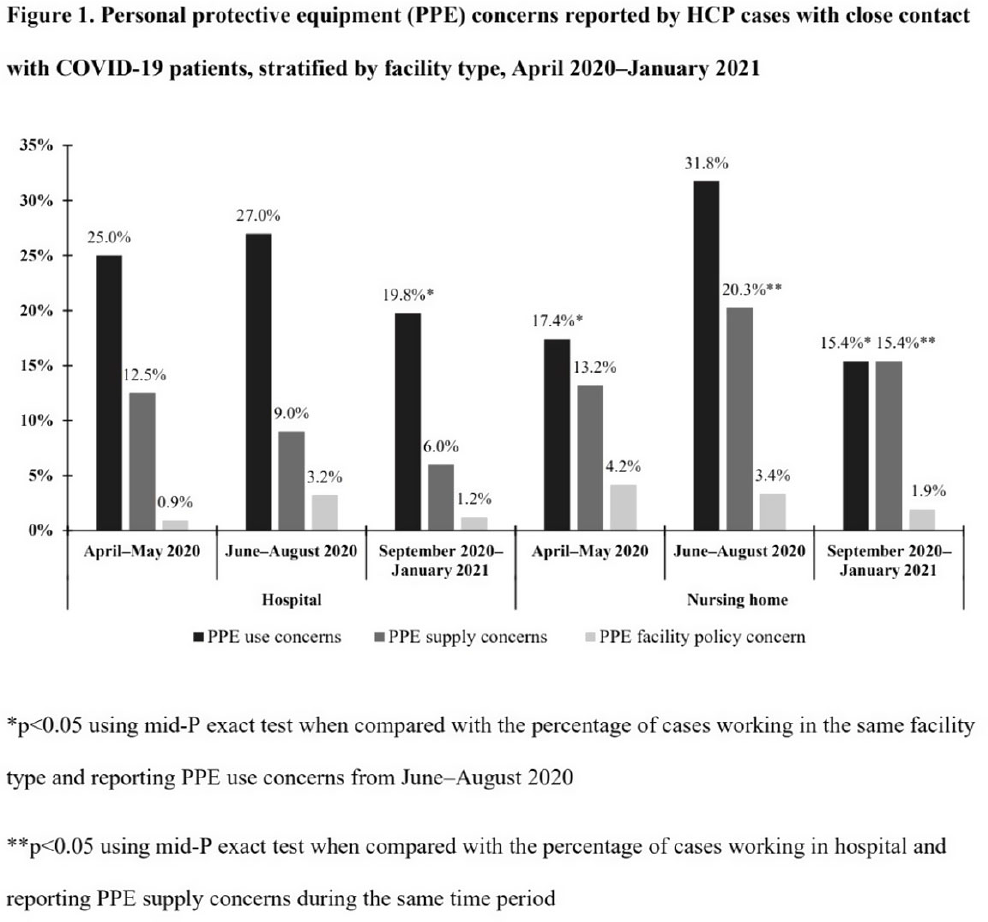

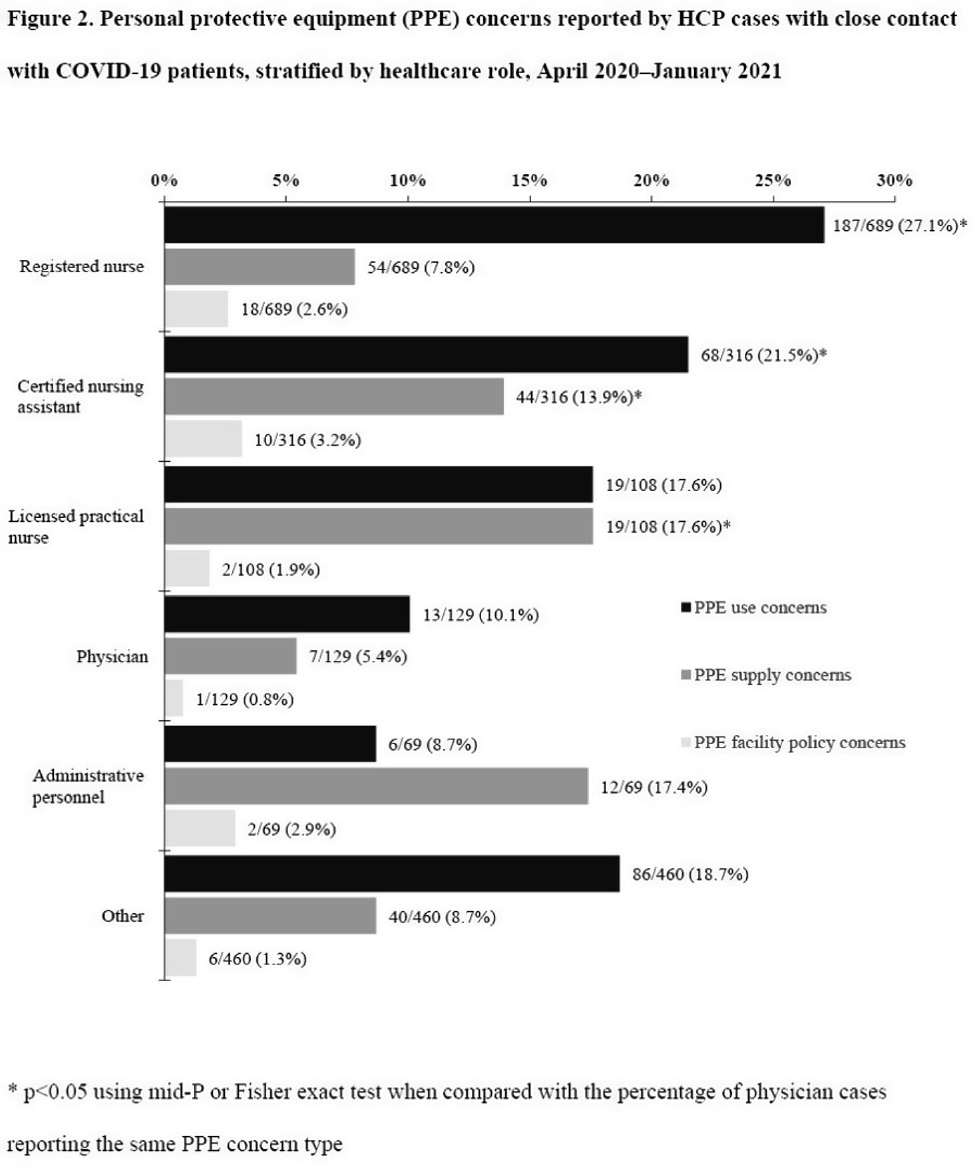

Characteristics of healthcare personnel who reported concerns related to PPE use during care of COVID-19 patients

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022

- Published online by Cambridge University Press: 16 May 2022, pp. s8-s9

- Article

- Open access

Blanket NOP rules and regional realities: from the field

- Journal: Renewable Agriculture and Food Systems / Volume 37 / Issue 6 / December 2022

- Published online by Cambridge University Press: 27 April 2022, pp. 644-648

- Article

Predictors of humoral response to SARS-CoV-2 mRNA vaccine BNT162b2 in patients receiving maintenance dialysis

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue 1 / 2022

- Published online by Cambridge University Press: 23 March 2022, e48

- Article

- Open access

The ability of the Global Leadership Initiative on Malnutrition (GLIM) to diagnose protein–energy malnutrition in patients requiring vascular surgery: a validation study

- Journal: British Journal of Nutrition / Volume 129 / Issue 1 / 14 January 2023

- Published online by Cambridge University Press: 04 February 2022, pp. 49-53

- Print publication: 14 January 2023

- Article

Impact of fetal haemodynamics on surgical and neurodevelopmental outcomes in patients with Ebstein anomaly and tricuspid valve dysplasia

- Journal: Cardiology in the Young / Volume 32 / Issue 11 / November 2022

- Published online by Cambridge University Press: 06 January 2022, pp. 1768-1779

- Article

- Open access

Comparison of criteria for determining appropriateness of antibiotic prescribing in nursing homes

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 7 / July 2022

- Published online by Cambridge University Press: 21 June 2021, pp. 860-863

- Print publication: July 2022

- Article