102 results

Implementing a continuous quality-improvement framework for tuberculosis infection prevention and control in healthcare facilities in China, 2017–2019

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 5 / May 2024

- Published online by Cambridge University Press: 25 January 2024, pp. 651-657

- Print publication: May 2024

- Article

- Open access

Northward migration of Red Knots Calidris canutus rufa and environment connectivity of southern Brazil to Canada

- Journal: Bird Conservation International / Volume 34 / 2024

- Published online by Cambridge University Press: 16 January 2024, e2

- Article

Severe and common mental disorders and risk of emergency hospital admissions for ambulatory care sensitive conditions among the UK Biobank cohort

- Journal: BJPsych Open / Volume 9 / Issue 6 / November 2023

- Published online by Cambridge University Press: 07 November 2023, e211

- Article

- Open access

Radiofrequency ice dielectric measurements at Summit Station, Greenland

- Journal: Journal of Glaciology, First View

- Published online by Cambridge University Press: 09 October 2023, pp. 1-12

- Article

- Open access

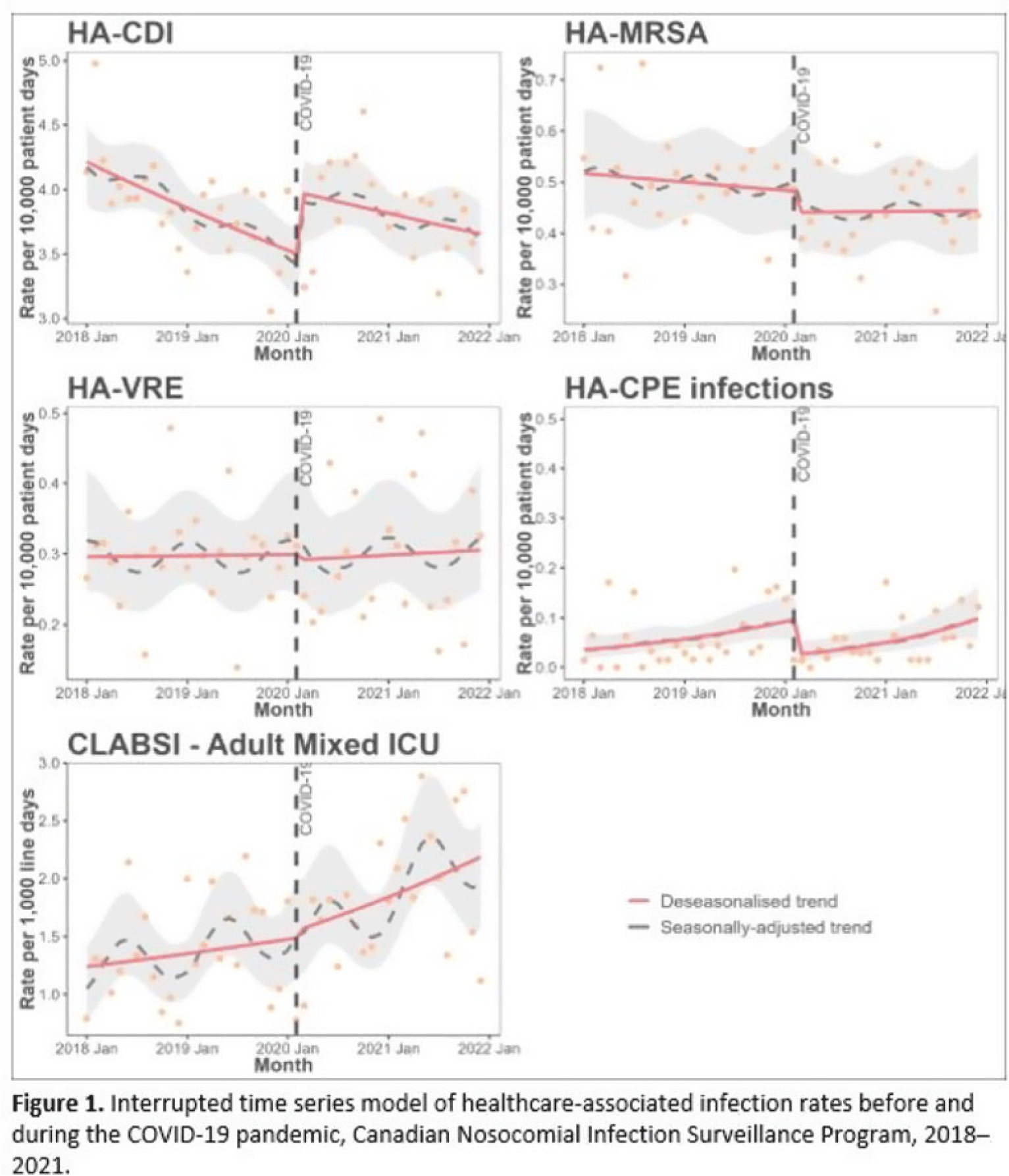

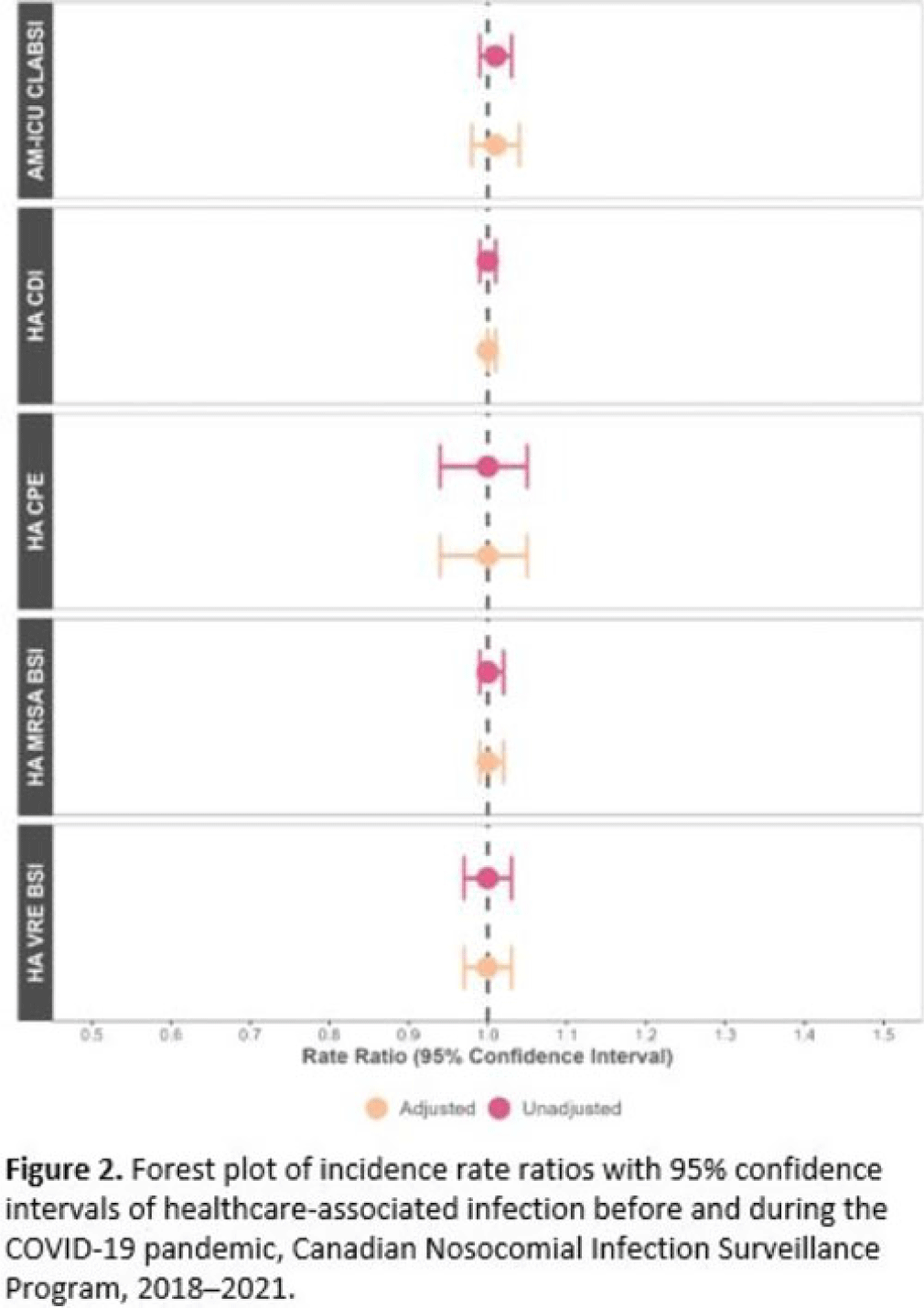

Impact of COVID-19 on healthcare-associated infections in Canadian acute-care hospitals: Interrupted time series (2018–2021)

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press: 29 September 2023, pp. s112-s113

- Article

- Open access

Epidemiology of central-line–associated bloodstream infection mortality in Canadian NICUs before and after 2017

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press: 29 September 2023, p. s48

- Article

- Open access

How does the antimicrobial stewardship provider role affect prospective audit and feedback acceptance for restricted antibiotics in a Canadian tertiary-care center?

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 2 / February 2024

- Published online by Cambridge University Press: 18 August 2023, pp. 234-236

- Print publication: February 2024

- Article

- Open access

19 - Temperament, Family Context, and the Development of Coping

- from Part V - Social Contexts and the Development of Coping

- Book: The Cambridge Handbook of the Development of Coping

- Published online: 22 June 2023

- Print publication: 06 July 2023, pp. 468-488

- Chapter

Complex cardiac implantable electronic device infections in Alberta, Canada: An epidemiologic cohort study of validated administrative data

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 10 / October 2023

- Published online by Cambridge University Press: 15 May 2023, pp. 1607-1613

- Print publication: October 2023

- Article

- Open access

294 Identification of MCAK Inhibitors that Induce Aneuploidy in Triple Negative Breast Cancer Models

- Journal: Journal of Clinical and Translational Science / Volume 7 / Issue s1 / April 2023

- Published online by Cambridge University Press: 24 April 2023, p. 88

- Article

- Open access

What Do You Do When You Can Do No More? Limited Resources, Unimaginable Environments, Personal Danger: What Have Previous Disasters Taught Us About Moral and Ethical Challenges?

- Journal: Disaster Medicine and Public Health Preparedness / Volume 17 / 2023

- Published online by Cambridge University Press: 11 April 2023, e373

- Article

Developmental care pathway for hospitalised infants with CHD: on behalf of the Cardiac Newborn Neuroprotective Network, a Special Interest Group of the Cardiac Neurodevelopmental Outcome Collaborative

- Journal: Cardiology in the Young / Volume 33 / Issue 12 / December 2023

- Published online by Cambridge University Press: 30 March 2023, pp. 2521-2538

- Article

- Open access

Expanding mental health services in low- and middle-income countries: A task-shifting framework for delivery of comprehensive, collaborative, and community-based care

- Journal: Cambridge Prisms: Global Mental Health / Volume 10 / 2023

- Published online by Cambridge University Press: 27 February 2023, e16

- Article

- Open access

10 - Parenting That Promotes Positive Social, Emotional and Behavioral Development in Middle Childhood

- from Part II - Parenting across Development: Social, Emotional, and Cognitive Influences

- Book: The Cambridge Handbook of Parenting

- Published online: 01 December 2022

- Print publication: 15 December 2022, pp. 213-235

- Chapter

Relationship building in pediatric research recruitment: Insights from qualitative interviews with research staff

- Journal: Journal of Clinical and Translational Science / Volume 6 / Issue 1 / 2022

- Published online by Cambridge University Press: 03 October 2022, e138

- Article

- Open access

Mobilizing digital technology to implement a population-based psychological support response during the COVID-19 pandemic in Lima, Peru

- Journal: Global Mental Health / Volume 9 / 2022

- Published online by Cambridge University Press: 28 July 2022, pp. 355-365

- Article

- Open access

Participation in cost-offset community-supported agriculture by low-income households in the USA is associated with community characteristics and operational practices

- Journal: Public Health Nutrition / Volume 25 / Issue 8 / August 2022

- Published online by Cambridge University Press: 13 April 2022, pp. 2277-2287

- Article

- Open access

Acceptability and experience of a personalised proteomic risk intervention for type 2 diabetes in primary care: qualitative interview study with patients and healthcare providers

- Journal: Primary Health Care Research & Development / Volume 23 / 2022

- Published online by Cambridge University Press: 01 April 2022, e24

- Article

- Open access

Vancomycin-resistant Enterococcus sequence type 1478 spread across hospitals participating in the Canadian Nosocomial Infection Surveillance Program from 2013 to 2018

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 1 / January 2023

- Published online by Cambridge University Press: 10 March 2022, pp. 17-23

- Print publication: January 2023

- Article

- Open access

Comparison of the performance of a clinical classification tree versus clinical gestalt in predicting sepsis with extended-spectrum beta-lactamase–producing gram-negative rods

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue 1 / 2022

- Published online by Cambridge University Press: 07 March 2022, e35

- Article

- Open access