325 results

Rift propagation signals the last act of the Thwaites Eastern Ice Shelf despite low basal melt rates

- Journal: Journal of Glaciology, First View

- Published online by Cambridge University Press: 19 September 2024, pp. 1-18

- Article

- Open access

Morphodynamics and management challenges for beaches in modified estuaries and bays

- Journal: Cambridge Prisms: Coastal Futures / Volume 2 / 2024

- Published online by Cambridge University Press: 27 August 2024, e11

- Article

- Open access

Self-identified strategies to manage intake of tempting foods: cross-sectional and prospective associations with BMI and snack intake

- Journal: Public Health Nutrition / Volume 27 / Issue 1 / 2024

- Published online by Cambridge University Press: 20 March 2024, e107

- Article

- Open access

Head and Neck Cancer: United Kingdom National Multidisciplinary Guidelines, Sixth Edition

- Journal: The Journal of Laryngology & Otology / Volume 138 / Issue S1 / April 2024

- Published online by Cambridge University Press: 14 March 2024, pp. S1-S224

- Print publication: April 2024

- Article

- Open access

Illite/Smectite Geothermometry of the Proterozoic Oronto Group, Midcontinent Rift System

- Journal: Clays and Clay Minerals / Volume 41 / Issue 2 / April 1993

- Published online by Cambridge University Press: 28 February 2024, pp. 134-147

- Article

A commensal Fast Radio Burst search pipeline for the Murchison Widefield Array

- Journal: Publications of the Astronomical Society of Australia / Volume 41 / 2024

- Published online by Cambridge University Press: 29 January 2024, e011

- Article

- Open access

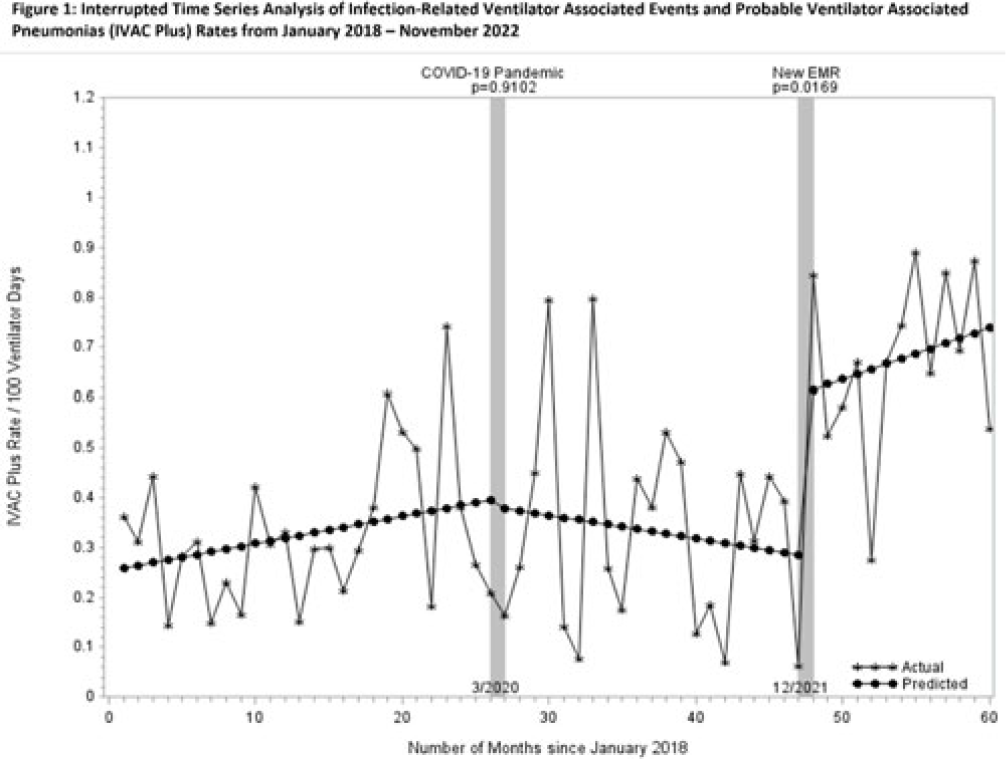

Increasing rates of ventilator-associated events: Blame it on COVID-19?

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press: 29 September 2023, pp. s105-s106

- Article

- Open access

Agricultural Research Service Weed Science Research: Past, Present, and Future

- Journal: Weed Science / Volume 71 / Issue 4 / July 2023

- Published online by Cambridge University Press: 16 August 2023, pp. 312-327

- Article

- Open access

Associations between dietary variety, portion size and body weight: prospective evidence from UK Biobank participants

- Journal: British Journal of Nutrition / Volume 130 / Issue 7 / 14 October 2023

- Published online by Cambridge University Press: 16 January 2023, pp. 1267-1277

- Print publication: 14 October 2023

- Article

- Open access

Please Vote!!

- Journal: Microscopy Today / Volume 30 / Issue 6 / November 2022

- Published online by Cambridge University Press: 24 November 2022, p. 7

- Print publication: November 2022

- Article

A pilot trial investigating the feasibility of a future randomised controlled trial of Individualised Placement and Support for people unemployed with chronic pain recruiting in primary care

- Journal: Primary Health Care Research & Development / Volume 23 / 2022

- Published online by Cambridge University Press: 22 July 2022, e39

- Article

- Open access

P.084 5q spinal muscular atrophy Canadian Paediatric Surveillance Program – 2020-2021 results

- Journal: Canadian Journal of Neurological Sciences / Volume 49 / Issue s1 / June 2022

- Published online by Cambridge University Press: 24 June 2022, pp. S29-S30

- Article

Predicting asymptomatic severe acute respiratory coronavirus virus 2 (SARS-CoV-2) infection rates of inpatients: A time-series analysis — CORRIGENDUM

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 5 / May 2023

- Published online by Cambridge University Press: 25 May 2022, p. 859

- Print publication: May 2023

- Article

Predictors of persistent symptoms after severe acute respiratory coronavirus virus 2 (SARS-CoV-2) infection among healthcare workers: Results of a multisite survey

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 5 / May 2023

- Published online by Cambridge University Press: 06 April 2022, pp. 817-820

- Print publication: May 2023

- Article

Poor outcomes in both infection and colonization with carbapenem-resistant Enterobacterales

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 12 / December 2022

- Published online by Cambridge University Press: 02 February 2022, pp. 1840-1846

- Print publication: December 2022

- Article

- Open access

Non-opioid analgesics and post-operative pain following transoral robotic surgery for oropharyngeal cancer

- Journal: The Journal of Laryngology & Otology / Volume 136 / Issue 6 / June 2022

- Published online by Cambridge University Press: 10 January 2022, pp. 527-534

- Print publication: June 2022

- Article

4 - Neuropsychological and Biological Influences on Drinking Behavior Change

- from Part I - Micro Level

- Book: Dynamic Pathways to Recovery from Alcohol Use Disorder

- Published online: 23 December 2021

- Print publication: 06 January 2022, pp. 60-76

- Chapter

P.112 5q Spinal Muscular Atrophy Canadian Paediatric Surveillance Program - 2020 Results

- Journal: Canadian Journal of Neurological Sciences / Volume 48 / Issue s3 / November 2021

- Published online by Cambridge University Press: 05 January 2022, pp. S50-S51

- Article

A Warm Welcome to the New MSA Council Members

- Journal: Microscopy Today / Volume 30 / Issue 1 / January 2022

- Published online by Cambridge University Press: 31 January 2022, p. 7

- Print publication: January 2022

- Article

Humanizing the intensive care unit experience in a comprehensive cancer center: A patient- and family-centered improvement study

- Journal: Palliative & Supportive Care / Volume 20 / Issue 6 / December 2022

- Published online by Cambridge University Press: 14 December 2021, pp. 794-800

- Article

- Open access