716 results

Mental health impact of multiple sexually minoritized and gender expansive stressors among LGBTQ+ young adults: a latent class analysis

- Journal: Epidemiology and Psychiatric Sciences / Volume 33 / 2024

- Published online by Cambridge University Press: 11 April 2024, e22

- Article

- Open access

Hydrothermal Treatment of Smectite, Illite, and Basalt to 460°C: Comparison of Natural with Hydrothermally Formed Clay Minerals

- Journal: Clays and Clay Minerals / Volume 35 / Issue 4 / August 1987

- Published online by Cambridge University Press: 02 April 2024, pp. 241-250

- Article

The effect of older age on outcomes of rTMS treatment for treatment-resistant depression

- Journal: International Psychogeriatrics, First View

- Published online by Cambridge University Press: 25 March 2024, pp. 1-6

- Article

- Open access

Incidence of mental health diagnoses during the COVID-19 pandemic: a multinational network study

- Journal: Epidemiology and Psychiatric Sciences / Volume 33 / 2024

- Published online by Cambridge University Press: 04 March 2024, e9

- Article

- Open access

Prolonged bacterial carriage and hospital transmission detected by whole genome sequencing surveillance

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue 1 / 2024

- Published online by Cambridge University Press: 30 January 2024, e11

- Article

- Open access

78 Remotely monitored in-home IADLs can discriminate between normal cognition and mild cognitive impairment

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 381-382

- Article

31 Characterizing Sociodemographic Factors Associated with the Cognitive and Linguistic Scale (CALS) Among Pediatric Rehabilitation Patients Admitted for Traumatic Brain Injury

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 139-140

- Article

60 The Impact of Retirement Status on Cognitive Dysfunction in Alzheimer’s Disease

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 366-367

- Article

34 Association Between Subjective Cognitive Decline and Mental Wellbeing in Normal Cognition and MCI Older Adults

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 344-345

- Article

87 Idiopathic Autoimmune Encephalitis Influences Functional Recovery for Pediatric Patients Admitted to Inpatient Rehabilitation

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 78-79

- Article

Environmental contamination of postmortem blood cultures detected by whole-genome sequencing surveillance

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 12 / December 2023

- Published online by Cambridge University Press: 24 August 2023, pp. 2103-2105

- Print publication: December 2023

- Article

- Open access

Excessive fear of clusters of holes, its interaction with stressful life events and the association with anxiety and depressive symptoms: large epidemiological study of young people in Hong Kong

- Journal: BJPsych Open / Volume 9 / Issue 5 / September 2023

- Published online by Cambridge University Press: 14 August 2023, e151

- Article

- Open access

The evolving role of data & safety monitoring boards for real-world clinical trials

- Journal: Journal of Clinical and Translational Science / Volume 7 / Issue 1 / 2023

- Published online by Cambridge University Press: 02 August 2023, e179

- Article

- Open access

Psychological Characteristics and Quality of Life of Patients with Upper and Lower Functional Gastrointestinal Disorders

- Journal: European Psychiatry / Volume 66 / Issue S1 / March 2023

- Published online by Cambridge University Press: 19 July 2023, p. S401

- Article

- Open access

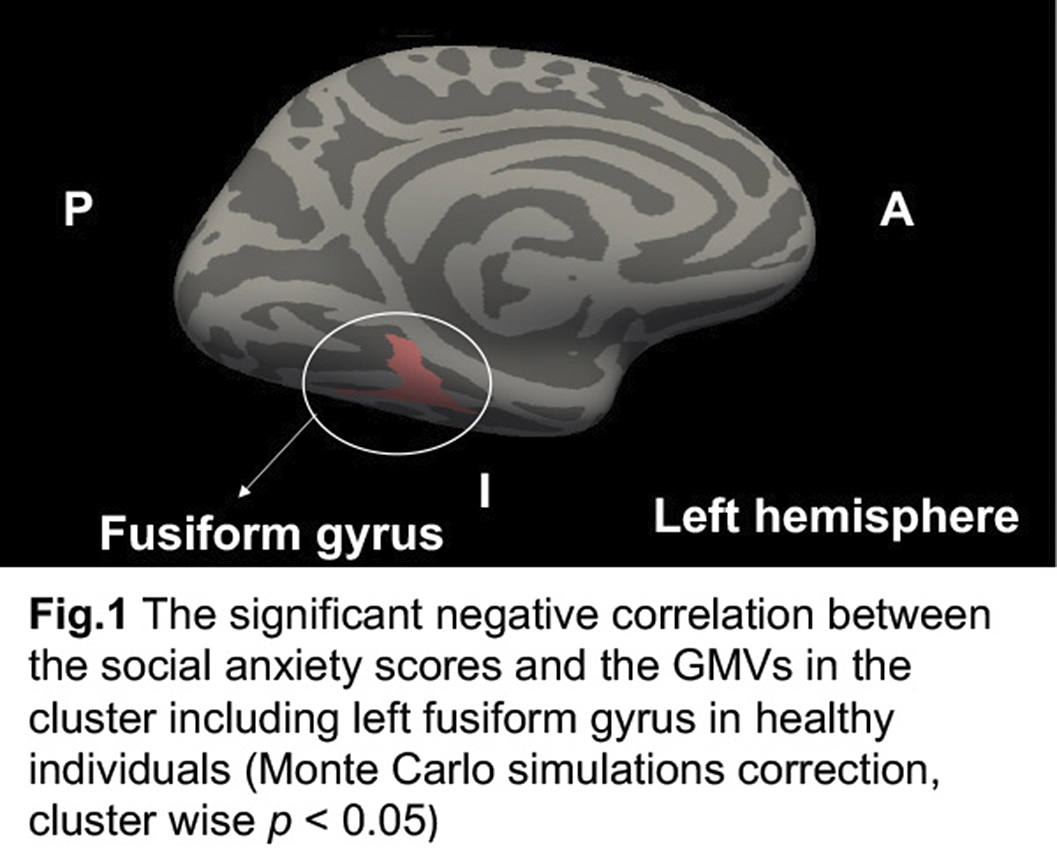

Fusiform Gyrus is Related to Subclinical Social Anxiety in Healthy Individuals

- Journal: European Psychiatry / Volume 66 / Issue S1 / March 2023

- Published online by Cambridge University Press: 19 July 2023, p. S189

- Article

- Open access

Systematic Review on the Mechanisms of Action of Psilocybin in the Treatment of Depression

- Journal: European Psychiatry / Volume 66 / Issue S1 / March 2023

- Published online by Cambridge University Press: 19 July 2023, pp. S416-S417

- Article

- Open access

Is Personality Disorder Madness? A Qualitative Study of the perceptions of Medical Students in Somaliland

- Journal: European Psychiatry / Volume 66 / Issue S1 / March 2023

- Published online by Cambridge University Press: 19 July 2023, p. S1119

- Article

- Open access

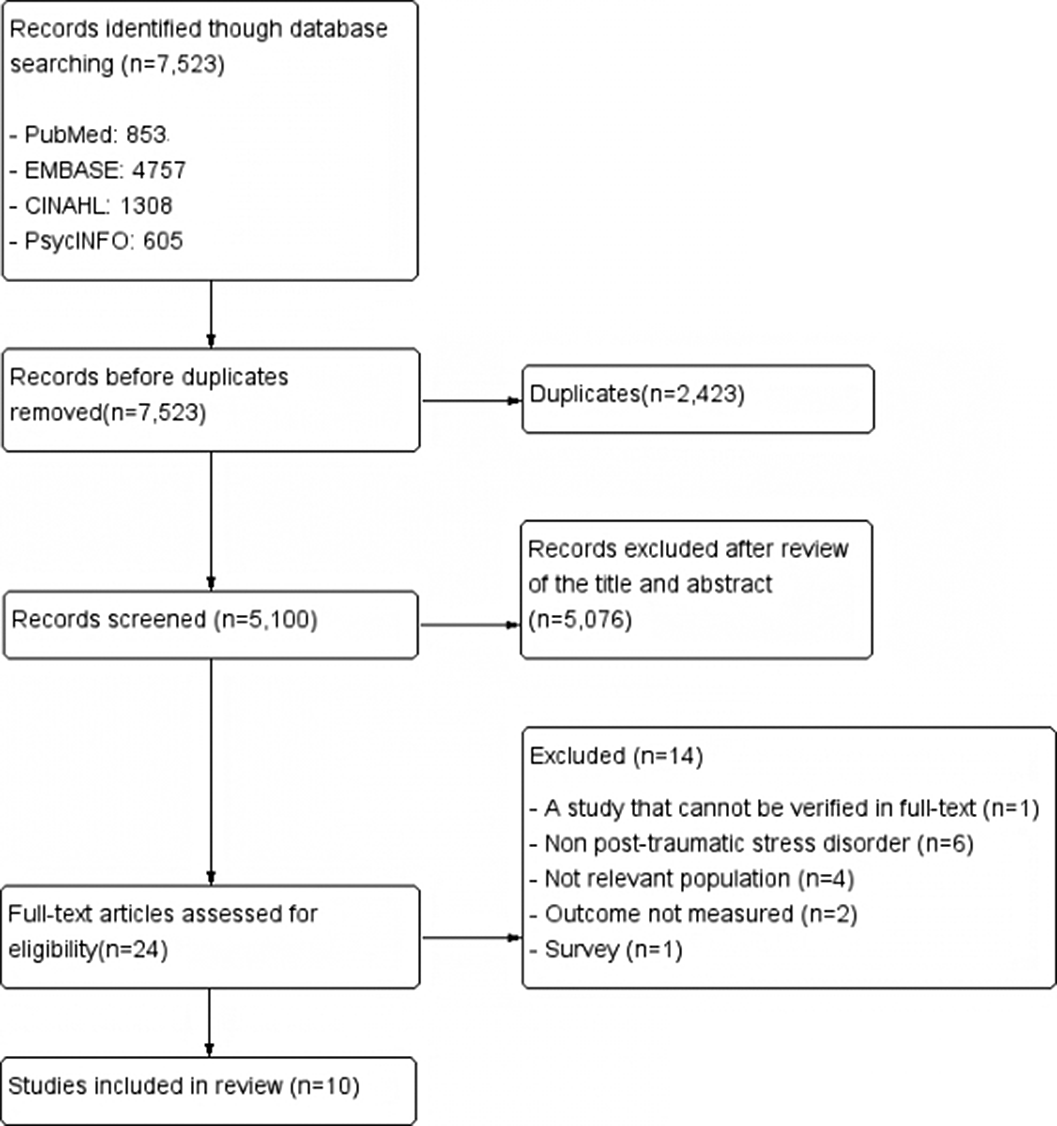

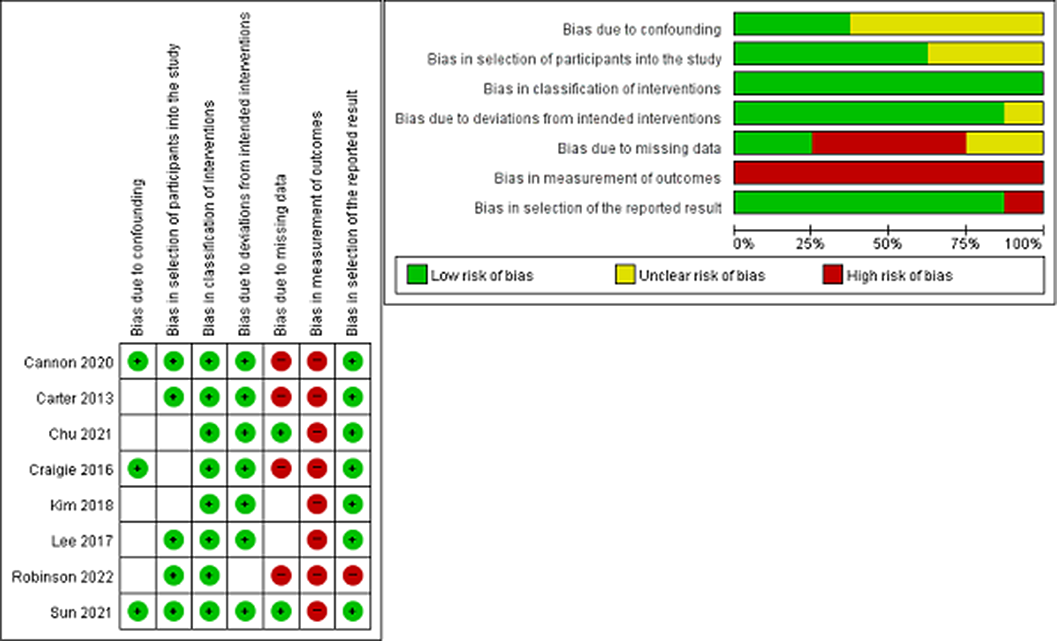

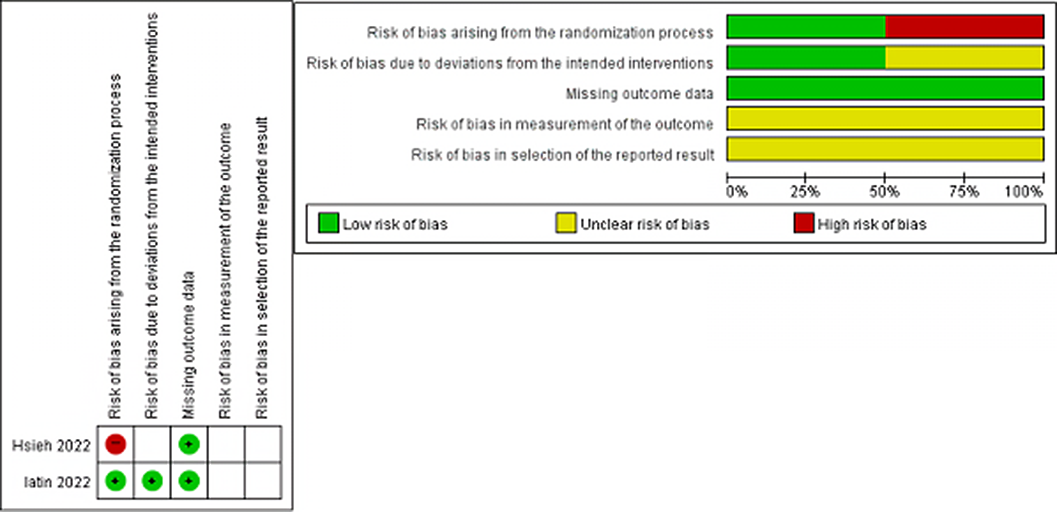

A Systematic Review of the Effect of Post-traumatic Stress Disorder Programs for Nurses

- Journal: European Psychiatry / Volume 66 / Issue S1 / March 2023

- Published online by Cambridge University Press: 19 July 2023, pp. S977-S978

- Article

- Open access

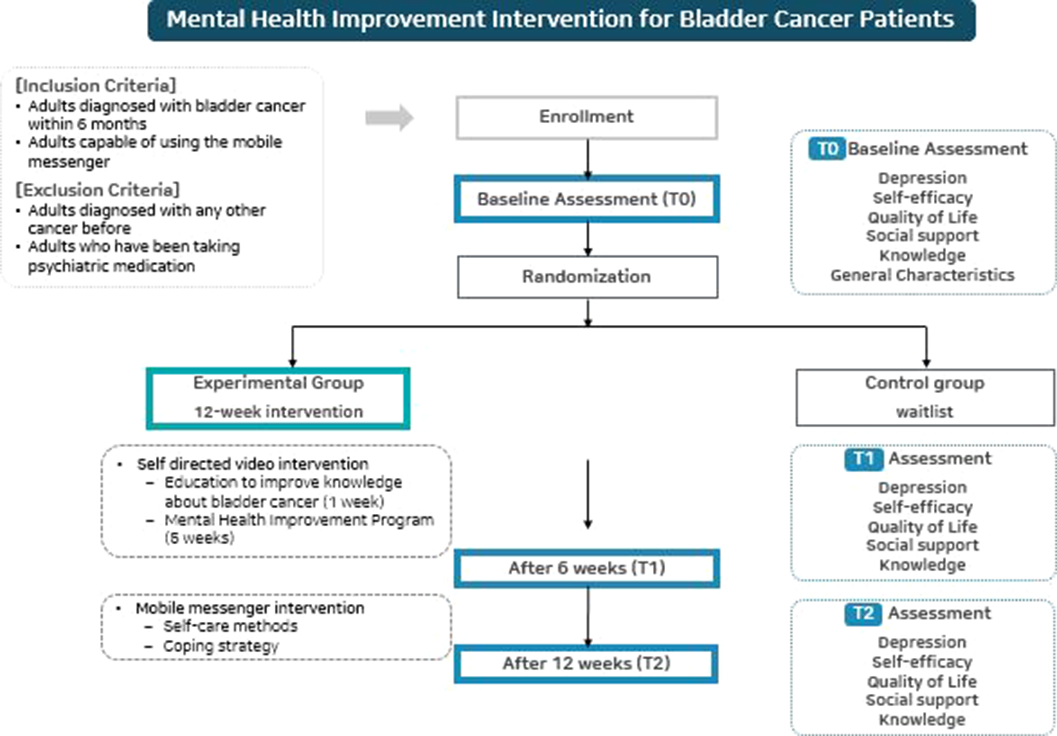

A mobile-based mental health improvement program for non-muscle invasive bladder cancer patients: Program development and feasibility protocol

- Journal: European Psychiatry / Volume 66 / Issue S1 / March 2023

- Published online by Cambridge University Press: 19 July 2023, pp. S362-S363

- Article

- Open access

A survey of the workplace experiences of police force employees who are autistic and/or have attention deficit hyperactivity disorder

- Journal: BJPsych Open / Volume 9 / Issue 4 / July 2023

- Published online by Cambridge University Press: 06 July 2023, e123

- Article

- Open access