Molecular Nutrition

Research Article

J-shaped association between dietary copper intake and all-cause mortality: a prospective cohort study in Chinese adults

- Published online by Cambridge University Press: 01 September 2022, pp. 1841-1847

Metabolism and Metabolic Studies

Scoping Review

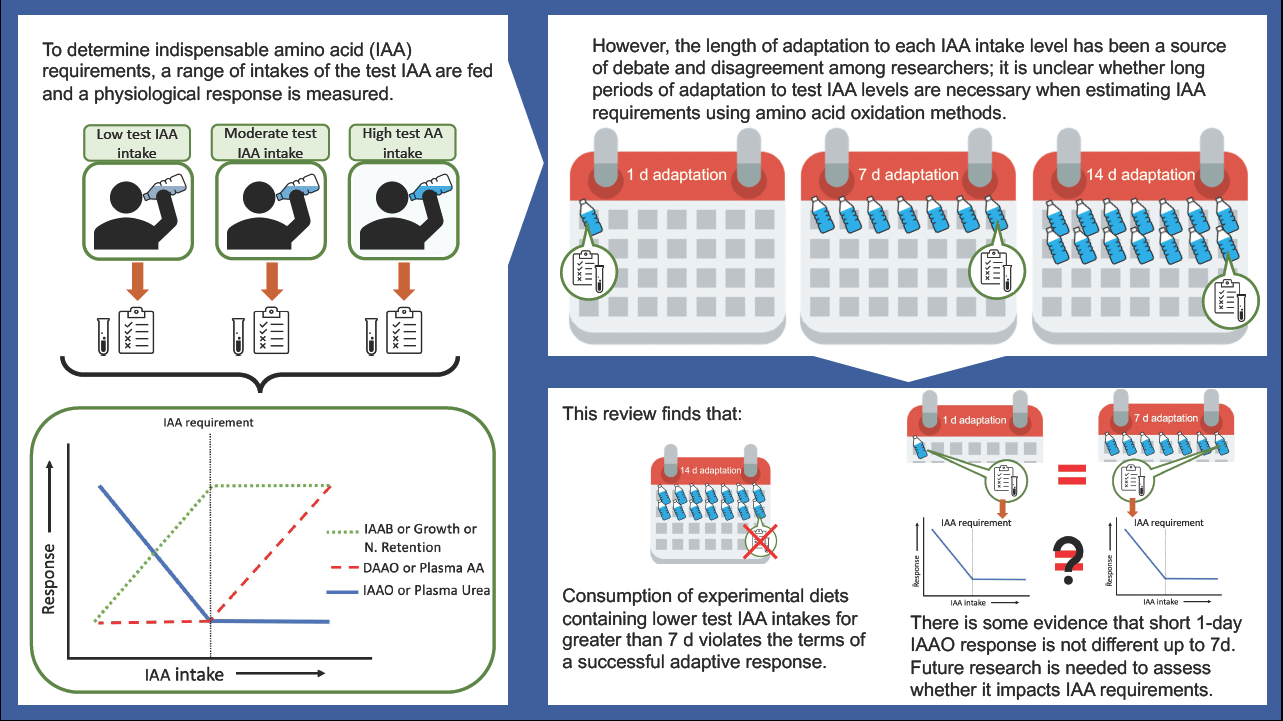

Amino acid oxidation methods to determine amino acid requirements: do we require lengthy adaptation periods?

- Published online by Cambridge University Press: 01 September 2022, pp. 1848-1854

- Open access

Research Article

Effect of live yeast supplementation and feeding frequency in male finishing pigs subjected to heat stress

- Published online by Cambridge University Press: 19 August 2022, pp. 1855-1870

Nutritional Endocrinology

Protocol Paper

Effect of taurine on glycaemic, lipid and inflammatory profile in individuals with type 2 diabetes: study protocol of a randomised trial

- Published online by Cambridge University Press: 01 September 2022, pp. 1871-1876

Human and Clinical Nutrition

Research Article

Diet therapy along with nutrition education can improve renal function in people with stages 3–4 chronic kidney disease who do not have diabetes: a randomised controlled trial

- Published online by Cambridge University Press: 07 July 2022, pp. 1877-1887

Role of sarcopenia risk in predicting COVID-19 severity and length of hospital stay in older adults: a prospective cohort study

- Published online by Cambridge University Press: 24 October 2022, pp. 1888-1896

Effects of inulin supplementation on inflammatory biomarkers and clinical symptoms of women with obesity and depression on a calorie-restricted diet: a randomised controlled clinical trial

- Published online by Cambridge University Press: 05 September 2022, pp. 1897-1907

Seasonal variation in vitamin D status of Japanese infants starts to emerge at 2 months of age: a retrospective cohort study

- Published online by Cambridge University Press: 26 August 2022, pp. 1908-1915

Dietary Surveys and Nutritional Epidemiology

Systematic Review and Meta-Analysis

Effect of COVID-19 outbreak on the diet, body weight and food security status of students of higher education: a systematic review

- Published online by Cambridge University Press: 10 August 2022, pp. 1916-1928

Triangulating evidence for the causal impact of single-intervention zinc supplement on glycaemic control for type 2 diabetes: systematic review and meta-analysis of randomised controlled trial and two-sample Mendelian randomisation

- Published online by Cambridge University Press: 10 August 2022, pp. 1929-1944

- Open access

Research Article

Evaluation and interpretation of latent class modelling strategies to characterise dietary trajectories across early life: a longitudinal study from the Southampton Women’s Survey

- Published online by Cambridge University Press: 15 August 2022, pp. 1945-1954

- Open access

Dietary sodium sources according to four 3-d weighed food records and their association with multiple 24-h urinary excretions among middle-aged and elderly Japanese participants in rural areas

- Published online by Cambridge University Press: 18 August 2022, pp. 1955-1963

Relative to processed red meat, alternative protein sources are associated with a lower risk of hypertension and diabetes in a prospective cohort of French women

- Published online by Cambridge University Press: 01 September 2022, pp. 1964-1975

Avocado consumption is associated with a reduction in hypertension incidence in Mexican women

- Published online by Cambridge University Press: 18 August 2022, pp. 1976-1983

Nutritional adequacy of commercial food products targeted at 0–36-month-old children: a study in Brazil and Portugal

- Published online by Cambridge University Press: 18 August 2022, pp. 1984-1992

Body composition and anthropometric indicators as predictors of blood pressure: a cross-sectional study conducted in young Algerian adults

- Published online by Cambridge University Press: 01 September 2022, pp. 1993-2000

Validation of the Thumbs food classification system as a tool to accurately identify the healthiness of foods

- Published online by Cambridge University Press: 30 August 2022, pp. 2001-2010

- Open access

Food and nutrient intakes and compliance with recommendations in school-aged children in Ireland: findings from the National Children’s Food Survey II (2017–2018) and changes since 2003–2004

- Published online by Cambridge University Press: 01 September 2022, pp. 2011-2024

- Open access

Front Cover (OFC, IFC) and matter

BJN volume 129 issue 11 Cover and Front matter

- Published online by Cambridge University Press: 05 May 2023, pp. f1-f2

Back Cover (OBC, IBC) and matter

BJN volume 129 issue 11 Cover and Back matter

- Published online by Cambridge University Press: 05 May 2023, pp. b1-b2