331 results

Peer review of clinical and translational research manuscripts: Perspectives from statistical collaborators

- Journal: Journal of Clinical and Translational Science / Volume 8 / Issue 1 / 2024
- Published online by Cambridge University Press: 04 January 2024, e20
- Article
- Open access

Apples to advocacy: Evaluating consumer preferences for hard cider policies

- Journal: Journal of Wine Economics / Volume 18 / Issue 4 / November 2023
- Published online by Cambridge University Press: 28 November 2023, pp. 286-301
- Article
- Open access

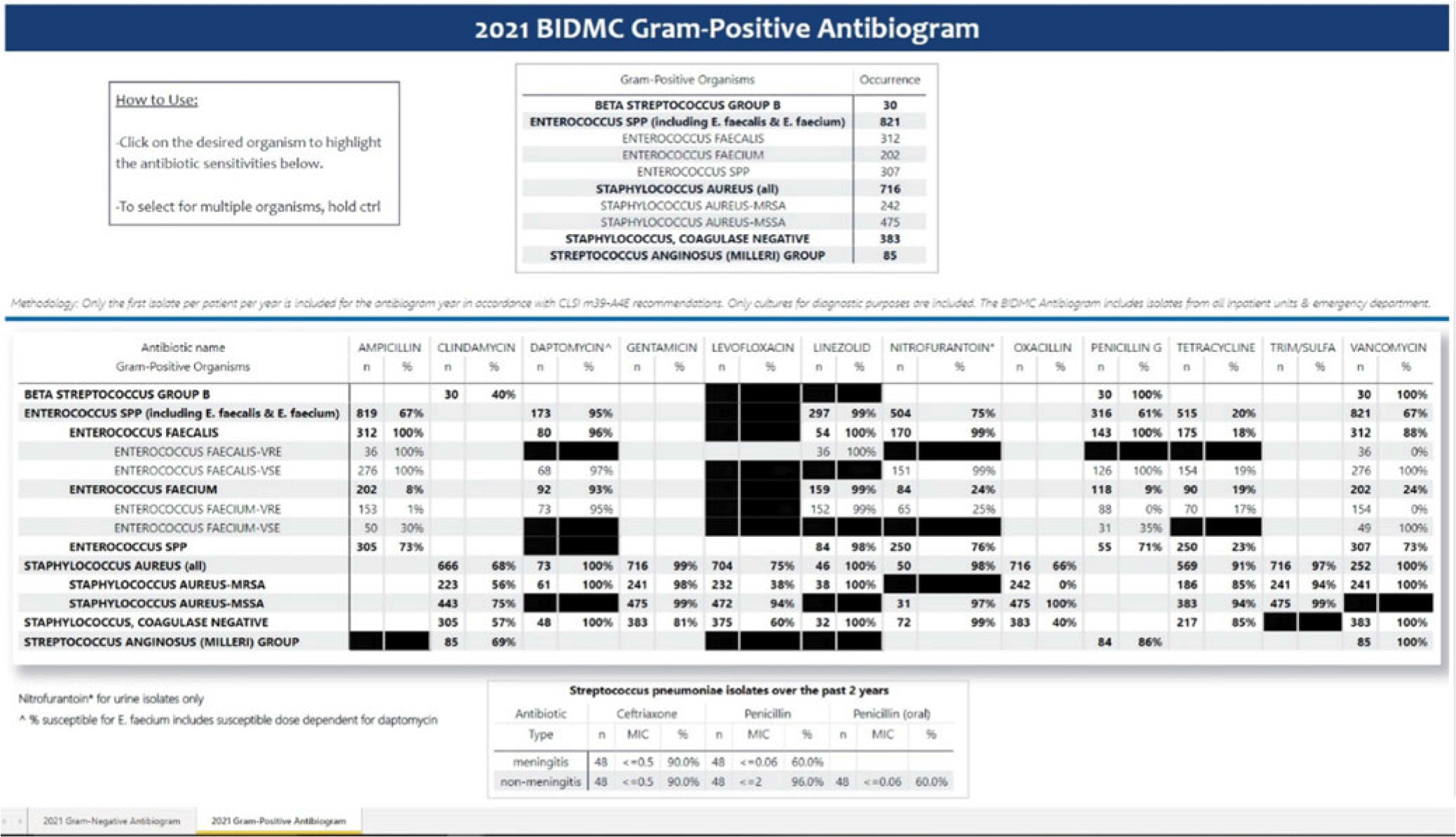

Creating an electronic antibiogram using visualization software: Easily updatable and removes the need for yearly manual review

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023
- Published online by Cambridge University Press: 29 September 2023, p. s34
- Article
- Open access

Description of Patients with Out-of-Hospital Cardiac Arrest within 24 Hours of EMS Transport Refusal.

- Journal: Prehospital and Disaster Medicine / Volume 38 / Issue S1 / May 2023
- Published online by Cambridge University Press: 13 July 2023, p. s106
- Print publication: May 2023
- Article

The Virtues of Interpretable Medical AI

- Journal: Cambridge Quarterly of Healthcare Ethics, First View
- Published online by Cambridge University Press: 10 January 2023, pp. 1-10
- Article
- Open access

Antidepressant medications in dementia: evidence and potential mechanisms of treatment-resistance

- Journal: Psychological Medicine / Volume 53 / Issue 3 / February 2023
- Published online by Cambridge University Press: 09 January 2023, pp. 654-667
- Article
- Open access

The Virtues of Interpretable Medical Artificial Intelligence

- Journal: Cambridge Quarterly of Healthcare Ethics, First View
- Published online by Cambridge University Press: 16 December 2022, pp. 1-10
- Article
- Open access

Occurrence of baleen whales in the New York Bight, 1998–2017: insights from opportunistic data

- Journal: Journal of the Marine Biological Association of the United Kingdom / Volume 102 / Issue 6 / September 2022
- Published online by Cambridge University Press: 02 November 2022, pp. 438-444
- Article

Radar attenuation demonstrates advective cooling in the Siple Coast ice streams

- Journal: Journal of Glaciology / Volume 69 / Issue 275 / June 2023
- Published online by Cambridge University Press: 11 October 2022, pp. 566-576
- Article
- Open access

Cost-effectiveness of mirtazapine for agitated behaviors in dementia: findings from a randomized controlled trial

- Journal: International Psychogeriatrics / Volume 34 / Issue 10 / October 2022
- Published online by Cambridge University Press: 19 July 2022, pp. 905-917
- Article
- Open access

Development of a model to predict antidepressant treatment response for depression among Veterans

- Journal: Psychological Medicine / Volume 53 / Issue 11 / August 2023
- Published online by Cambridge University Press: 15 July 2022, pp. 5001-5011
- Article

Suicidal ideation in dementia: associations with neuropsychiatric symptoms and subtype diagnosis

- Journal: International Psychogeriatrics / Volume 34 / Issue 4 / April 2022
- Published online by Cambridge University Press: 25 March 2022, pp. 399-406
- Article
- Open access

Birth without intervention in women with severe mental illness: cohort study

- Journal: BJPsych Open / Volume 8 / Issue 2 / March 2022
- Published online by Cambridge University Press: 24 February 2022, e50
- Article
- Open access

Development of a model to predict psychotherapy response for depression among Veterans

- Journal: Psychological Medicine / Volume 53 / Issue 8 / June 2023
- Published online by Cambridge University Press: 11 February 2022, pp. 3591-3600
- Article

Poor outcomes in both infection and colonization with carbapenem-resistant Enterobacterales

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 12 / December 2022
- Published online by Cambridge University Press: 02 February 2022, pp. 1840-1846
- Print publication: December 2022
- Article
- Open access

The baby and the bathwater: On the need for substantive–methodological synergy in organizational research

- Journal: Industrial and Organizational Psychology / Volume 14 / Issue 4 / December 2021
- Published online by Cambridge University Press: 14 December 2021, pp. 497-504
- Article

Characterisation of age and polarity at onset in bipolar disorder

- Journal: The British Journal of Psychiatry / Volume 219 / Issue 6 / December 2021
- Published online by Cambridge University Press: 25 August 2021, pp. 659-669
- Print publication: December 2021
- Article
- Open access

Trajectories of psychological distress among individuals exposed to the 9/11 World Trade Center disaster

- Journal: Psychological Medicine / Volume 52 / Issue 14 / October 2022
- Published online by Cambridge University Press: 07 April 2021, pp. 2950-2961
- Article
- Open access

60941 Vaginal pH predicts cervical intraepithelial neoplasia-2 regression in women living with human immunodeficiency virus

- Journal: Journal of Clinical and Translational Science / Volume 5 / Issue s1 / March 2021
- Published online by Cambridge University Press: 30 March 2021, pp. 23-24
- Article
- Open access

Klaus Bergmann, MD, FRCPsych

- Journal: BJPsych Bulletin / Volume 45 / Issue 4 / August 2021
- Published online by Cambridge University Press: 26 February 2021, pp. 251-252
- Print publication: August 2021
- Article
- Open access