Investigation and control of an outbreak of methicillin-susceptible Staphylococcus aureus skin and soft tissue infections in a level IV NICU

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, p. s27
- Article
- Open access

Acceptability of virtual psychiatric consultations for routine follow-ups post COVID-19 pandemic for people with intellectual disabilities: cross-sectional study

- Journal: BJPsych Open / Volume 10 / Issue 3 / May 2024
- Published online by Cambridge University Press: 19 April 2024, e90
- Article
- Open access

- HTML

- Export citation

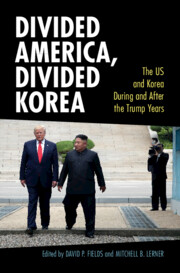

Figures

- Book: Divided America, Divided Korea
- Published online: 15 March 2024
- Print publication: 28 March 2024, pp vii-viii
- Chapter

Table

- Book: Divided America, Divided Korea
- Published online: 15 March 2024
- Print publication: 28 March 2024, pp xi-xi
- Chapter

Copyright page

- Book: Divided America, Divided Korea
- Published online: 15 March 2024
- Print publication: 28 March 2024, pp iv-iv
- Chapter

Acknowledgments

- Book: Divided America, Divided Korea
- Published online: 15 March 2024
- Print publication: 28 March 2024, pp xix-xx
- Chapter

Additional material

- Book: Divided America, Divided Korea
- Published online: 15 March 2024
- Print publication: 28 March 2024, pp xii-xiv
- Chapter

Contents

- Book: Divided America, Divided Korea
- Published online: 15 March 2024
- Print publication: 28 March 2024, pp v-vi
- Chapter

Index

- Book: Divided America, Divided Korea
- Published online: 15 March 2024
- Print publication: 28 March 2024, pp 233-244
- Chapter

Maps

- Book: Divided America, Divided Korea
- Published online: 15 March 2024
- Print publication: 28 March 2024, pp ix-x
- Chapter

Contributors

- Book: Divided America, Divided Korea
- Published online: 15 March 2024
- Print publication: 28 March 2024, pp xv-xviii
- Chapter

Divided America, Divided Korea: The US and Korea During and After the Trump Years

- Published online: 15 March 2024
- Print publication: 28 March 2024

Strategies to maintain an N95 respirator supply during a pandemic supply-chain shortage

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 5 / May 2024
- Published online by Cambridge University Press: 13 December 2023, pp. 688-689
- Print publication: May 2024
- Article

Risk factors associated with overweight and obesity in people with severe mental illness in South Asia: cross-sectional study in Bangladesh, India, and Pakistan

- Journal: Journal of Nutritional Science / Volume 12 / 2023
- Published online by Cambridge University Press: 21 November 2023, e116
- Article
- Open access

Advocacy at the Eighth World Congress of Pediatric Cardiology and Cardiac Surgery

- Journal: Cardiology in the Young / Volume 33 / Issue 8 / August 2023
- Published online by Cambridge University Press: 24 August 2023, pp. 1277-1287
- Article
- Open access

Efficacy and safety of a 4-week course of repeated subcutaneous ketamine injections for treatment-resistant depression (KADS study): randomised double-blind active-controlled trial

- Journal: The British Journal of Psychiatry / Volume 223 / Issue 6 / December 2023
- Published online by Cambridge University Press: 14 July 2023, pp. 533-541
- Print publication: December 2023
- Article
- Open access

Chapter 6 - Intravenous Anesthetics and Adjunctive Agents

- Book: Cambridge Handbook of Anesthesiology
- Published online: 24 May 2023
- Print publication: 08 June 2023, pp 86-105
- Chapter

Principles and Agents: The British Slave Trade and Its Abolition. By David Richardson. New Haven, Conn.: Yale University Press, 2022. 384 pp. Illustrations. Hardcover, $38.00. ISBN: 978-0-300-25043-5. Envoys of Abolition: British Naval Officers and the Campaign Against the Slave Trade in West Africa. By Mary Wills. Liverpool: Liverpool University Press, 2019. 256 pp. Illustrations. Paperback, $49.99. ISBN: 978-1-80207-771-1. Humanitarian Governance and the British Antislavery World System. By Maeve Ryan. New Haven, Conn.: Yale University Press, 2022. 328 pp. Hardcover, $50.00. ISBN: 978-0-300-25139-5

- Journal: Business History Review / Volume 97 / Issue 2 / Summer 2023
- Published online by Cambridge University Press: 25 September 2023, pp. 415-419
- Print publication: Summer 2023
- Article

The Impact of Chemical, Biological, Radiological, Nuclear and Explosive Events on Emergency Departments: An Integrative Review

- Journal: Prehospital and Disaster Medicine / Volume 38 / Issue S1 / May 2023
- Published online by Cambridge University Press: 13 July 2023, p. s3
- Print publication: May 2023
- Article

Derivation and validation of risk prediction for posttraumatic stress symptoms following trauma exposure

- Journal: Psychological Medicine / Volume 53 / Issue 11 / August 2023
- Published online by Cambridge University Press: 01 July 2022, pp. 4952-4961
- Article