54 results

Extended-Spectrum Beta-Lactamase Producing Enterobacterales Infections in the United States, 2012-2021

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s32-s33

- Article

- Open access

Organism-specific Trends in Carbapenem-resistant Enterobacterales Infections in a Cohort of Hospitalized Patients, 2012–2022

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, p. s156

- Article

- Open access

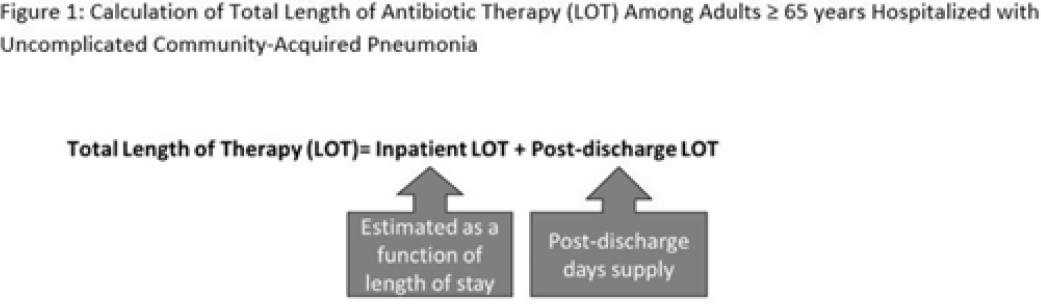

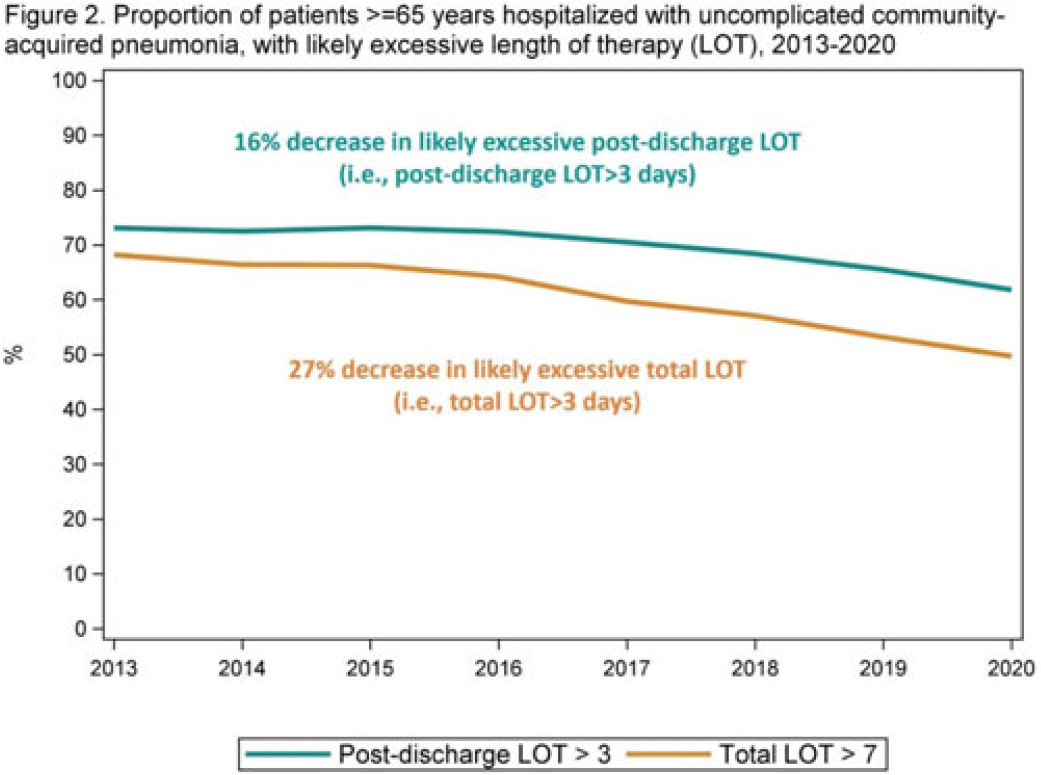

Length of antibiotic therapy among adults hospitalized with uncomplicated community-acquired pneumonia, 2013–2020

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 6 / June 2024

- Published online by Cambridge University Press: 14 February 2024, pp. 726-732

- Print publication: June 2024

- Article

Length of antibiotic therapy among adults aged ≥65 years hospitalized with uncomplicated community-acquired pneumonia, 2013-2020

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press: 29 September 2023, p. s26

- Article

- Open access

The Connelly House approach: occupational therapists facilitating the self-administration of medication in a psychiatric rehabilitation in-patient ward

- Journal: BJPsych Bulletin / Volume 47 / Issue 5 / October 2023

- Published online by Cambridge University Press: 20 October 2022, pp. 274-279

- Print publication: October 2023

- Article

- Open access

Trends in facility-level rates of Clostridioides difficile infections in US hospitals, 2019–2020

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 2 / February 2023

- Published online by Cambridge University Press: 19 May 2022, pp. 238-245

- Print publication: February 2023

- Article

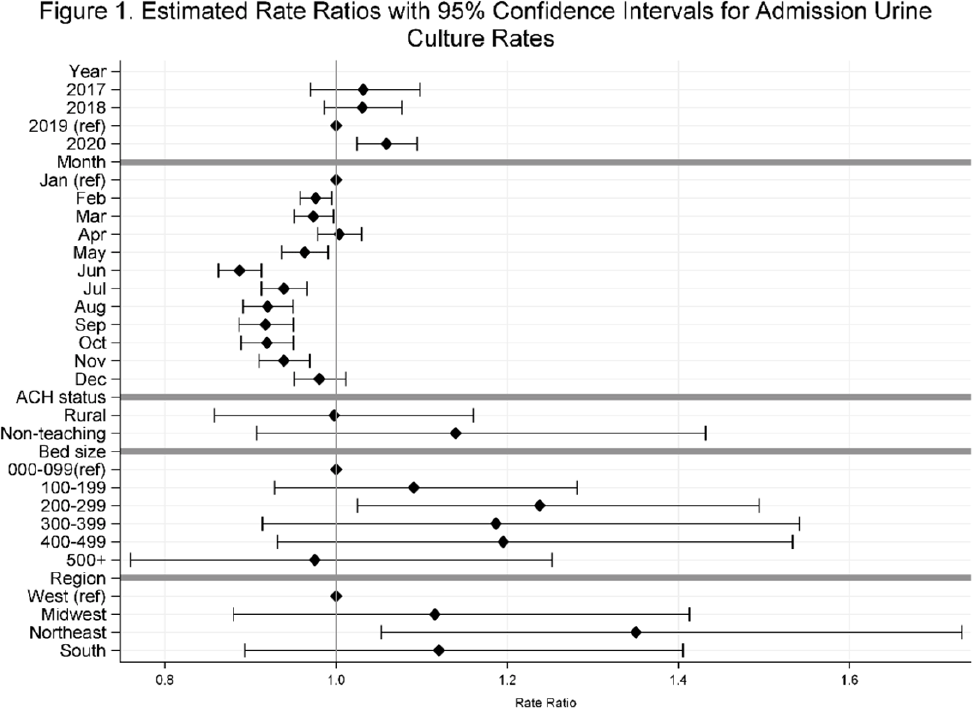

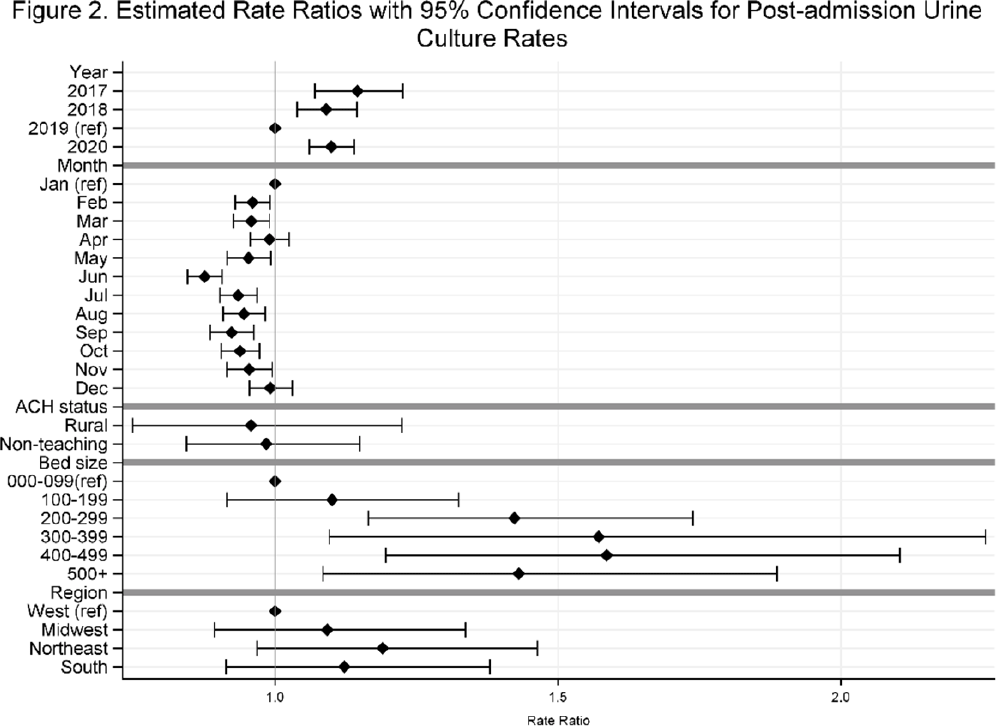

Temporal trends in urine-culture rates in the US acute-care hospitals, 2017–2020

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022

- Published online by Cambridge University Press: 16 May 2022, p. s12

- Article

- Open access

Using polygenic scores and clinical data for bipolar disorder patient stratification and lithium response prediction: machine learning approach – CORRIGENDUM

- Journal: The British Journal of Psychiatry / Volume 221 / Issue 2 / August 2022

- Published online by Cambridge University Press: 04 May 2022, p. 494

- Print publication: August 2022

- Article

- Open access

Using polygenic scores and clinical data for bipolar disorder patient stratification and lithium response prediction: machine learning approach

- Journal: The British Journal of Psychiatry / Volume 220 / Issue 4 / April 2022

- Published online by Cambridge University Press: 28 February 2022, pp. 219-228

- Print publication: April 2022

- Article

- Open access

Mindfulness based cognitive therapy for recurrent depressive disorder

- Journal: BJPsych Open / Volume 7 / Issue S1 / June 2021

- Published online by Cambridge University Press: 18 June 2021, p. S272

- Article

- Open access

Antipsychotic prescribing in dementia

- Journal: BJPsych Open / Volume 7 / Issue S1 / June 2021

- Published online by Cambridge University Press: 18 June 2021, pp. S91-S92

- Article

- Open access

Substance Use Diagnoses Among Persons with Community-Onset Methicillin-Resistant Staphylococcus aureus Bloodstream Infections

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020

- Published online by Cambridge University Press: 02 November 2020, pp. s392-s393

- Print publication: October 2020

- Article

Variability and Trends in Blood Culture Utilization, US Hospitals, 2012–2017

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020

- Published online by Cambridge University Press: 02 November 2020, pp. s430-s431

- Print publication: October 2020

- Article

Blueprint for Transparency at the U.S. Food and Drug Administration: Recommendations to Advance the Development of Safe and Effective Medical Products

- Journal: Journal of Law, Medicine & Ethics / Volume 45 / Issue S2 / Winter 2017

- Published online by Cambridge University Press: 01 January 2021, pp. 7-23

- Print publication: Winter 2017

- Article

Windowless EDS Detection of N Lines and their Practical use in sub 2 kV X-ray mapping to Optimize Spatial Resolution

- Journal: Microscopy and Microanalysis / Volume 22 / Issue S3 / July 2016

- Published online by Cambridge University Press: 25 July 2016, pp. 112-113

- Print publication: July 2016

- Article

Comparative pathogenesis of eosinophilic meningitis caused by Angiostrongylus mackerrasae and Angiostrongylus cantonensis in murine and guinea pig models of human infection

- Journal: Parasitology / Volume 143 / Issue 10 / September 2016

- Published online by Cambridge University Press: 09 June 2016, pp. 1243-1251

- Article

Contributors

- Book: Shakespeare Survey

- Published online: 05 October 2014

- Print publication: 02 October 2014, pp. vi-vi

- Chapter

Creation of Surge Capacity by Early Discharge of Hospitalized Patients at Low Risk for Untoward Events

- Journal: Disaster Medicine and Public Health Preparedness / Volume 3 / Issue S1 / June 2009

- Published online by Cambridge University Press: 08 April 2013, pp. S10-S16

- Article

Enhancing Community Disaster Resilience Through Mass Sporting Events

- Journal: Disaster Medicine and Public Health Preparedness / Volume 5 / Issue 4 / December 2011

- Published online by Cambridge University Press: 08 April 2013, pp. 310-315

- Article

From hope to crisis and back again? A critical history of the global CBNRM narrative

- Journal: Environmental Conservation / Volume 37 / Issue 1 / March 2010

- Published online by Cambridge University Press: 14 June 2010, pp. 5-15

- Article