Impact of a syndrome-based stewardship intervention on antipseudomonal beta-lactam use, antimicrobial resistance, C. difficile rates, and cost in a safety-net community hospital

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue 1 / 2024

- Published online by Cambridge University Press:

- 13 March 2024, e31

- Article

- Open access

The weight of beauty in psychological research

- Journal:

- Industrial and Organizational Psychology / Volume 17 / Issue 1 / March 2024

- Published online by Cambridge University Press:

- 07 March 2024, pp. 111-114

- Article

- Open access

How do we grow a Biodesigner?

- Journal:

- Research Directions: Biotechnology Design / Volume 2 / 2024

- Published online by Cambridge University Press:

- 28 February 2024, e2

- Article

- Open access

Impact of a midline catheter prioritization initiative on device utilization and central line-associated bloodstream infections at an urban safety-net community hospital

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue 1 / 2024

- Published online by Cambridge University Press:

- 16 February 2024, e27

- Article

- Open access

Changing the culture: impact of a diagnostic stewardship intervention for urine culture testing and CAUTI prevention in an urban safety-net community hospital

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue 1 / 2024

- Published online by Cambridge University Press:

- 29 January 2024, e14

- Article

- Open access

Communicating with families of young people with hard-to-treat cancers: Healthcare professionals’ perspectives on challenges, skills, and training

- Journal:

- Palliative & Supportive Care / Volume 22 / Issue 3 / June 2024

- Published online by Cambridge University Press:

- 24 January 2024, pp. 539-545

- Article

Structural Investigations of Natural and Synthetic Chlorite Minerals by X-ray Diffraction, Mössbauer Spectroscopy and Solid-State Nuclear Magnetic Resonance

- Journal:

- Clays and Clay Minerals / Volume 54 / Issue 2 / April 2006

- Published online by Cambridge University Press:

- 01 January 2024, pp. 252-265

- Article

A comparison of methods to balance geophysical flows

- Journal:

- Journal of Fluid Mechanics / Volume 971 / 25 September 2023

- Published online by Cambridge University Press:

- 08 September 2023, A2

- Article

- Open access

The Mopra Southern Galactic Plane CO Survey – data release 4 – complete survey

- Journal:

- Publications of the Astronomical Society of Australia / Volume 40 / 2023

- Published online by Cambridge University Press:

- 22 August 2023, e047

- Article

- Open access

A call to action: Taking the untenable out of women professors’ pregnancy, postpartum, and caregiving demands

- Journal:

- Industrial and Organizational Psychology / Volume 16 / Issue 2 / June 2023

- Published online by Cambridge University Press:

- 09 May 2023, pp. 187-210

- Article

508 A Study of Cortical Thickness in Bilingual Children with Reading Disability (Dyslexia)

- Journal:

- Journal of Clinical and Translational Science / Volume 7 / Issue s1 / April 2023

- Published online by Cambridge University Press:

- 24 April 2023, p. 144

- Article

- Open access

Feasibility and impact of a monoclonal antibody infusion program in reaching vulnerable underserved communities

- Journal:

- Infection Control & Hospital Epidemiology / Volume 44 / Issue 10 / October 2023

- Published online by Cambridge University Press:

- 04 April 2023, pp. 1690-1692

- Print publication:

- October 2023

- Article

- Open access

The Un-‘Common Sense’ of National Identity: Luigi Molina, Trentini and the Fascist Italianisation Campaign in Trentino-Alto Adige/Südtirol

- Journal:

- Contemporary European History, First View

- Published online by Cambridge University Press:

- 27 March 2023, pp. 1-21

- Article

- Open access

Impact of a culturally sensitive multilingual community outreach model on coronavirus disease 2019 (COVID-19) vaccinations at an urban safety-net community hospital

- Journal:

- Infection Control & Hospital Epidemiology / Volume 44 / Issue 9 / September 2023

- Published online by Cambridge University Press:

- 02 February 2023, pp. 1526-1528

- Print publication:

- September 2023

- Article

Limited resources or limited luck? Why people perceive an illusory negative correlation between the outcomes of choice options despite unequivocal evidence for independence

- Journal:

- Judgment and Decision Making / Volume 14 / Issue 5 / September 2019

- Published online by Cambridge University Press:

- 01 January 2023, pp. 573-590

- Article

- Open access

The livestock farming digital transformation: implementation of new and emerging technologies using artificial intelligence

- Journal:

- Animal Health Research Reviews / Volume 23 / Issue 1 / June 2022

- Published online by Cambridge University Press:

- 09 June 2022, pp. 59-71

- Article

- Open access

How we can bring I-O psychology science and evidence-based practices to the public

- Journal:

- Industrial and Organizational Psychology / Volume 15 / Issue 2 / June 2022

- Published online by Cambridge University Press:

- 26 May 2022, pp. 259-272

- Article

- Open access

- HTML

- Export citation

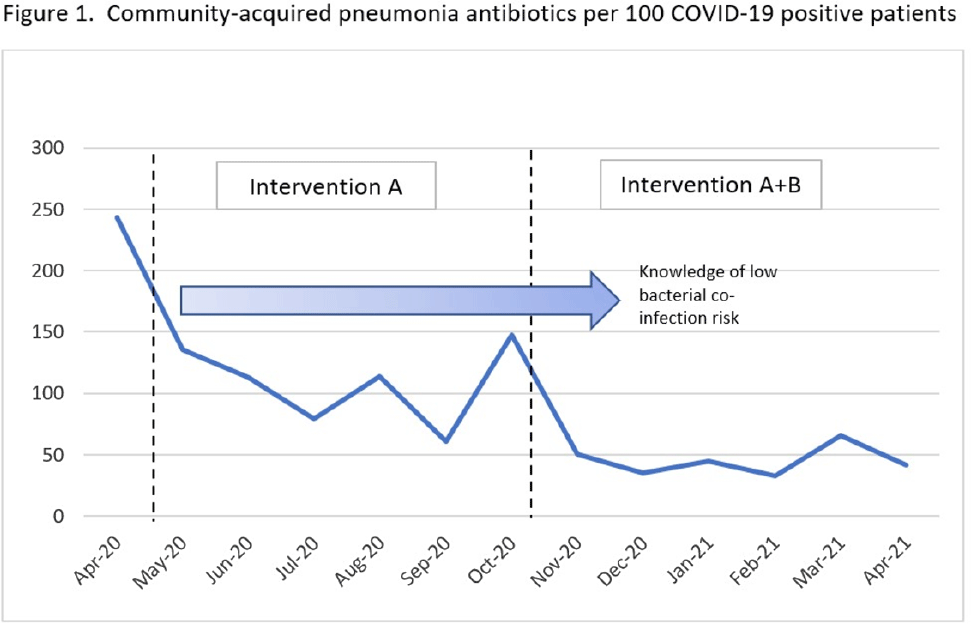

A little education goes a long way: Decreasing antibiotics for community-acquired pneumonia in COVID-19 patients

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022

- Published online by Cambridge University Press:

- 16 May 2022, pp. s4-s5

- Article

- Open access

451 Unique Gray Matter Volume Differences in Bilingual Children with Reading Disability

- Journal:

- Journal of Clinical and Translational Science / Volume 6 / Issue s1 / April 2022

- Published online by Cambridge University Press:

- 19 April 2022, p. 89

- Article

- Open access
