In well-fed populations, child growth is expected to be regular and consistent with anthropometric standards. Abnormal patterns of growth, whether undernutrition or obesity, are assessed by specific indicators such as weight-for-age, weight-for-height, BMI, head circumference, mid-upper arm circumference (MUAC), triceps skinfold and subscapular skinfold.

These indicators can be used to monitor the nutritional status of an individual or to assess the nutritional status of a group of children. At the individual level, extensive research has documented that MUAC provides a good assessment of the risk of death and is more and more frequently used in therapeutic feeding programmes to select children in need of treatment(Reference Myatt, Khara and Collins1, 2). In contrast, little research has been conducted on the behaviour of these indicators in situations of nutritional stress, i.e. short-term food shortage or higher demand due to severe morbidity. Which anthropometric indicator is the most responsive for assessment of the nutritional status of populations of children remains an open question. This has multiple implications for anthropometric assessment in developing countries.

In emergency situations, the nutritional situation is usually evaluated based on anthropometric surveys carried out in children under 5 years of age. In a WHO document published in 2000, the situation is said to be acceptable, poor, serious or critical when the proportion of wasted children, i.e. with a weight-for-height Z-score (WHZ) less than −2, is below 5 %, between 5 % and 10 %, between 10 % and 15 % or above 15 %, respectively(3). This classification later evolved, in particular to include food security indicators, but the prevalence of wasting remains a key element in population nutritional assessment and alert thresholds used in UN documents remain the same(4), although the introduction of WHO growth standards in 2006 led to major changes in wasting prevalence since the publication of the 2000 report(Reference Seal and Kerac5).
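
The prevalence thresholds described above can be illustrated with a minimal sketch. The function names are hypothetical, and the treatment of values falling exactly on 5 %, 10 % or 15 % is an assumption, since the classification as quoted here does not say which category a boundary value belongs to.

```python
def wasting_prevalence(whz_scores: list[float]) -> float:
    """Return the percentage of children classified as wasted (WHZ < -2)."""
    if not whz_scores:
        raise ValueError("at least one WHZ value is required")
    wasted = sum(1 for z in whz_scores if z < -2.0)
    return 100.0 * wasted / len(whz_scores)


def classify_wasting_prevalence(prevalence_percent: float) -> str:
    """Map a wasting prevalence (in %) to the four severity labels."""
    if not 0.0 <= prevalence_percent <= 100.0:
        raise ValueError("prevalence must be a percentage between 0 and 100")
    # Assumption: a value exactly on a threshold is placed in the more
    # severe category; the text only says "below", "between" and "above".
    if prevalence_percent < 5.0:
        return "acceptable"
    if prevalence_percent < 10.0:
        return "poor"
    if prevalence_percent < 15.0:
        return "serious"
    return "critical"
```

For example, a survey in which 12 % of children have WHZ below −2 would be labelled "serious" under these thresholds.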

Using WHZ for population assessment has several limitations, however. First, the standard procedure involves weighing and measuring a total of typically more than 600 children in thirty different clusters(3), which is time-consuming and requires substantial resources. Weight and height measurement requires a team of two trained people and heavy equipment that is not easy to carry around(Reference Cogill6). Second, WHZ is influenced by body proportion, and in particular leg length, which varies between different populations(Reference Myatt, Duffield and Seal7). This is potentially a concern, as children with long legs, who usually are in better health(Reference Bogin and Varela-Silva8), are more easily classified as malnourished with WHZ. Third, these WHZ-based thresholds are used for making decisions about implementing large-scale programmes of management of severe acute malnutrition, but these programmes often identify children in need of treatment by MUAC(2). MUAC often does not classify as malnourished the same children as those defined by WHZ(Reference Myatt, Khara and Collins1), which leads to difficulties when planning a response. As a result, some guidelines also recommend reporting the number of children with low MUAC (<115 mm) as part of nutritional surveillance(9). Beyond all the limitations of the WHZ surveys, their basic assumption, namely that WHZ is the most appropriate anthropometric indicator for measuring nutritional stress, has never been adequately tested. Adaptation to food shortage involves fat and muscle tissue mobilization to provide fuel for body metabolism(Reference Cahill10), with a special stress on muscle when food shortage is associated with infection(Reference Lecker, Solomon and Mitch11). Fat and muscle represent less than 30 % of body weight in children(12) and the relevance of weight-based indices can be questioned, especially when compared with MUAC, which directly measures muscle and fat mass.

Responsiveness, defined as the change of an indicator divided by its standard deviation, seems the most appropriate measure to assess the relevance of different indicators to measure nutritional stress(Reference Habicht and Pelletier13). Ideally, for comparison, responsiveness should be calculated among all possible nutritional indicators before and during a crisis situation. These data are not readily available, but useful information can be obtained from rural communities experiencing large short-term variations in body composition, such as seasonal variations associated with variations in food availability and morbidity.

The objective of the present study was to compare the responsiveness of selected anthropometric indicators in a rural community during different seasons and to measure their contrast (i.e. the difference in responsiveness) between seasons with and without nutritional stress.

Data and methods

Study population

The study area covered thirty villages in the department of Fatick, an area located about 150 km east of Dakar, the capital city of Senegal (West Africa). The population is poor and lives primarily on subsistence agriculture, growing mainly millet, maize and peanuts (groundnuts). The area is a dry orchard savannah. The climate is harsh, with two distinctive seasons: a rainy season with heavy rainfall from June to October, and a dry season with virtually no rain for the rest of the year. Most crops are planted at the beginning of the rainy season (June) and harvested at the end (September–October). The rainy season is a time of heavy transmission of malaria (mostly Plasmodium falciparum). During the rainy season children undergo severe stress due to food shortage (until the next harvest), malaria, a variety of diarrhoeal diseases and the fact that parents have less time to care for children because of the heavy workload in the fields. During the dry season malaria transmission stops, food is more abundant and mothers have more time to devote to young children. However, the dry season is marked by intense transmission of airborne diseases, in particular measles, whooping cough and meningitis. The study area was the focus of sporadic research between 1962 and 1982 and of intense research activity from 1983 onwards, which was still ongoing in 2012. The core of the research is organized around a comprehensive demographic surveillance system covering the whole population of some 30 000 persons(Reference Garenne and Cantrelle14).

The present study was part of a broader study on the relationship between nutritional status assessed by anthropometry and child survival undertaken in 1983–1984. The broader study has been described in detail elsewhere(Reference Garenne, Maire and Fontaine15, Reference Garenne, Willie and Maire16). In brief, some 5000 children under 5 years of age living in the study area were visited four times at 6-month intervals in May and November 1983 and in May and November 1984. Intervals between two visits included the dry, post-harvest season (from November to May) and the wet pre-harvest season (from May to November). Altogether, growth data were available for two wet seasons (May 1983 to November 1983, and May 1984 to November 1984) and one dry season (November 1983 to May 1984). For the present study, we selected a sub-sample of children who were present at two successive visits, who were 6–23 months of age at the first visit and who had complete anthropometric measures. This age group was selected to maximize contrast because moderate and severe malnutrition, and in particular seasonal malnutrition, occur mostly in this age group, and rarely below age 6 months or after age 24 months.

Anthropometric measures

At each visit, a full anthropometric assessment was conducted on all children who were present, including weight, height/length, head circumference, arm circumference, triceps skinfold and subscapular skinfold. All measurements followed standard procedures and were taken with high-quality equipment by the investigators themselves. Length was measured for children unable to stand, usually below 24 months, and height for older children. Weight was measured with beam scales with a precision of 10 g (SECA France, Semur en Auxois, France); length or height was measured with metal length/height boards with a precision of 1 mm (Holtain Ltd, Crymych, UK). Circumferences were measured with fibreglass tapes and skinfold thickness with standard callipers (Holtain Ltd). Only one measure was taken for each child at each visit by qualified persons (e.g. B.M. and O.F.).

Statistical analysis

Univariate analysis

All anthropometric measures available were used for the present study. First, we used plain values of all measures taken: weight, height/length, head circumference, arm circumference, triceps skinfold and subscapular skinfold. We computed muscle circumference as the difference between MUAC and π × triceps skinfold. Second, we used the BMI computed as the ratio of weight to height-squared. Third, we used standardized values of weight-for-age, height-for-age, weight-for-height and head circumference-for-age using Z-scores computed from the 2000 US Centers for Disease Control and Prevention growth charts (CDC-2000 reference set)(Reference Kuczmarski, Ogden and Guo17). We selected the CDC-2000 reference set because it had better screening value in this population than the 1977 National Center for Health Statistics growth reference or the 2006 WHO growth standards, in particular for assessing the mortality risk associated with low nutritional status. However, we also provide similar calculations with the 2006 WHO growth standards, for international comparisons.
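
The two derived measures defined above can be sketched as follows. The function names and the units are assumptions made for illustration (MUAC and height in cm, skinfold in mm, weight in kg); the text does not state the units used in the original computation.

```python
import math


def arm_muscle_circumference_cm(muac_cm: float, triceps_skinfold_mm: float) -> float:
    """Muscle circumference = MUAC - pi x triceps skinfold.

    Assumption: MUAC is recorded in cm and the skinfold in mm, so the
    skinfold is converted to cm before subtraction.
    """
    triceps_skinfold_cm = triceps_skinfold_mm / 10.0
    return muac_cm - math.pi * triceps_skinfold_cm


def body_mass_index(weight_kg: float, height_cm: float) -> float:
    """BMI as weight divided by height squared, in kg/m^2."""
    if height_cm <= 0:
        raise ValueError("height must be positive")
    height_m = height_cm / 100.0
    return weight_kg / height_m ** 2
```

With a MUAC of 13·8 cm and a hypothetical triceps skinfold of 7 mm, the muscle circumference under these unit assumptions would be about 11·6 cm.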

For each indicator, we computed the mean (μ) and standard deviation (σ) at baseline, i.e. at the first visit. We defined the change (Δ) in any selected indicator as the difference between the value at the next visit and the value at the previous visit. Since the mean time interval from one visit to the next was 176 d, with minor variations, we did not standardize the raw values for semester (183 d) in the univariate analysis. The ‘responsiveness’ of each indicator was defined as the change divided by the standard deviation of the same indicator (ρ = Δ/σ). This responsiveness gives a measure of the change (growth or loss) over a semester compared with the variation of the indicator in the population. The higher the value, the more responsive is the indicator for measuring changes. This definition is similar to that introduced earlier by other authors(Reference Habicht and Pelletier13). The ‘contrast’ was defined as the difference between the responsiveness during the rainy and the dry season (κ = ρ₁ − ρ₂, where ρ₁ and ρ₂ denote responsiveness in the rainy and dry season, respectively). The higher the contrast in absolute value, the better is the indicator to measure the change in body size and body composition during a period of nutritional stress.
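
The definitions of responsiveness and contrast can be illustrated with a short sketch. It is not the authors' code: the function names are hypothetical, Δ is taken as the mean change across children, and the sample standard deviation (n − 1 denominator) is assumed for σ, which the text does not specify.

```python
from statistics import mean, stdev


def responsiveness(baseline: list[float], followup: list[float]) -> float:
    """Return rho = delta / sigma for one indicator over one interval.

    delta is the mean of (next visit - previous visit) across children;
    sigma is the standard deviation of the indicator at baseline.
    """
    if len(baseline) != len(followup):
        raise ValueError("baseline and follow-up must cover the same children")
    delta = mean(after - before for before, after in zip(baseline, followup))
    sigma = stdev(baseline)  # assumption: sample SD with n - 1 denominator
    return delta / sigma


def contrast(rho_rainy: float, rho_dry: float) -> float:
    """Return kappa = rho_1 - rho_2 (rainy minus dry season)."""
    return rho_rainy - rho_dry
```

A negative contrast therefore means that the indicator changed less favourably during the rainy season than during the dry season, and its absolute value is what ranks the indicators.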

Multivariate analysis

A multivariate analysis was carried out to provide a net effect independent of sex, age and duration of interval. This analysis was conducted using linear regression. The dependent variable was the change in the indicator during the interval between two successive visits. The control variables were the duration between two visits (in d), sex (1 for males, 0 for females) and age (in months), and the main independent variable was season (1 for rainy season, 0 for dry season). The net effect of season for each anthropometric indicator was provided directly by the coefficient of season in the linear regression (β) and the ‘contrast’ was computed as the coefficient of season divided by the standard deviation (κ′ = β/σ). All statistical calculations were done with the SPSS statistical software package version 11.
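
The adjusted contrast κ′ = β/σ can be sketched with an ordinary least-squares fit. The original analysis was run in SPSS; the code below is only an illustrative reimplementation using the normal equations in plain Python, with hypothetical function and argument names, and it assumes the sample standard deviation of the baseline values for σ.

```python
from statistics import stdev


def solve_linear_system(a: list[list[float]], b: list[float]) -> list[float]:
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix copy
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("design matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def ols(predictors: list[tuple], y: list[float]) -> list[float]:
    """Return [intercept, b1, b2, ...] from the normal equations X'X b = X'y."""
    design = [[1.0] + [float(v) for v in row] for row in predictors]
    k = len(design[0])
    xtx = [[sum(d[i] * d[j] for d in design) for j in range(k)] for i in range(k)]
    xty = [sum(d[i] * yi for d, yi in zip(design, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def adjusted_contrast(changes, season, duration_d, sex, age_months, baseline_values):
    """Return kappa' = beta_season / sigma, controlling for duration, sex and age."""
    rows = list(zip(season, duration_d, sex, age_months))
    coefficients = ols(rows, changes)
    beta_season = coefficients[1]  # season is the first predictor after the intercept
    return beta_season / stdev(baseline_values)
```

Here `season` is coded 1 for rainy and 0 for dry and `sex` 1 for males and 0 for females, as in the text. A dedicated statistics package would normally be preferred in practice, since it also reports standard errors and P values.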

Results

Sample size and main characteristics

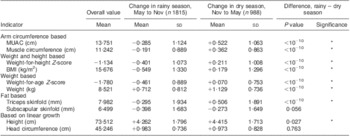

A total of 2803 children-intervals were kept for the final analysis, of which 775 occurred between the first and second visit (May 1983–October 1983: rainy season), 988 occurred between the second and third visit (November 1983–April 1984: dry season) and 1040 occurred between the third and fourth visit (May 1984–October 1984: rainy season). On average, children were well below the international reference for all indicators, with an average WHZ = −1·1 and an average MUAC = 13·8 cm, compared with an expected value of 15·5 cm in this age range. The corresponding WHZ value was −0·8 in the 2006 WHO growth standards (Table 1).

Table 1 Mean values and changes in anthropometric indicators during the dry and rainy season among children aged 6–23 months, Niakhar, Senegal, 1983–1984

MUAC, mid-upper arm circumference.

*P < 0·05 (standard t tests).

Corresponding values in 2006 WHO growth standard: change = −0·261 (sd 1·039) in rainy season and change = +0·342 (sd 0·987) in dry season for weight-for-height Z-score; change = −0·429 (sd 0·818) in rainy season and change = +0·153 (sd 0·754) in dry season for weight-for-age Z-score.

Growth during the dry and rainy seasons

Child growth was markedly different during the rainy season when compared with the dry season. For several growth indicators, changes were negative during the rainy season, whereas they were positive during the dry season (MUAC, muscle circumference, triceps skinfold). Changes also went in the same direction for composite indices such as WHZ, BMI and weight-for-age. All of these differences between dry and rainy season were highly significant (P < 10⁻¹⁰). As expected, linear growth was positive during both seasons (height, weight, head circumference), but was significantly slower during the rainy season than during the dry season, with the exception of head circumference (no difference). Subscapular skinfold had a different pattern, since it tended to decline with age, especially during the rainy season but also during the more favourable dry season, as expected from international standards.

Responsiveness

Univariate analysis

Values of responsiveness (Δ/σ) varied by indicator, and differed between the dry season and the rainy season (Fig. 1). Overall, the largest positive values were obtained for height, weight and head circumference. Low absolute values were obtained for MUAC, muscle circumference and triceps skinfold. Lowest negative values were obtained for BMI, WHZ and subscapular skinfold. More important, the contrast (difference between responsiveness during the rainy and during the dry season) varied strongly by indicator. It was highest in absolute value for MUAC, followed by muscle circumference, WHZ and BMI. Other indicators showed a lower contrast (weight, weight-for-age, triceps skinfold). Three indicators (subscapular skinfold, height and head circumference) showed no contrast in growth between the rainy and dry season (Table 2).

Fig. 1 Responsiveness of anthropometric indicators among children aged 6–23 months at baseline, by season (rainy season and dry season), Niakhar, Senegal 1983–1984. Note: anthropometric indicators are ranked by contrast (differences between two bars)

Table 2 Responsiveness in anthropometric indicators between dry and rainy seasons (univariate analysis) among children aged 6–23 months, Niakhar, Senegal 1983–1984

MUAC, mid-upper arm circumference.

Responsiveness is the change (Δ) divided by the standard deviation of the measure (σ). Contrast is the difference between responsiveness in rainy and dry seasons.

Corresponding values in 2006 WHO growth standards: responsiveness in rainy season = −0·23, responsiveness in dry season = +0·30 and contrast = 0·52 for weight-for-height Z-score; responsiveness in rainy season = −0·34, responsiveness in dry season = +0·12 and contrast = 0·46 for weight-for-age Z-score.

Multivariate analysis

The multivariate analysis confirmed the results of the univariate analysis (Table 3). The highest value of contrast was again found for MUAC. Other high values of contrast were found for WHZ, BMI and arm muscle circumference, followed by weight-for-age and triceps skinfold. As for the univariate analysis, the net effects of season were highly significant (all P < 10⁻¹⁰). On the other hand, height, head circumference and subscapular skinfold showed no contrast between the two seasons.

Table 3 Comparison of changes in anthropometric indicators between dry and rainy seasons (multivariate analysis) among children aged 6–23 months, Niakhar, Senegal, 1983–1984

MUAC, mid-upper arm circumference.

Control variables are age, sex and duration of interval. P values from t test on regression models.

Corresponding values in 2006 WHO growth standards: contrast = −0·55 for weight-for-height Z-score and contrast = −0·43 for weight-for-age Z-score.

Discussion

Comparison of responsiveness of different anthropometric measures and indices within seasons showed that in both seasons, height, weight and head circumference had the highest responsiveness. This suggests that these indices are the most appropriate to monitor growth velocity of children in a stable situation. This is consistent with the current practice of preferentially using these indices for routine growth monitoring of children.

Our hypothesis that responsiveness should vary between seasons was validated. On one hand, there was hardly any variation in head and linear growth between seasons. On the other hand, MUAC, which is directly related to both muscle mass and fat mass, was the most affected, and somewhat more than arm muscle circumference which discounts for fat. Other classic indicators of changing body composition such as WHZ and BMI also varied significantly, but were less sensitive than MUAC, as expected since they also include fluid mass. This suggests that MUAC is the nutritional indicator most responsive to nutritional stress at the population level.

The difference in contrast between triceps and subscapular skinfold thickness is difficult to interpret as it takes place at an age where fat stores are progressively decreasing(Reference Kuzawa18). In this age range (6–23 months), triceps skinfold is expected to remain roughly constant (although international standards are sometimes inconsistent), whereas subscapular skinfold is expected to decline (true in all standards). These two skinfolds measure different fat stores, likely to have different responses to stress. This point remains poorly studied, and we did not find any detailed analysis on this effect in the published literature. It deserves further research.

Our results are consistent with a previous study in rural Bangladesh which showed that normalized distance of different nutritional indices between seasons was greater for MUAC than for other nutritional indices(Reference Briend, Hasan and Aziz19). They are also consistent with our knowledge of adaptation during food deprivation and infection preferentially affecting fat and muscle(Reference Cahill10, Reference Lecker, Solomon and Mitch11) which are the main components of MUAC. This suggests that MUAC is more appropriate than other nutritional indices to measure nutritional stress at population level. This finding, however, requires confirmation in other settings and in other age groups.

In its document on nutrition in emergencies, WHO advised not to use MUAC and stated that its measurement error is too high(3). This document assumes that errors up to 10 mm are often observed when measuring MUAC. This estimation, however, seems very high for skilled observers, who can reproduce MUAC measures with an inter-observer correlation coefficient as high as 0·96 to 0·98(20). The precision of weight measures should not be overestimated either. Even if weight can be measured with 10 g accuracy in a quiet child, several factors impossible to standardize in population surveys such as stool and urine movements, time since last food or drink and hydration status will introduce random errors well beyond 10 g. Of note, MUAC has been shown to be less subject to measurement error than WHZ in a previous study(Reference Velzeboer, Selwyn and Sargent21) and shown to be less sensitive to hydration status than weight-based nutritional indices(Reference Mwangome, Fegan and Prentice22).

Even if measurement errors were higher for MUAC than for weight and WHZ, this is not enough to discard straightaway the use of MUAC for nutritional surveillance. Measurement errors are likely to increase baseline standard deviation and therefore to decrease responsiveness. Our results showing a greater responsiveness of MUAC suggest that these measurement errors are compensated by the greater variation of MUAC during nutritional stress, making it more detectable. This result, however, cannot be extrapolated to situations where MUAC is not carefully measured and bears a high random measurement error.

In addition to random errors, a systematic error or observer bias in MUAC measurement could also limit its use to monitor nutritional stress in a community. This is a real possibility as MUAC estimation is influenced by the tension applied to the tape during measurement(20). A simple device to standardize the tension applied to the tape has been recently described (http://tng.brixtonhealth.com/sites/default/files/equal.pull_.MUAC_.pdf). Its usefulness to remove possible systematic errors during MUAC measure should be explored. If the problem of systematic errors during MUAC measurement can be eliminated, our results suggest that it could be more appropriate than other indices for monitoring nutritional stress of a community, even in non-emergency situations.

Conclusions

Our data suggest that MUAC is the nutritional indicator most responsive to nutritional stress. Our data also show that WHZ is a useful indicator of nutritional stress, but apparently is not superior to MUAC as usually assumed in WHO and FAO documents. As WHZ is more difficult to measure, it should be preferred to MUAC only if shown to be definitely more responsive, which is not supported by our data. MUAC could be better suited to measuring nutritional stress in vulnerable populations, provided its measurement can be adequately standardized.

Acknowledgements

Source of funding: The Niakhar study was supported by the European Union, DG-XII, Grant # TDR-36 (M.G., B.M., A.B. and O.F.). Conflicts of interest: All authors declare no conflict of interest of any type. Authors’ contribution: The Niakhar study was initiated by M.G., B.M. and A.B.; the fieldwork was conducted by M.B., B.M. and O.F. All four authors contributed to the data analysis and the writing of the paper.