205 results

Application of a Model Using Prior Healthcare Information to Predict Multidrug-Resistant Organism (MDRO) Carriage

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, p. s111

- Article

- Open access

Impact and Safety of Diagnostic Stewardship to Improve Urine Culture Testing Among Patients with Indwelling Urinary Catheters

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s24-s25

- Article

- Open access

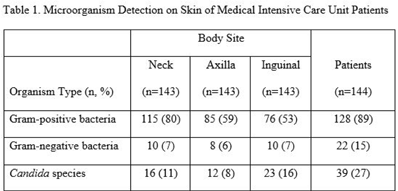

Relationship between chlorhexidine gluconate concentration and microbial colonization of patients’ skin

- Journal: Infection Control & Hospital Epidemiology, First View

- Published online by Cambridge University Press: 28 May 2024, pp. 1-6

- Article

The effectiveness of the COVID-19 vaccines in the prevention of post-COVID conditions in children and adolescents: a systematic literature review and meta-analysis

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue 1 / 2024

- Published online by Cambridge University Press: 19 April 2024, e54

- Article

- Open access

Assessing social cognition in patients with schizophrenia and healthy controls using the reading the mind in the eyes test (RMET): a systematic review and meta-regression

- Journal: Psychological Medicine / Volume 54 / Issue 5 / April 2024

- Published online by Cambridge University Press: 04 January 2024, pp. 847-873

- Article

- Open access

4 Risk Factor and Biomarker Correlates of FLAIR White Matter Hyperintensities in Former American Football Players

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 608-610

- Article

Depression is associated with reduced outcome sensitivity in a dual valence, magnitude learning task – ADDENDUM

- Journal: Psychological Medicine / Volume 54 / Issue 3 / February 2024

- Published online by Cambridge University Press: 24 November 2023, p. 637

- Article

- Open access

The effectiveness of COVID-19 vaccine in the prevention of post-COVID conditions: a systematic literature review and meta-analysis of the latest research

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue 1 / 2023

- Published online by Cambridge University Press: 13 October 2023, e168

- Article

- Open access

Depression is associated with reduced outcome sensitivity in a dual valence, magnitude learning task

- Journal: Psychological Medicine / Volume 54 / Issue 3 / February 2024

- Published online by Cambridge University Press: 14 September 2023, pp. 631-636

- Article

- Open access

Impact of measurement and feedback on chlorhexidine gluconate bathing among intensive care unit patients: A multicenter study

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 9 / September 2023

- Published online by Cambridge University Press: 13 September 2023, pp. 1375-1380

- Print publication: September 2023

- Article

Author response: Quantifying healthcare-acquired coronavirus disease 2019 (COVID-19) in hospitalized patients: A closer look

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 5 / May 2023

- Published online by Cambridge University Press: 27 April 2023, pp. 854-855

- Print publication: May 2023

- Article

Computerized-adaptive testing versus short forms for pediatric inflammatory bowel disease patient-reported outcome assessment

- Journal: Journal of Clinical and Translational Science / Volume 7 / Issue 1 / 2023

- Published online by Cambridge University Press: 14 April 2023, e109

- Article

- Open access

SG-APSIC1035: Prospective safety surveillance study of ACAM2000 smallpox vaccine in deployed military personnel

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S1 / February 2023

- Published online by Cambridge University Press: 16 March 2023, p. s10

- Article

- Open access

16 - Sociopragmatics and Intercultural Interaction

- from Part III - Interface of Intercultural Pragmatics and Related Disciplines

- Book: The Cambridge Handbook of Intercultural Pragmatics

- Published online: 29 September 2022

- Print publication: 20 October 2022, pp. 420-444

- Chapter

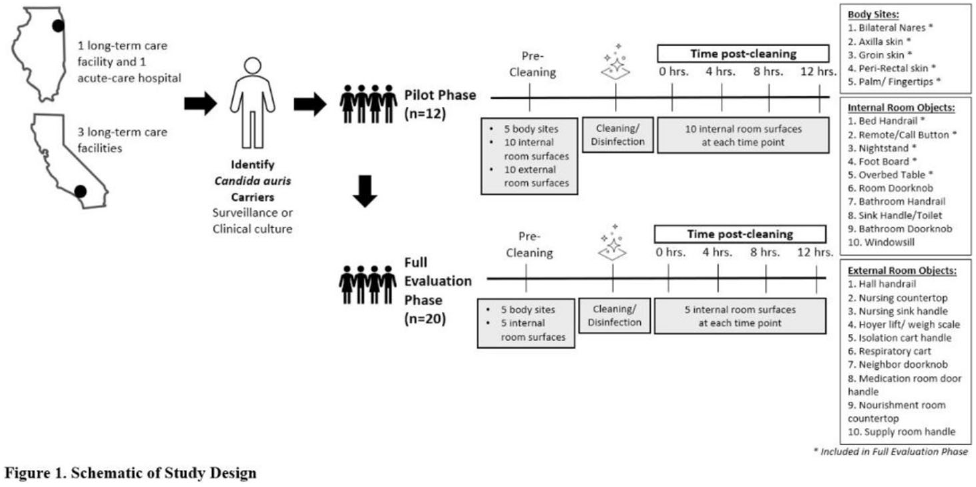

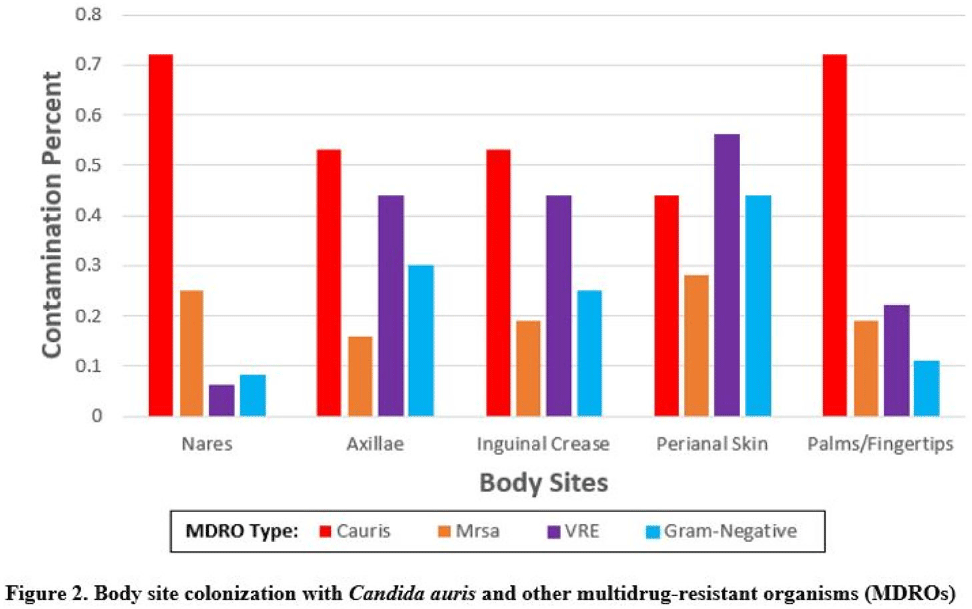

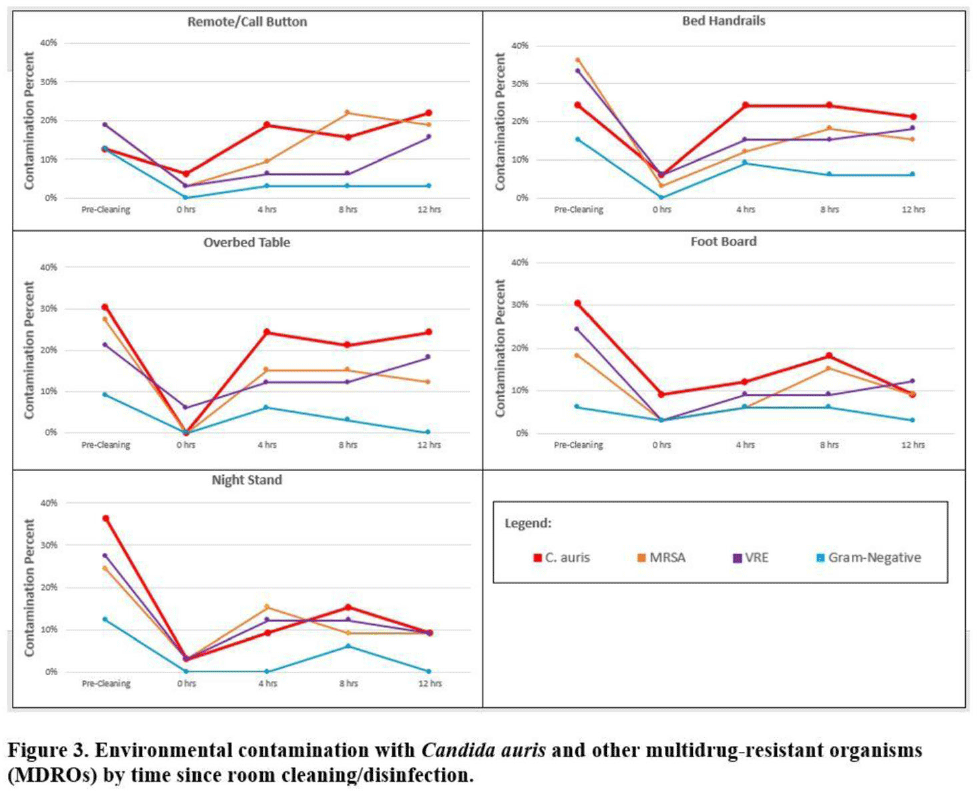

Multicenter evaluation of contamination of the healthcare environment near patients with Candida auris skin colonization – ERRATUM

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue 1 / 2022

- Published online by Cambridge University Press: 07 October 2022, e166

- Article

- Open access

Derivation and validation of risk prediction for posttraumatic stress symptoms following trauma exposure

- Journal: Psychological Medicine / Volume 53 / Issue 11 / August 2023

- Published online by Cambridge University Press: 01 July 2022, pp. 4952-4961

- Article

Morphometric analysis of stem-group mollusks from the northern Yangtze Craton, China

- Journal: Journal of Paleontology / Volume 96 / Issue 5 / September 2022

- Published online by Cambridge University Press: 27 May 2022, pp. 1024-1036

- Article

Multicenter evaluation of contamination of the healthcare environment near patients with Candida auris skin colonization

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022

- Published online by Cambridge University Press: 16 May 2022, pp. s78-s79

- Article

- Open access

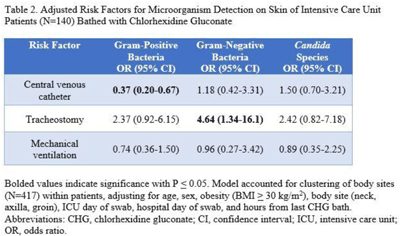

Indwelling medical devices and skin microorganisms on ICU patients bathed with chlorhexidine gluconate

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022

- Published online by Cambridge University Press: 16 May 2022, pp. s43-s44

- Article

- Open access

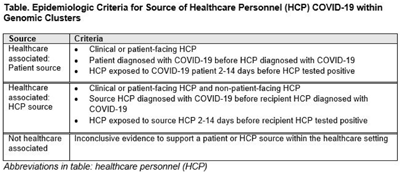

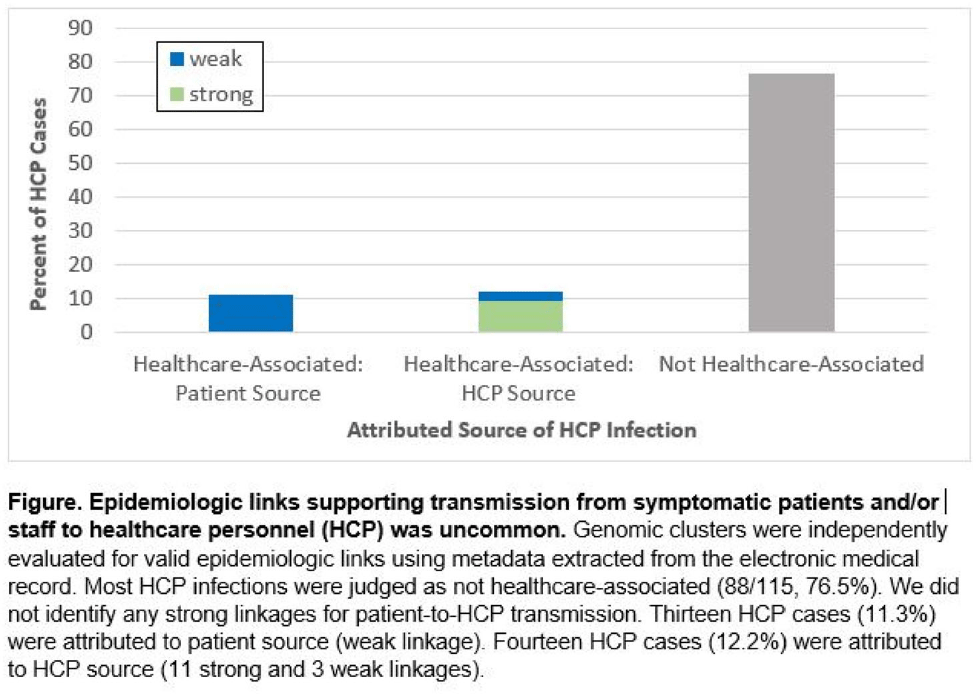

Genomic investigation to identify the source of SARS-CoV-2 infection among healthcare personnel

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022

- Published online by Cambridge University Press: 16 May 2022, pp. s74-s75

- Article

- Open access