388 results

The Future of Qualitative Research in Healthcare

- The Role and Management of Digital Methods

- Coming soon

- Expected online publication date: August 2024

- Print publication: 31 August 2024

- Book

The Rapid ASKAP Continuum Survey III: Spectra and Polarisation In Cutouts of Extragalactic Sources (SPICE-RACS) first data release – CORRIGENDUM

- Journal: Publications of the Astronomical Society of Australia / Volume 41 / 2024

- Published online by Cambridge University Press: 03 June 2024, e039

- Article

- Open access

Becoming and acting as an ally against weight-based discrimination

- Journal: Industrial and Organizational Psychology / Volume 17 / Issue 1 / March 2024

- Published online by Cambridge University Press: 07 March 2024, pp. 142-147

- Article

Impact of primary care triage using the Head and Neck Cancer Risk Calculator version 2 on tertiary head and neck services in the post-coronavirus disease 2019 period

- Journal: The Journal of Laryngology & Otology, First View

- Published online by Cambridge University Press: 22 January 2024, pp. 1-6

- Article

The predictive role of symptoms in COVID-19 diagnostic models: A longitudinal insight

- Journal: Epidemiology & Infection / Volume 152 / 2024

- Published online by Cambridge University Press: 22 January 2024, e37

- Article

- Open access

New Method Using Growth Dynamics to Quantify Microbial Contamination of Kaolinite Slurries

- Journal: Clays and Clay Minerals / Volume 61 / Issue 6 / December 2013

- Published online by Cambridge University Press: 01 January 2024, pp. 517-524

- Article

Helium as a Surrogate for Deuterium in LPI Studies

- Journal: Laser and Particle Beams / Volume 2023 / 2023

- Published online by Cambridge University Press: 01 January 2024, e2

- Article

- Open access

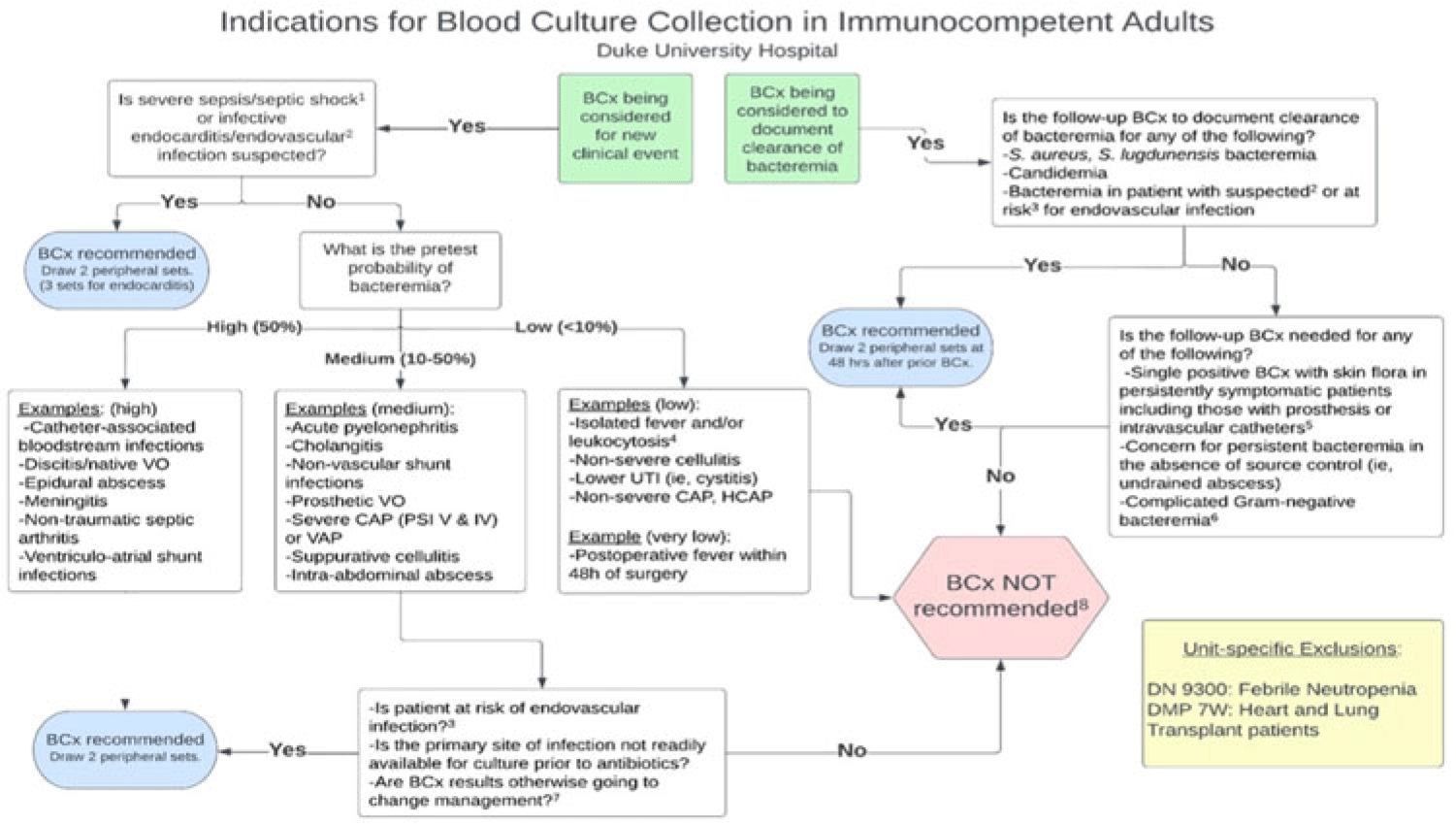

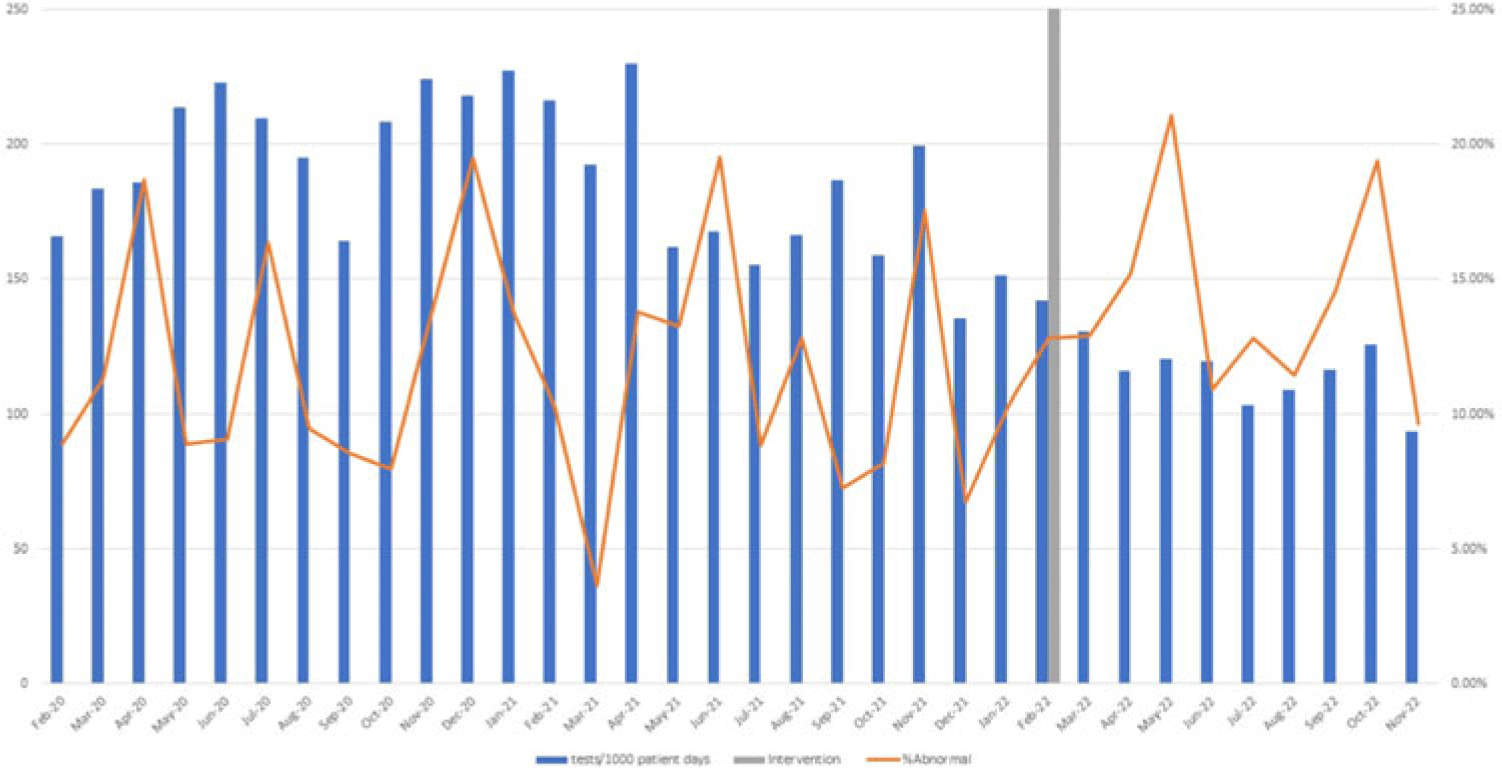

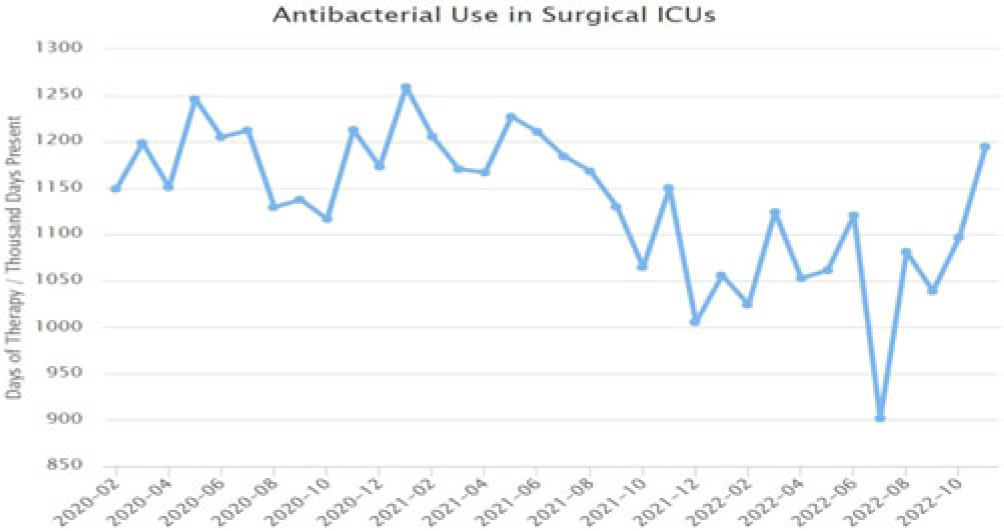

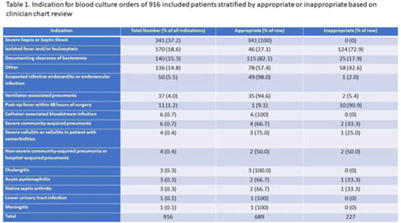

Implementation of a diagnostic stewardship intervention to improve blood-culture utilization in 2 surgical ICUs: Time for a blood-culture change

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 4 / April 2024

- Published online by Cambridge University Press: 11 December 2023, pp. 452-458

- Print publication: April 2024

- Article

- Open access

Radiofrequency ice dielectric measurements at Summit Station, Greenland

- Journal: Journal of Glaciology, First View

- Published online by Cambridge University Press: 09 October 2023, pp. 1-12

- Article

- Open access

Implementation of diagnostic stewardship in two surgical ICUs: Time for a blood-culture change

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press: 29 September 2023, pp. s9-s10

- Article

- Open access

Epidemiology of carbapenem-resistant and extended-spectrum beta-lactamase-producing Enterobacterales in US children, 2016–2020

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press: 29 September 2023, p. s16

- Article

- Open access

Investigation of the first cluster of Candida auris cases among pediatric patients in the United States―Nevada, May 2022

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press: 29 September 2023, pp. s118-s119

- Article

- Open access

An approach for collaborative development of a federated biomedical knowledge graph-based question-answering system: Question-of-the-Month challenges

- Journal: Journal of Clinical and Translational Science / Volume 7 / Issue 1 / 2023

- Published online by Cambridge University Press: 14 September 2023, e214

- Article

- Open access

The Rapid ASKAP Continuum Survey III: Spectra and Polarisation In Cutouts of Extragalactic Sources (SPICE-RACS) first data release

- Journal: Publications of the Astronomical Society of Australia / Volume 40 / 2023

- Published online by Cambridge University Press: 30 August 2023, e040

- Article

- Open access

Advocacy at the Eighth World Congress of Pediatric Cardiology and Cardiac Surgery

- Journal: Cardiology in the Young / Volume 33 / Issue 8 / August 2023

- Published online by Cambridge University Press: 24 August 2023, pp. 1277-1287

- Article

- Open access

Disinfection efficacy of Oxivir TB wipe residue on severe acute respiratory coronavirus virus 2 (SARS-CoV-2)

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 11 / November 2023

- Published online by Cambridge University Press: 19 July 2023, pp. 1891-1893

- Print publication: November 2023

- Article

- Open access

Assessing pig farm biosecurity measures for the control of Salmonella on European farms

- Journal: Epidemiology & Infection / Volume 151 / 2023

- Published online by Cambridge University Press: 13 July 2023, e130

- Article

- Open access

12 - Becoming Political

- from Conclusion

- Book: Making the Middle Republic

- Published online: 20 April 2023

- Print publication: 27 April 2023, pp. 253-269

- Chapter

Linear and nonlinear stability of Rayleigh–Bénard convection with zero-mean modulated heat flux

- Journal: Journal of Fluid Mechanics / Volume 961 / 25 April 2023

- Published online by Cambridge University Press: 11 April 2023, A1

- Article

- Open access

Using clinical decision support to improve urine testing and antibiotic utilization

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 10 / October 2023

- Published online by Cambridge University Press: 29 March 2023, pp. 1582-1586

- Print publication: October 2023

- Article

- Open access