Rapid Genomic Characterization of High-Risk, Antibiotic Resistant Pathogens Using Long-Read Sequencing to Identify Nosocomial

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press:

- 16 September 2024, p. s106

- Article

- Open access

Electrophysiological correlates of inhibitory control in children: Relations with prenatal maternal risk factors and child psychopathology

- Journal:

- Development and Psychopathology, First View

- Published online by Cambridge University Press:

- 24 April 2024, pp. 1-14

- Article

- Open access

Preventing unnecessary urine cultures at a Veteran’s affairs healthcare system

- Journal:

- Infection Control & Hospital Epidemiology, First View

- Published online by Cambridge University Press:

- 25 March 2024, pp. 1-3

- Article

- Open access

Head and Neck Cancer: United Kingdom National Multidisciplinary Guidelines, Sixth Edition

- Journal:

- The Journal of Laryngology & Otology / Volume 138 / Issue S1 / April 2024

- Published online by Cambridge University Press:

- 14 March 2024, pp. S1-S224

- Print publication:

- April 2024

- Article

- Open access

Examination of protective factors that promote prosocial skill development among children exposed to intimate partner violence

- Journal:

- Development and Psychopathology, First View

- Published online by Cambridge University Press:

- 28 February 2024, pp. 1-14

- Article

- Open access

4 Misinterpreting cognitive change over multiple timepoints: When practice effects meet age-related decline

- Journal:

- Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press:

- 21 December 2023, pp. 673-674

- Article

2 Neuropsychological Predictors of Posttraumatic Stress Disorder and Depressive Symptom Improvement in Compensatory Cognitive Training for Veterans with a History of Mild Traumatic Brain Injury

- Journal:

- Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press:

- 21 December 2023, pp. 515-517

- Article

Strategies to maintain an N95 respirator supply during a pandemic supply-chain shortage

- Journal:

- Infection Control & Hospital Epidemiology / Volume 45 / Issue 5 / May 2024

- Published online by Cambridge University Press:

- 13 December 2023, pp. 688-689

- Print publication:

- May 2024

-

- Article

-

- You have access

- HTML

- Export citation

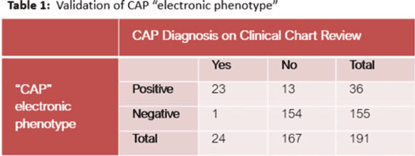

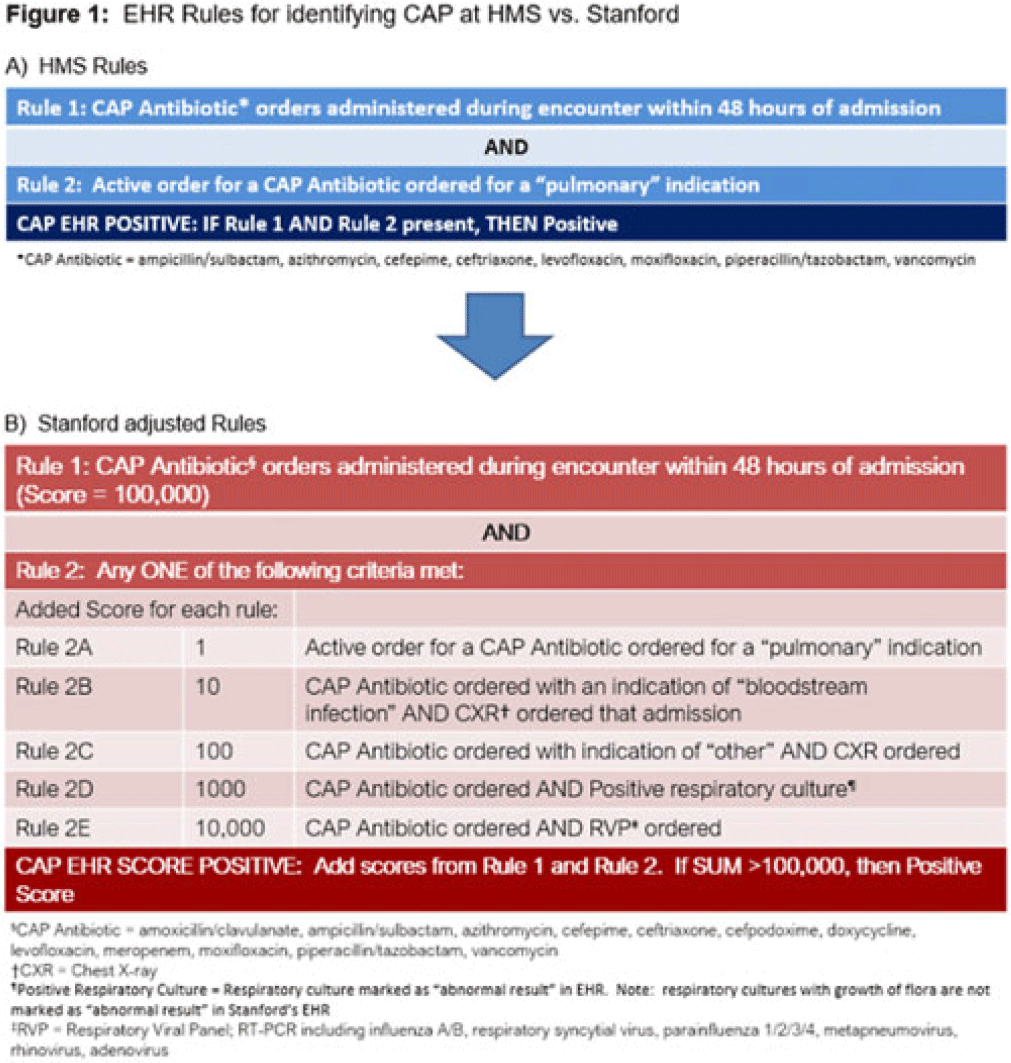

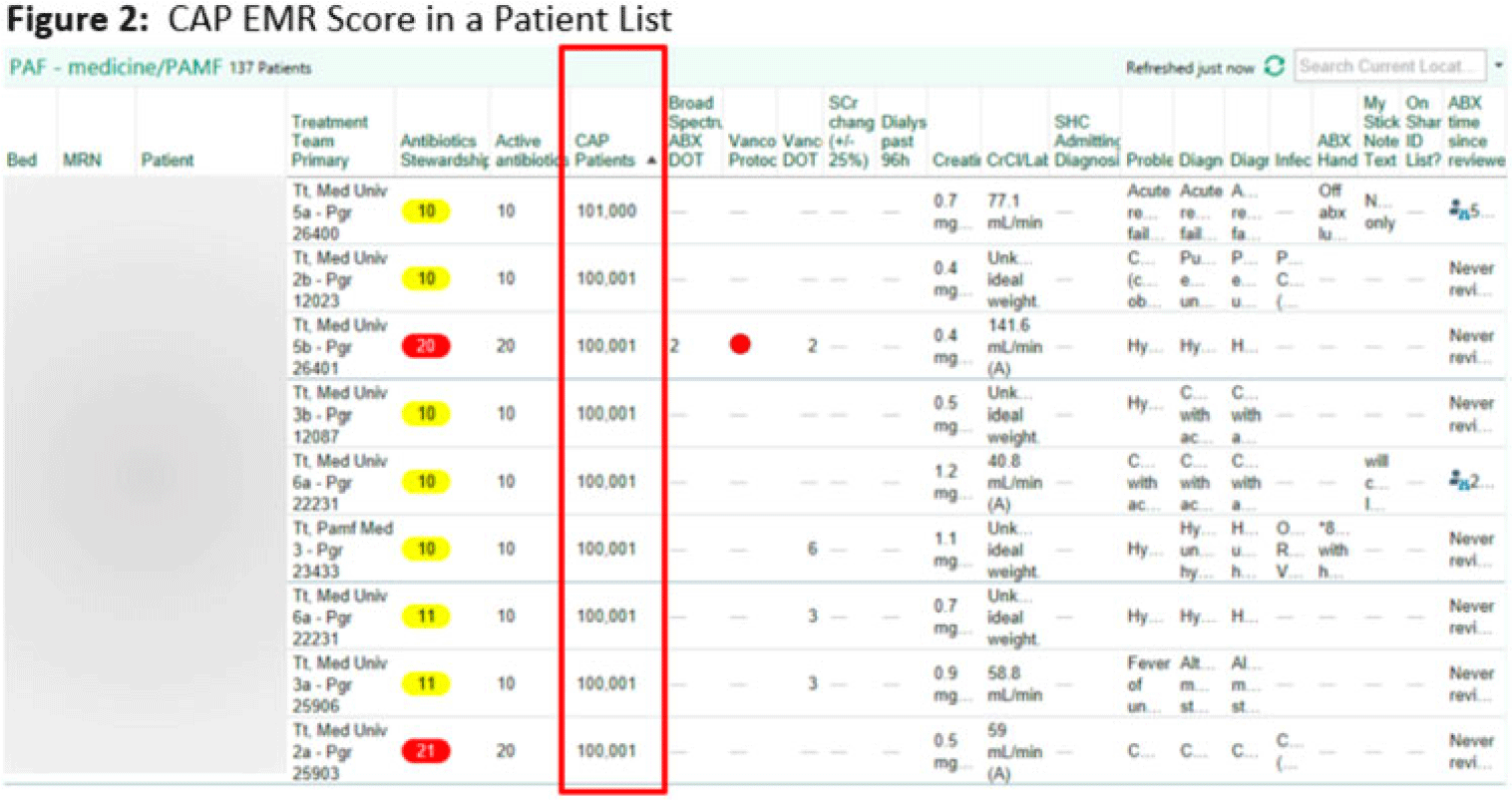

Electronic phenotyping of community-acquired pneumonia: A tool for inpatient syndrome-specific antimicrobial stewardship

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press:

- 29 September 2023, pp. s114-s115

-

- Article

-

- You have access

- Open access

- Export citation

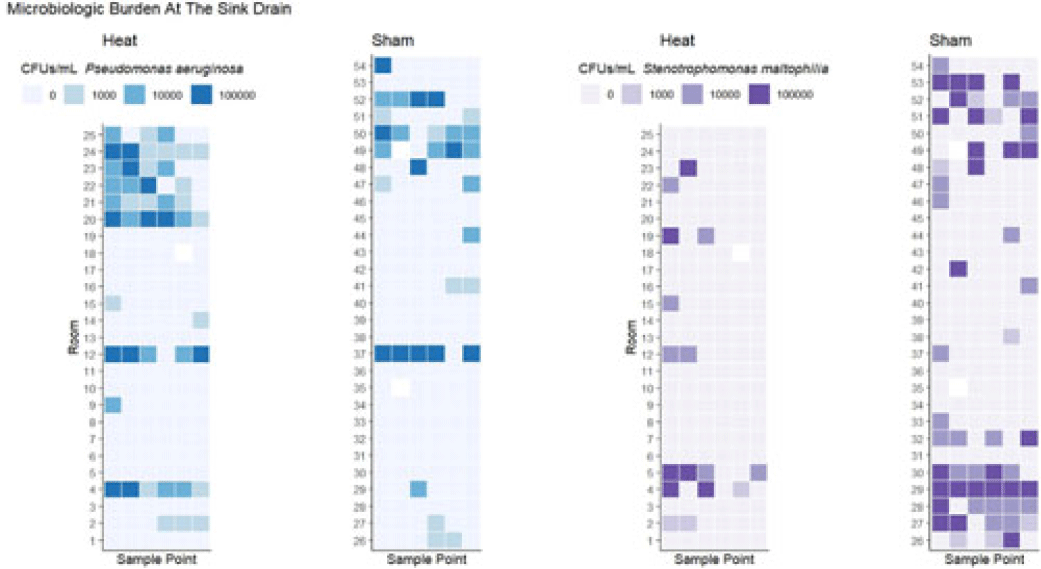

Some like it hot: Variable impact of a tailpiece heating device on different gram-negative bacteria

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press:

- 29 September 2023, p. s70

- Article

- Open access

Advocacy at the Eighth World Congress of Pediatric Cardiology and Cardiac Surgery

- Journal:

- Cardiology in the Young / Volume 33 / Issue 8 / August 2023

- Published online by Cambridge University Press:

- 24 August 2023, pp. 1277-1287

- Article

- Open access

Contents

- Book:

- National and Transnational Memories of the Kindertransport

- Published by:

- Boydell & Brewer

- Published online:

- 20 December 2023

- Print publication:

- 22 August 2023, pp. vii-viii

- Chapter

Introduction: Kindertransport Memory and Representation

- Book:

- National and Transnational Memories of the Kindertransport

- Published by:

- Boydell & Brewer

- Published online:

- 20 December 2023

- Print publication:

- 22 August 2023, pp. 1-16

- Chapter

List of Abbreviations

- Book:

- National and Transnational Memories of the Kindertransport

- Published by:

- Boydell & Brewer

- Published online:

- 20 December 2023

- Print publication:

- 22 August 2023, pp. xi-xii

- Chapter

Index

- Book:

- National and Transnational Memories of the Kindertransport

- Published by:

- Boydell & Brewer

- Published online:

- 20 December 2023

- Print publication:

- 22 August 2023, pp. 267-272

- Chapter

3 - Memories of the Kindertransport in Australia and New Zealand

- Book:

- National and Transnational Memories of the Kindertransport

- Published by:

- Boydell & Brewer

- Published online:

- 20 December 2023

- Print publication:

- 22 August 2023, pp. 134-184

- Chapter

Bibliography

- Book:

- National and Transnational Memories of the Kindertransport

- Published by:

- Boydell & Brewer

- Published online:

- 20 December 2023

- Print publication:

- 22 August 2023, pp. 251-266

- Chapter

National and Transnational Memories of the Kindertransport: Exhibitions, Memorials, and Commemorations

- Published by:

- Boydell & Brewer

- Published online:

- 20 December 2023

- Print publication:

- 22 August 2023

2 - American and Canadian Memories of the Kindertransport

- Book:

- National and Transnational Memories of the Kindertransport

- Published by:

- Boydell & Brewer

- Published online:

- 20 December 2023

- Print publication:

- 22 August 2023, pp. 80-133

- Chapter

Acknowledgments

- Book:

- National and Transnational Memories of the Kindertransport

- Published by:

- Boydell & Brewer

- Published online:

- 20 December 2023

- Print publication:

- 22 August 2023, pp. ix-x

- Chapter