Introduction

Participant recruitment is often the major rate-limiting step in the conduct of clinical trials and other health-related research [Reference Lovato1, Reference Ross2]. Electronic health records (EHRs) contain rich patient information and are a promising resource to facilitate recruitment activities, such as eligibility determination and engaging prospective participants [Reference Cowie3–Reference Weng5]. In recent years, there has been widespread adoption of EHRs in health care systems, hospitals, and clinical practices; nearly all hospitals had a certified EHR technology as of 2015 [Reference Henry6]. However, investigators face differing and sometimes conflicting institutional policies and practices for the use of EHRs, which can discourage collaboration and inhibit research.

The National Institutes of Health’s National Center for Advancing Translational Sciences funded a consortium of ~60 centers through the Clinical and Translational Science Award (CTSA) with a focus on interinstitutional collaboration to accelerate the initiation, recruitment, and reporting of multisite trials. The CTSA Consortium established a workgroup of trialists, regulatory specialists, informaticians, and others to describe policies and practices for the research use of EHRs. The workgroup conducted a survey of key individuals at CTSA-funded institutions to examine informatics tools employed across the Consortium and current practices in EHR-based cohort identification and research recruitment. Results from the survey were analyzed to elucidate the range of practices across the CTSA Consortium. From review of the literature, there is inadequate comparative data to firmly establish best practices; however, the workgroup developed a preliminary sense of practices that may help facilitate transparent research recruitment using EHRs while accommodating the interests of patients, clinicians, and health care systems.

Methods

Data Collection

The workgroup developed a survey (online Supplementary Material) to collect data on current and emerging EHR-based recruitment practices at all CTSA sites (some comprised more than 1 institution) with regard to institution-specific informatics practices and tools, and regulatory and workflow issues. The questionnaire was constructed in REDCap [Reference Harris7] and emailed to individual CTSA program directors by the CTSA coordinating center at Vanderbilt University. Four weeks were allowed for responses from March to April 2016. Directors were asked to consult with their informatics, regulatory, and recruitment cores, and to respond as a team as needed. The survey process was reviewed and determined exempt by the Institutional Review Board (IRB) at the University of North Carolina at Chapel Hill.

Using a combination of closed and open-ended questions, we queried participants regarding site-specific implementation of 7 common informatics methods:

1. Use of EHR patient portals to notify patients of research opportunities.
2. Use of electronic alerts to care providers about patients in clinic who meet eligibility requirements.
3. Use of electronic alerts to the research team about patients in clinic who meet eligibility requirements.
4. Access to data warehouse via a staff member/analyst.
5. Use of self-service tools to run de-identified queries.
6. Use of business intelligence tools to give research teams direct query access.
7. Use of EHRs to build registries to aid in recruitment.

For each method, participants were asked to assess the level of implementation at their institutions using a 5-level Likert-type scale, which included options ranging from no plans to implement such a tool, to fully operational implementations. An option was provided for not sure or not applicable.

Participants were also asked to estimate the level of demand for each method by researchers at their institution using a 6-level Likert-type scale with options ranging from never, to rarely, to very frequently. Here again an option was provided for not sure or not applicable.

To explore regulatory and workflow processes, we posed a hypothetical case involving a study of type 2 diabetes (online Supplementary Material). The local principal investigator encounters multiple steps in order to use EHR data to aid in recruitment: regulatory review and approval, engagement of informatics staff to generate a list of potential participants by translating inclusion/exclusion criteria into a database query, and finally the process of reaching out to patients or their providers to determine if patients are interested in study participation. Based on this hypothetical scenario, we posed several questions to survey participants about the regulatory and workflow processes at their institutions.
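As an illustration of the step in which informatics staff translate inclusion/exclusion criteria into a database query, the following is a minimal sketch only. The table layout, column names, HbA1c threshold, age cutoff, and opt-out flag are all hypothetical and are not taken from the survey scenario; an actual data warehouse schema and study protocol would differ. The only external convention used is that ICD-10-CM codes beginning with E11 denote type 2 diabetes mellitus.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create a tiny in-memory stand-in for a research data warehouse.

    Every table, column, and row here is invented for illustration.
    """
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE patients  (patient_id INTEGER PRIMARY KEY,
                                age INTEGER, opted_out INTEGER);
        CREATE TABLE diagnoses (patient_id INTEGER, icd10 TEXT);
        CREATE TABLE labs      (patient_id INTEGER, test TEXT, value REAL);
        """
    )
    conn.executemany(
        "INSERT INTO patients VALUES (?, ?, ?)",
        [(1, 54, 0), (2, 61, 1), (3, 17, 0), (4, 47, 0)],
    )
    conn.executemany(
        "INSERT INTO diagnoses VALUES (?, ?)",
        [(1, "E11.9"), (2, "E11.65"), (3, "E11.9"), (4, "I10")],
    )
    conn.executemany(
        "INSERT INTO labs VALUES (?, ?, ?)",
        [(1, "HbA1c", 8.1), (2, "HbA1c", 9.0), (3, "HbA1c", 7.5), (4, "HbA1c", 5.4)],
    )
    return conn


# Hypothetical criteria expressed as SQL:
#   inclusion: type 2 diabetes diagnosis (ICD-10-CM E11.*), adult, HbA1c >= 7.0
#   exclusion: patient has opted out of research contact
COHORT_QUERY = """
SELECT DISTINCT p.patient_id
FROM patients p
JOIN diagnoses d ON d.patient_id = p.patient_id
JOIN labs l      ON l.patient_id = p.patient_id
WHERE d.icd10 LIKE 'E11%'
  AND p.age >= 18
  AND l.test = 'HbA1c' AND l.value >= 7.0
  AND p.opted_out = 0
ORDER BY p.patient_id
"""


def find_candidates(conn: sqlite3.Connection) -> list[int]:
    """Return IDs of patients who satisfy the illustrative criteria."""
    return [row[0] for row in conn.execute(COHORT_QUERY)]


if __name__ == "__main__":
    print(find_candidates(build_demo_db()))
```

In this toy data set only one patient satisfies every criterion; the others are excluded by the opt-out flag, by age, or by lacking a qualifying diagnosis. In practice the resulting list would then pass through whatever contact procedures the institution allows.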

Data Analysis

Data were exported from REDCap and analyzed. Quantitative statistics were generated for structured answers using Microsoft Excel. Free text responses were analyzed using QSR International’s NVivo qualitative data analysis software.
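The quantitative summaries in this study were produced in Microsoft Excel. Purely as an illustration of the kind of tabulation involved, the sketch below shows one way that implementation-level responses could be converted to percentages and combined into an "initially or fully implemented" share. It is not the authors' procedure: the level labels are inferred from the wording of the Results, the example responses are invented, and excluding "not sure / not applicable" answers from the denominator is an assumption.

```python
from collections import Counter

# Level names are inferred from the Results text; the exact labels in the
# survey instrument (see supplementary material) may differ.
LEVELS = [
    "no plans",
    "exploring",
    "planning",
    "initial implementation",
    "fully operational",
]
IMPLEMENTED = {"initial implementation", "fully operational"}


def summarize_implementation(responses):
    """Return the percentage per level and the combined implemented share.

    Assumption: answers outside LEVELS (e.g. "not sure") are dropped from
    the denominator. The source does not state how these were handled.
    """
    rated = [r for r in responses if r in LEVELS]
    if not rated:
        return {"percent_by_level": {}, "implemented_percent": None}
    counts = Counter(rated)
    total = len(rated)
    percent_by_level = {
        level: round(100 * counts[level] / total, 1) for level in LEVELS
    }
    implemented = sum(counts[level] for level in IMPLEMENTED)
    return {
        "percent_by_level": percent_by_level,
        "implemented_percent": round(100 * implemented / total, 1),
    }


if __name__ == "__main__":
    # Invented responses for one method, not survey data.
    demo = (
        ["no plans"] * 2
        + ["exploring"] * 3
        + ["planning"] * 2
        + ["initial implementation"] * 2
        + ["fully operational"]
        + ["not sure"]
    )
    print(summarize_implementation(demo))
```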

Results

Participant/EHR Characteristics

There were 64 responses in the survey representing over 98% of the CTSA Consortium; 122 individuals contributed to the responses. The largest group of respondents (48%) self-identified as informatics specialists while 16% did so as recruitment specialists, 11% as regulatory specialists, and 25% as other.

When asked what EHR program their institution/hospital uses, 42 respondents said Epic, 13 said Cerner, and 3 said they use a homegrown system. Fourteen indicated “other” (including most commonly Allscripts and Centricity), with 1 of these reporting they were in the planning stages of identifying a system. Five CTSAs reported using 2 or more EHR systems.

Methods of EHR-Based Recruitment

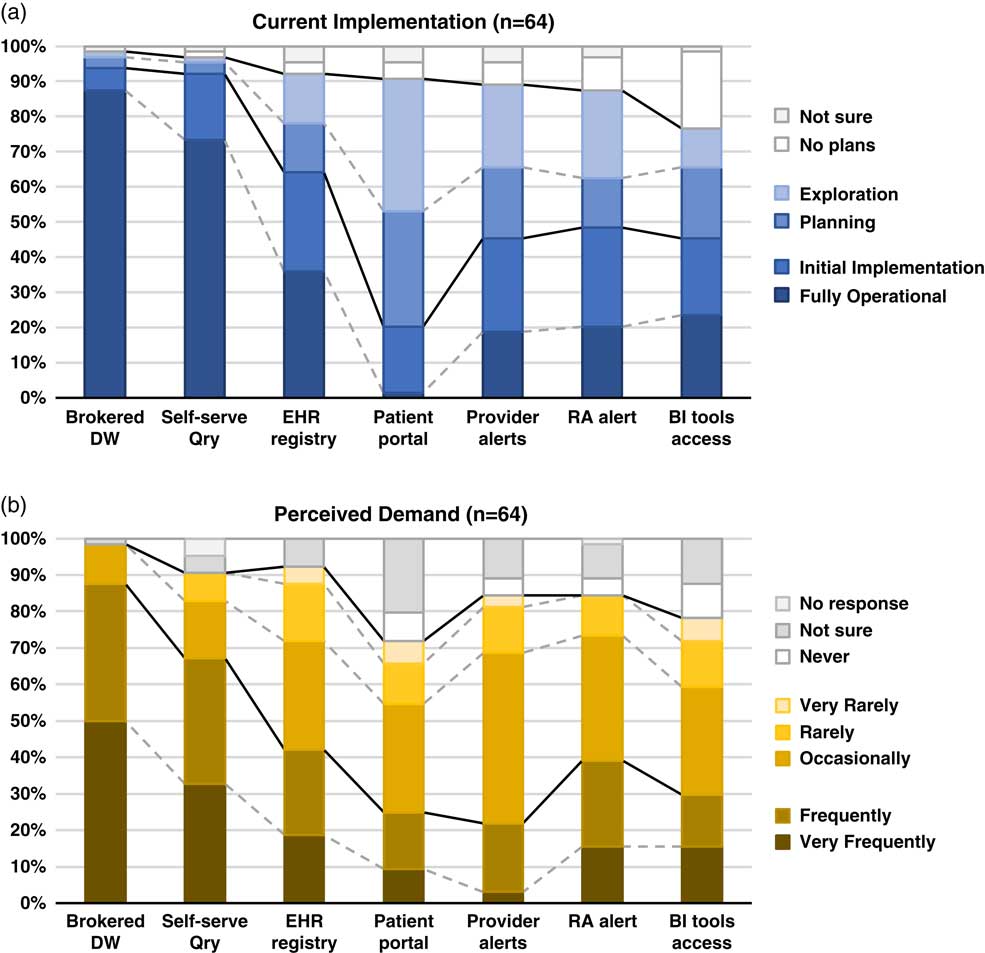

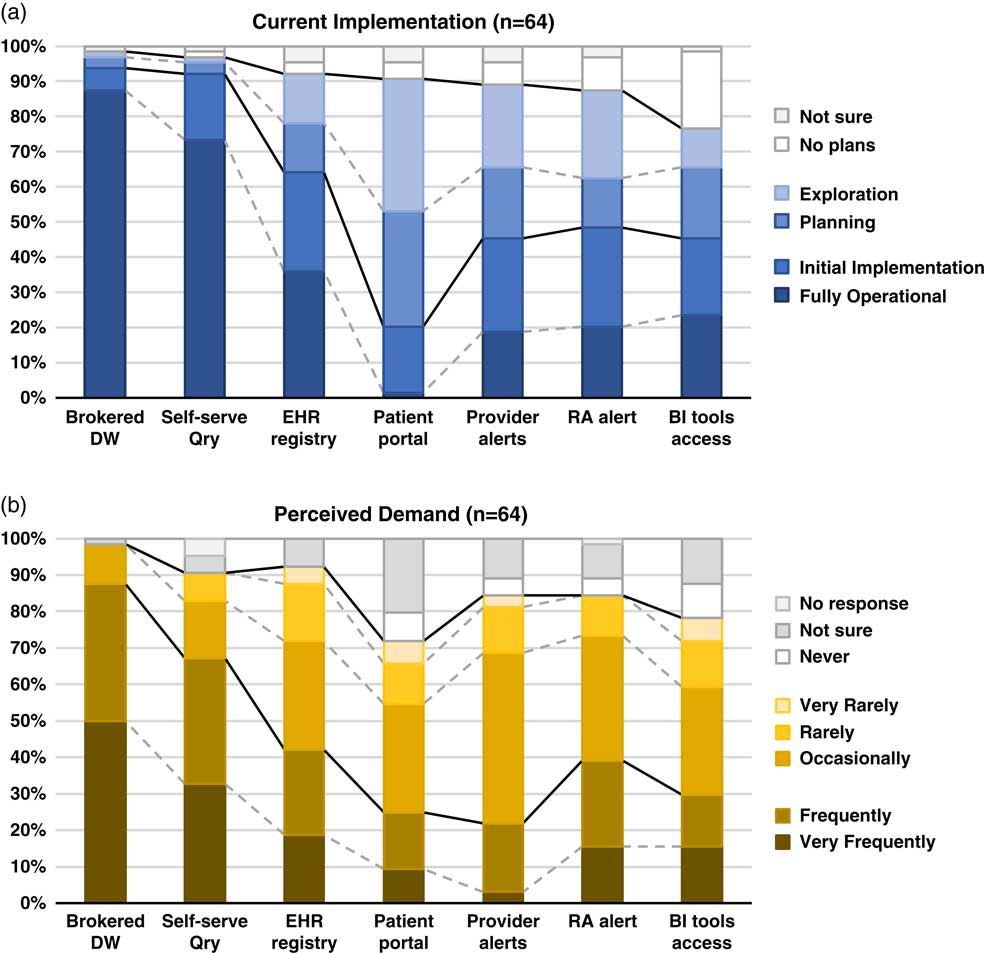

The use of patient portals (e.g., Epic MyChart) to notify patients about research opportunities was reported as initially implemented or fully operational at only 20% of responding institutions; however, 70% were either exploring or planning the use of such tools (Table 1). Demand for these tools was reported as frequent or very frequent by 25% of respondents, and another 30% described it as “occasional” (see Fig. 1).

Fig. 1 Summary of responses to questions regarding methods of electronic health records (EHR)-based cohort identification and recruitment. (a) Current implementation. (b) Perceived demand. Brokered data warehouse (DW), access to data warehouse by staff members; Self-serve Qry, use of self-service tools to run de-identified queries; EHR registry, use of EHRs to build patient lists to aid in recruitment; Patient portal, use of EHR patient portals to notify patients of research opportunities; Provider alerts, use of electronic alerts to care providers of patients in clinic meeting eligibility requirements; Research associate (RA) alert, use of electronic alerts to the research team if patients in clinic meet eligibility requirements; Business intelligence (BI) tools access, researchers given direct query access to data warehouse through business intelligence tools.

Table 1 Responses to questions regarding current implementation of methods of electronic health records (EHR)-based cohort identification and recruitment

Patient portal, use of EHR patient portals to notify patients of research opportunities; Provider alerts, use of electronic alerts to care providers of patients in clinic meeting eligibility requirements; Research team alerts, use of electronic alerts to the research team if patients in clinic meet eligibility requirements; Brokered data warehouse, access to data warehouse by staff members; Self-service query, use of self-service tools to run de-identified queries; Business intelligence tools, researchers given direct query access to data warehouse through business intelligence tools; EHR registry, use of EHRs to build patient lists to aid in recruitment.

EHR alerts to care providers about patients in clinic meeting eligibility criteria were reported as in exploratory or planning stages by 44% and initially or fully implemented by 45% of respondents. Demand for these tools was reported as frequent or very frequent by 22% and “occasional” by nearly half (47%) of the respondents. Similarly, EHR alerts addressed to research teams, when an eligible patient is scheduled or attends a clinic visit, were described as exploratory or planning by 39% and some form of implementation at 48%. However, reported demand for this method was notably higher, with 39% describing it as frequent/very frequent.

With regard to access to EHR data via a data warehouse, most institutions (94%) said they were in initial implementation of, or had fully operational processes for providing access via a staff member or analyst (Table 1). This high level of implementation was consistent with perceived demand for such services; 88% of respondents described demand as frequent/very frequent. Similarly, most institutions (92%) said they had initially or fully implemented self-service tools (e.g., i2b2 [Reference Murphy8]), allowing researchers to run queries on de-identified aggregate data. Demand was also high, with two-thirds describing it as frequent/very frequent. Use of off-the-shelf business intelligence tools that allow researchers to run more complex queries on EHR data was much less prevalent with 45% reporting initial or full implementation. Perceived demand for these tools was mixed, with 30% reporting it as frequent or very frequent.

Finally, nearly two-thirds of institutions (64%) had initially or fully implemented the use of EHR data to build registries to aid in recruitment (Table 1). Demand for this approach was strong, with 30% describing it as occasional and 42% as frequent/very frequent.

When asked whether there were other EHR-related approaches they had considered, piloted, or implemented to facilitate research recruitment, 50 respondents (78%) said yes. Among those who provided additional details, common elements included:

- National networks of data sharing, either industry based (such as TriNetX® or Cerner PowerTrials) or funded programs such as the PCORnet and SHRINE-based networks.
- Vendor-based or homegrown tools to assist in matching patients with clinical trials eligibility criteria.
- Other outreach, for example, asking patients directly for permission to be contacted for recruitment purposes (including both opt-in and opt-out approaches); the use of patient portals and Web sites to allow patients to indicate preferred method of contact; the use of direct mail, electronic communication (email, text, apps), and phone; point of patient registration or clinic visit procedures; and community-based efforts.

Nearly three-fourths (72%) of respondents said they gather metrics or other evidence of the impact of EHR-based recruitment. Among those who provided additional details, descriptions fell into 3 broad categories: (1) system utilization measures, such as numbers of hits, requests, or logons; (2) recruitment measures, such as numbers of patients who were identified as potentially eligible, responded after being contacted, screened and/or enrolled; and (3) other user-related measures, such as the numbers of grants, publications, or the results of surveys and gathered anecdotes.

Workflow and Regulatory Process for Cohort Recruitment

When asked, “Does your institution have 1 or more established workflow processes for cohort recruitment into clinical research which leverages the power of EHR to identify large numbers?”, nearly two-thirds (64%) said yes, and another 22% said they were piloting such programs. Given the option to provide additional details, respondents described a variety of workflow elements, such as regulatory and related review/approval processes, including the use of committees that review requests; informatics tools and processes (e.g., i2b2/SHRINE, ACT, tools that link EHRs to CTMS or REDCap); data sources (e.g., databases, registries, data warehouses); tools and processes to assess eligibility; and tools and processes associated with initiating patient contact, including use of the MyChart portal for recruitment purposes. They also described general processes and approaches, including the use of dedicated/specialized teams and services (such as honest brokers, data analysts, and recruitment specialists); self-service approaches; and standard operating procedures on issues like direct mail recruitment, guidebooks, and consultations to improve recruitment letters. Examples of specific processes and tools respondents mentioned included:

- A listing of patients who can be contacted after committee approval (as opposed to having to go through their direct provider).
- An automated interface that streamlines the load of EHR data into REDCap; template implementation for data extraction to make the process easier and more efficient for analysts.
- Using patient portals and tablets to engage volunteers to opt in to research, creating a flag in the EHR to allow subsequent identification.

Nearly one-third (30%) of respondents reported substantive restrictions on workflow processes, beyond regulatory reviews and approvals. These often included logistical constraints, such as limited resources (e.g., time, staff) to carry out recruitment processes, and the need for more training and awareness regarding the tools and systems available. In addition, respondents often described limitations related to collaborations and data sharing, such as intrainstitutional challenges (e.g., sharing data between the university and hospital); the need to identify a collaborator at the institution; and, with regard to multisite studies, additional steps such as contracting, data harmonization, network review panels and governance, and the need for IRB approval and a local principal investigator at each site. Somewhat less commonly, respondents noted:

- Challenges associated with the nature of the study and/or data, such as studies that require chart review, study topics that are seen to be sensitive, and data availability in complex cases.
- Additional regulatory requirements, such as compliance review of data security plans, and the need for data use agreements.
- Challenges in the process of contacting patients, such as required provider involvement, creating burden for the provider as well as delays for the researcher, and the restrictions on the number of times a patient can be contacted for recruitment purposes.

A similar number of respondents (30%) reported that alternative approaches to regulatory and workflow processes had been implemented or piloted at their institution. Among those who offered additional details, descriptions commonly included workflow elements, such as:

- Regulatory and related review/approval processes, for example enabling the informatics program to sign off on compliance/security issues for studies that meet criteria for routine data uses, and the use of a centralized or delegated IRB.
- Informatics tools and processes, such as building a library of queries and computational phenotypes to streamline queries and enhance consistency across defined diseases.
- Data sources, such as use of an external registry linked to EHRs of enrolled subjects.
- Tools and processes to assess eligibility, for instance the informatics and participant core working together using honest brokers to develop lists of patients meeting inclusion/exclusion criteria.
- Tools and processes associated with initiating patient contact, such as the expanded use of EHRs to engage patients in recruitment through MyChart.

Many also described general processes and approaches, such as the use of dedicated teams and services (e.g., a recruitment team that contacts providers on behalf of the study to obtain approval to contact the patient, and creation of a Participant Recruitment Center); the use of self-service tools that have built-in honest broker capability; and workflows tailored to the type of project, data needs, and sources.

Finally, over one-third (36%) of respondents said they had additional insights to offer on using EHRs to facilitate research recruitment that might be useful. Workflow elements were commonly mentioned, including:

- Regulatory and related review/approval processes, such as ensuring that HIPAA waivers for permission to contact for research recruitment become a standard part of the patient intake workflow; minimizing the time for regulatory review by the use of standard data use agreements developed in collaboration with the IRB and legal office; use of data review committees to relieve the burden of regulatory compliance assessment on the honest broker.
- Informatics tools and processes, such as the importance of evaluating data quality and provenance for each query; strict segregation of identified versus de-identified data in data warehouse; use of open standards, non-proprietary software, and data extraction tools designed by programmers who are trained in statistics, and closer collaboration with academic informaticians and software engineers.
- Data sources, such as addressing the physical, technological, and cultural separation between the operational and research sides of the institution; integrating EHRs from other clinical partners (with different EHR platforms) into data warehouse; tools and processes to assess eligibility, including the necessity of allowing flexibility by working in “what if” mode, and dialog with investigators to help them clarify their ideas.
- Tools and processes for initiating patient contact, including: obtaining consent from patients for direct researcher contact in the future (including mention of a regional registry built by having admission clerks ask about willingness to be contacted); an automated algorithm to identify prospective participants de-identified to researchers, allowing a message to go directly to patients without releasing PHI to the investigator; the use of new technologies such as ResearchKit to aid in recruitment; development of templates and guidance for how to initiate contact; collection of data on satisfaction among patients who receive research invitations.

Respondents also offered insights on general processes and approaches, such as:

- The use of dedicated teams and services (e.g., offering a suite of recruitment services with a mix of technology and human-supported options), and the importance of having “people available who have a good understanding of the tools, clinical contexts, etc. to help users develop reproducible and trustworthy best practices for obtaining and using data for research”.
- The importance of creating a business model that justifies institutional support.
- Other ideas, such as leveraging PCORnet experiences and recognition that communication, trust, effective process, and data sharing agreements are the key to working with community partners in collaborative clinical and translational research.

Recruitment Practices

Once a researcher receives a list of potential research participants, respondents reported a wide range of recruitment practices allowed at their institution (Table 2). Practices involving an intermediary were allowed at over half of institutions, including approaching potential participants in clinic who were previously identified (55%) and contact only after introduction by the care provider or clinic (53%). Direct approaches were less commonly allowed; for example, a letter sent from the researcher explaining how s/he got the potential participant’s name (34%).

Table 2 Distribution of responses to the question “Once the researcher receives the list of participants, what recruitment practices are allowed, with IRB approval?”*

EHR, electronic health records; IRB, Institutional Review Board; PCP, primary care provider.

* Respondents were instructed to identify all the practices that were allowed at their institution.

Twenty-three percent of respondents indicated “other” recruitment practices, many of whom offered details on provider involvement, such as making initial contact to introduce the study or obtain consent for researcher contact, providing a signed letter for researchers to use to make initial contact, or providing approval to make contact. Many also noted that their recruitment practices were study specific, for example, depending on whether the study topic is considered sensitive or on specific IRB approvals. A few described recruitment processes carried out by others, such as recruitment specialists.

Variations on the Hypothetical Scenario

When asked whether workflow processes would substantially differ if the research involved a rare disease, cancer, or pediatrics, only a minority of participants said yes. One respondent indicated that, for a rare disease, they would extend the search to include patients from other medical centers within their larger academic system. For a cancer study, a few (8%) respondents identified additional review and approval processes (e.g., cancer center specific processes) and the use of cancer registries for recruitment. About one-fifth (19%) of respondents noted substantial differences for pediatric studies, based on separation between health care entities focused on children versus adults, initial contact with surrogates (e.g., parents, guardians), and separate IRBs.

Discussion

There is widespread recognition of the nascent promise of EHRs to facilitate cohort identification and research recruitment [Reference Cowie3, Reference Coorevits4]. Our survey results suggest significant activity in this arena at CTSA institutions, with the level of implementation across various practices and tools ranging from exploratory to fully operational. In nearly every case, reported demand for these practices and tools exceeded implementation (Fig. 1). Regulatory and workflow processes were similarly varied, and a substantive proportion of survey respondents described restrictions on the use of EHR data for recruitment purposes arising from logistical constraints and limitations on collaboration and data sharing. These findings highlight the need for further implementation and evaluation research—including comparative research—to help identify best practices that efficiently and effectively meet the needs of patients, providers, and researchers.

Studies thus far have generally indicated public support for research use of EHRs [Reference Damschroder9–Reference Grande11], albeit with some potential concerns about the use of sensitive information [Reference Caine and Hanania12]. EHR patient portals are one possible tool to enhance patients’ awareness of research opportunities and perhaps offer some level of control. Even so, only a minority of institutions responding to our survey had implemented the use of EHR patient portals for research purposes. In addition, there continue to be disparities in individuals’ access to and use of EHRs and PHRs, particularly among certain socio-demographic groups [Reference Patel, Barker and Siminerio13]; however, this is improving and there is some evidence that underrepresented groups are just as amenable to recruitment via such approaches [Reference Bower14].

With regard to electronic alerts to care providers about patients’ eligibility and recruitment for research, our survey found what could arguably be described as a moderate level of both implementation and demand. Embi et al. described an approach to research decision support at the point of care referred to as a “clinical trial alert” that demonstrated an 8-fold increase in physician-generated referrals to studies and a doubling of enrollment [Reference Embi15]. Rollman et al. [Reference Rollman16] compared similar electronic physician prompts to waiting room case finding, and found that physicians referred a smaller number of patients (compared to the number approached by waiting room recruiters), but a substantially higher proportion of them met inclusion criteria and enrolled. There were also significant demographic and clinical differences between subjects enrolled via the 2 methods. However, declining responsiveness to alerts (or “alert fatigue”) is a well-recognized concern [Reference Embi and Leonard17]. Embi et al. [Reference Embi, Jain and Harris18] examined physician perceptions of a clinical trials alert system for subject recruitment. Although 77% of physician respondents appreciated being reminded about the trial, a similar majority stated that they dismissed the alerts sometimes (54%) or every time (25%). Among those who ignored all of the alerts, common reasons included lack of time, knowledge of patients’ ineligibility, and limited knowledge of the trial. Compared to alerts targeting providers, our survey respondents reported a higher demand for alerts to the research team, and published reports [Reference Weng19–Reference Thadani21] provide preliminary indication that these may help increase the efficiency of the recruitment process. 
Unlike providers, who often lack the time and incentive to act on recruitment alerts, clinical research staff are highly motivated to receive them and often seek to create such alerts with the help of the IT team. In the study reported by Thadani et al., creating recruitment alerts for research coordinators reduced the manual chart review effort by 90% for the ACCORD study.

Data warehouses with self-service query tools had been initially or fully implemented at nearly all of our responding institutions and were described as the subject of frequent demand. Our results suggest that business intelligence tools have less often been implemented, but the benefits of using advanced analytics to build complex queries across multiple data sets from disparate sources have been demonstrated in several contexts [Reference Fihn22–Reference Narus25].

Finally, approaches involving direct patient engagement, for example building registries of patients who have agreed to be contacted, had been initially or fully implemented at a majority of our responding institutions. Positive experiences with these approaches have been described in the literature [Reference Kluding26–Reference Tan29].

Our results suggest it may be common and essential practice for an institution to offer a suite of recruitment services comprising a mixture of the above approaches, including both technology and human-supported options. Many institutions have self-service tools and also provide support for investigators with data analysts and recruitment specialists who together develop efficient queries to support recruitment or gather data. Investigator dialog with an analyst is often necessary to help clarify ideas and refine selection criteria for a study population. Table 3 highlights some of our findings. Greater experience and careful evaluation over time will provide insights into optimizing services that enhance sensitivity and specificity of recruitment strategies.

Table 3 Lessons learned

EHR, electronic health records.

In all cases, research recruitment must take place within well-established principles for ethically responsible research. Even so, it is important to distinguish between risks associated with identifying and contacting individuals about their interest in research participation, and risks associated with actually participating in research [Reference Beskow30]. Survey respondents reported wide variation in recruitment practices allowed at their institution, including, for example, whether investigators are allowed to contact prospective participants directly or only after introduction by the healthcare provider. The concept of “physician-as-gatekeeper” has been the subject of empirical study [Reference Beskow31–Reference Beskow33] and ethical analysis [Reference Beskow30, Reference Sharkey34], and is a topic ripe for focused effort and debate to identify and justify best practice guidelines.

This study has a few limitations. First, while we present prevailing practices, we are not able to provide strong recommendations for best practices due to lack of evidence from comparative effectiveness research on EHR-based recruitment methods. In addition, there is a need for a consensus-based approach for adaptation of metrics from the practice of clinical research (e.g., accrual index [Reference Corregano35]) or development of metrics to measure the efficiency of different EHR-based recruitment approaches.

The lack of a sufficient body of comparative research to provide an evidence base for the application of these technologies limits our ability to make specific recommendations. Nonetheless, we believe our survey results provide a useful sense of the spectrum of activities and approaches being employed and frequent implementation considerations associated with different approaches. Moreover, our survey examined practices only at academically-based health care systems with relatively large federally-funded research portfolios. Furthermore, the results are based on the perceptions of a limited number of stakeholders at each institution. Perhaps most importantly, these data reflect a snapshot from the spring of 2016 that will need to be updated as the field evolves. Future studies should focus on the costs, yield, patient and provider acceptability, and study retention achieved with various approaches in order to drive the development and adoption of innovative best practices that both protect patients and facilitate interinstitutional collaboration and multisite research.

Acknowledgments

The authors thank the workgroup members listed in the online Supplementary Material for helpful discussion and input. The authors also thank Ms. Bridget Swindell and Jodie Jackson of Vanderbilt University for supporting the effort. The results would not have been possible without the support and effort of hundreds of individuals working at each of the CTSA institutions who participated in the survey.

This project has been funded in whole or in part with Federal funds from the National Center for Research Resources and National Center for Advancing Translational Sciences (NCATS), National Institutes of Health (NIH), through the Clinical and Translational Science Awards Program, grant numbers: UL1TR001073, UL1TR001430, UL1TR000439, UL1TR001876, UL1TR001873, UL1TR001086, UL1TR001117, UL1TR000454, UL1TR001409, UL1TR001102, UL1TR001433, UL1TR001108, UL1TR001079, UL1TR000135, UL1TR001436, UL1TR001450, UL1TR001445, UL1TR001422, UL1TR002369, UL1TR002014, UL1TR001866, UL1TR001114, UL1TR001085, UL1TR001070, UL1TR001064, UL1TR001417, UL1TR000039, UL1TR001412, UL1TR001860, UL1TR001414, UL1TR001881, UL1TR001442, UL1TR001872, UL1TR000430, UL1TR001425, UL1TR001082, UL1TR001427, UL1TR002003, U54TR001356, UL1TR000001, UL1TR001998, UL1TR001453, UL1TR000460, UL1TR000433, UL1TR000114, UL1TR001449, UL1TR001111, UL1TR001878, UL1TR001857, UL1TR002001, UL1TR001855, UL1TR000371, UL1TR001120, UL1TR001439, UL1TR001105, UL1TR001067, UL1TR000423, UL1TR000427, UL1TR000445, UL1TR000058, UL1TR001420, UL1TR000448, UL1TR000457, UL1TR001863, and U54 TR000123.

Disclosures

The authors have no conflicts of interest to declare.

Supplementary material

To view supplementary material for this article, please visit https://doi.org/10.1017/cts.2017.301