Potential underreporting of treated patients using a Clostridioides difficile testing algorithm that screens with a nucleic acid amplification test

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 5 / May 2024
- Published online by Cambridge University Press: 25 January 2024, pp. 590-598
- Print publication: May 2024
- Article
- Open access

Quantifying racial disparities in risk of invasive Staphylococcus aureus infection in Metropolitan Atlanta, Georgia, during the 2020–2021 coronavirus disease 2019 (COVID-19) pandemic

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 4 / April 2024
- Published online by Cambridge University Press: 27 December 2023, pp. 534-536
- Print publication: April 2024
- Article
- Open access

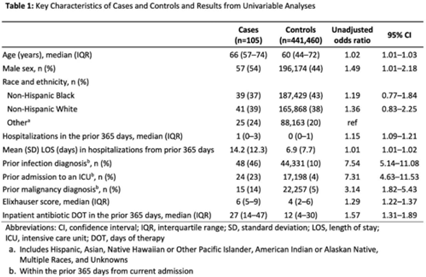

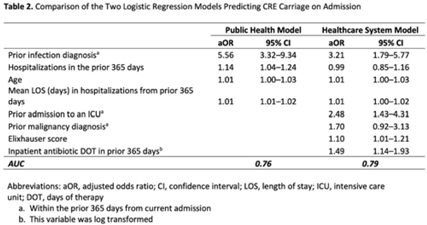

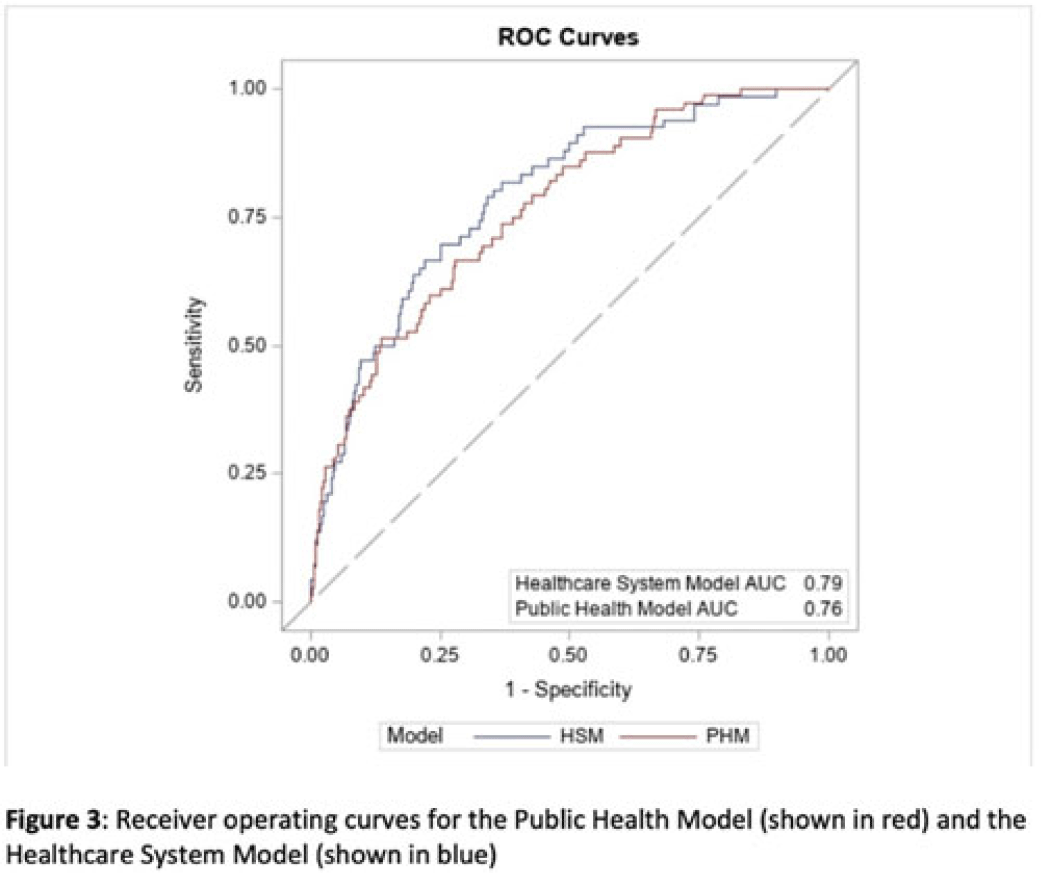

Identifying patients at high risk for carbapenem-resistant Enterobacterales carriage upon admission to acute-care hospitals

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023
- Published online by Cambridge University Press: 29 September 2023, pp. s82-s83
- Article
- Open access

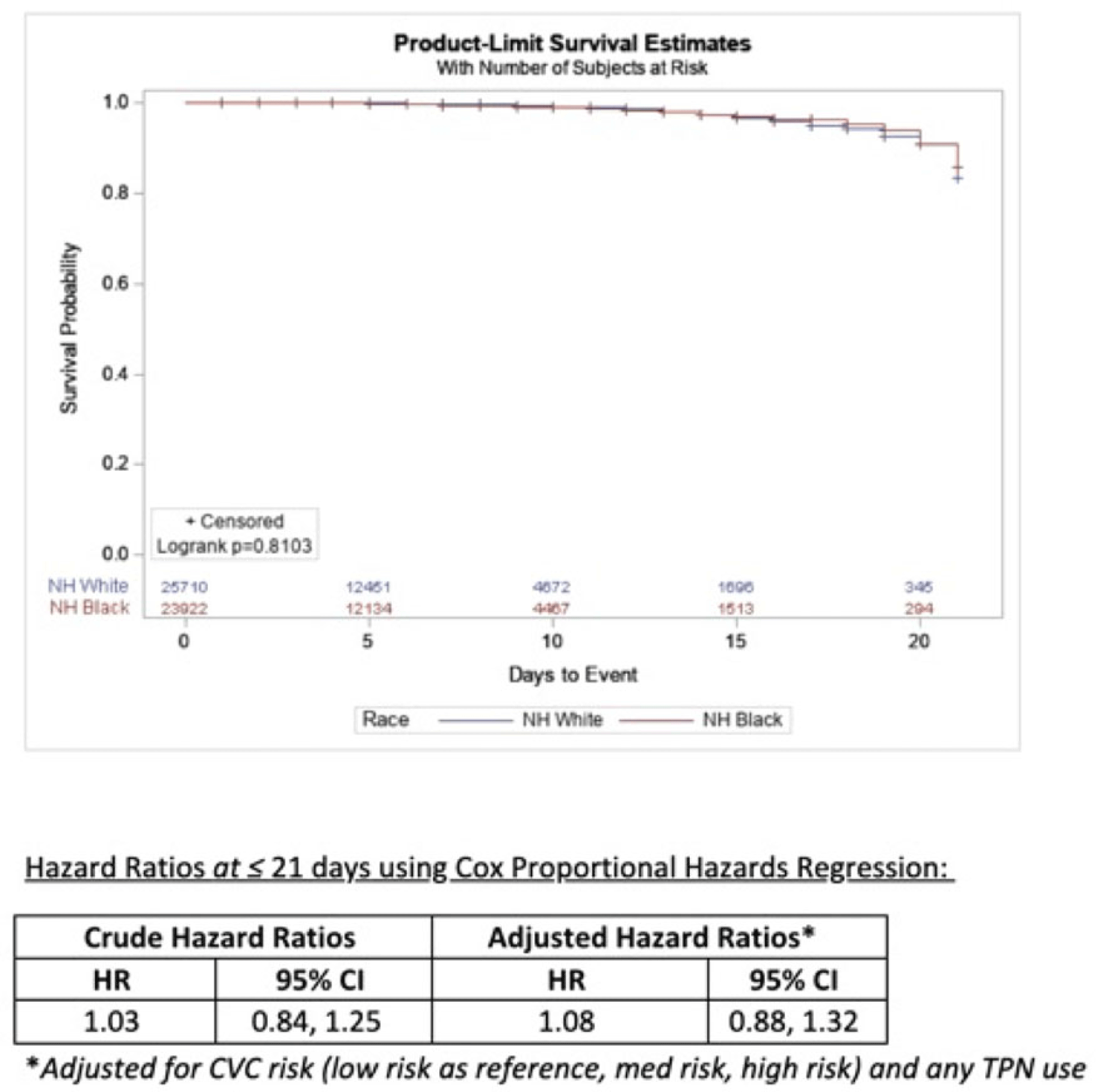

Estimating racial differences in risk for CLABSI in a large urban healthcare system

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023
- Published online by Cambridge University Press: 29 September 2023, pp. s50-s51
- Article
- Open access

Determinants of Clostridioides difficile infection (CDI) testing practices among inpatients with diarrhea at selected acute-care hospitals in Rochester, New York, and Atlanta, Georgia, 2020–2021

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 7 / July 2023
- Published online by Cambridge University Press: 14 September 2022, pp. 1085-1092
- Print publication: July 2023
- Article
- Open access

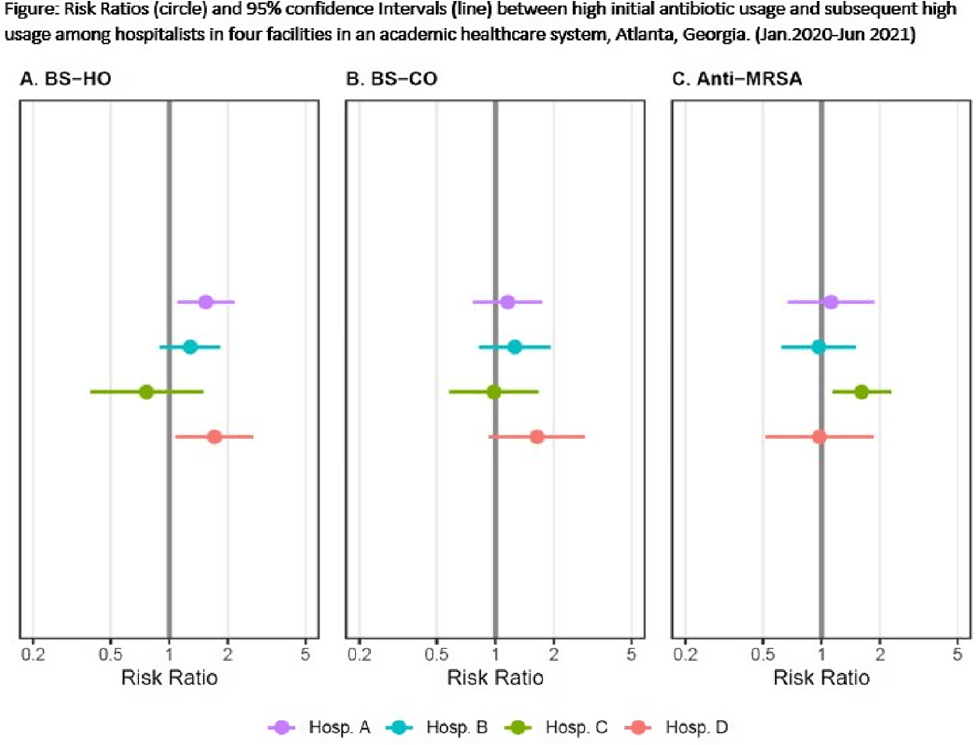

Do hospitalists who prescribe high (risk-adjusted) rates of antibiotics do so repeatedly?

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022
- Published online by Cambridge University Press: 16 May 2022, p. s2
- Article
- Open access

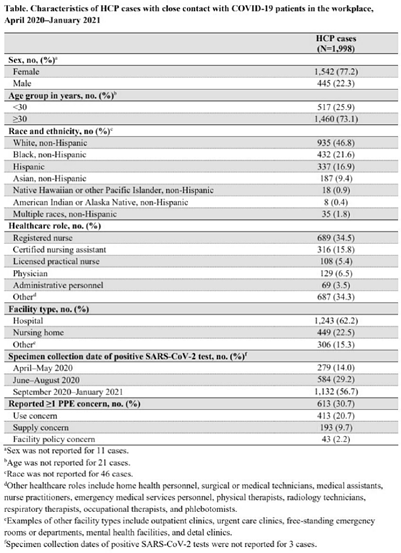

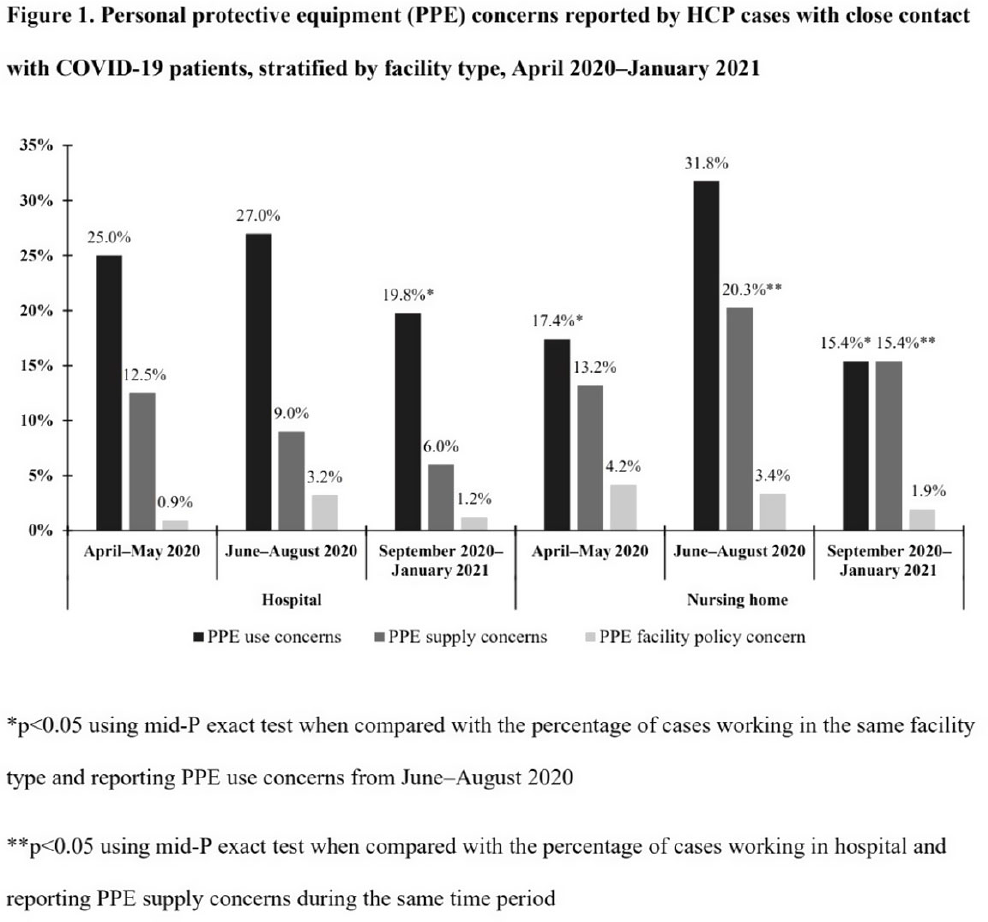

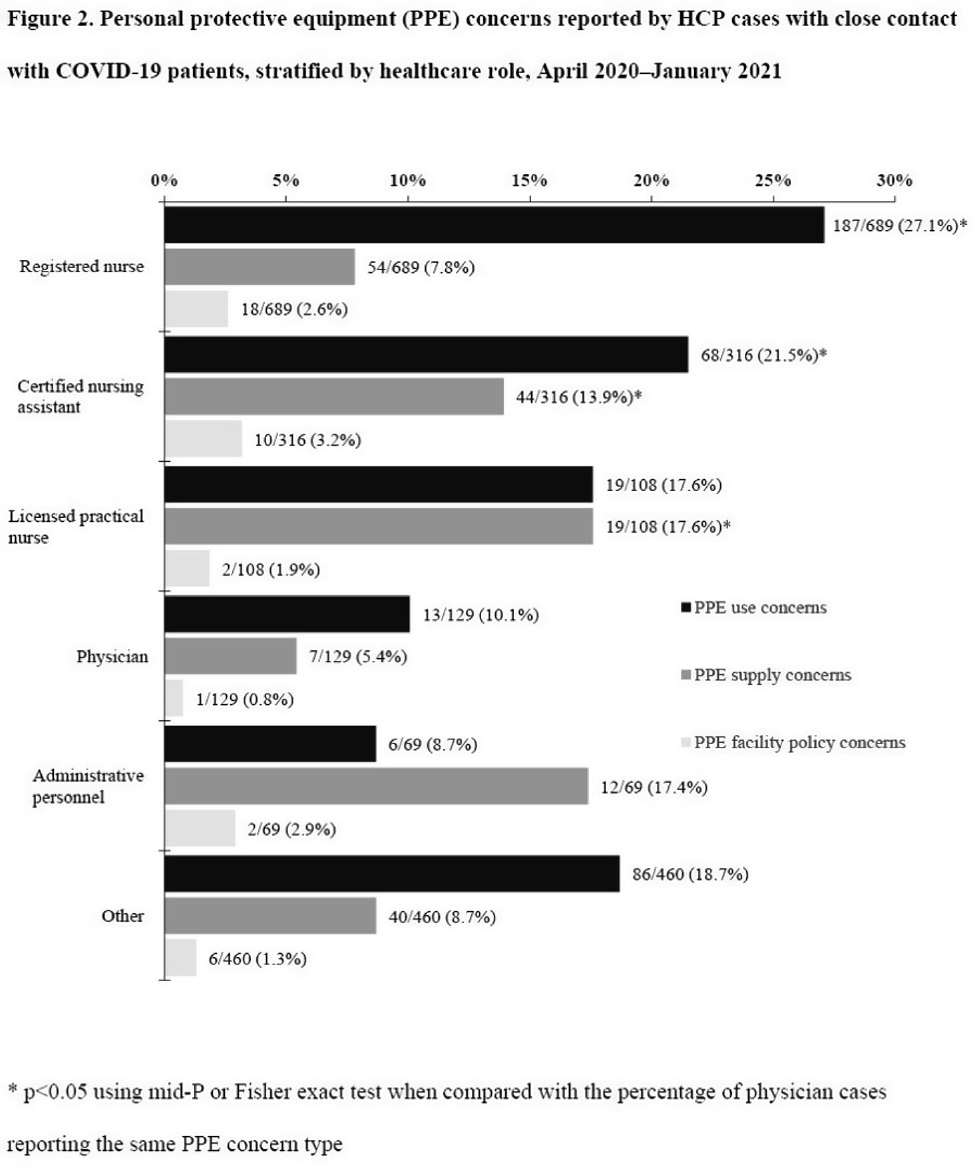

Characteristics of healthcare personnel who reported concerns related to PPE use during care of COVID-19 patients

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022
- Published online by Cambridge University Press: 16 May 2022, pp. s8-s9
- Article
- Open access

Which nursing home workers were at highest risk for SARS-CoV-2 infection during the November 2020–February 2021 winter surge of COVID-19?

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022
- Published online by Cambridge University Press: 16 May 2022, p. s7
- Article
- Open access

Occupational risk factors for severe acute respiratory coronavirus virus 2 (SARS-CoV-2) infection among healthcare personnel: A 6-month prospective analysis of the COVID-19 Prevention in Emory Healthcare Personnel (COPE) Study

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 11 / November 2022
- Published online by Cambridge University Press: 14 February 2022, pp. 1664-1671
- Print publication: November 2022
- Article
- Open access

Risk factors for severe acute respiratory coronavirus virus 2 (SARS-CoV-2) seropositivity among nursing home staff

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 1 / Issue 1 / 2021
- Published online by Cambridge University Press: 28 October 2021, e35
- Article
- Open access

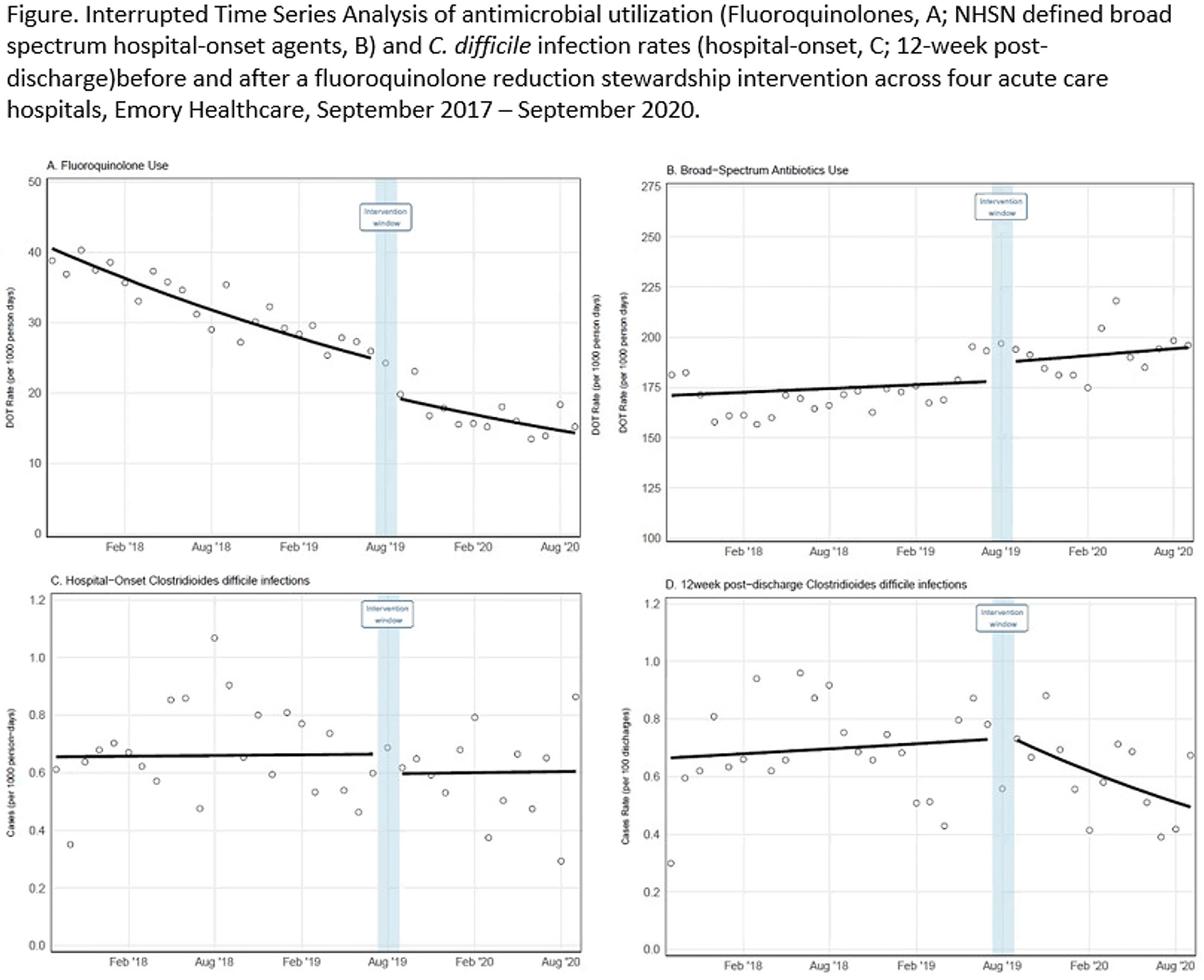

Reductions in inpatient fluoroquinolone use and postdischarge Clostridioides difficile infection (CDI) from a systemwide antimicrobial stewardship intervention

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 1 / Issue 1 / 2021
- Published online by Cambridge University Press: 22 October 2021, e32
- Article
- Open access

Reductions in Postdischarge Clostridioides difficile Infection after an Inpatient Health System Fluoroquinolone Stewardship

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 1 / Issue S1 / July 2021
- Published online by Cambridge University Press: 29 July 2021, p. s4
- Article
- Open access

Reductions in positive Clostridioides difficile events reportable to National Healthcare Safety Network (NHSN) with adoption of reflex enzyme immunoassay (EIA) testing in 13 Atlanta hospitals

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 7 / July 2022
- Published online by Cambridge University Press: 08 July 2021, pp. 935-938
- Print publication: July 2022
- Article

Occupational risk factors for severe acute respiratory coronavirus virus 2 (SARS-CoV-2) infection among healthcare personnel: A cross-sectional analysis of subjects enrolled in the COVID-19 Prevention in Emory Healthcare Personnel (COPE) study

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 3 / March 2022
- Published online by Cambridge University Press: 09 February 2021, pp. 381-386
- Print publication: March 2022
- Article

A Portable, Easily Deployed Approach to Measure Healthcare Professional Contact Networks in Long-Term Care Settings

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, p. s455
- Print publication: October 2020
- Article

Variation in Hospitalist-Specific Antibiotic Prescribing at Four Hospitals: A Novel Tool for Antibiotic Stewardship

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, pp. s56-s57
- Print publication: October 2020
- Article

Racial Differences in Incidence of Staphylococcus aureus Joint Infections in Metropolitan Atlanta, 2016–2018

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, p. s495
- Print publication: October 2020
- Article

Evaluation of Care Interactions Between Healthcare Personnel and Residents in Nursing Homes Across the United States

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, pp. s36-s38
- Print publication: October 2020
- Article

Validation of Administrative Codes for Identification of Staphylococcus aureus Infections Among Electronic Health Data

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, pp. s507-s509
- Print publication: October 2020
- Article

The Second Central Line Increases Central-Line–Associated Bloodstream Infection Risk by 80%: Implications for Inpatient Quality Reporting Programs

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, pp. s499-s500
- Print publication: October 2020
- Article