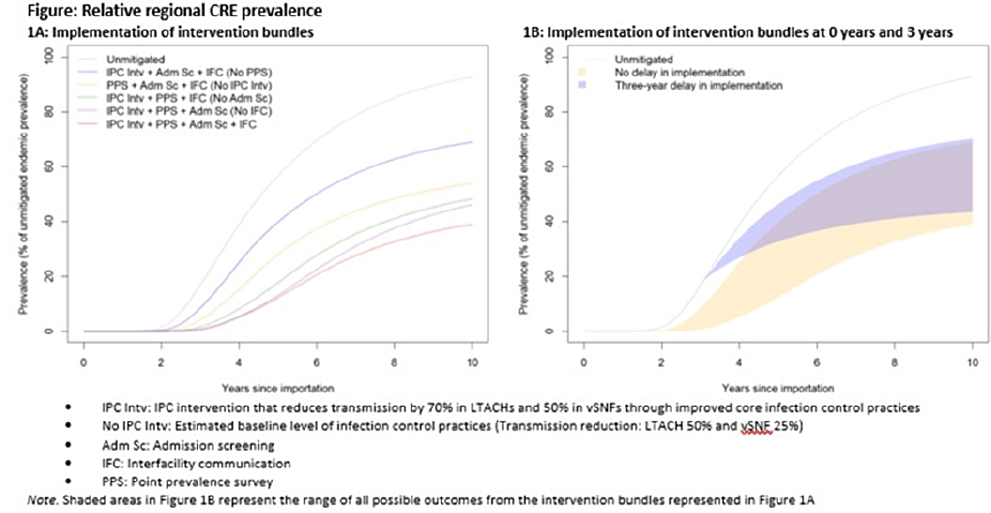

Regional impact of multidrug-resistant organism prevention bundles implemented by facility type: A modeling study

- Journal: Infection Control & Hospital Epidemiology, First View
- Published online by Cambridge University Press: 28 February 2024, pp. 1-8
- Article

Modeling the impacts of influenza antiviral prophylaxis strategies in nursing homes

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023
- Published online by Cambridge University Press: 29 September 2023, pp. s18-s19
- Article
- Open access

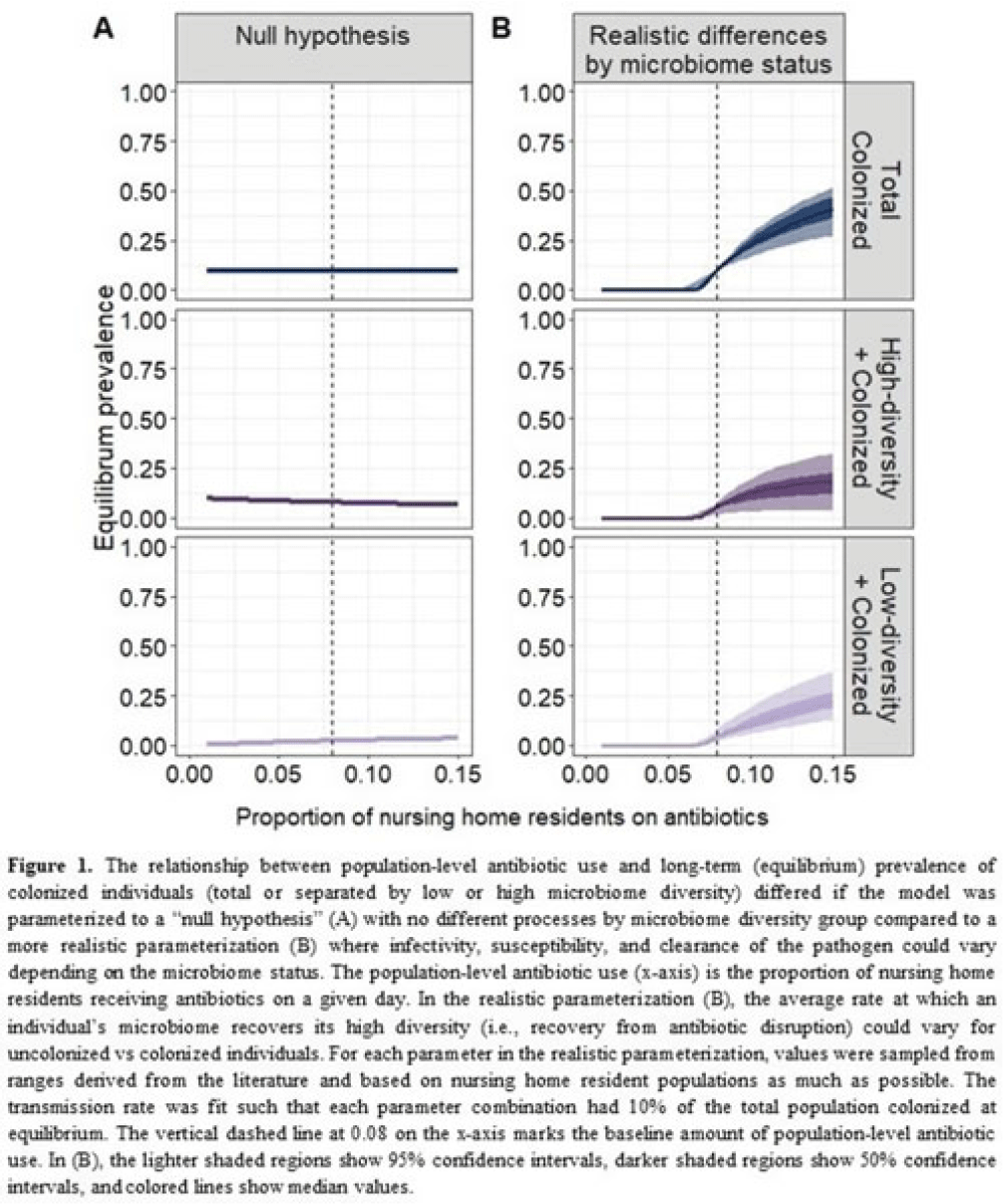

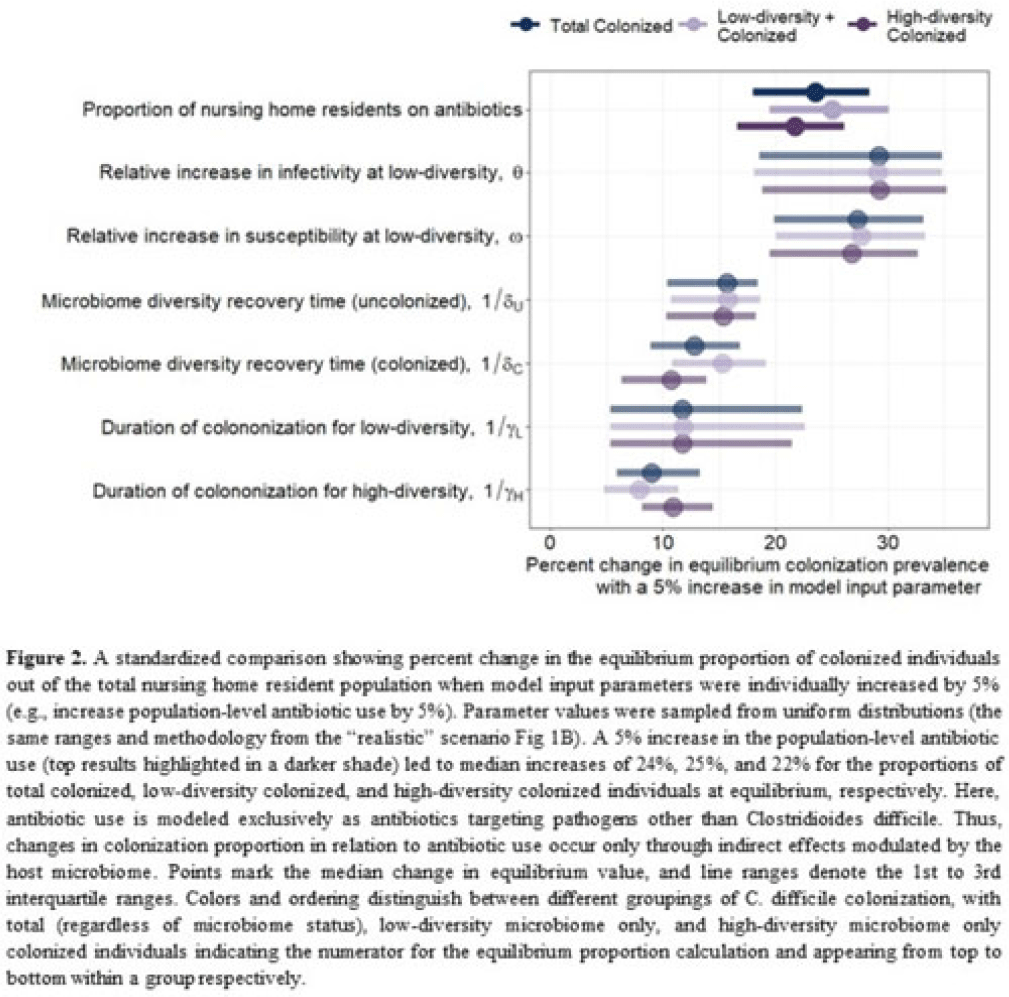

Uncovering gut microbiota-mediated indirect effects of antibiotic use on Clostridioides difficile transmission

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023
- Published online by Cambridge University Press: 29 September 2023, pp. s104-s105
- Article
- Open access

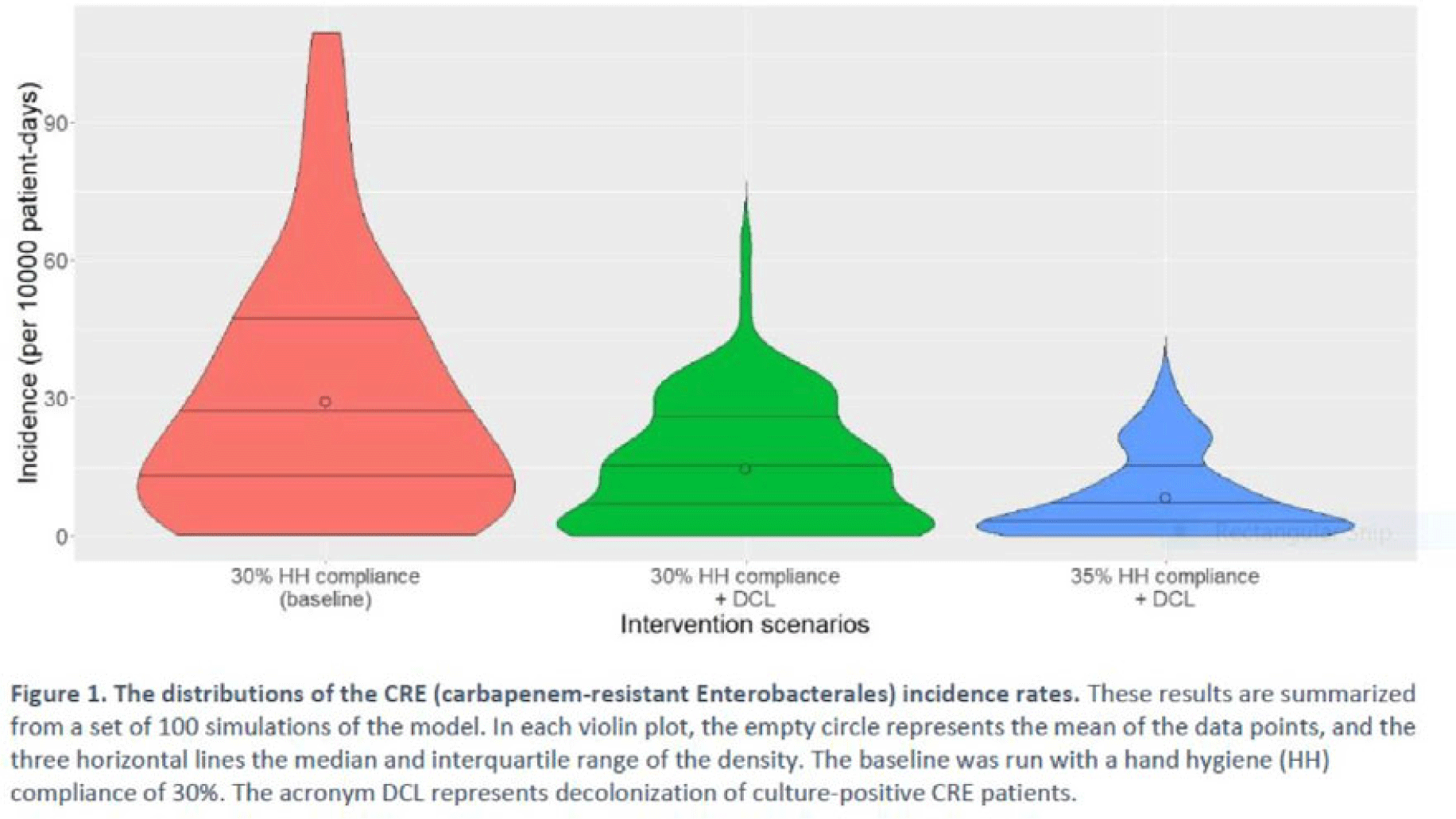

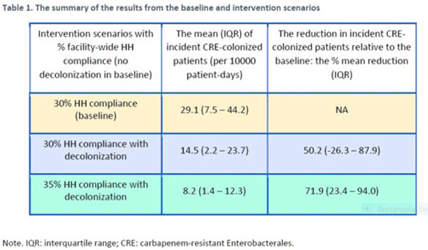

Decolonization of hospital patients may aid efforts to reduce transmission of carbapenem-resistant Enterobacterales

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023
- Published online by Cambridge University Press: 29 September 2023, pp. s59-s60
- Article
- Open access

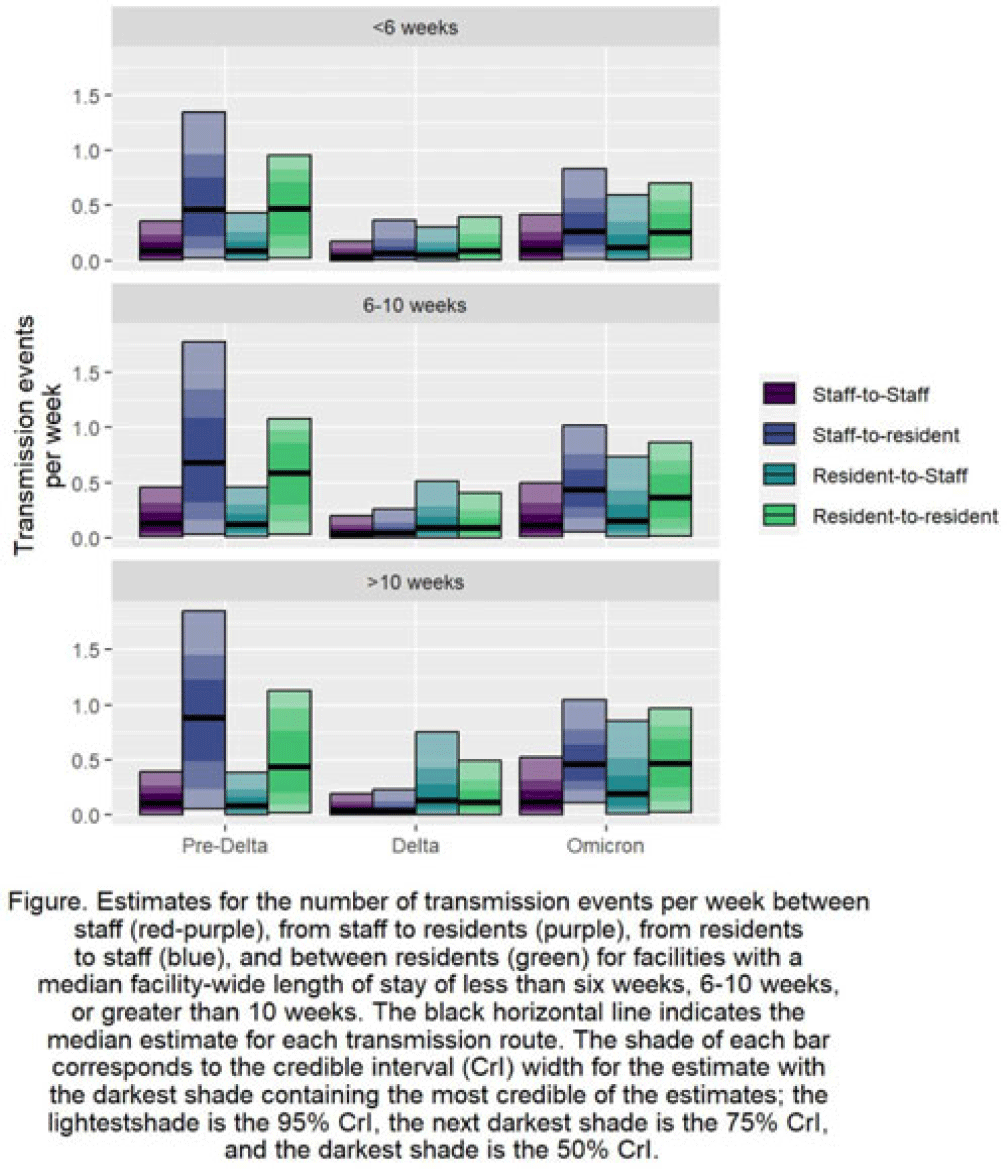

How COVID-19 spread varied by resident length of stay and resident–staff transmission pathways over time in US nursing homes

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023
- Published online by Cambridge University Press: 29 September 2023, p. s111
- Article
- Open access

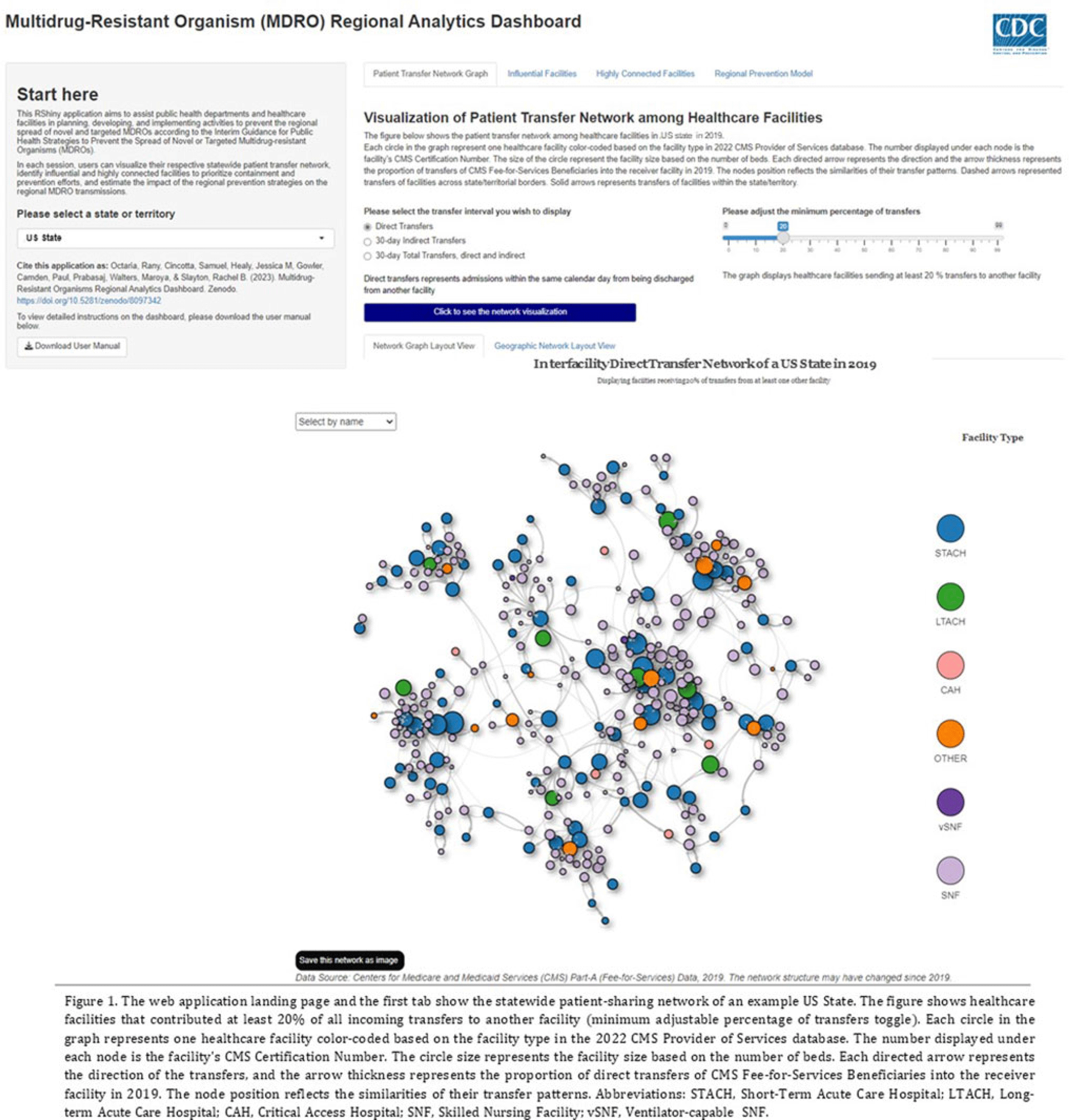

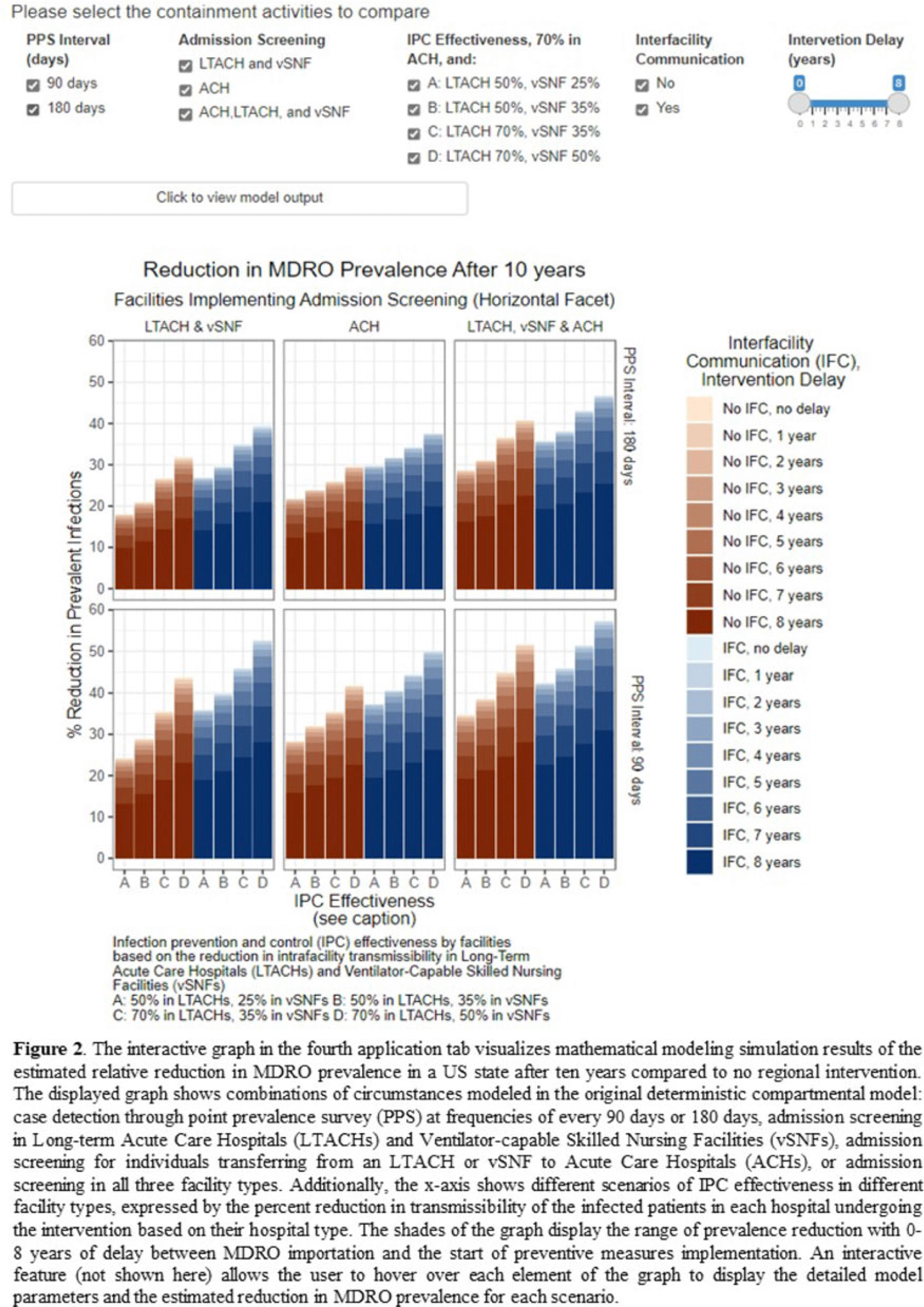

An interactive patient transfer network and model visualization tool for multidrug-resistant organism prevention strategies

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023
- Published online by Cambridge University Press: 29 September 2023, pp. s120-s122
- Article
- Open access

Predicting the regional impact of interventions to prevent and contain multidrug-resistant organisms

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022
- Published online by Cambridge University Press: 16 May 2022, pp. s13-s14
- Article
- Open access

Regional Impact of a CRE Intervention Targeting High Risk Postacute Care Facilities (Chicago PROTECT)

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, pp. s48-s49
- Print publication: October 2020
- Article

Optimizing Sentinel Surveillance to Target Containment of Emerging Multidrug-Resistant Organisms in Regional Networks

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, pp. s336-s337
- Print publication: October 2020
- Article

Decreased Hospitalizations and Costs From Infection in Sixteen Nursing Homes in the SHIELD OC Regional Decolonization Initiative

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, pp. s7-s8
- Print publication: October 2020
- Article

Validation of Administrative Codes for Identification of Staphylococcus aureus Infections Among Electronic Health Data

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, pp. s507-s509
- Print publication: October 2020
- Article

Surgical site infection risk following cesarean deliveries covered by Medicaid or private insurance

- Journal: Infection Control & Hospital Epidemiology / Volume 40 / Issue 6 / June 2019
- Published online by Cambridge University Press: 09 April 2019, pp. 639-648
- Print publication: June 2019
- Article

The projected burden of complex surgical site infections following hip and knee arthroplasties in adults in the United States, 2020 through 2030

- Journal: Infection Control & Hospital Epidemiology / Volume 39 / Issue 10 / October 2018
- Published online by Cambridge University Press: 30 August 2018, pp. 1189-1195
- Print publication: October 2018
- Article

Attributable Mortality of Healthcare-Associated Infections Due to Multidrug-Resistant Gram-Negative Bacteria and Methicillin-Resistant Staphylococcus Aureus

- Journal: Infection Control & Hospital Epidemiology / Volume 38 / Issue 7 / July 2017
- Published online by Cambridge University Press: 01 June 2017, pp. 848-856
- Print publication: July 2017
- Article

The Cost–Benefit of Federal Investment in Preventing Clostridium difficile Infections through the Use of a Multifaceted Infection Control and Antimicrobial Stewardship Program

- Journal: Infection Control & Hospital Epidemiology / Volume 36 / Issue 6 / June 2015
- Published online by Cambridge University Press: 18 March 2015, pp. 681-687
- Print publication: June 2015
- Article