2.1 A Taxonomy of Disasters

Given that there is a wide range of causes and consequences of disasters, it is unsurprising that there are also numerous forms of disaster classification. A classic categorization, focusing on the causes of disasters, is the distinction between what is natural and what is human-made. For more than one reason the validity of such a simple dichotomy is questionable. Indeed, scholarship on Hurricane Katrina has already claimed that “there is no such thing as a natural disaster.”Footnote 1 Even though the initial shock was a natural event, the catastrophic outcome was ultimately the result of human intervention – or the lack of it. Put simply, without existing societal vulnerability, the chances of a hazard turning into a disaster are small.Footnote 2 Sometimes, the hazard or shock itself is also partly human-induced. For example, in the Limbe region in Cameroon, landslides are triggered by intense rainfall together with deforestation of steep slopes, soil excavation, and unregulated building activities.Footnote 3 The difficulty with this kind of classification system then is the blurred line between what might be considered ‘exogenous’ and ‘endogenous,’ with both features frequently present.

Another classic typology, also starting from causes, is a distinction based on the type of event that triggered the disaster, and is commonly used in contemporary disaster management. Leaving aside human conflict and industrial and transport-related accidents, three broad categories can be identified: disasters triggered by geological events (such as earthquakes, volcanic eruptions, and landslides), biological events (such as epidemics and epizootics) and meteorological–hydrological events (such as storms, floods, and droughts).Footnote 4 Sub-divisions and variations are possible. Famines, for instance, are often distinguished as a separate category – perhaps as a result of the complexities involved in explaining them.Footnote 5 There is, however, a tendency for crossovers and combinations: meteorological–hydrological events such as storms may lead to harvest failure and to famine, and they in turn may lead to biological events such as epidemics.

A different approach focuses on the time it takes for the triggering event to build up and the disaster to actually unfold, distinguishing between ‘rapid onset’ disasters, such as earthquakes and hurricanes, and ‘slow onset’ or insidious disasters, which include various types of environmental degradation such as desertification, sand drifts, and sea-level rise.Footnote 6 This categorization only partially overlaps with a typology based on triggering events. While many geological hazards – particularly earthquakes – are of the rapid onset type, meteorological–hydrological hazards can fall into either of the two categories: temporally, a hurricane is very different from rising sea levels. Biological hazards, moreover, fall somewhere in between: the unfolding of an epidemic can take months or years rather than minutes, but with differing stages of intensity. Rapid onset disasters, taking people by surprise, are more likely to lead to high levels of physical destruction, mortality, and displacement of survivors. The Lisbon earthquake of 1755, for instance, destroyed the city almost completely. Recent estimates arrive at a death toll of 20,000 to 30,000 (from a population of 160,000 to 200,000) with a similar number of survivors leaving the city.Footnote 7 Slow onset disasters are more insidious and therefore rarely cause immediate death; they are more likely to impact livelihoods in the long run. Over the long term, hazards such as erosion, climate change, and pollution can cause serious health problems, fertility reduction, outward migration, and capital destruction – increasing vulnerability when working in tandem with other kinds of sudden hazards. The major problem with classifying these types of disasters is that the impact of the hazard or shock can be difficult to isolate from other factors contributing to the same outcome.Footnote 8

We can also establish a taxonomy based primarily on the consequences of disasters. Just like triggering events, consequences can be ranked according to their nature; for instance by distinguishing between demographic effects (raised mortality or reduced fertility), physical effects (destruction of land, buildings, infrastructure, and machinery), economic effects (directly as a result of physical destruction or indirectly due to erosion of livelihoods or redistribution in the long run), and social and political effects (social polarization, unrest, or upheaval). Some of the classification systems even try to form categories that incorporate two dimensions, such as the Modified Mercalli Intensity scale, which, unlike the Richter scale, incorporates human impact and building damage as well as the magnitude of a seismic shock.Footnote 9 Categories, and their relationship to disasters, are not always clear-cut, however. Demographic consequences, for instance, are obviously highly relevant in the case of epidemics, and mortality has been cited as one of the essential ways of distinguishing subsistence crises or dearth from famine.Footnote 10 However, mortality can also be prominent in geological disasters such as large earthquakes or tsunamis, or in meteorological ones. For example, the 2010 earthquake in Haiti created conditions conducive to the spread of diseases such as cholera.

The fact that disasters show such variety, both in their causes and in their consequences, raises a question vital to the core of this book: if this is the case, is it at all possible to analyze disasters using a general conceptual framework? Does it make sense to compare epidemics and earthquakes, or tsunamis and sand drifts? Overall, we believe so. While in the practice of disaster management the exact measures to mitigate the impact of a hazard or prevent its recurrence vary depending on the nature of the trigger, on a higher level of abstraction significant similarities can still be demonstrated. The ways in which individuals, groups, and societies cope with shocks – or fail to do so – often share characteristics – and this is something particularly brought to the fore when viewed in a historical perspective.

Indeed, two crucial concepts here are vulnerability and resilience.Footnote 11 Determinants of vulnerability, although situationally specific, often incorporate various aspects of distribution of wealth, resources, support, and opportunity, while resilience is determined to a significant extent by social, economic, and political institutions and the context in which they function.Footnote 12 Similarities of this type allow us to compare disasters that at first sight appear very different. For example, ‘entitlement theory,’ originally developed by Amartya Sen to explain vulnerabilities to twentieth-century famines in the developing world, has recently been used as a concept to assess vulnerabilities during large-scale flooding of coastal regions in the pre-industrial North Sea area and to analyze the opportunities of specific groups to organize protection against flooding and restrictions on their ability to do so. Just as weather-induced harvest failures lost their central role in explaining famines, the entitlement approach explains floods as a result of declining entitlement to flood protection by specific groups rather than by looking at storminess or climatic factors.Footnote 13

2.2 Scale and Scope of Disasters

The scale and scope of disasters is something that continues to help them appeal to the popular imagination. By scope, we refer to the range of different facets of everyday life a disaster can touch; by scale, we refer to the intensity, magnitude, or territorial spread of effect for each of these facets touched. The diversity in scope and scale of disasters makes them a suitable subject for all kinds of popular rankings, many of which are found on easily accessible resources such as Wikipedia.

Historical research into the scale of disasters has quite a long tradition of focusing predominantly on the death toll – mortality being an important measurement for historical demographers of the 1960s and 1970s, who defined ‘crisis severity’ through death rates using various kinds of debated methodologies.Footnote 14 However, as disaster research has become a topic in its own right, new parameters have been added, mainly focusing on material losses, as these were highly relevant in dealing with the outcome of a disaster. Foster’s calamity magnitude scale, developed in the 1970s, took into account the number of fatalities, the number of seriously injured, infrastructural stress, and the total population affected. According to this scale, the Black Death emerged as the largest ‘natural’ disaster, after the ‘human-made’ shocks of the World Wars.Footnote 15 The logarithmic Bradford disaster scale, developed for disaster prevention and management, combines fatalities, damage costs, evacuation numbers, and injuries.Footnote 16 Nowadays, physical damage is seen as essential for calling an event a disaster, “because that is the perspective of institutions charged with their management.”Footnote 17 Government agencies and insurers are most interested in material losses, as this is the exact aspect they have to resolve. The 1995 Chicago heat wave, for example, which killed approximately 739 people, was not formally identified as a disaster by the US government, even though the death toll was higher there than in previous years’ Californian earthquakes, which were labeled as disasters.Footnote 18 Accordingly, the definition of a term such as disaster always remains fluid and flexible and can diverge across social interest groups, even for the same event.

Although the scope of disasters can be wide, then – affecting very different aspects of social life – the main way of classifying disaster damage has still tended to revolve around casualties and material damage. And it is not always a given that the scale of effect in one dimension will be the same in the other. In some cases, a shock destroys capital but leaves people untouched, and on other occasions a shock kills people, but leaves much of the infrastructure and goods intact – the Black Death of 1347–52 being the classic example. Sometimes both occur together. Certain hazards such as floods have rarely killed large numbers of people throughout history – those that do are exceptional.Footnote 19 Other hazards such as earthquakes cause fatalities, but those deaths are then further supplemented by events occurring in the aftermath – see the already-mentioned example of cholera in post-earthquake Haiti in 2010 and 2011.

Famines and epidemics are the best examples of disasters with a high death rate, and even famine mortality tends to be created mainly by conditions conducive to the spread of diseases rather than by starvation per se – at least prior to the twentieth century. Classic famine-related diseases include tuberculosis, dysentery, typhoid fever, and typhus, which are linked to acute malnutrition, eating foods not normally fit for human consumption, a decline in the attention paid to hygiene, and increasing amounts of displacement and migration.Footnote 20 Plague is not considered a disease of malnutrition, which may account for the lack of association between grain prices and plague mortality in certain early-modern studies,Footnote 21 but it may still have been indirectly stimulated by breakdowns in infrastructure, poor hygiene, and heightened migration during food crises.Footnote 22 The scale of these mortality effects was not always the same, however – even with regard to the same type of disease, and to the same outbreak.

Even within the same disease, the severity and pervasiveness of outbreaks could differ significantly. The plague epidemic in 1629–30 in Northern Italy, for example, was much more severe than the plague outbreaks of the sixteenth century in the same area, and more severe than the same 1629–30 outbreak that had occurred in parts of Central Italy.Footnote 23 Many diseases killed large numbers of people in a restricted number of localities, but few combined the key facets of death rate and territorial spread to be truly large-scale killers. Understanding the diverse scale of the death toll is often difficult in a historical context because (a) it requires large amounts of epidemiologic data easily comparable over long periods of time and large areas – something we do not always have, particularly before the early-modern period, and (b) the causes are immeasurably complex, bringing together interrelated factors on environment, climate, natural and acquired immunity, proximity to vectors and points of contagion, pathogen adaptation, and human patterns of warfare, migration, trade, commerce, and institutional control of the disease.

The scale of material damage stemming from disasters can also differ hugely. For some disasters it was decidedly limited: many famines, for example, had only a limited impact on capital goods, if they were not twinned with warfare.Footnote 24 Earthquakes, however, could do much more material damage, as the one that hit Lisbon in 1755 illustrates. The earthquake and the tsunami and fire that followed in its wake made two-thirds of the city uninhabitable, and destroyed 86 percent of all church buildings.Footnote 25 Yet it was not only the type of disaster, but also the society it struck that determined the scale of material damage. As an example, the indigenous Filipino practice of building nipa huts – made of palm and bamboo – was seen as completely backward by the Spanish colonial powers, but these structures had the advantage of being easily rebuilt after earthquakes. In contrast, the seventeenth-century Spanish baroque stone buildings were reduced to ruins by an earthquake in 1645, creating much greater material damage.Footnote 26 It is telling that between 1977 and 1997 the number of deaths from ‘natural’ disasters remained more or less constant (even as the world population increased), but the cost of disasters increased significantly.Footnote 27 Our highly technological societies of today have become more vulnerable in terms of the specific category of material damage.Footnote 28

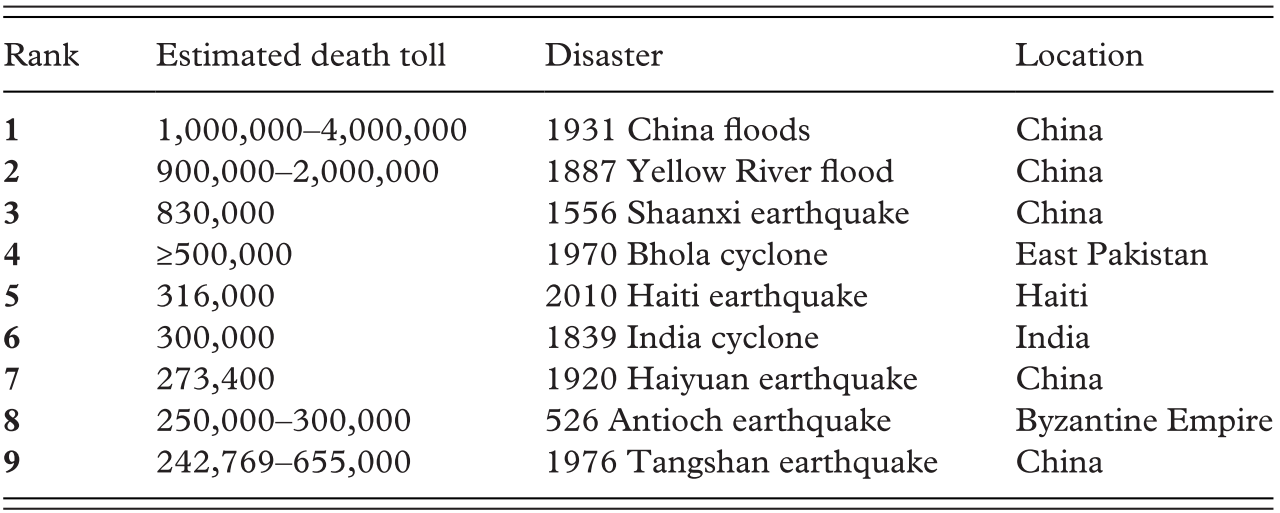

The assessment of casualties and material damage is, however, a complex undertaking and historians and social scientists should note the difficulties that often arise – particularly in light of ‘popular’ interest in these kinds of facets of disasters. This issue can be demonstrated by looking more closely at some of the ‘rankings’ that often appear on the Internet – for example, the ranking of ‘death tolls’ from ‘natural disasters’ taken from Wikipedia and presented in Table 2.1. These lists, like many other related ones on the Internet, tend to exaggerate the death rate for historical floods and earthquakes, sometimes even producing estimates that exceed the total population count of the day, and thereby run the risk of producing sensationalist stories and narratives. What are the reasons for this? A significant problem is that many of the estimates made by contemporary observers are taken at face value, when several will have been exaggerated for a particular agenda (tax concessions, for example) or a moralizing standpoint or rhetorical effect.Footnote 29 Sometimes the guesses of contemporaries were simply that – guesses. Even in the late eighteenth century, when statistical material became more important and more prevalent in disaster-reporting in newspapers, numbers were by no means exact. Numbers are also not necessarily neutral, since they “incorporate the values of the people who create them, and data collection begins with the collector’s interests or concerns.”Footnote 30 Chinese victims in the 1906 San Francisco earthquake and Aboriginals in twentieth-century Australian cyclones were simply not counted – which is problematic, given that in both cases they represented numerically large proportions of the population.Footnote 31 During the Lisbon earthquake of 1755 both Protestant and Catholic commentators strongly inflated the number of dead, to fit in with their respective narratives of divine retribution for the city’s godlessness. The Marquis of Pombal, the Portuguese prime minister and personification of Enlightenment and ‘godlessness,’ was dismayed by this and ordered his own ‘official’ damage report, one of the first of its kind.Footnote 32 Measuring the impact of disaster is thus not as easy as Wikipedia may have us believe.

Table 2.1 Ranked list of natural disasters by death toll on Wikipedia, https://en.wikipedia.org/wiki/List_of_natural_disasters_by_death_toll

| Rank | Estimated death toll | Disaster | Location |
|---|---|---|---|
| 1 | 1,000,000–4,000,000 | 1931 China floods | China |
| 2 | 900,000–2,000,000 | 1887 Yellow River flood | China |
| 3 | 830,000 | 1556 Shaanxi earthquake | China |
| 4 | ≥500,000 | 1970 Bhola cyclone | East Pakistan |
| 5 | 316,000 | 2010 Haiti earthquake | Haiti |
| 6 | 300,000 | 1839 India cyclone | India |
| 7 | 273,400 | 1920 Haiyuan earthquake | China |
| 8 | 250,000–300,000 | 526 Antioch earthquake | Byzantine Empire |
| 9 | 242,769–655,000 | 1976 Tangshan earthquake | China |

This should not be seen as merely a problem of ‘popularizing’ media sources either. As a result of the recent trend towards increased use of historical data by scholars working in the natural and social sciences (i.e. not trained historians), a number of papers now being published in high-ranking science journals use figures and data taken from the Internet or from historical papers, without taking into account the methodological issues or lacunae in data collection. For a discussion of this trend, see Section 3.1.3 on source criticism and big data.
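The uncertainty in such rankings can be made concrete with a small calculation. The sketch below is illustrative only: it takes the four rows of Table 2.1 that are reported as a range and computes how many times larger the upper estimate is than the lower one. The names `RANGES`, `spread`, and `rank_by_spread` are hypothetical helpers introduced here, and the ratio is an assumed, deliberately crude indicator of disagreement between estimates, not a measure used in the disaster literature.

```python
# Death-toll ranges exactly as printed in Table 2.1: (lower bound, upper bound).
# Rows reported as a single figure are left out, since they give no range.
RANGES = {
    "1931 China floods": (1_000_000, 4_000_000),
    "1887 Yellow River flood": (900_000, 2_000_000),
    "526 Antioch earthquake": (250_000, 300_000),
    "1976 Tangshan earthquake": (242_769, 655_000),
}


def spread(low, high):
    """Return how many times larger the upper estimate is than the lower one."""
    if low <= 0 or high < low:
        raise ValueError("expected 0 < low <= high")
    return high / low


def rank_by_spread(ranges):
    """Return (name, ratio) pairs, widest range first."""
    pairs = [(name, spread(low, high)) for name, (low, high) in ranges.items()]
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    for name, ratio in rank_by_spread(RANGES):
        print(f"{name}: upper bound is {ratio:.1f}x the lower bound")
```

For the top-ranked entry the upper bound is four times the lower bound, so the position of an event in such a list depends heavily on which end of the range is taken at face value.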

2.3 Concepts

Having explored variations in the types, scale, and scope of disasters, this section provides a critical introduction to key concepts used to study disasters – primarily those used in the disaster studies literature, but also in cognate fields such as the ecological sciences and development economics. It makes particular reference to vulnerability, resilience, and their temporal dimensions, while acknowledging that use of these concepts is inconsistent and occasionally ambiguous between disciplines and contexts.Footnote 33 Whereas in ecology, for instance, resilience is increasingly seen as the adaptive ability to transform to a different state, development economists often use a more limited definition that highlights a society’s ability to return to its pre-existing state.Footnote 34

2.3.1 Disaster and Hazard

One point of contention in the disasters literature lies in the term ‘disaster’ itself, and how it should be defined – and distinguished from other terms such as ‘catastrophe’ and ‘shock.’Footnote 35 Certainly there is a tendency towards separating qualifiers such as ‘natural’ from the term ‘disaster.’ Although the term ‘natural disaster’ has fairly widespread use as a convenience term in the mainstream media and some popularizing literature, few scholars now argue that disasters are simply ‘natural events,’ regardless of whether they are working in the natural sciences, social sciences, or humanities. Indeed, it is clear that although hurricanes, for example, are fundamentally natural phenomena, the root cause of disastrous effects emerging from hurricanes can usually be put down to poor building construction or weak institutional infrastructure rather than the occurrence of extreme winds or storm surges per se. The past few decades of disaster studies research have consistently shown that it is social processes that shape disasters and determine who is most at risk. These include technological, political, and cultural factors that determine human capacity to prepare for, cope with, and recover from sources of potential harm,Footnote 36 as well as gender, ethnicity, and age – each of which has little to do with the natural environment.Footnote 37 The emphasis on the naturalness of disaster that comes with the term ‘natural disaster’ focuses attention on physical processes and their destructive power, rather than what makes people vulnerable to these processes, and can distract attention from human responsibility for the causes of disasters.Footnote 38

Attention in the disasters literature has instead turned to terms such as environmental ‘hazards,’ or sometimes ‘shocks.’ The word ‘shock,’ with its inbuilt element of surprise, does not hold the same breadth of applicability as hazard, as it implies that the event or process was unexpected. This may be the case in an area suffering a severe tsunami triggered by a distant high-magnitude earthquake, but the same may not be said for a river bursting its banks onto a floodplain. Still, the words ‘shock’ and ‘hazard’ more aptly describe an environmental event or process itself, whereas ‘disaster,’ or perhaps ‘nature-induced disaster,’ refers specifically to the severe impact of an event.Footnote 39 As we have seen, hazards can be both natural and technological, and both can lead to disasters. But before we examine the factors that might turn a hazard into a disaster, how do we distinguish a disaster from a hazard in the first place? This question has been the subject of much debate in disaster studies.Footnote 40 Previously we showed the complexities of classifying disasters on the basis of characteristics such as magnitude, duration, impact, potential of occurrence, and ability to control impact,Footnote 41 as well as consequences such as economic losses and mortality.Footnote 42 In reality, any such distinction or threshold is an inherently anthropocentric valuation, and any metric is open to criticisms of generalization. What is clear, though, is that there is a qualitative difference between disasters and hazardous events: disasters severely disrupt normal activity, often cause damage or casualties because coping capacities are exceeded, and require large responses in terms of resources and organization which often necessitate external support.

If an extreme geophysical or meteorological event is not simply a synonym of a disaster, and disaster risk is produced by a combination of factors, it becomes imperative to understand the determinants of different levels of vulnerability of different groups of people and how societies tried to manage hazardous events.

2.3.2 The Disaster Management Cycle

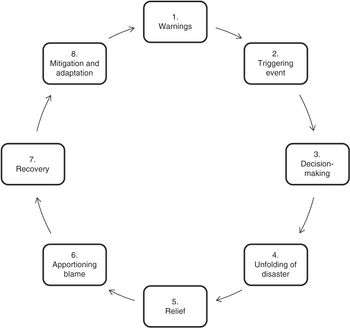

Some disasters have also been classified according to how they are managed, and this approach is seen nowhere more clearly than in the disaster management cycle – a framework to understand the processes and stages through which disasters evolve. This framework has been used, further developed, and modified in a variety of disciplines including sociology, geography, psychology, civil defense, and development studies. The initial idea to develop a framework to understand disasters via how societies cope with their effects dates back to the 1930s. Practitioners and policy makers distinguished between different phases of the unfolding disaster to respond more effectively in future situations. Initially, three stages were identified: a preliminary stage where the hazard and problems built up, followed by the disaster stage in which the actual event took place, and finally the readjustment or reorganization stage.Footnote 43

In the 1970s the idea of a cycle was developed because of the often-recurrent nature of hazards and the disasters that can ensue. Seldom is a society hit by a completely unforeseen hazard or disaster, such as a meteorite or sudden earthquake in low-tectonic-risk zones. A series of disasters occurring during that decade urged practitioners and policy makers to look for more than simply disaster relief measures: societies had to become more receptive to prevention. Therefore, the disaster management cycle was developed, comprising four phases: mitigation after a previous disaster, the development of preparedness, the response after another triggering event, and recovery.Footnote 44

Since then different disciplines and scholars have proposed several models with more or fewer stages. Here we present the disaster cycle as recently reformulated by John Singleton as an analytical tool for historical research (Figure 2.1), which includes more psychological and social processes than the 1970s framework. We use this disaster cycle as an illustrative example because it fits within our framework for two reasons. First of all, the model acknowledges that hazards do not happen out of the blue but interact with societies that have a long prehistory with similar hazards, and therefore former mitigation and adaptation measures affect the outcome of a disaster. Second, the cycle moves beyond the short-term disaster effects and mitigation stages, and urges scholars to look at the long-term effects and adaptation measures. Therefore, this model is conveniently set up to address the temporal components of historical disasters. In this framework, decision-making is one of the most crucial stages between the occurrence of a hazard and its potentially disastrous consequences, and thereby a driver of high or low disaster impact. Those intermediate response and decision stages have also been described as ‘sensemaking’ points.Footnote 45

Figure 2.1 The disaster cycle, based on the model of John Singleton. Singleton, ‘Using the Disaster Cycle.’

Although the cycle provides a more ‘complete’ overview of how disasters can unfold, from early warning signs and triggers to adaptive responses and then the aftermath of recovery, there are also some limitations to classifying and conceptualizing disasters in a full cycle. It must be remembered that this is a disaster management cycle, not a disaster cycle; and, furthermore, it is definitively a cycle and thus connected to cyclical processes only. Many disaster ‘cycles’ do not reach those later stages at all, and it is unclear whether all stages are actually intrinsic parts of the disaster experience. For example, according to Singleton’s framework, almost all disasters lead to the apportioning of blame, and yet recent historical research has shown that even some of the most severe epidemics did not necessarily lead to scapegoating or social unrest,Footnote 46 but instead gave rise to cohesive or even compassionate responses, and some of the most severe famines did not necessarily bring about the collapse of collective solidarity mechanisms.Footnote 47 Does the absence of blame then preclude a whole host of crisis conditions from being labeled as disasters? A cycle such as this one also has no room for divergence. We are told that intermediate decision-making processes remain vital to disaster impact, but there is no possibility of measuring the effectiveness of these decisions and relief phases. Accordingly, the disaster management cycle approach to disaster classification and conceptualization offers a step forward in outlining a ‘textbook case’ of how a disaster unfolds over a period of time, but we need more flexible frameworks if we want to conceptualize disaster experiences that diverge from the ‘norm.’ For that we must turn to other concepts such as vulnerability, resilience, and adaptive capacity.

2.3.3 Vulnerability

It is easy to associate disasters with ‘nature’ when we consider that earthquakes are caused by shifting tectonic plates, floods by storm surges, and plague by biological pathogens. Nevertheless, the naturalness of natural disasters was already questioned in the 1970s in a seminal article in Nature, where it was stated that “the time is ripe for some form of precautionary planning which considers vulnerability of the population as the real cause of disaster – a vulnerability which is induced by socio-economic conditions.”Footnote 48 Without denying that an initial hazard is often required for a disaster, this approach emphasizes that the root cause of a disaster is social, and that people have to be already vulnerable to hazards in order for a disaster to arise: that is to say, all vulnerability is social vulnerability.Footnote 49 Through this lens, the most important questions are therefore twofold: who suffers from disasters, and why does society create these precarious circumstances that expose people to suffering?

Before addressing those questions, however, a more essential question is what do we mean by vulnerability? According to one influential work from the 1980s, vulnerability was meant to represent “the exposure to contingencies and stress and difficulties coping with them,” and is comprised of two sides: an external side of risks, shocks, and stress to which an individual, household, or community is subject, and an internal side concerning a lack of means to cope.Footnote 50 According to this kind of definition, the concept then rests on three distinct facets: (1) a risk of exposure; (2) a risk of inadequate capacities to cope; and (3) the attendant risks connected to poverty.Footnote 51 These facets of vulnerability can be found within individuals, but also on the collective level of social groups, communities, and whole societies.

The above-mentioned definitions and general approach to vulnerability were at their most dominant in the 1970s and 1980s. On the basis of critiques of Western development plans for the Global South, this movement stated that vulnerabilities arose from political choices rather than from natural inevitabilities. Its popularity, however, waned in the decades thereafter. Driven by increasing insight into climate change and its human components, systemic approaches began to gain ground (focusing on ecosystems, for example), in which the ability of systems to absorb, adapt, and transform when confronted with disasters was considered pivotal. In particular, much of the focus connected to climate change shifted from different and diverse social groups to either the ‘system’ as a whole or the individual, and hence from vulnerability to resilience (see the next section). Social relations and the application of power were no longer central to many of the hazards and disasters narratives, as attention moved from causality to response.Footnote 52 In the process, as the ‘social’ aspect of disasters became increasingly obscured, so too did the structural inequalities in wealth, resources, and power that shape how disasters impact societies and people differentially. This is why several authors linked this shift and the new focus on resilience and adaptation to the hegemony of neoliberal ideologies.Footnote 53

Nevertheless, recent work has argued for disaster research to once again return to vulnerability as a core organizing concept. This argument centers on the potential of vulnerability to put the ‘social’ back into disaster analysis, focusing on elements that run the risk of being neglected in more instrumentalist or technocratic approaches to disasters. Indeed, by placing particular attention on the root causes that make people vulnerable, we are able to shine more light on different aspects that become apparent only over long periods of time, and by moving beyond directly disaster-related issues.Footnote 54 For those looking to use history as a tool for understanding more about different dimensions of disasters, this inherent temporal aspect of the vulnerability approach becomes invaluable – whether working across years, decades, or centuries – since the vulnerability of individuals, groups, and communities varies over time, and we need the frameworks to analyze and understand these changes.

2.3.4 Resilience

While disaster studies scholars from the 1970s to the 1990s were preoccupied with vulnerability and its root causes, resilience became the buzzword of disaster studies at the start of the twenty-first century. The origins of resilience – from the Latin resilire meaning more literally to ‘jump back’ – can be traced back to the 1940s and 1950s when the concept was used both in psychology (‘lives lived well despite adversity’) and in engineering (the capacity of materials to absorb shocks and still persist). Yet it was from ecosystem analysis that the concept migrated to disaster studies. As defined by Buzz Holling in 1973, resilience refers to either the ‘buffer capacity’ of an ecosystem (its ability to absorb perturbation), the magnitude of the disturbance that can be absorbed before structural change occurs, or alternatively the time it takes to recover from disturbance.Footnote 55 Later, the concept was transferred to the social sciences. W. N. Adger defines it as “the ability of communities to withstand external shocks to their social infrastructure” and sees a direct link between ‘ecological’ and ‘social’ resilience – particularly in societies highly dependent on a single resource or a single ecosystem.Footnote 56 Over time, fostering resilience became the official mantra of international disaster relief and prevention, with the overarching idea that if we can strengthen the capacity of households and communities in risk-prone areas to counter hazards, for instance by improving alert systems and solidarity networks, organizing micro-credit, or removing institutional constraints to food markets, then we can accommodate recurrent hazards.

While the initial focus of social science research into resilience was about ‘bouncing back’ after disasters, things became complicated with the realization that change following a shock is not necessarily something that can be viewed negatively, since it can also stimulate changes for the better. In disaster studies, older ‘conservative’ definitions of resilience – measuring the restoration of the previous equilibrium – became replaced by more ‘progressive’ ones, seeing adaptation of the system in more positive terms.Footnote 57 When we look to the past, there are some examples of that too. In the fourteenth century, climatic cooling and epidemic diseases may have resulted in the retreat of settlement and arable cultivation in upland England or Scandinavia – on the surface a ‘negative’ outcome – and yet this could also be interpreted as simply rearranging farming and habitation into more fertile areas, or a complete shift in production from arable to pastoral.Footnote 58 Apart from material or demographic changes, institutions could be transformed as well to suit the new environmental and societal structures that develop after a shock.

Stretching this idea too far, however, creates new problems: if the complete make-over of a society after a major disaster is qualified as a ‘resilient’ outcome, then only total breakdown or collapse remains as counter-evidence for a failure in resilience. And although popular books have been written on the subject of the collapse of societies and civilizations in the past,Footnote 59 from a historical perspective we are also aware that total breakdown and collapse have been exceptionally rare – certainly over the period for which written documents survive. Accordingly, it might also be the case that most studies on past hazards and disasters reach the same conclusion as Georgina Endfield in her thought-provoking discussion of extreme drought and floods in colonial Mexico: society did not collapse, but proved ‘remarkably resilient’ to such problems.Footnote 60 If hardly anything can counter the resilience outcome – and history proves that to be the case more often than not – then the term begins to lose much of its utility.

As noted towards the end of the previous section, further critiques of resilience include the subordinate role given to power relationships, agency, values, and knowledge. Some authors even see resilience as the handmaid of neoliberalism, strengthening its discourse on personal responsibility, but this is a “responsibility without power.”Footnote 61 This is particularly problematic given that systems that may be classified as highly resilient can contain, or even derive some of their resilience from, vulnerability within certain groups or communities.Footnote 62 Ultimately in this book, we accept the more recent definition of resilience as something systemic, where adaptation can lead to a new post-hazard or post-disaster ‘state of things’ (see the next section on adaptation), but we also more explicitly demonstrate our criticisms of the concept by employing historical examples in Chapters 5 and 6. In the end, while a certain level of resilience becomes the outcome from most historical disasters, vulnerability outcomes are much more diverse and unpredictable.

2.3.5 Adaptation, Transformation, and Transition

Adaptation generally refers to “the adjustments that populations take in response to current or predicted change,” and is related to each of the frameworks introduced above.Footnote 63 Emphasis on adaptation was long shunned within the Intergovernmental Panel on Climate Change (IPCC) in favor of the mitigation of greenhouse gas emissions, whereas acceptance of a widespread need for adaptation was seen as accommodating (or even embracing) the inevitability of disaster.Footnote 64 Over time, however, the term adaptation or adaptive capacity did gain currency – particularly from the early 2000s – and in close parallel with the dominance of more resilience-focused research that accepted more flexible definitions of resilience outcomes. This was initially very prominent in climate change research, where models within the natural sciences prescribed technological ‘fixes’ for issues such as declines in agricultural productivity.Footnote 65 Only later did social science approaches refocus research into adaptation onto areas such as indigenous knowledge – but also cultural limits to adaptation and even the potential negative consequences of adaptive action, which became known as ‘maladaptation.’Footnote 66 Thus, we came to learn that hazards and disasters often led to adaptation, with adaptive capacity and resilience closely linked,Footnote 67 and this has had the knock-on effect of allowing us to exchange gloomy interpretations of disasters for more ‘positive’ ones, stressing the opportunities for change created by the disaster.Footnote 68 In more recent times, historians have argued for cases where climate-related pressures, leading to hazards, did indeed create new incentives for adaptation, since risks and hazards could function as constructive triggers for innovations. 
One example is the economic and cultural flourishing of the Dutch Golden Age of the seventeenth century, which has been tied to successful adaptive capacity during the worst phases of the so-called Little Ice Age.Footnote 69

However, at the same time, we have also come to learn that adaptation did not always occur post-hazard or post-disaster, and equally that not all of the adaptation that did occur can be considered in ‘positive’ terms. Elements such as social capital, networks, trust, and coordination are often cited as factors promoting adaptation,Footnote 70 yet elements hampering adaptation have also been cited, such as mismatches in scale between environmental and social dynamics, asymmetries in power, and inequality.Footnote 71 Much of this is further complicated by the intricacies of scale: hazards that lead to disastrous disruption at the level of single-village communities may be perfectly absorbed on a regional or macro-level, for example. Adaptation, and the form it takes, is then never inevitable – and a path forward for understanding how societies respond to hazards and the disasters that ensue is surely connected to better understanding why certain systems and societies adapt and why some do not, and, moreover, why some adaptations are effective, while others are less so. As Eleonora Rohland has noted, this perspective is seemingly at odds with definitions of adaptation that include notions of “moderating harm” or “exploiting beneficial opportunities” – both of which depend on time, place, conflicting interests, and power relations.Footnote 72 We suggest that this is an area in which historians can offer the greatest insights and contribution, since an important element for explaining adaptation is incorporating chronology and developments across time.

Indeed, influential models for adaptation of social systems or ecosystems – such as the ‘Adaptive Cycle’ of Gunderson and Holling – pay explicit attention to temporal aspects and the different phases in which adaptation can take place, but stress that this adaptivity has its limits when confronted with hazards that are too numerous or too extensive.Footnote 73 According to this framework, adaptation within socio-ecological systems accommodates external disruption as easily as internal dysfunction, but over time the system becomes more rigid and difficult to adapt – leading to a lack of flexibility and ‘rigidity traps’ – which in turn creates a kind of tipping point or threshold that can lead to implosion from even the smallest of shocks or slightest disruption. Historians have indeed shown – for example in the case of success or failure in adapting to hurricanes – that rigid configurations of technologies or institutions can be difficult to transform, even in the wake of severe hazards.Footnote 74 More broadly speaking, recently support has gathered for historically informed approaches towards climate change adaptation, with greater attention paid to long-standing or even path-dependent norms and processes that drive or constrain successful or unsuccessful adaptation in particular social contexts.Footnote 75

Therefore, through a lens with more attention paid to temporality, historians can fundamentally complement and alter the current focus on adaptation. For one, historical research may help to redefine practices that on the surface appear ‘maladaptive.’ For example, it has been shown that the migration of pastoralists in times of drought should not be conceived negatively but instead offers long-standing and effective ways of sustaining livelihoods in the face of climate-related hazards.Footnote 76 Conversely, historical research may dampen current optimism about the possibility for adaptation. While ecosystems can perhaps indefinitely and automatically adapt, historians by investigating adaptation processes in the long term can show how human societies do not have the same logic. Some did adapt, while others maintained a rigid, ineffective, or even destructive institutional framework. By examining how adaptation and the problems to which we are adapting emerge over time, it also becomes possible to identify the level of action needed to reduce pre-existing vulnerabilities – whether this concerns incremental changes over extended periods or transformational change in the face of deep-rooted and recurring problems.Footnote 77

2.3.6 Risk

So far we have focused on physical exposure to natural hazards, and more significantly on issues with societal organization that lead to pre-existing vulnerabilities of certain populations, yet another fundamental element for understanding the occurrence and impact of disasters is ‘risk.’ Risk relates to human agency and perception, which guide the strategies deployed by individuals or groups to manage and calculate the potential occurrence of harm. The calculation of risk is often based on weighing up the possibilities, likelihood, and consequences of a number of outcomes, and preference for an outcome differs from context to context depending on the values of the parties involved – that is to say, it is highly subjective. So, in many pre-industrial contexts, for example, societies had to weigh up the risks of living in a particular environment. Some agricultural workers lived in agglomerated settlements – even when they were poor, cramped, and far from their fields – because to live isolated next to their lands exposed them to the risks of violence.Footnote 78 But this also depended on the weight of knowledge. Some people in late-medieval and early-modern Europe moved to cities – to find work and have access to urban amenities – but at the same time faced heightened risks of death through disease outbreaks – the so-called ‘urban penalty’ – yet, of course, ordinary people did not have equal knowledge about the likelihood of either outcome.

Time is also an important aspect of risk. According to Ulrich Beck, for example, risk has taken on whole new dimensions and meaning from the second half of the twentieth century – and is thus not directly comparable to earlier risks. Indeed, the increasing number of ‘technological’ disasters (from the Bhopal gas tragedy to the nuclear disaster in Chernobyl), and the obvious failure to predict and control natural variability and climate change, has apparently introduced a new kind of ‘risk society,’ where perfect control has been abandoned, but the management and accommodation of uncertainty remain center-stage.Footnote 79 This shift in risk perception is reflected in the evolution of private insurance schemes related to climatic risks: expanding during the twentieth century, but declining again at the dawn of the twenty-first, as the potential losses were increasingly deemed impossible to cover by private insurance schemes.Footnote 80

Risk used in this way can also be critiqued in two ways. First, some works have argued that risk is not a neutral concept, but that its use is determined by inequitable power relations. According to disaster scholars such as Greg Bankoff, the so-called Third World has been significantly ‘othered’ throughout time by its repeated associations with risk. During colonial times, colonized countries were seen as disease-ridden and in need of a civilization offensive. After World War II, the focus was on remedying poverty, but during the 1990s attention shifted to the ‘disaster-proneness’ of non-Western countries, which were labeled as risky environments and areas of disaster. In a way, our labeling of these regions as hazardous and risky also demonstrates a clash between two different types of risk societies: the one where risk as a frequent life experience has led to adaptation (as in several non-Western cases) and the one where risk was seen as needing to be controlled and later managed (as in the Western world).Footnote 81

Second, it is our view also that the perception of modern risk being inherently or intrinsically different from that of the pre-modern era is perhaps overstated slightly. Although perfect control may have been abandoned as a disaster philosophy in recent times – with the accommodation of uncertainty now more acceptable – it is clear that very few pre-industrial societies believed they could completely eradicate the possibility of experiencing both hazards and disasters. For many of them, the constant threat of certain hazards, and the management of their prevention and impact, was a central preoccupation, with the acceptance of natural hazards as ‘frequent life experience’ and continuous attempts to adapt both landscape and society to accommodate risk as well as possible.Footnote 82 Epidemics – once said to evoke total panic and breakdown – by the early-modern period at least became simply accepted as ‘normal’ characteristics of urban life, and while precautionary measures were developed, social transactions did not stop altogether. Peasant societies are often a prime example of this – both pre-industrial and contemporary – by shaping their survival strategies to deal with risks inherent to their way of life, but never eliminating them. In a much-cited article from 1976, D. McCloskey isolated this attitude towards risk as the distinctive element of subsistence-oriented societies.Footnote 83 Risk aversion characterized subsistence-farmers, who in order to guarantee the long-term survival of the family, tended to diversify income, crops, and plots, preferring a stable but low income to higher but less certain profit.Footnote 84

Still, it is important to point out that the ‘risk society’ model of hazard and disaster behavior is not found in every historical context. Commercial and capitalist societies in Europe, on the rise during the early-modern period, saw the development of a new and rational risk paradigm: one founded on a belief in the capacity of humans to control the ‘vagaries’ of nature, and based on the development of technological, financial, and institutional ‘improvements.’ Risk became a rational operation which could be calculated, predicted, and hence controlled.Footnote 85 In the modern tradition, risk became equated with opportunity, a sign of human progress. Originating in international trade, insurance contracts became the main institutional tool for dealing with nature-induced risks.Footnote 86 Accordingly then, we suggest that risk retains its place as an important concept for disasters in history – since, on the one hand, we should refrain from going as far as Beck to conceive of the distant past and the contemporary as two different and incomparable ‘worlds,’ and yet, on the other hand, we should recognize that risk could mean very different things over time – with its meaning often dictated by changes in the social distribution of resources and power.