646 results

VII - The Journal of Lieutenant Charles Knowles in the River Niger, 1864

- Book: The Naval Miscellany
- Published by: Boydell & Brewer
- Published online: 05 March 2024, pp. 273-326
- Chapter

Association of COVID-19 coinfection with increased mortality among patients with Pseudomonas aeruginosa bloodstream infection in the Veterans Health Administration system

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue 1 / 2023
- Published online by Cambridge University Press: 15 December 2023, e237
- Article
- Open access

Transdisciplinary Philosophy of Science: Meeting the Challenge of Indigenous Expertise

- Journal: Philosophy of Science
- Published online by Cambridge University Press: 04 October 2023, pp. 1-11
- Article
- Open access

VERTICO V: The environmentally driven evolution of the inner cold gas discs of Virgo cluster galaxies

- Journal: Publications of the Astronomical Society of Australia / Volume 40 / 2023
- Published online by Cambridge University Press: 27 April 2023, e017
- Article
- Open access

A lost campaign? New evidence of Roman temporary camps in northern Arabia

- Article

Strategies for exploration in the domain of losses

- Journal: Judgment and Decision Making / Volume 12 / Issue 2 / March 2017
- Published online by Cambridge University Press: 01 January 2023, pp. 104-117
- Article
- Open access

How to Think with the Global South

- Journal: Philosophy of Science / Volume 90 / Issue 1 / January 2023
- Published online by Cambridge University Press: 21 October 2022, pp. 209-217
- Print publication: January 2023
- Article
- Open access

Governance and Reform of the State: Signs of Progress?

- Journal: Latin American Research Review / Volume 41 / Issue 1 / February 2006
- Published online by Cambridge University Press: 05 October 2022, pp. 165-177
- Article

Longitudinal change in serial position scores in older adults with entorhinal and hippocampal neuropathologies

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue 6 / July 2023
- Published online by Cambridge University Press: 05 September 2022, pp. 561-571
- Article
- Open access

The Hierarchical Taxonomy of Psychopathology (HiTOP) in psychiatric practice and research

- Journal: Psychological Medicine / Volume 52 / Issue 9 / July 2022
- Published online by Cambridge University Press: 02 June 2022, pp. 1666-1678
- Article

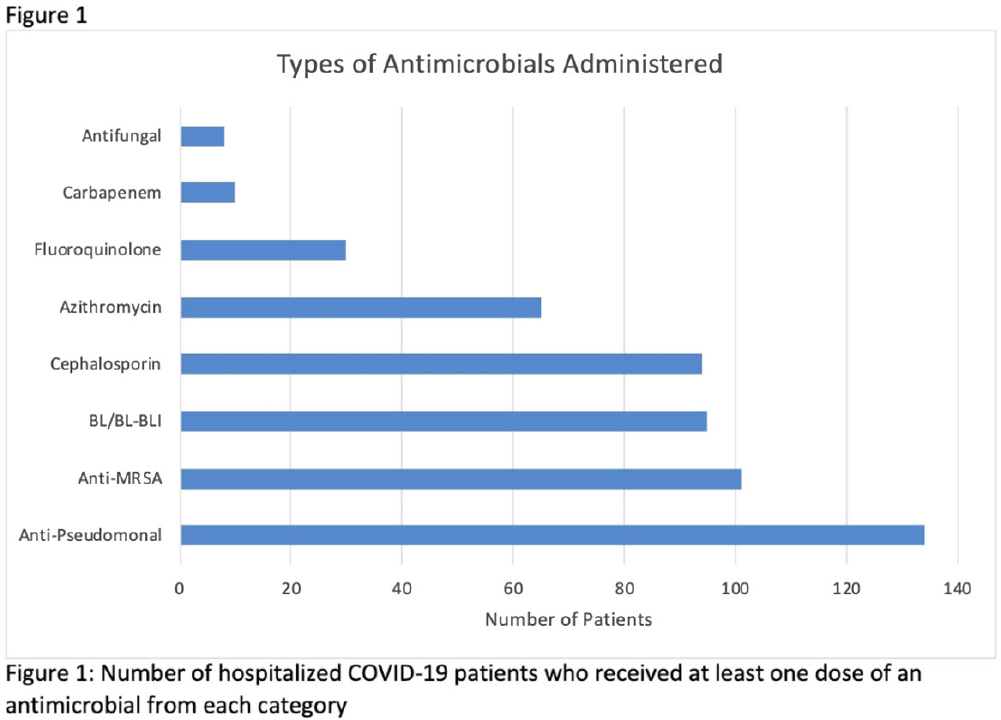

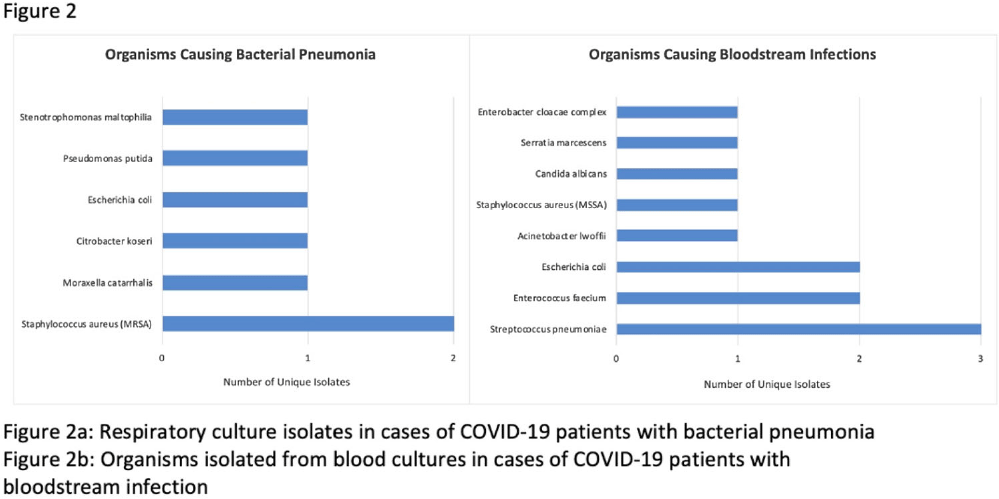

Coinfections in hospitalized COVID-19 patients are associated with high mortality: need for improved diagnostic tools

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022
- Published online by Cambridge University Press: 16 May 2022, pp. s7-s8
- Article
- Open access

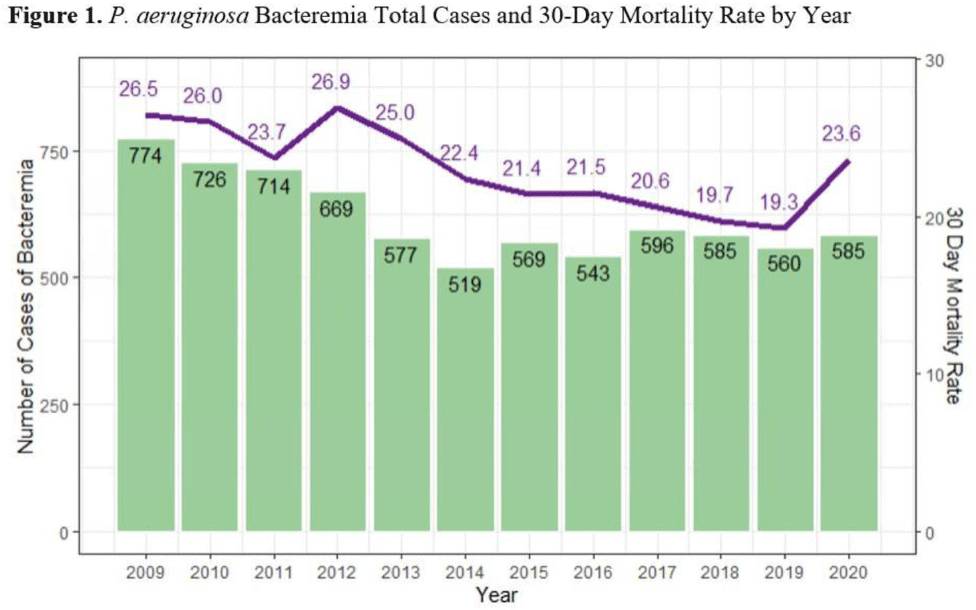

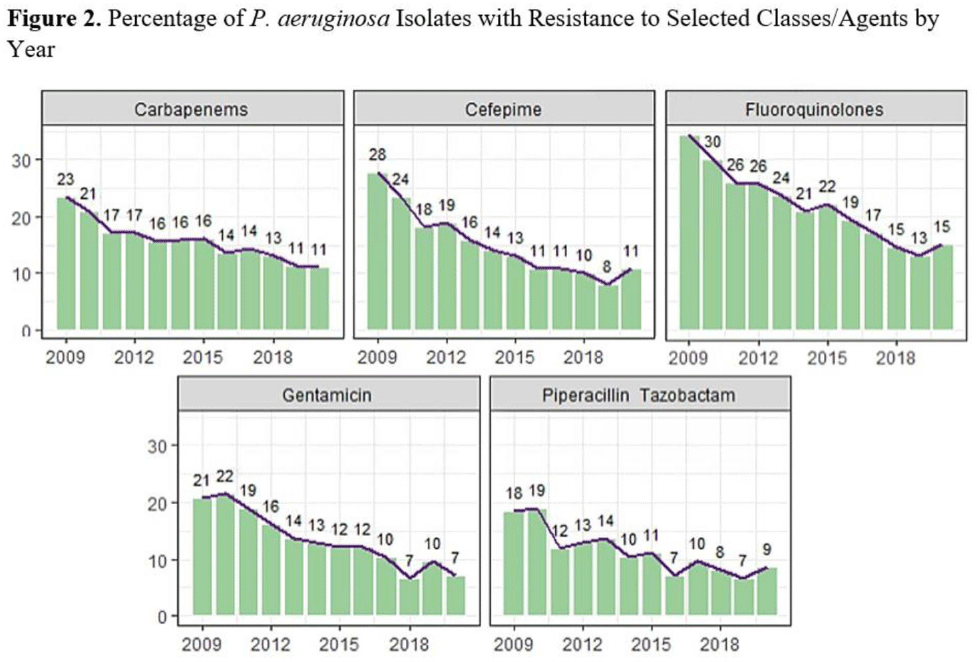

Pseudomonas aeruginosa bacteremia mortality and resistance trends in the Veterans’ Health Administration (VHA) system

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022
- Published online by Cambridge University Press: 16 May 2022, pp. s51-s52
- Article
- Open access

259 Proton pump inhibitor use is not significantly associated with severe COVID-19 related outcomes after extensive covariate adjustment

- Journal: Journal of Clinical and Translational Science / Volume 6 / Issue s1 / April 2022
- Published online by Cambridge University Press: 19 April 2022, p. 43
- Article
- Open access

Covid Conversations 5: Robert Wilson

- Journal: New Theatre Quarterly / Volume 38 / Issue 1 / February 2022
- Published online by Cambridge University Press: 03 February 2022, pp. 1-26
- Print publication: February 2022
- Article

Sexual and reproductive health needs assessment and interventions in a female psychiatric intensive care unit

- Journal: BJPsych Bulletin / Volume 47 / Issue 1 / February 2023
- Published online by Cambridge University Press: 16 November 2021, pp. 4-10
- Print publication: February 2023
- Article
- Open access

Relationship of Purpose in Life to Dementia in Older Black and White Brazilians

- Journal: Journal of the International Neuropsychological Society / Volume 28 / Issue 10 / November 2022
- Published online by Cambridge University Press: 19 October 2021, pp. 997-1002
- Article

Sexual and reproductive health needs assessment & interventions in a female psychiatric intensive care unit

- Journal: BJPsych Open / Volume 7 / Issue S1 / June 2021
- Published online by Cambridge University Press: 18 June 2021, pp. S47-S48
- Article
- Open access

The link between social and emotional isolation and dementia in older black and white Brazilians

- Journal: International Psychogeriatrics, First View
- Published online by Cambridge University Press: 15 June 2021, pp. 1-7
- Article

A REDCap-based model for online interventional research: Parent sleep education in autism

- Journal: Journal of Clinical and Translational Science / Volume 5 / Issue 1 / 2021
- Published online by Cambridge University Press: 14 June 2021, e138
- Article
- Open access

Influence of microbiological culture results on antibiotic choices for veterans with hospital-acquired pneumonia and ventilator-associated pneumonia

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 5 / May 2022
- Published online by Cambridge University Press: 04 June 2021, pp. 589-596
- Print publication: May 2022
- Article