Improving shared decision-making around antimicrobial-prescribing during the end-of-life period: a qualitative study of Veterans, their support caregivers and their providers

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue 1 / 2024
- Published online by Cambridge University Press: 17 May 2024, e89
- Article
- Open access

Spatiotemporal distribution of community-acquired phenotypic extended-spectrum beta-lactamase Escherichia coli in United States counties, 2010–2019

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 4 / April 2024
- Published online by Cambridge University Press: 11 December 2023, pp. 540-542
- Print publication: April 2024
- Article
- Open access

Persistence of potential ST398 MSSA in outpatient settings among US veterans, 2010–2019

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue 1 / 2023
- Published online by Cambridge University Press: 20 October 2023, e177
- Article
- Open access

Impacts of Hurricane Matthew Exposure on Infections and Antimicrobial Prescribing in North Carolina Veterans

- Journal: Disaster Medicine and Public Health Preparedness / Volume 17 / 2023
- Published online by Cambridge University Press: 20 March 2023, e357
-

- Article

-

- You have access

- Open access

- HTML

- Export citation

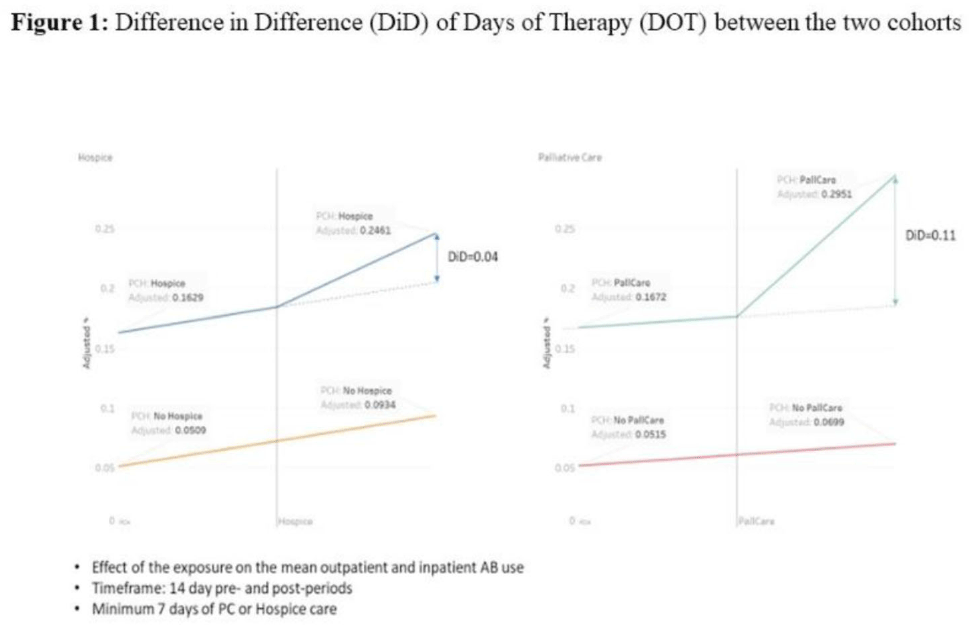

Antibiotic use in end-of-life care patients: A nationwide Veterans’ Health Administration cohort study

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022
- Published online by Cambridge University Press: 16 May 2022, pp. s19-s20
- Article
- Open access

Delays and declines in seasonal influenza vaccinations due to Hurricane Harvey narrow annual gaps in vaccination by race, income and rurality

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 12 / December 2022
- Published online by Cambridge University Press: 16 March 2022, pp. 1833-1839
- Print publication: December 2022
- Article
- Open access

Implementation of a surgical site infection prevention bundle: Patient adherence and experience

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 1 / Issue 1 / 2021
- Published online by Cambridge University Press: 10 December 2021, e63
- Article
- Open access

Acceptability and effectiveness of antimicrobial stewardship implementation strategies on fluoroquinolone prescribing

- Journal: Infection Control & Hospital Epidemiology / Volume 42 / Issue 11 / November 2021
- Published online by Cambridge University Press: 12 April 2021, pp. 1361-1368
- Print publication: November 2021
- Article
- Open access

Development of a fully automated surgical site infection detection algorithm for use in cardiac and orthopedic surgery research

- Journal: Infection Control & Hospital Epidemiology / Volume 42 / Issue 10 / October 2021
- Published online by Cambridge University Press: 23 February 2021, pp. 1215-1220
- Print publication: October 2021
- Article
- Open access

Insertion site inflammation was associated with central-line–associated bloodstream infections at a tertiary-care center, 2015–2018

- Journal: Infection Control & Hospital Epidemiology / Volume 42 / Issue 3 / March 2021
- Published online by Cambridge University Press: 09 October 2020, pp. 348-350
- Print publication: March 2021
- Article

Validation of a Surgical Site Infection Detection Algorithm for Use in Cardiac and Orthopedic Surgery Research

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, pp. s55-s56
- Print publication: October 2020
- Article

Effectiveness of Ultraviolet-C Room Disinfection on Preventing Healthcare-Associated Clostridioides difficile Infection

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, p. s33
- Print publication: October 2020
- Article

Insertion Site Inflammation Is Associated with Central-Line–Associated Bloodstream Infection

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020
- Published online by Cambridge University Press: 02 November 2020, pp. s302-s303
- Print publication: October 2020
- Article

Conditional reflex to urine culture: Evaluation of a diagnostic stewardship intervention within the Veterans’ Affairs and Centers for Disease Control and Prevention Practice-Based Research Network

- Journal: Infection Control & Hospital Epidemiology / Volume 42 / Issue 2 / February 2021
- Published online by Cambridge University Press: 25 August 2020, pp. 176-181
- Print publication: February 2021
- Article

Testing a novel audit and feedback method for hand hygiene compliance: A multicenter quality improvement study

- Journal: Infection Control & Hospital Epidemiology / Volume 40 / Issue 1 / January 2019
- Published online by Cambridge University Press: 15 November 2018, pp. 89-94
- Print publication: January 2019
- Article