Comparative epidemiology of hospital-onset bloodstream infections (HOBSIs) and central line-associated bloodstream infections (CLABSIs) across a three-hospital health system

- Journal: Infection Control & Hospital Epidemiology, First View

- Published online by Cambridge University Press: 20 March 2024, pp. 1-7

- Article

- Open access

Identification of carbapenem-resistant organism (CRO) contamination of in-room sinks in intensive care units in a new hospital bed tower

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 3 / March 2024

- Published online by Cambridge University Press: 19 January 2024, pp. 302-309

- Print publication: March 2024

- Article

- Open access

A cluster of three extrapulmonary Mycobacterium abscessus infections linked to well-maintained water-based heater-cooler devices

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 5 / May 2024

- Published online by Cambridge University Press: 21 December 2023, pp. 644-650

- Print publication: May 2024

- Article

- Open access

90 School-based Implementation of Educational and Neurocognitive Interventions in Children with Neurodevelopmental Disorders.

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 190-191

- Article

83 Efficacy of a Tablet-Based Cognitive Flexibility Intervention in Youth with Executive Function Deficits

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 184-185

- Article

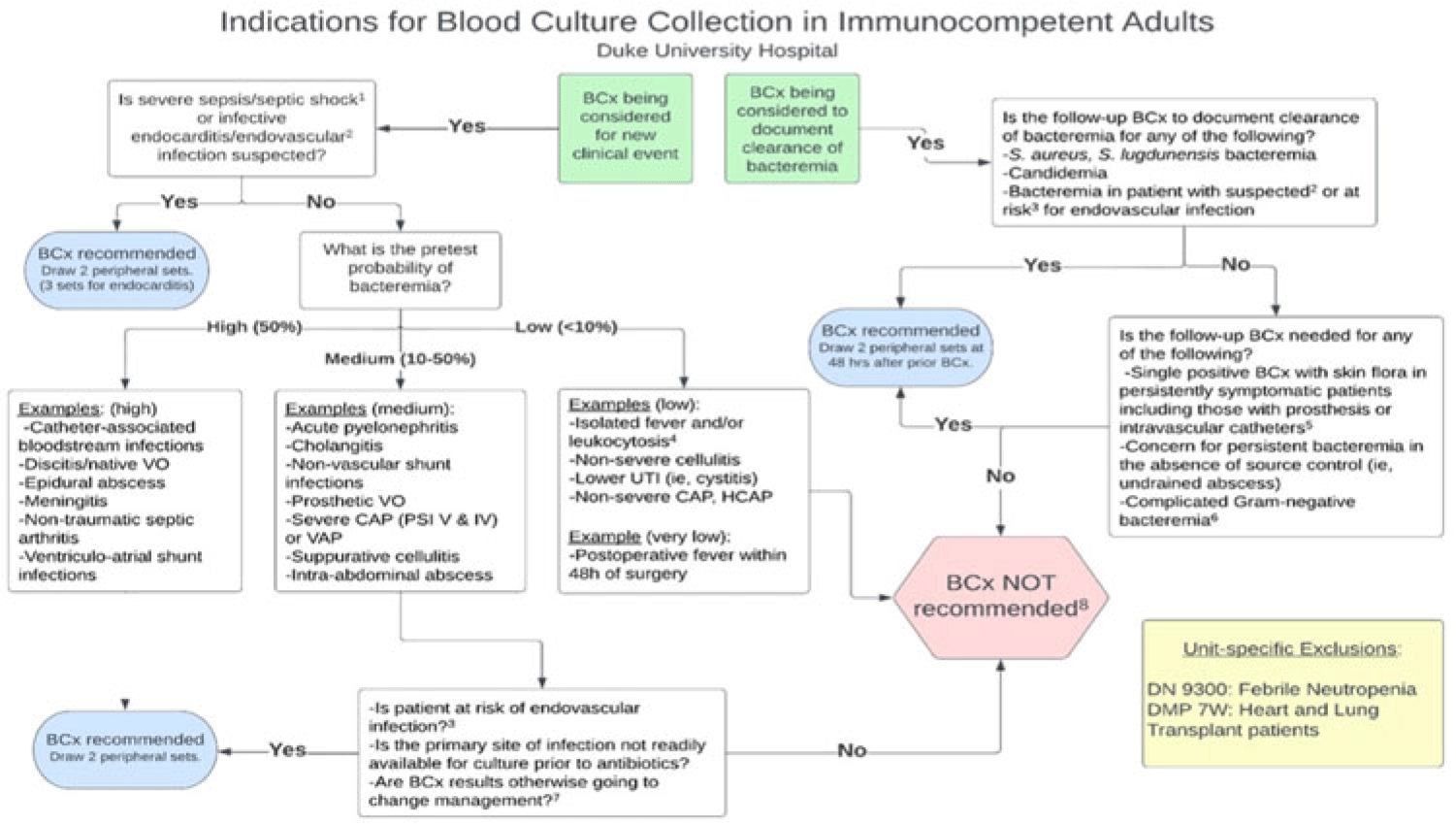

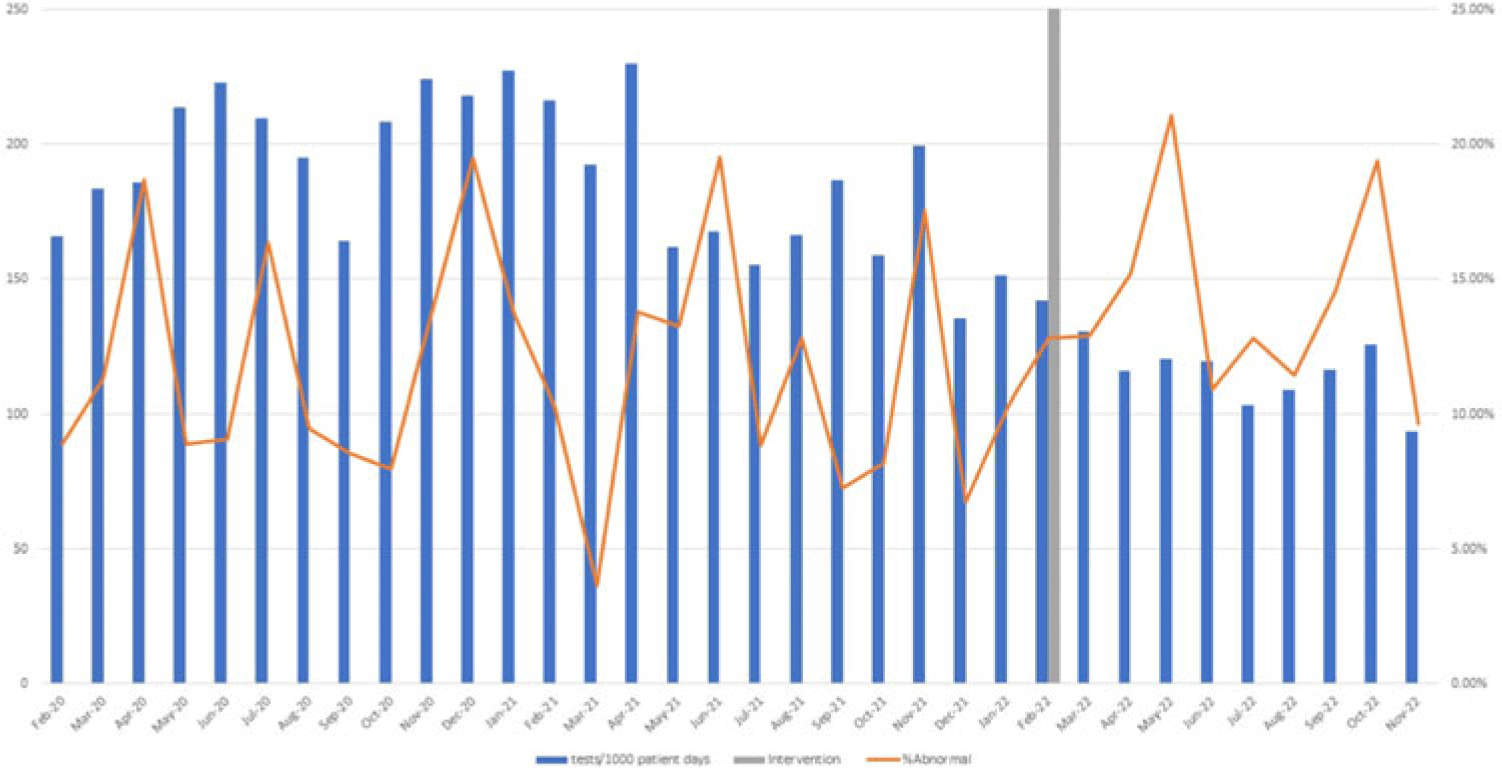

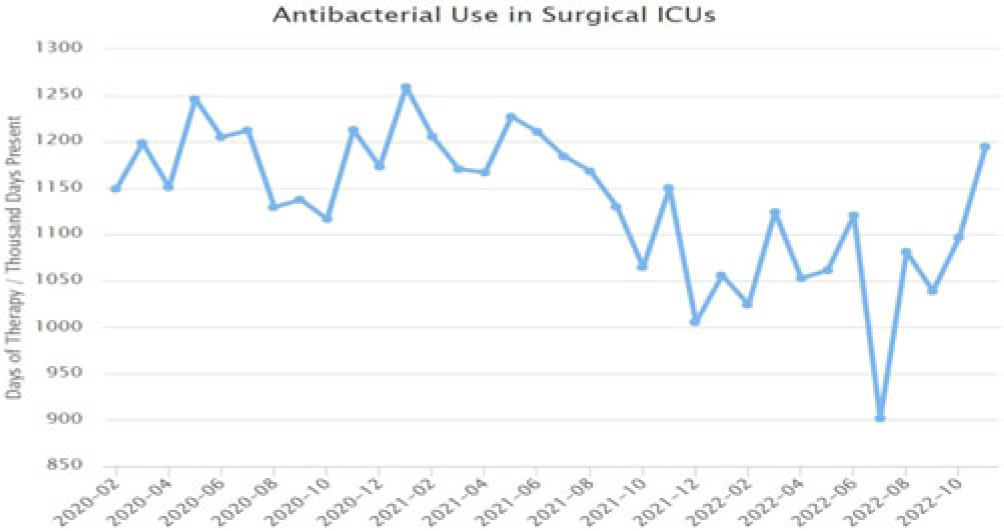

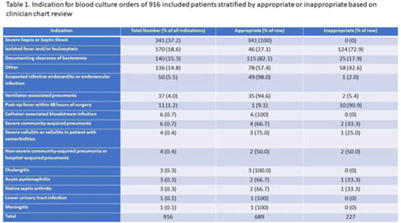

Implementation of a diagnostic stewardship intervention to improve blood-culture utilization in 2 surgical ICUs: Time for a blood-culture change

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 4 / April 2024

- Published online by Cambridge University Press: 11 December 2023, pp. 452-458

- Print publication: April 2024

- Article

- Open access

Implementation of diagnostic stewardship in two surgical ICUs: Time for a blood-culture change

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press: 29 September 2023, pp. s9-s10

- Article

- Open access

Optimising motor development in the hospitalised infant with CHD: factors contributing to early motor challenges and recommendations for assessment and intervention

- Journal: Cardiology in the Young / Volume 33 / Issue 10 / October 2023

- Published online by Cambridge University Press: 20 September 2023, pp. 1800-1812

- Article

Disinfection efficacy of Oxivir TB wipe residue on severe acute respiratory coronavirus virus 2 (SARS-CoV-2)

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 11 / November 2023

- Published online by Cambridge University Press: 19 July 2023, pp. 1891-1893

- Print publication: November 2023

- Article

- Open access

Examining the ontogeny of the Pennsylvanian cladid crinoid Erisocrinus typus Meek and Worthen, 1865

- Journal: Journal of Paleontology / Volume 97 / Issue 4 / July 2023

- Published online by Cambridge University Press: 13 July 2023, pp. 906-913

- Article

- Open access

Preliminary evaluation of a smartphone application (DelApp) for identification of delirium in sub-Saharan Africa

- Journal: Acta Neuropsychiatrica, First View

- Published online by Cambridge University Press: 22 June 2023, pp. 1-9

- Article

- Open access

Developing relevant assessments of community-engaged research partnerships: A community-based participatory approach to evaluating clinical and health research study teams

- Journal: Journal of Clinical and Translational Science / Volume 7 / Issue 1 / 2023

- Published online by Cambridge University Press: 11 May 2023, e123

- Article

- Open access

Cerebrospinal fluid neurofilament light predicts longitudinal diagnostic change in patients with psychiatric and neurodegenerative disorders

- Journal: Acta Neuropsychiatrica / Volume 36 / Issue 1 / February 2024

- Published online by Cambridge University Press: 28 April 2023, pp. 17-28

- Article

- Open access

Disparities in central line-associated bloodstream infection and catheter-associated urinary tract infection rates: An exploratory analysis

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 11 / November 2023

- Published online by Cambridge University Press: 14 April 2023, pp. 1857-1860

- Print publication: November 2023

- Article

- Open access

Using clinical decision support to improve urine testing and antibiotic utilization

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 10 / October 2023

- Published online by Cambridge University Press: 29 March 2023, pp. 1582-1586

- Print publication: October 2023

- Article

- Open access

Central-line–associated bloodstream infections secondary to anaerobes: Time for a definition change?

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 10 / October 2023

- Published online by Cambridge University Press: 03 February 2023, pp. 1697-1698

- Print publication: October 2023

- Article

Therapeutic monitoring of plasma clozapine and N-desmethylclozapine (norclozapine): practical considerations

- Journal: BJPsych Advances / Volume 29 / Issue 2 / March 2023

- Published online by Cambridge University Press: 27 January 2023, pp. 92-102

- Print publication: March 2023

- Article

Colon surgical-site infections and the impact of “present at the time of surgery (PATOS)” in a large network of community hospitals

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 8 / August 2023

- Published online by Cambridge University Press: 22 September 2022, pp. 1255-1260

- Print publication: August 2023

- Article

- Open access

Surgical site infection trends in community hospitals from 2013 to 2018

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 4 / April 2023

- Published online by Cambridge University Press: 18 July 2022, pp. 610-615

- Print publication: April 2023

- Article

The impact of a comprehensive coronavirus disease 2019 (COVID-19) infection prevention bundle on non–COVID-19 hospital-acquired respiratory viral infection (HA-RVI) rates

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 6 / June 2023

- Published online by Cambridge University Press: 02 June 2022, pp. 1022-1024

- Print publication: June 2023

- Article