218 results

Scaling up the task-sharing of psychological therapies: A formative study of the PEERS smartphone application for supervision and quality assurance in rural India

- Journal: Cambridge Prisms: Global Mental Health / Volume 11 / 2024

- Published online by Cambridge University Press: 05 February 2024, e20

- Article

- Open access

Subsurface scientific exploration of extraterrestrial environments (MINAR 5): analogue science, technology and education in the Boulby Mine, UK – CORRIGENDUM

- Journal: International Journal of Astrobiology / Volume 23 / 2024

- Published online by Cambridge University Press: 06 November 2023, e2

- Article

Challenges and Factors Affecting Child, Adolescents, Young Adults, and Their Parents in Returning to School After Remote Learning in COVID Pandemic

- Journal: European Psychiatry / Volume 66 / Issue S1 / March 2023

- Published online by Cambridge University Press: 19 July 2023, pp. S406-S407

- Article

- Open access

A parametric design approach for multi-lobed hybrid airships

- Journal: The Aeronautical Journal / Volume 128 / Issue 1319 / January 2024

- Published online by Cambridge University Press: 11 May 2023, pp. 1-36

- Article

- Open access

Glacier inventory and glacier changes (1994–2020) in the Upper Alaknanda Basin, Central Himalaya

- Journal: Journal of Glaciology / Volume 69 / Issue 275 / June 2023

- Published online by Cambridge University Press: 13 December 2022, pp. 591-606

- Article

- Open access

Estimating severe acute respiratory coronavirus virus 2 (SARS-CoV-2) seroprevalence from residual clinical blood samples, January–March 2021

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue 1 / 2022

- Published online by Cambridge University Press: 22 September 2022, e159

- Article

- Open access

Bullying victimization in children and adolescents and its impact on academic outcomes

- Journal: European Psychiatry / Volume 65 / Issue S1 / June 2022

- Published online by Cambridge University Press: 01 September 2022, p. S144

- Article

- Open access

Bright Light Therapy for MDD in Children and Adolescents: a narrative review of literature

- Journal: European Psychiatry / Volume 65 / Issue S1 / June 2022

- Published online by Cambridge University Press: 01 September 2022, p. S554

- Article

- Open access

Decreased mortality in coronavirus disease 2019 associated mucormycosis with aspirin use: a retrospective cohort study

- Journal: The Journal of Laryngology & Otology / Volume 136 / Issue 12 / December 2022

- Published online by Cambridge University Press: 14 June 2022, pp. 1309-1313

- Print publication: December 2022

- Article

Feasibility, acceptability and evaluation of meditation to augment yoga practice among persons diagnosed with schizophrenia

- Journal: Acta Neuropsychiatrica / Volume 34 / Issue 6 / December 2022

- Published online by Cambridge University Press: 19 May 2022, pp. 330-343

- Article

These Are Not the Droids You Are Looking For: Mechanical Variant of Cotard’s Syndrome

- Journal: CNS Spectrums / Volume 27 / Issue 2 / April 2022

- Published online by Cambridge University Press: 28 April 2022, p. 228

- Article

Preliminary assessment of paediatric electrophysiology cardiac implantable electronic device resources around the world

- Journal: Cardiology in the Young / Volume 32 / Issue 12 / December 2022

- Published online by Cambridge University Press: 01 April 2022, pp. 1989-1993

- Article

Here Comes Everybody: Using a Data Cooperative to Understand the New Dynamics of Representation

- Journal: PS: Political Science & Politics / Volume 55 / Issue 2 / April 2022

- Published online by Cambridge University Press: 31 March 2022, pp. 300-302

- Print publication: April 2022

- Article

Key learnings from Institute for Clinical and Economic Review’s real-world evidence reassessment pilot

- Journal: International Journal of Technology Assessment in Health Care / Volume 38 / Issue 1 / 2022

- Published online by Cambridge University Press: 14 March 2022, e32

- Article

- Open access

Overview and risk factors for postcraniotomy surgical site infection: A four-year experience

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue 1 / 2022

- Published online by Cambridge University Press: 31 January 2022, e14

- Article

- Open access

Occupational exposure to severe acute respiratory coronavirus virus 2 (SARS-CoV-2) and risk of infection among healthcare personnel

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 12 / December 2022

- Published online by Cambridge University Press: 06 January 2022, pp. 1785-1789

- Print publication: December 2022

- Article

- Open access

Computed tomography analysis of the anterior ethmoid genu of the frontal recess in non-diseased sinuses

- Journal: The Journal of Laryngology & Otology / Volume 137 / Issue 2 / February 2023

- Published online by Cambridge University Press: 20 December 2021, pp. 169-173

- Print publication: February 2023

- Article

Prevalence of latent structural heart disease in Nepali schoolchildren

- Journal: Cardiology in the Young / Volume 32 / Issue 7 / July 2022

- Published online by Cambridge University Press: 04 November 2021, pp. 1151-1153

- Article

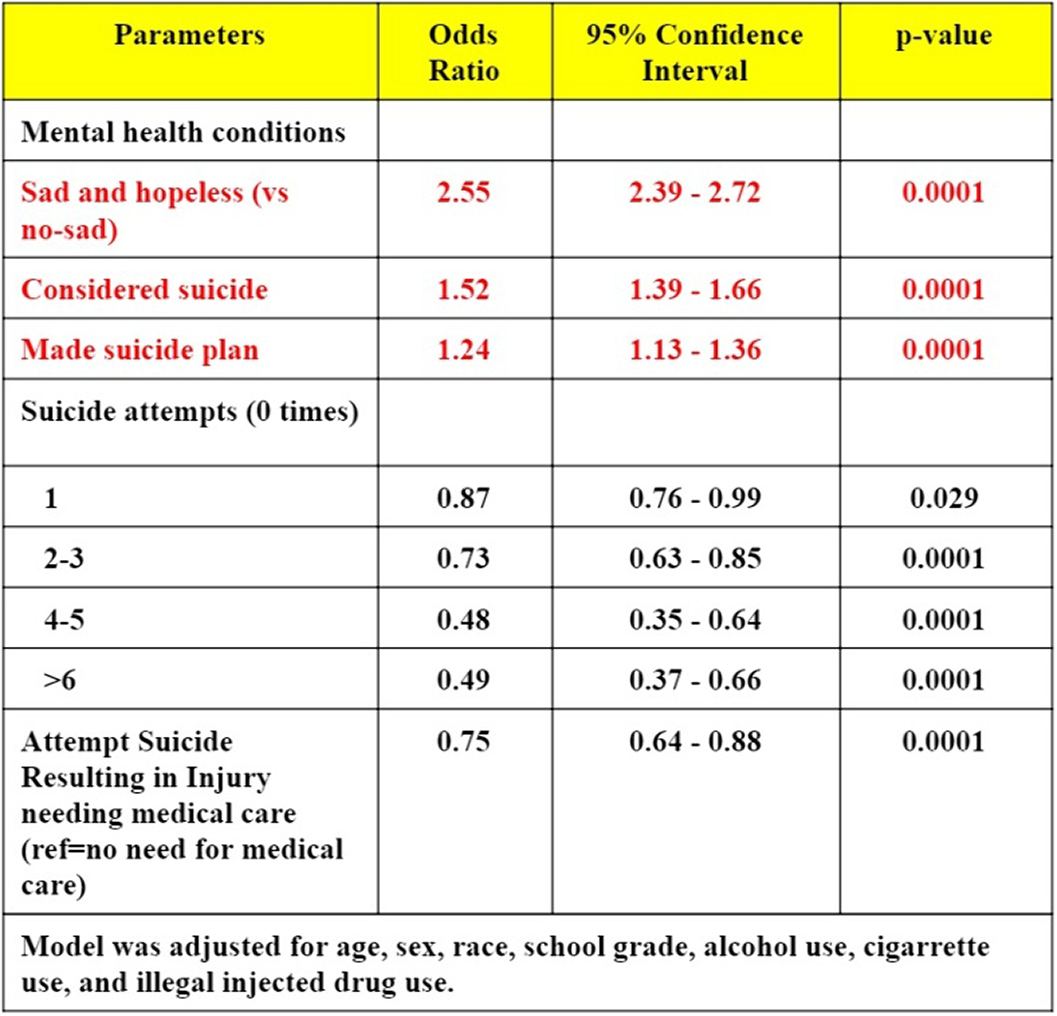

Mood and suicidality amongst cyberbullied adolescents- a cross-sectional study from youth risk behavior survey

- Journal: European Psychiatry / Volume 64 / Issue S1 / April 2021

- Published online by Cambridge University Press: 13 August 2021, pp. S85-S86

- Article

- Open access

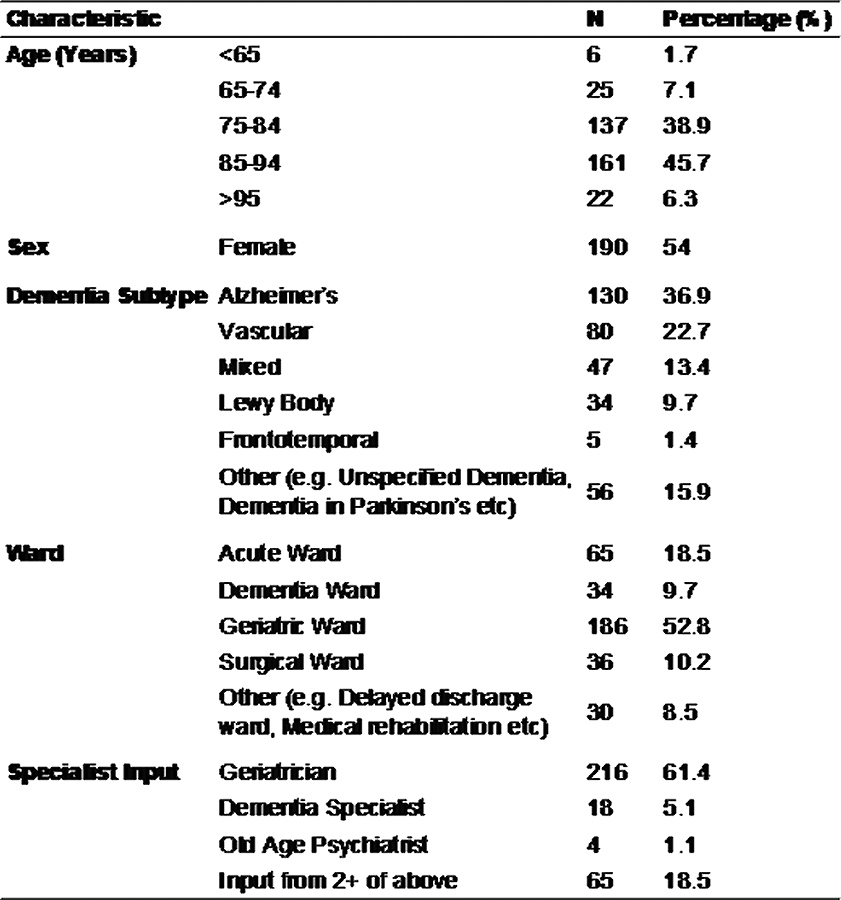

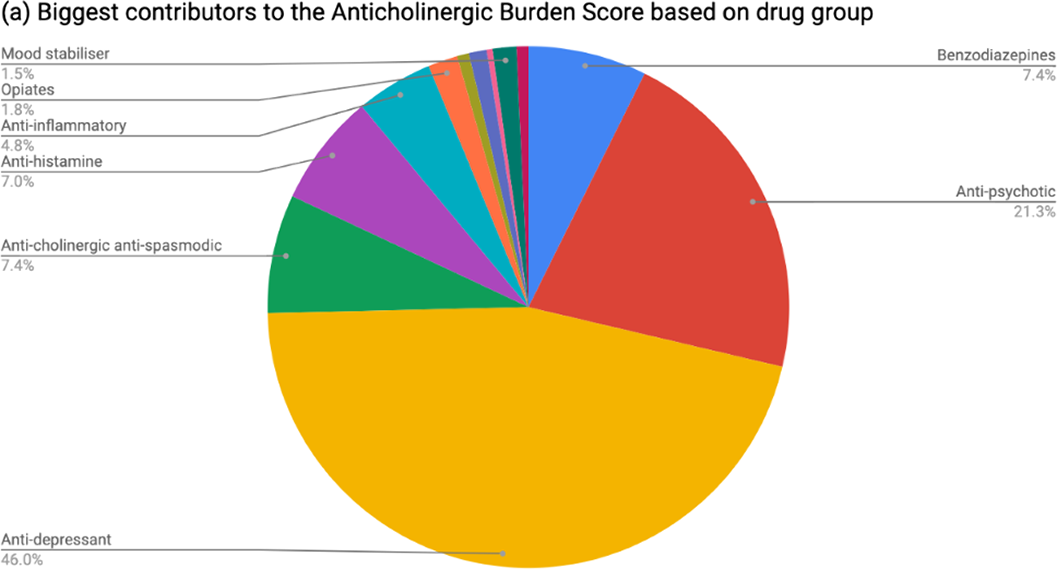

Dementia patients have greater anti-cholinergic drug burden on discharge from hospital: A multicentre cross-sectional study

- Journal: European Psychiatry / Volume 64 / Issue S1 / April 2021

- Published online by Cambridge University Press: 13 August 2021, pp. S422-S423

- Article

- Open access