14 results

Trends of antibiotic use at the end-of-life of cancer and non-cancer decedents: a nationwide population-based longitudinal study (2006–2018)

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue 1 / 2024

- Published online by Cambridge University Press: 13 May 2024, e83

- Article

- Open access

Antibiotic prescribing behavior among physicians in Asia: a multinational survey

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue 1 / 2023

- Published online by Cambridge University Press: 29 June 2023, e112

- Article

- Open access

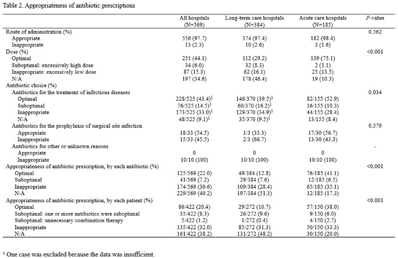

Prescriptions patterns and appropriateness of usage of antibiotics in small and medium-sized hospitals in Korea

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022

- Published online by Cambridge University Press: 16 May 2022, p. s19

- Article

- Open access

Rapid diagnostic testing for antimicrobial stewardship: Utility in Asia Pacific

- Journal: Infection Control & Hospital Epidemiology / Volume 42 / Issue 7 / July 2021

- Published online by Cambridge University Press: 15 June 2021, pp. 864-868

- Print publication: July 2021

- Article

- Open access

Human resources required for antimicrobial stewardship activities for hospitalized patients in Korea

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue 12 / December 2020

- Published online by Cambridge University Press: 26 October 2020, pp. 1429-1435

- Print publication: December 2020

- Article

Evaluation of Patients’ Adverse Events Associated With Contact Isolation: Matched Cohort Study With Propensity Score

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020

- Published online by Cambridge University Press: 02 November 2020, pp. s476-s478

- Print publication: October 2020

- Article

Impact on National Policy on the Hand Hygiene Promotion Activities in Hospitals in Korea

- Journal: Infection Control & Hospital Epidemiology / Volume 41 / Issue S1 / October 2020

- Published online by Cambridge University Press: 02 November 2020, p. s267

- Print publication: October 2020

- Article

Decreased hemoglobin levels, cerebral small-vessel disease, and cortical atrophy: among cognitively normal elderly women and men

- Journal: International Psychogeriatrics / Volume 28 / Issue 1 / January 2016

- Published online by Cambridge University Press: 20 May 2015, pp. 147-156

- Article

Differences in the Risk Factors for Surgical Site Infection between Total Hip Arthroplasty and Total Knee Arthroplasty in the Korean Nosocomial Infections Surveillance System (KONIS)

- Journal: Infection Control & Hospital Epidemiology / Volume 33 / Issue 11 / November 2012

- Published online by Cambridge University Press: 02 January 2015, pp. 1086-1093

- Print publication: November 2012

- Article

Prospective Nationwide Surveillance of Surgical Site Infections after Gastric Surgery and Risk Factor Analysis in the Korean Nosocomial Infections Surveillance System (KONIS)

- Journal: Infection Control & Hospital Epidemiology / Volume 33 / Issue 6 / June 2012

- Published online by Cambridge University Press: 02 January 2015, pp. 572-580

- Print publication: June 2012

- Article

Clinical Epidemiology of Ciprofloxacin Resistance and Its Relationship to Broad-Spectrum Cephalosporin Resistance in Bloodstream Infections Caused by Enterobacter Species

- Journal: Infection Control & Hospital Epidemiology / Volume 26 / Issue 1 / January 2005

- Published online by Cambridge University Press: 21 June 2016, pp. 88-92

- Print publication: January 2005

- Article

Risk Factors for and Clinical Outcomes of Bloodstream Infections Caused by Extended-Spectrum Beta-Lactamase-Producing Klebsiella pneumoniae

- Journal: Infection Control & Hospital Epidemiology / Volume 25 / Issue 10 / October 2004

- Published online by Cambridge University Press: 02 January 2015, pp. 860-867

- Print publication: October 2004

- Article

Characterization of Carbon Nanotubes/Cu Nanocomposites Processed by Using Nano-sized Cu Powders

- Journal: MRS Online Proceedings Library Archive / Volume 821 / 2004

- Published online by Cambridge University Press: 15 March 2011, P3.25

- Print publication: 2004

- Article

Outcomes of Hickman Catheter Salvage in Febrile Neutropenic Cancer Patients With Staphylococcus aureus Bacteremia

- Journal: Infection Control & Hospital Epidemiology / Volume 24 / Issue 12 / December 2003

- Published online by Cambridge University Press: 02 January 2015, pp. 897-904

- Print publication: December 2003

- Article