439 results

81 A rapid-cycle application of the Consolidated Framework for Implementation Research allows timely identification of barriers and facilitators to implementing the World Health Organization’s Emergency Care Toolkit in Zambia

- Journal: Journal of Clinical and Translational Science / Volume 8 / Issue s1 / April 2024

- Published online by Cambridge University Press: 03 April 2024, pp. 21-22

- Article

- Open access

Fusion imaging for guidance of pulmonary arteriovenous malformation embolisation with minimal radiation and contrast exposure

- Journal: Cardiology in the Young, First View

- Published online by Cambridge University Press: 01 March 2024, pp. 1-5

- Article

- Export citation

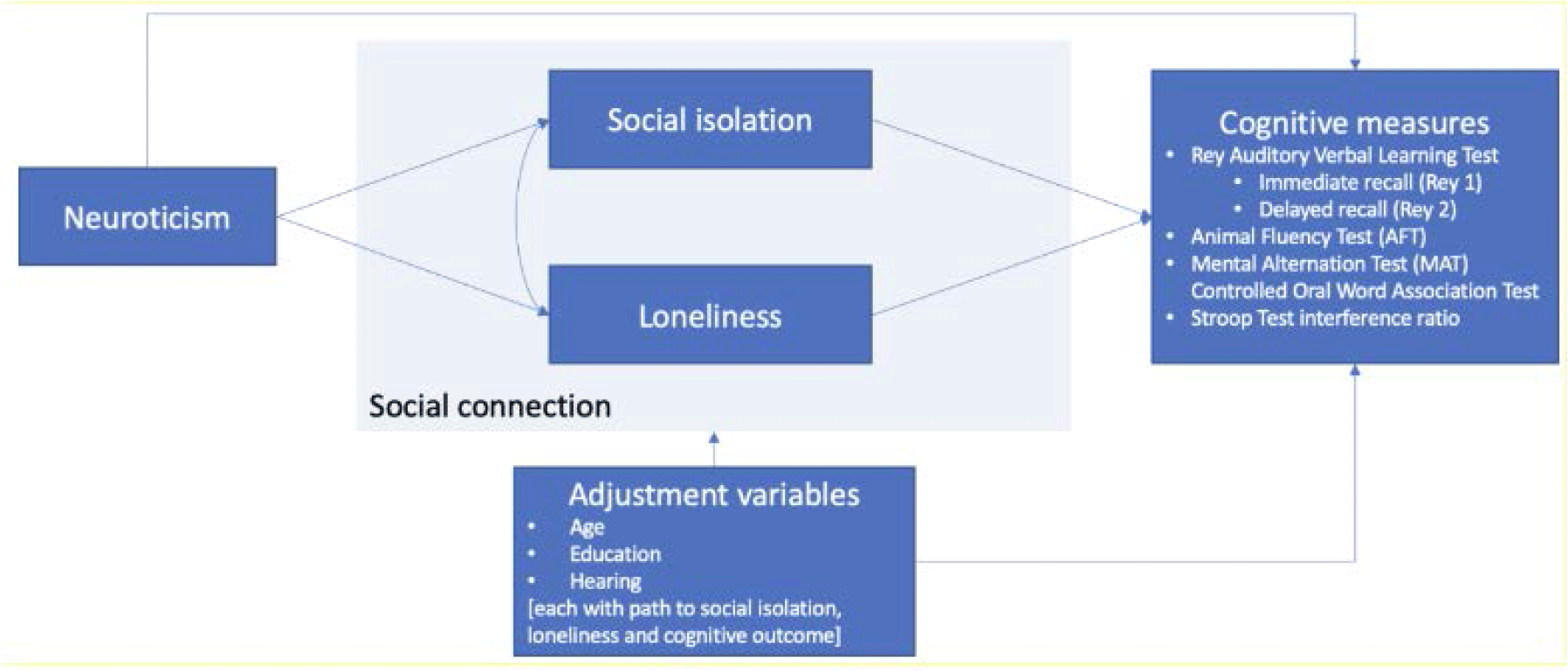

FC30: The relationships between neuroticism, social connection and cognition

- Journal: International Psychogeriatrics / Volume 35 / Issue S1 / December 2023

- Published online by Cambridge University Press: 02 February 2024, pp. 92-94

- Article

Variation of subclinical psychosis across 16 sites in Europe and Brazil: findings from the multi-national EU-GEI study

- Journal: Psychological Medicine, First View

- Published online by Cambridge University Press: 30 January 2024, pp. 1-14

- Article

- Open access

Collateral effects of Coping Power on caregiver symptoms of depression and long-term changes in child behavior

- Journal: Development and Psychopathology, First View

- Published online by Cambridge University Press: 05 January 2024, pp. 1-13

- Article

- Open access

30 Examining the Base Rates of Low Scores in Older Adults with Subjective Cognitive Impairment from a Specialist Memory Clinic

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 711-712

- Article

4 Methamphetamine, cannabis, HIV, and their combined effects on neurocognition

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 797-798

- Article

47 The Impact of COVID-19 Infection on Objective and Subjective Cognitive Functioning: Resilience as a Protective Factor

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 44-45

- Article

Pre-Clinical Mobility Limitation (PCML) Outcomes in Rehabilitation Interventions for Middle-Aged and Older Adults: A Scoping Review

- Journal: Canadian Journal on Aging / La Revue canadienne du vieillissement, First View

- Published online by Cambridge University Press: 20 November 2023, pp. 1-12

- Article

- Open access

Demographics of Palmer amaranth (Amaranthus palmeri) in annual and perennial cover crops

- Journal: Weed Science / Volume 72 / Issue 1 / January 2024

- Published online by Cambridge University Press: 17 November 2023, pp. 96-107

- Article

- Open access

5.18 - Brain Networks and Dysconnectivity

- from 5 - Neural Circuits

- Book: Cambridge Textbook of Neuroscience for Psychiatrists

- Published online: 08 November 2023

- Print publication: 16 November 2023, pp. 279-285

- Chapter

Wellbeing Wednesdays: a pilot trial of acceptance and commitment therapy embedded in a freshman seminar

- Journal: The Cognitive Behaviour Therapist / Volume 16 / 2023

- Published online by Cambridge University Press: 06 October 2023, e27

- Article

Cannabis use may attenuate neurocognitive performance deficits resulting from methamphetamine use disorder

- Journal: Journal of the International Neuropsychological Society / Volume 30 / Issue 1 / January 2024

- Published online by Cambridge University Press: 09 August 2023, pp. 84-93

- Article

Geochronology and formal stratigraphy of the Sturtian Glaciation in the Adelaide Superbasin

- Journal: Geological Magazine / Volume 160 / Issue 7 / July 2023

- Published online by Cambridge University Press: 20 July 2023, pp. 1321-1344

- Article

- Open access

Implementing diagnostic stewardship to improve diagnosis of urinary tract infections across three medical centers: A qualitative assessment

- Journal: Infection Control & Hospital Epidemiology / Volume 44 / Issue 12 / December 2023

- Published online by Cambridge University Press: 10 July 2023, pp. 1932-1941

- Print publication: December 2023

- Article

Modeling the potential distribution of the threatened Grey-necked Picathartes Picathartes oreas across its entire range

- Journal: Bird Conservation International / Volume 33 / 2023

- Published online by Cambridge University Press: 15 June 2023, e65

- Article

A pilot study of experiencing racial microaggressions, obsessive-compulsive symptoms, and the role of psychological flexibility

- Journal: Behavioural and Cognitive Psychotherapy / Volume 51 / Issue 5 / September 2023

- Published online by Cambridge University Press: 25 May 2023, pp. 396-413

- Print publication: September 2023

- Article

Chapter 17 - Inscriptions on the Capitoline: Epigraphy and Cultural Memory in Livy

- from Part III - Building Cultural Memory

- Book: Cultural Memory in Republican and Augustan Rome

- Published online: 27 April 2023

- Print publication: 11 May 2023, pp. 294-312

- Chapter

Cannabis use as a potential mediator between childhood adversity and first-episode psychosis: results from the EU-GEI case–control study

- Journal: Psychological Medicine / Volume 53 / Issue 15 / November 2023

- Published online by Cambridge University Press: 04 May 2023, pp. 7375-7384

- Article

- Open access

The association between reasons for first using cannabis, later pattern of use, and risk of first-episode psychosis: the EU-GEI case–control study

- Journal: Psychological Medicine / Volume 53 / Issue 15 / November 2023

- Published online by Cambridge University Press: 02 May 2023, pp. 7418-7427

- Article

- Open access
