Anomalous 13C enrichment in Mesozoic vertebrate enamel reflects environmental conditions in a “vanished world” and not a unique dietary physiology

- Journal: Paleobiology / Volume 49 / Issue 3 / August 2023
- Published online by Cambridge University Press: 13 January 2023, pp. 563-577
- Article
- Open access

Structural racism in healthcare and research: A community-led model of curriculum development and implementation

- Journal: Journal of Clinical and Translational Science / Volume 7 / Issue 1 / 2023
- Published online by Cambridge University Press: 09 November 2022, e18
- Article
- Open access

Improved Throughput, Statistics, and Instrument Utilization with Automated Analytical Electron Microscopy

- Journal: Microscopy and Microanalysis / Volume 28 / Issue S1 / August 2022
- Published online by Cambridge University Press: 22 July 2022, pp. 2918-2919
- Print publication: August 2022
- Article

Tracking Degradation in Individual Catalyst Nanoparticles Under Fuel Cell-Relevant Cycling Conditions by Identical-Location STEM

- Journal: Microscopy and Microanalysis / Volume 28 / Issue S1 / August 2022
- Published online by Cambridge University Press: 22 July 2022, pp. 2614-2617
- Print publication: August 2022
- Article

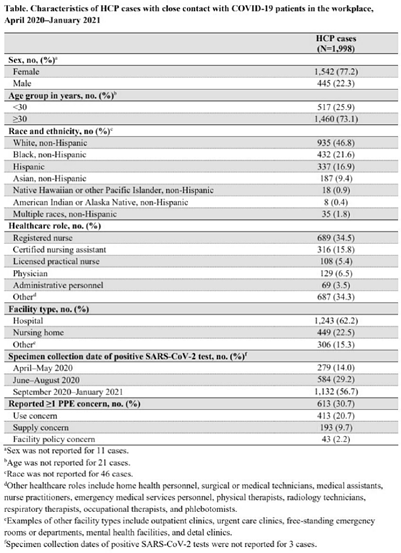

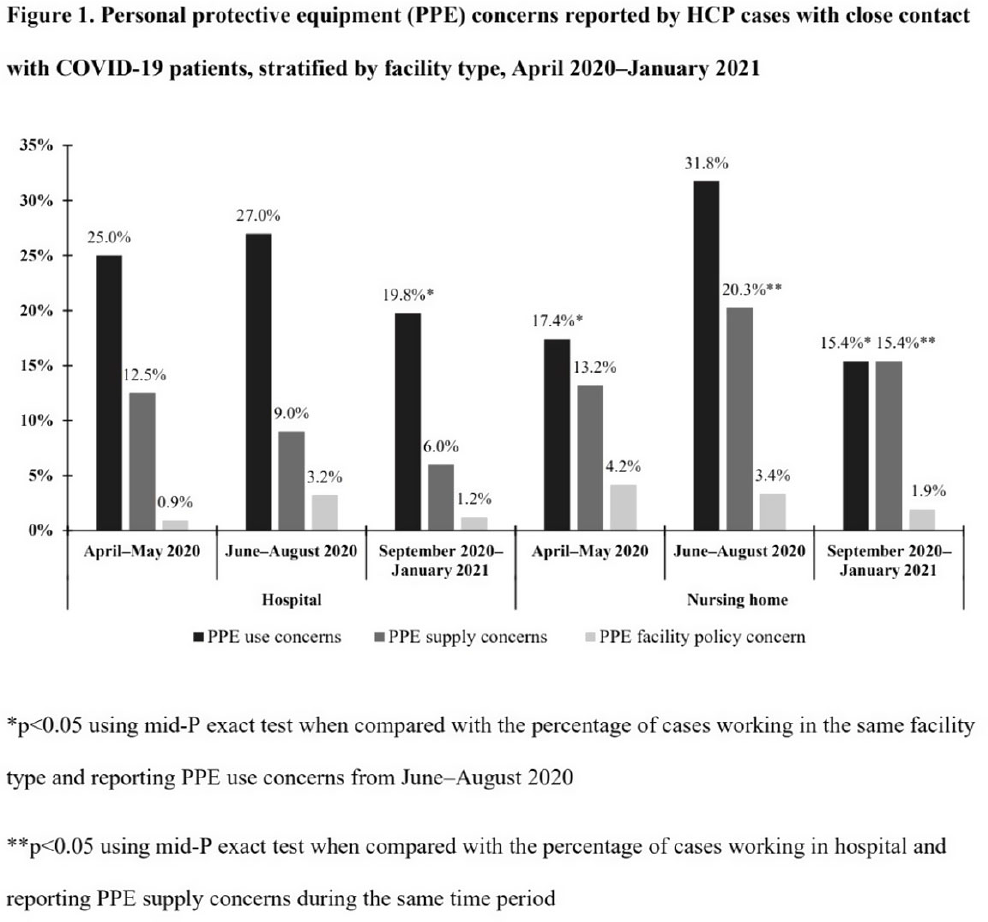

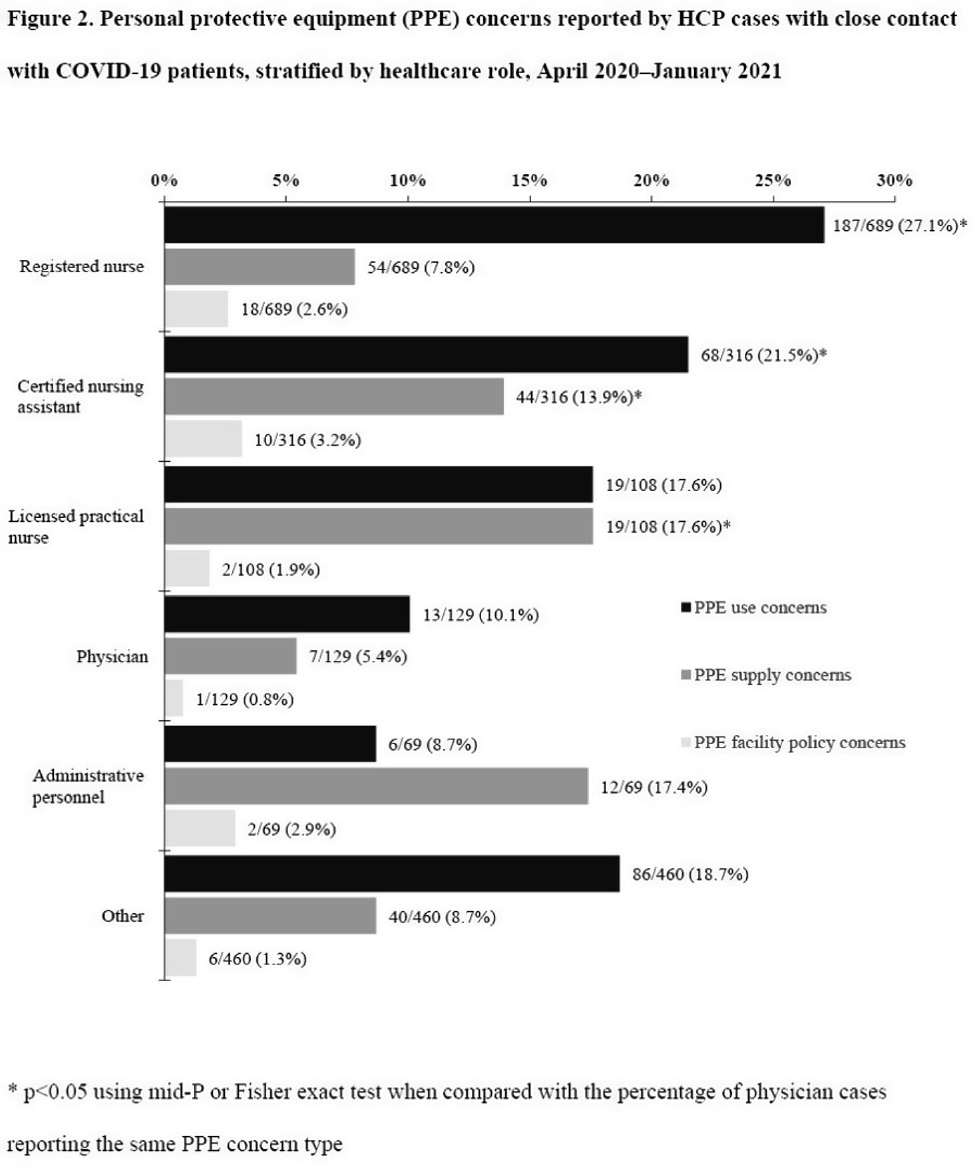

Characteristics of healthcare personnel who reported concerns related to PPE use during care of COVID-19 patients

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 2 / Issue S1 / July 2022
- Published online by Cambridge University Press: 16 May 2022, pp. s8-s9
- Article
- Open access

Confronting School Violence: A Synthesis of Six Decades of Research

- Published online: 18 March 2022
- Print publication: 05 May 2022
- Element

Psychiatric and psychosocial characteristics of suicide completers: A 13-year comprehensive evaluation of psychiatric case records and postmortem findings

- Journal: European Psychiatry / Volume 65 / Issue 1 / 2022
- Published online by Cambridge University Press: 24 January 2022, e14
- Article
- Open access

Corporate Responses to Tackling Modern Slavery: A Comparative Analysis of Australia, France and the United Kingdom

- Journal: Business and Human Rights Journal / Volume 7 / Issue 2 / June 2022
- Published online by Cambridge University Press: 15 November 2021, pp. 249-270
- Article

Practices and activities among healthcare personnel with severe acute respiratory coronavirus virus 2 (SARS-CoV-2) infection working in different healthcare settings—ten Emerging Infections Program sites, April–November 2020

- Journal: Infection Control & Hospital Epidemiology / Volume 43 / Issue 8 / August 2022
- Published online by Cambridge University Press: 02 June 2021, pp. 1058-1062
- Print publication: August 2022
- Article
- Open access

What is the role of doctors in respect of suspects with mental health and intellectual disabilities in police custody?

- Journal: Irish Journal of Psychological Medicine / Volume 40 / Issue 3 / September 2023
- Published online by Cambridge University Press: 19 April 2021, pp. 494-499
- Print publication: September 2023
- Article
- Open access

The association between C-reactive protein, mood disorder, and cognitive function in UK Biobank

- Journal: European Psychiatry / Volume 64 / Issue 1 / 2021
- Published online by Cambridge University Press: 01 February 2021, e14
- Article
- Open access

P02-105 - Adherence to the Trust Guidelines for the Use of High Dose Antipsychotic Medication-completed Audit Cycle

- Journal: European Psychiatry / Volume 25 / Issue S1 / 2010
- Published online by Cambridge University Press: 17 April 2020, 25-E703
- Article

1494 – Mindfulness-based Cognitive Therapy Reduces Depression Symptoms In People Who Have a Traumatic Brain Injury: Results From a Randomized Controlled Trial

- Journal: European Psychiatry / Volume 28 / Issue S1 / 2013
- Published online by Cambridge University Press: 15 April 2020, 28-E803
- Article

Chemical, Biological, Radiological, Nuclear, and Explosive (CBRNE) Science and the CBRNE Science Medical Operations Science Support Expert (CMOSSE)

- Journal: Disaster Medicine and Public Health Preparedness / Volume 13 / Issue 5-6 / December 2019
- Published online by Cambridge University Press: 17 June 2019, pp. 995-1010
- Article

Heritability of ram mating success in multi-sire breeding situations

- Article
- Open access

LO08: PROM-ED: the development and testing of a patient-reported outcome measure for use with emergency department patients who are discharged home

- Journal: Canadian Journal of Emergency Medicine / Volume 20 / Issue S1 / May 2018
- Published online by Cambridge University Press: 11 May 2018, p. S9
- Print publication: May 2018
- Article

The impact of periconceptional alcohol exposure on fat preference and gene expression in the mesolimbic reward pathway in adult rat offspring

- Journal: Journal of Developmental Origins of Health and Disease / Volume 9 / Issue 2 / April 2018
- Published online by Cambridge University Press: 17 October 2017, pp. 223-231
- Article

Thermohaline Circulation and Prolonged Interglacial Warmth in the North Atlantic

- Journal: Quaternary Research / Volume 58 / Issue 1 / July 2002
- Published online by Cambridge University Press: 20 January 2017, pp. 17-21
- Article

A pilot study of performance among hospitalised elderly patients on a novel test of visuospatial cognition: the letter and shape drawing (LSD) test

- Journal: Irish Journal of Psychological Medicine / Volume 34 / Issue 3 / September 2017
- Published online by Cambridge University Press: 02 November 2016, pp. 169-175
- Print publication: September 2017
- Article

A systematic review and meta-analysis of the antibiotic treatment for infectious bovine keratoconjunctivitis: an update

- Journal: Animal Health Research Reviews / Volume 17 / Issue 1 / June 2016
- Published online by Cambridge University Press: 18 July 2016, pp. 60-75
- Article