1. Introduction

Automatic differentiation (AD) is a popular technique for computing derivatives of functions implemented by computer programs, essentially by applying the chain rule across the program code. It is typically the method of choice for computing derivatives in machine learning and scientific computing because of its efficiency and numerical stability. AD has two main variants: forward-mode AD, which calculates the derivative of a function, and reverse-mode AD, which calculates the (matrix) transpose of the derivative. Roughly speaking, for a function $f:{\mathbb{R}}^n\to {\mathbb{R}}^m$, reverse mode is the more efficient technique if $n\gg m$ and forward mode is the more efficient one if $n\ll m$. Since we are usually interested in computing derivatives (or gradients) of functions $f:{\mathbb{R}}^n\to\mathbb{R}$ with very large n, reverse AD tends to be the more important algorithm in practice (Baydin et al. 2017).
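
The cost asymmetry can be illustrated with a minimal Python sketch. It assumes, as a simplification, that one forward pass (a Jacobian-vector product) and one reverse pass (a vector-Jacobian product) each cost a small constant multiple of evaluating f. Under that assumption, assembling the full Jacobian takes n forward passes but only m reverse passes. The example function and its hand-written `jvp` and `vjp` are hypothetical illustrations, not part of CHAD.

```python
import math

# Hypothetical example f : R^3 -> R^2, f(x) = (x0 * x1, sin(x2)).
def f(x):
    return [x[0] * x[1], math.sin(x[2])]

def jvp(x, dx):
    """One forward pass: push a tangent dx in R^n to Df(x) dx in R^m."""
    return [x[1] * dx[0] + x[0] * dx[1], math.cos(x[2]) * dx[2]]

def vjp(x, ct):
    """One reverse pass: pull a cotangent ct in R^m back to Df(x)^t ct in R^n."""
    return [x[1] * ct[0], x[0] * ct[0], math.cos(x[2]) * ct[1]]

def basis(k, i):
    return [1.0 if j == i else 0.0 for j in range(k)]

def jacobian_forward(x, n):
    # n forward passes, each producing one column of the m x n Jacobian.
    cols = [jvp(x, basis(n, i)) for i in range(n)]
    return [list(row) for row in zip(*cols)], len(cols)

def jacobian_reverse(x, m):
    # m reverse passes, each producing one row of the Jacobian.
    rows = [vjp(x, basis(m, j)) for j in range(m)]
    return rows, len(rows)

x = [2.0, 3.0, 0.0]
J_fwd, fwd_passes = jacobian_forward(x, n=3)
J_rev, rev_passes = jacobian_reverse(x, m=2)
assert J_fwd == J_rev  # both modes compute the same Jacobian
```

For a scalar-valued f (m = 1), the reverse variant needs a single pass however large n is.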

While the study of AD has a long history in the numerical methods community, which we will not survey (see, e.g., Griewank and Walther 2008), there has recently been a proliferation of work by the programming languages community examining the technique from a new angle. New goals pursued by this community include

• giving a concise, clear, and easy-to-implement definition of various AD algorithms;

• expanding the languages and programming techniques that AD can be applied to;

• relating AD to its mathematical foundations in differential geometry and proving that AD implementations correctly calculate derivatives;

• performing AD at compile time through source code transformation, to maximally expose optimization opportunities to the compiler and to avoid interpreter overhead that other AD approaches can incur;

• providing formal complexity guarantees for AD implementations.

We provide a brief summary of some of this more recent work in Section 16. The present paper adds to this new body of work by advancing the state of the art for the first four goals. We mostly leave the fifth goal, as it applies to our technique, to future work (with the exception of Corollary 130). Specifically, we extend the scope of the Combinatory Homomorphic Automatic Differentiation (CHAD) method of forward and reverse AD (Vákár 2021; Vákár and Smeding 2022) beyond the previous state of the art, a simply typed $\lambda$-calculus. The extended method applies to total functional programming languages with expressive type systems, that is, the combination of:

• tuple types, to enable programs that return or take as an argument more than one value;

• sum types, to enable programs that define and branch on variant data types;

• inductive types, to include programs that operate on labeled-tree-like data structures;

• coinductive types, to deal with programs that operate on lazy infinite data structures such as streams;

• function types, to encompass programs that use popular higher-order programming idioms such as maps and folds.

This conceptually simple extension requires considerably extending existing techniques in denotational semantics. The payoffs of this challenging development are surprisingly simple AD algorithms as well as reusable abstract semantic techniques.

The main contributions of this paper are as follows:

• developing an abstract categorical semantics (Section 3) of such expressive type systems in suitable $\Sigma$-types of categories (Section 6);

• presenting, as the initial instantiation of this abstract semantics, an idealized target language for CHAD when applied to such type systems (Section 7);

• deriving the forward and reverse CHAD algorithms (Section 8) when applied to expressive type systems as the uniquely defined homomorphic functors (Section 4) from the source (Section 5) to the target language (Section 7);

• introducing (categorical) logical relations techniques (aka sconing) for reasoning about expressive functional languages that include both inductive and coinductive types (Section 11);

• using such a logical relations construction over the concrete denotational semantics (Section 10) of the source and target languages (Section 9) to demonstrate that CHAD correctly calculates the usual mathematical derivative (Section 12), even for programs between inductive types (Section 13);

• discussing examples (Section 14) and applied considerations around implementing this extended CHAD method in practice (Section 15).

We start by giving a high-level overview of the key insights and theorems in this paper in Section 2.

2. Key Ideas

2.1 Origins in semantic derivatives and chain rules

CHAD starts from the observation that, for a differentiable function $f:{\mathbb{R}}^n\to{\mathbb{R}}^m$, it is useful to pair the primal function value f(x) with f’s derivative Df(x) at x if we want to calculate derivatives in a compositional way. Here we underline the spaces $\underline{\mathbb{R}}^n$ of tangent vectors to emphasize their algebraic structure, and we write a linear function type for the derivative to indicate its linearity in its tangent vector argument:

\begin{align*}\mathcal{T}{f} : & \ {\mathbb{R}}^n \to {\mathbb{R}}^m\times (\underline{\mathbb{R}}^n\multimap \underline{\mathbb{R}}^m)\\&x\mapsto (f(x),Df(x)).\end{align*}

Indeed, the chain rule tells us how to compute the derivative of a composition $g\circ f$ of functions. Writing $\mathcal{T}_{1}{f}\stackrel {\mathrm{def}}= \pi_1\circ \mathcal{T}{f}$ and $\mathcal{T}_{2}{f}\stackrel {\mathrm{def}}= \pi_2\circ \mathcal{T}{f}$ for the first and second components of $\mathcal{T}{f}$, respectively, we have:

\begin{align*}\mathcal{T}{(g\circ f)}(x) = \left(\mathcal{T}_{1}{g}(\mathcal{T}_{1}{f}(x)),\ \mathcal{T}_{2}{g}(\mathcal{T}_{1}{f}(x))\circ \mathcal{T}_{2}{f}(x)\right).\end{align*}

We make two observations:

(1) the derivative of $g\circ f$ depends not only on the derivatives of g and f but also on the primal value of f;

(2) the primal value of f is used twice: once in the primal value of $g\circ f$ and once in its derivative; we want to share these repeated subcomputations.

Insight 1. This shows that it is wise to pair up computations of primal function values and derivatives and to share computation between both if we want to calculate derivatives of functions compositionally and efficiently.
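
Insight 1 can be made concrete with a minimal Python sketch. The encoding is assumed for illustration only: vectors are tuples of floats and a derivative $Df(x)$ is a Python closure. Each hypothetical `T_*` function returns the pair $(f(x), Df(x))$. The function `T_compose` implements the chain rule for $\mathcal{T}(g\circ f)$, computing the primal value of f once and sharing it between g's primal value and g's derivative.

```python
import math

# Hypothetical encoding: T f maps a point x (a tuple of floats) to the pair
# (f(x), Df(x)), where Df(x) is a closure acting linearly on tangent vectors.

def T_mul(x):
    x0, x1 = x
    # Df(x)(dx) = x1 * dx0 + x0 * dx1
    return (x0 * x1,), lambda dx: (x1 * dx[0] + x0 * dx[1],)

def T_sin(y):
    (y0,) = y
    c = math.cos(y0)  # computed once, then reused by every call of the derivative
    return (math.sin(y0),), lambda dy: (c * dy[0],)

def T_compose(T_g, T_f):
    """Chain rule: T(g . f)(x) = (T1 g(T1 f(x)), T2 g(T1 f(x)) . T2 f(x))."""
    def T_gf(x):
        y, Df = T_f(x)   # the primal value y = f(x) is computed once ...
        z, Dg = T_g(y)   # ... and shared between g's primal value and g's derivative
        return z, lambda dx: Dg(Df(dx))
    return T_gf

# h(x0, x1) = sin(x0 * x1)
T_h = T_compose(T_sin, T_mul)
value, Dh = T_h((2.0, 0.0))
d_dx0 = Dh((1.0, 0.0))  # directional derivative along the first coordinate
d_dx1 = Dh((0.0, 1.0))  # directional derivative along the second coordinate
```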

Similar observations can be made for f’s transposed (adjoint) derivative ${Df}^{t}$, which propagates cotangent vectors rather than tangent vectors and which we can pair up as:

\begin{align*}\mathcal{T}^*f : & \ {\mathbb{R}}^n \to {\mathbb{R}}^m\times (\underline{\mathbb{R}}^m\multimap \underline{\mathbb{R}}^n)\\ &x\mapsto (f(x),{Df}^{t}(x)) \end{align*}

This pairing yields the following chain rule, where we write $\mathcal{T}^*_{1}f\stackrel{\mathrm{def}}= \pi_1\circ \mathcal{T}^*f$ and $\mathcal{T}^*_{2}f\stackrel{\mathrm{def}}= \pi_2\circ \mathcal{T}^*f$:

\begin{align*}\mathcal{T}^*(g\circ f)(x) = \left(\mathcal{T}^*_{1}g(\mathcal{T}^*_{1}f(x)),\ \mathcal{T}^*_{2}f(x)\circ \mathcal{T}^*_{2}g(\mathcal{T}^*_{1}f(x))\right).\end{align*}

CHAD directly implements the operations $\mathcal{T}$ and $\mathcal{T}^*$ as source code transformations $\overrightarrow{\mathcal{D}}$ and $\overleftarrow{\mathcal{D}}$ on a functional language to implement forward- and reverse-mode AD, respectively. These code transformations are defined compositionally through structural induction on the syntax, by making use of the chain rules above.
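
The reverse-mode chain rule can be sketched the same way in Python, under equally illustrative assumptions: tuples stand for vectors, closures for linear maps, and all names are hypothetical. Here `Tstar_compose` pairs the primal value with the transposed derivative, and the transposed derivatives compose in the opposite order to the functions themselves. This is a semantic illustration of $\mathcal{T}^*$, not of the source code transformation $\overleftarrow{\mathcal{D}}$.

```python
import math

# Hypothetical encoding: T* f maps x to (f(x), Df(x)^t), where the transposed
# derivative is a closure sending an output cotangent to an input cotangent.

def Tstar_mul(x):
    x0, x1 = x
    # Df(x)^t(ct) = (x1 * ct, x0 * ct)
    return (x0 * x1,), lambda ct: (x1 * ct[0], x0 * ct[0])

def Tstar_sin(y):
    (y0,) = y
    c = math.cos(y0)
    return (math.sin(y0),), lambda ct: (c * ct[0],)

def Tstar_compose(Tstar_g, Tstar_f):
    """Reverse chain rule: T*(g . f)(x) = (T*1 g(T*1 f(x)), T*2 f(x) . T*2 g(T*1 f(x)))."""
    def Tstar_gf(x):
        y, Df_t = Tstar_f(x)   # primal values are computed once and shared
        z, Dg_t = Tstar_g(y)
        # transposed derivatives are applied in the opposite order: first g's, then f's
        return z, lambda ct: Df_t(Dg_t(ct))
    return Tstar_gf

# h(x0, x1) = sin(x0 * x1): one reverse pass yields the whole gradient.
Tstar_h = Tstar_compose(Tstar_sin, Tstar_mul)
value, Dh_t = Tstar_h((2.0, 0.0))
grad = Dh_t((1.0,))
```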

2.2 CHAD on a first-order functional language

We first discuss what the technique looks like on a standard typed first-order functional language. Despite our different presentation in terms of a $\lambda$-calculus rather than Elliott’s categorical combinators, this is essentially the algorithm of Elliott (2018). Types $\tau,\sigma,\rho$ are either statically sized arrays of n real numbers ${\mathbf{real}}^n$ or tuples $\tau\boldsymbol{\mathop{*}}\sigma$ of types $\tau,\sigma$. We consider programs t of type $\sigma$ in typing context $\Gamma=x_1:\tau_1,\ldots,x_n:\tau_n$, where the $x_i$ are identifiers. We write such a typing judgment for programs in context as $\Gamma\vdash t:\sigma$. Our language has certain primitive operations (which we represent schematically)

$$\frac{\Gamma \vdash t_1 : {\mathbf{real}}^{n_1}\quad\cdots\quad \Gamma \vdash t_k : {\mathbf{real}}^{n_k}}{\Gamma \vdash \mathrm{op}(t_1,\ldots,t_k) : {\mathbf{real}}^m}$$

such as constants (as nullary operations), (elementwise) addition and multiplication of arrays, inner products and certain nonlinear functions like sigmoid functions. With these, we can write complex programs by sequencing together such operations. For example, writing $\mathbf{real}$ for ${\mathbf{real}}^1$, we can write a program $x_1:\mathbf{real},x_2:\mathbf{real},x_3:\mathbf{real},x_4:\mathbf{real}\vdash s:\mathbf{real}$ by:

\begin{align*}&\mathbf{let}\,{y}=\,{x_1 * x_4 + 2 * x_2 }\,\mathbf{in}\,{}\\&\mathbf{let}\,{z}=\,{y* x_3}\,\mathbf{in}\,{}\\&\mathbf{let}\,w=\,{z+ x_4}\,\mathbf{in}\,{\sin{(w)}},\end{align*}

where we indicate shared subcomputations with $\mathbf{let}$-bindings.
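
The example program s can be made concrete with a minimal Python sketch of an evaluator for the scalar fragment of this language ($\mathbf{real}=\mathbf{real}^1$). The AST classes `Var`, `Const`, `Let`, and `Op` and the primitive table `PRIMS` are hypothetical names chosen for illustration. Constants, which the text treats as nullary operations, get their own node here for simplicity.

```python
import math
from dataclasses import dataclass
from typing import Tuple

# Hypothetical AST for the scalar fragment of the first-order language.
@dataclass(frozen=True)
class Var:
    name: str

@dataclass(frozen=True)
class Const:          # a nullary primitive operation
    value: float

@dataclass(frozen=True)
class Let:
    name: str
    bound: object
    body: object

@dataclass(frozen=True)
class Op:
    op: str
    args: Tuple[object, ...]

PRIMS = {"add": lambda a, b: a + b, "mul": lambda a, b: a * b, "sin": math.sin}

def evaluate(t, env):
    """Evaluate term t in an environment mapping identifiers to floats."""
    if isinstance(t, Var):
        return env[t.name]
    if isinstance(t, Const):
        return t.value
    if isinstance(t, Let):
        # The bound value is computed once and shared by every use in the body.
        return evaluate(t.body, {**env, t.name: evaluate(t.bound, env)})
    if isinstance(t, Op):
        return PRIMS[t.op](*(evaluate(a, env) for a in t.args))
    raise TypeError(f"unknown term: {t!r}")

# s = let y = x1*x4 + 2*x2 in let z = y*x3 in let w = z + x4 in sin(w)
s = Let("y", Op("add", (Op("mul", (Var("x1"), Var("x4"))),
                        Op("mul", (Const(2.0), Var("x2"))))),
        Let("z", Op("mul", (Var("y"), Var("x3"))),
            Let("w", Op("add", (Var("z"), Var("x4"))),
                Op("sin", (Var("w"),)))))

result = evaluate(s, {"x1": 1.0, "x2": 0.5, "x3": 2.0, "x4": -4.0})
```

With these inputs, y = -3, z = -6 and w = -10, so `result` is $\sin(-10)$.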

CHAD observes that we can define, for each language type $\tau$, associated types of

• forward-mode primal values $\overrightarrow{\mathcal{D}}(\tau)_{1}$: we define $\overrightarrow{\mathcal{D}}({\mathbf{real}}^n)_1={\mathbf{real}}^n$ and $\overrightarrow{\mathcal{D}}(\tau\boldsymbol{\mathop{*}}\sigma)_1=\overrightarrow{\mathcal{D}}(\tau)_1\boldsymbol{\mathop{*}}\overrightarrow{\mathcal{D}}(\sigma)_1$, that is, for now $\overrightarrow{\mathcal{D}}(\tau)_1=\tau$;

• reverse-mode primal values $\overleftarrow{\mathcal{D}}(\tau)_1$: we define $\overleftarrow{\mathcal{D}}({\mathbf{real}}^n)_1={\mathbf{real}}^n$ and $\overleftarrow{\mathcal{D}}(\tau\boldsymbol{\mathop{*}}\sigma)_1=\overleftarrow{\mathcal{D}}(\tau)_1\boldsymbol{\mathop{*}}\overleftarrow{\mathcal{D}}(\sigma)_1$, that is, for now $\overleftarrow{\mathcal{D}}(\tau)_1=\tau$;

• forward-mode tangent values $\overrightarrow{\mathcal{D}}(\tau)_2$: we define $\overrightarrow{\mathcal{D}}({\mathbf{real}}^n)_2=\underline{\mathbf{real}}^n$ and $\overrightarrow{\mathcal{D}}(\tau\boldsymbol{\mathop{*}}\sigma)_2=\overrightarrow{\mathcal{D}}(\tau)_2\boldsymbol{\mathop{*}}\overrightarrow{\mathcal{D}}(\sigma)_2$;

• reverse-mode cotangent values $\overleftarrow{\mathcal{D}}(\tau)_2$: we define $\overleftarrow{\mathcal{D}}({\mathbf{real}}^n)_2=\underline{\mathbf{real}}^n$ and $\overleftarrow{\mathcal{D}}(\tau\boldsymbol{\mathop{*}}\sigma)_2=\overleftarrow{\mathcal{D}}(\tau)_2\boldsymbol{\mathop{*}}\overleftarrow{\mathcal{D}}(\sigma)_2$.

The justification for these definitions is the crucial observation that a (co)tangent vector to a product of spaces is precisely a pair of (co)tangent vectors to the two spaces. Put differently, the tangent space $\mathcal{T}_{(x,y)}{(X\times Y)}$ to $X\times Y$ at a point (x,y) is (canonically isomorphic to) the product space $(\mathcal{T}_{x}X) \times (\mathcal{T}_{y} Y)$, and similarly for cotangent spaces (Tu 2011).

We write the (co)tangent types associated with ${\mathbf{real}}^n$ as $\underline{\mathbf{real}}^n$ to emphasize that they are linear types and to distinguish them from the cartesian type ${\mathbf{real}}^n$. In particular, we will see that tangent and cotangent values are elements of linear types that come equipped with a commutative monoid structure $(\underline{0},+)$. Indeed, (transposed) derivatives are linear functions: homomorphisms of this monoid structure.¹ We extend the operations $\overrightarrow{\mathcal{D}}$ and $\overleftarrow{\mathcal{D}}$ to act on typing contexts $\Gamma$:

\begin{align*}\overrightarrow{\mathcal{D}}(x_1:\tau_1,\ldots,x_n:\tau_n)_1&=x_1:\overrightarrow{\mathcal{D}}(\tau_1)_1,\ldots, x_n:\overrightarrow{\mathcal{D}}(\tau_n)_1 \\\overleftarrow{\mathcal{D}}(x_1:\tau_1,\ldots,x_n:\tau_n)_1&=x_1:\overleftarrow{\mathcal{D}}(\tau_1)_1,\ldots, x_n:\overleftarrow{\mathcal{D}}(\tau_n)_1 \\\overrightarrow{\mathcal{D}}(x_1:\tau_1,\ldots,x_n:\tau_n)_2&=\overrightarrow{\mathcal{D}}(\tau_1)_2\boldsymbol{\mathop{*}}\cdots\boldsymbol{\mathop{*}}\overrightarrow{\mathcal{D}}(\tau_n)_2\\\overleftarrow{\mathcal{D}}(x_1:\tau_1,\ldots,x_n:\tau_n)_2&=\overleftarrow{\mathcal{D}}(\tau_1)_2\boldsymbol{\mathop{*}}\cdots\boldsymbol{\mathop{*}}\overleftarrow{\mathcal{D}}(\tau_n)_2.\end{align*}
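
On this first-order fragment the type translations can be transcribed directly. The Python sketch below is purely illustrative, and the classes `Real`, `Prod`, and `LinReal` are hypothetical. It computes $\overrightarrow{\mathcal{D}}(\tau)_1=\overleftarrow{\mathcal{D}}(\tau)_1$ and $\overrightarrow{\mathcal{D}}(\tau)_2=\overleftarrow{\mathcal{D}}(\tau)_2$, which coincide here as in the definitions above. It then extends them to contexts, representing the n-ary product of (co)tangent types as a Python tuple.

```python
from dataclasses import dataclass

# Hypothetical encoding of first-order types.
@dataclass(frozen=True)
class Real:        # cartesian type real^n
    n: int

@dataclass(frozen=True)
class Prod:        # tuple type tau * sigma
    left: object
    right: object

@dataclass(frozen=True)
class LinReal:     # linear type underline(real)^n of (co)tangent vectors
    n: int

def primal(ty):
    """D(tau)_1, the same in both modes: the identity on this fragment."""
    if isinstance(ty, Real):
        return Real(ty.n)
    return Prod(primal(ty.left), primal(ty.right))

def tangent(ty):
    """D(tau)_2, the same for forward tangents and reverse cotangents on this fragment."""
    if isinstance(ty, Real):
        return LinReal(ty.n)
    return Prod(tangent(ty.left), tangent(ty.right))

def context_primal(ctx):
    """D(x1:tau1, ..., xn:taun)_1: translate each type, keep the identifiers."""
    return [(x, primal(ty)) for x, ty in ctx]

def context_tangent(ctx):
    """D(x1:tau1, ..., xn:taun)_2: the product of the (co)tangent types, as a tuple."""
    return tuple(tangent(ty) for _, ty in ctx)

ctx = [("x", Real(3)), ("p", Prod(Real(1), Real(2)))]
```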

To each program $\Gamma\vdash t:\sigma$, CHAD associates programs $\overrightarrow{\mathcal{D}}_{{\overline{\Gamma}}}(t)$ and $\overleftarrow{\mathcal{D}}_{{\overline{\Gamma}}}(t)$ calculating the forward-mode and reverse-mode derivatives. These are indexed by the list ${\overline{\Gamma}}$ of identifiers that occur in $\Gamma$:

\begin{align*}&\overrightarrow{\mathcal{D}}(\Gamma)_1\vdash \overrightarrow{\mathcal{D}}_{\overline{\Gamma}}(t) : \overrightarrow{\mathcal{D}}(\sigma)_1\boldsymbol{\mathop{*}} \left( \overrightarrow{\mathcal{D}}(\Gamma)_2\multimap \overrightarrow{\mathcal{D}}(\sigma)_2\right)\\&\overleftarrow{\mathcal{D}}(\Gamma)_1\vdash \overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(t) : \overleftarrow{\mathcal{D}}(\sigma)_1\boldsymbol{\mathop{*}} \left( \overleftarrow{\mathcal{D}}(\sigma)_2\multimap\overleftarrow{\mathcal{D}}(\Gamma)_2 \right).\end{align*}
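
This section spells out only the reverse-mode rules. The following Python sketch illustrates the forward-mode signature above on the scalar fragment (without tuples). Its rules were written by analogy with the chain rule for $\mathcal{T}$: variables map to projections, let-bindings extend the context tangent with the bound variable's tangent, and primitive operations apply $D\mathrm{op}$. Treat these rules, the AST classes, and the `PRIMS` table as illustrative assumptions rather than the paper's definitions. The sketch interprets terms directly instead of generating target-language code.

```python
import math
from dataclasses import dataclass
from typing import Tuple

# Hypothetical AST for the scalar fragment (no tuples) of the source language.
@dataclass(frozen=True)
class Var:
    name: str

@dataclass(frozen=True)
class Const:
    value: float

@dataclass(frozen=True)
class Let:
    name: str
    bound: object
    body: object

@dataclass(frozen=True)
class Op:
    op: str
    args: Tuple[object, ...]

# Each primitive: (primal function, derivative D op(xs; dxs)), written by hand.
PRIMS = {
    "add": (lambda a, b: a + b, lambda xs, dxs: dxs[0] + dxs[1]),
    "mul": (lambda a, b: a * b, lambda xs, dxs: xs[1] * dxs[0] + xs[0] * dxs[1]),
    "sin": (math.sin, lambda xs, dxs: math.cos(xs[0]) * dxs[0]),
}

def idx(x, names):
    """idx(x; names), using the innermost (rightmost) binding of x."""
    return len(names) - 1 - names[::-1].index(x)

def fwd(t, names, env):
    """Return (primal value, linear map from a context tangent tuple to the result tangent)."""
    if isinstance(t, Var):
        i = idx(t.name, names)
        return env[t.name], lambda v: v[i]            # proj_i
    if isinstance(t, Const):
        return t.value, lambda v: 0.0                 # constants have zero derivative
    if isinstance(t, Op):
        f, Dop = PRIMS[t.op]
        pairs = [fwd(a, names, env) for a in t.args]
        xs = [x for x, _ in pairs]
        return f(*xs), lambda v: Dop(xs, [x_dot(v) for _, x_dot in pairs])
    if isinstance(t, Let):
        x, x_dot = fwd(t.bound, names, env)
        y, y_dot = fwd(t.body, names + [t.name], {**env, t.name: x})
        # tangent of the extended context: the old tangent v, plus x' applied to v
        return y, lambda v: y_dot((*v, x_dot(v)))
    raise TypeError(f"unknown term: {t!r}")

# s = let y = x1*x4 + 2*x2 in let z = y*x3 in let w = z + x4 in sin(w)
s = Let("y", Op("add", (Op("mul", (Var("x1"), Var("x4"))),
                        Op("mul", (Const(2.0), Var("x2"))))),
        Let("z", Op("mul", (Var("y"), Var("x3"))),
            Let("w", Op("add", (Var("z"), Var("x4"))),
                Op("sin", (Var("w"),)))))

names = ["x1", "x2", "x3", "x4"]
env = {"x1": 1.0, "x2": 0.5, "x3": 2.0, "x4": -4.0}
value, s_dot = fwd(s, names, env)
ds_dx1 = s_dot((1.0, 0.0, 0.0, 0.0))  # one forward pass per input direction
```

Recovering all four partial derivatives of s this way takes four applications of `s_dot`, one per basis tangent.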

As long as all primitive operations op are differentiable, each program t computes a differentiable function $\unicode{x27E6} t\unicode{x27E7}$ between Euclidean spaces. The key property that we prove for these code transformations is that they actually calculate derivatives:

Theorem A (Correctness of CHAD, Theorem 124). For any well-typed program $x_1:\tau_1,\ldots,x_k:\tau_k\vdash t:\sigma$, we have that

$$\unicode{x27E6} \overrightarrow{\mathcal{D}}_{x_1,\ldots,x_k}(t)\unicode{x27E7}=\mathcal{T}{\unicode{x27E6} t\unicode{x27E7}}\;\text{ and }\;\unicode{x27E6} \overleftarrow{\mathcal{D}}_{x_1,\ldots,x_k}(t)\unicode{x27E7}=\mathcal{T}^*{\unicode{x27E6} t\unicode{x27E7}}.$$

Once we fix the semantics for the source and target languages, we can show that this theorem holds if we define $\overrightarrow{\mathcal{D}}$ and $\overleftarrow{\mathcal{D}}$ on programs using the chain rule. The proof works by plain induction on the syntax. For example, we can correctly define reverse-mode CHAD on a first-order language as follows:

\begin{align*} &\overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(\mathrm{op}(t_1,\ldots,t_k)) \stackrel{\mathrm{def}}{=} && \mathbf{let}\,\langle x_1,x_1'\rangle=\,\overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(t_1)\,\mathbf{in}\,\cdots\\ &&& \mathbf{let}\,\langle x_k,x_k'\rangle=\,\overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(t_k)\,\mathbf{in}\,\\ &&&\langle\mathrm{op}(x_1,\ldots,x_k),\underline{\lambda} \mathsf{v}. \mathbf{let}\,\mathsf{v}=\,{D\mathrm{op}}^{t}(x_1,\ldots,x_k;\mathsf{v})\,\mathbf{in}\,\\ &&&\phantom{\langle \mathrm{op}(x_1,\ldots,x_k),\underline{\lambda} \mathsf{v}.\rangle}x_1'\bullet \mathbf{proj}_{1}\,{\mathsf{v}}+\cdots+x_k'\bullet \mathbf{proj}_{k}\,{\mathsf{v}}\rangle\end{align*}

\begin{align*}&\overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(x) \stackrel{\mathrm{def}}{=} && \langle x,\underline{\lambda} \mathsf{v}. \mathbf{coproj}_{\mathbf{idx}(x; {\overline{\Gamma}})\,}\,(\mathsf{v})\rangle\end{align*}

\begin{align*} &\overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(\mathbf{let}\,x=\,t\,\mathbf{in}\,s)\stackrel{\mathrm{def}}{=} &&\mathbf{let}\,\langle x,x'\rangle=\,\overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(t)\,\mathbf{in}\,\\ &&& \mathbf{let}\,\langle y,y'\rangle=\,\overleftarrow{\mathcal{D}}_{\overline{\Gamma},x}(s)\,\mathbf{in}\,\\ &&& \langle y, \underline{\lambda} \mathsf{v}. \mathbf{let}\,\mathsf{v}=\,y'\bullet \mathsf{v}\,\mathbf{in}\, \mathbf{fst}\,\mathsf{v}+x'\bullet (\mathbf{snd}\, \mathsf{v})\rangle\end{align*}

\begin{align*}&\overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(\langle t, s\rangle) \stackrel {\mathrm{def}}= &&\mathbf{let}\,\langle x,x'\rangle=\,\overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(t)\,\mathbf{in}\, \\ &&&\mathbf{let}\,\langle y,y'\rangle=\,\overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(s)\,\mathbf{in}\,\\ &&&\langle \langle x, y \rangle,\underline{\lambda} \mathsf{v}. x'\bullet (\mathbf{fst}\,\mathsf{v}) + {y'\bullet(\mathbf{snd}\, \mathsf{v})}\rangle\end{align*}
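
The four rules above can be transcribed almost literally into a Python interpreter for the scalar fragment with pairs. The sketch rests on several assumptions. The AST classes, the helper names, and the primitive table `PRIMS` are hypothetical; `PRIMS` holds hand-written transposed derivatives standing in for ${D\mathrm{op}}^{t}$. Context cotangents are tuples of floats, with componentwise addition as the monoid $(\underline{0},+)$. Constants get their own node with a zero cotangent map. The sketch evaluates the rules directly rather than generating target-language code, so it illustrates what the transformation computes, not CHAD's source code transformation itself.

```python
import math
from dataclasses import dataclass
from typing import Tuple

# Hypothetical AST for the first-order source language (scalars and pairs).
@dataclass(frozen=True)
class Var:
    name: str

@dataclass(frozen=True)
class Const:
    value: float

@dataclass(frozen=True)
class Let:
    name: str
    bound: object
    body: object

@dataclass(frozen=True)
class Pair:
    left: object
    right: object

@dataclass(frozen=True)
class Op:
    op: str
    args: Tuple[object, ...]

# Each primitive: (primal function, transposed derivative D op^t(xs; v)), by hand.
PRIMS = {
    "add": (lambda a, b: a + b, lambda xs, v: (v, v)),
    "mul": (lambda a, b: a * b, lambda xs, v: (xs[1] * v, xs[0] * v)),
    "sin": (math.sin, lambda xs, v: (math.cos(xs[0]) * v,)),
}

def vadd(a, b):
    """The monoid operation + on context cotangents (tuples of floats)."""
    return tuple(p + q for p, q in zip(a, b))

def idx(x, names):
    """idx(x; names), using the innermost (rightmost) binding of x."""
    return len(names) - 1 - names[::-1].index(x)

def rev(t, names, env):
    """Return (primal value, linear map from result cotangent to context cotangent)."""
    n = len(names)
    zero = (0.0,) * n
    if isinstance(t, Var):
        i = idx(t.name, names)
        return env[t.name], lambda v: zero[:i] + (v,) + zero[i + 1:]  # coproj_i
    if isinstance(t, Const):
        return t.value, lambda v: zero
    if isinstance(t, Op):
        f, Dop_t = PRIMS[t.op]
        pairs = [rev(a, names, env) for a in t.args]
        xs = [x for x, _ in pairs]
        def op_back(v):
            vs = Dop_t(xs, v)                      # let v = D op^t(xs; v) in ...
            acc = zero
            for (_, x_bar), v_i in zip(pairs, vs):
                acc = vadd(acc, x_bar(v_i))        # ... x_1' . proj_1 v + ... + x_k' . proj_k v
            return acc
        return f(*xs), op_back
    if isinstance(t, Let):
        x, x_bar = rev(t.bound, names, env)
        y, y_bar = rev(t.body, names + [t.name], {**env, t.name: x})
        def let_back(v):
            w = y_bar(v)                           # cotangent of the extended context
            return vadd(w[:n], x_bar(w[n]))        # fst w + x' . (snd w)
        return y, let_back
    if isinstance(t, Pair):
        x, x_bar = rev(t.left, names, env)
        y, y_bar = rev(t.right, names, env)
        return (x, y), lambda v: vadd(x_bar(v[0]), y_bar(v[1]))
    raise TypeError(f"unknown term: {t!r}")

s = Let("y", Op("add", (Op("mul", (Var("x1"), Var("x4"))),
                        Op("mul", (Const(2.0), Var("x2"))))),
        Let("z", Op("mul", (Var("y"), Var("x3"))),
            Let("w", Op("add", (Var("z"), Var("x4"))),
                Op("sin", (Var("w"),)))))

names = ["x1", "x2", "x3", "x4"]
env = {"x1": 1.0, "x2": 0.5, "x3": 2.0, "x4": -4.0}
value, s_bar = rev(s, names, env)
grad = s_bar(1.0)  # a single reverse pass yields all four partial derivatives
```

With these inputs, `grad` should agree (up to rounding) with the hand-computed gradient $\cos(w)\,(x_3x_4,\ 2x_3,\ y,\ x_1x_3+1)=\cos(-10)\,(-8,\ 4,\ -3,\ 3)$.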

Here, we write $\underline{\lambda} \mathsf{v}. t$ for a linear function abstraction (merely a notational convention; it can simply be thought of as a plain function abstraction) and $t\bullet s$ for a linear function application (which again can be thought of as a plain function application). Furthermore, given $\Gamma;\mathsf{v}:\underline{\alpha}\vdash t:\boldsymbol{(}\underline{\sigma}_1 \boldsymbol{\mathop{*}} \cdots \boldsymbol{\mathop{*}} \underline{\sigma}_n\boldsymbol{)}$, we write $\Gamma;\mathsf{v}:\underline{\alpha}\vdash \mathbf{proj}_{i}\,(t):\underline{\sigma}_i$ for the i-th projection of t. Similarly, given $\Gamma;\mathsf{v}:\underline{\alpha}\vdash t:\underline{\sigma}_i$, we write the i-th coprojection as $\Gamma;\mathsf{v}:\underline{\alpha}\vdash\mathbf{coproj}_{i}\,(t)= \langle \underline{0},\ldots,\underline{0},t,\underline{0},\ldots,\underline{0}\rangle:\boldsymbol{(}\underline{\sigma}_1 \boldsymbol{\mathop{*}} \cdots \boldsymbol{\mathop{*}} \underline{\sigma}_n\boldsymbol{)}$, with t in the i-th position. We write $\mathbf{idx}(x_i; x_1,\ldots,x_n)\,=i$ for the index of an identifier in a list of identifiers. Finally, ${D\mathrm{op}}^{t}$ here is a linear operation that implements the transposed derivative of the primitive operation op.
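
A small Python sketch of this notation on tuples of scalars follows. The helper names are hypothetical, and indices are 0-based rather than 1-based as above. The sketch also checks the fact that makes the variable rule work: $\mathbf{coproj}_i$ is the transpose of $\mathbf{proj}_i$ with respect to the usual inner product. This is why reverse mode uses a coprojection exactly where a forward-mode derivative of a variable would use a projection.

```python
# Hypothetical helpers on tuples of floats, standing in for the linear types
# (sigma_1 * ... * sigma_n) with each sigma_i a single real.

def proj(i, t):
    """proj_i(t): the i-th component (0-based here, 1-based in the text)."""
    return t[i]

def coproj(i, t, n):
    """coproj_i(t) = <0, ..., 0, t, 0, ..., 0> with t in position i."""
    return tuple(t if j == i else 0.0 for j in range(n))

def idx(x, names):
    """idx(x; names): position of identifier x (0-based, innermost binding)."""
    return len(names) - 1 - names[::-1].index(x)

def dot(u, w):
    return sum(a * b for a, b in zip(u, w))

# coproj_i is the transpose of proj_i: <proj_i(u), v> = <u, coproj_i(v)>.
u, v = (1.0, 2.0, 3.0), 5.0
i = idx("x2", ["x1", "x2", "x3"])
```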

Note, in particular, that CHAD pairs up primal and (co)tangent values and shares common subcomputations. CHAD thus achieves a compositional, efficient reverse-mode AD algorithm. The algorithm computes the (transposed) derivatives of a composite program in terms of the (transposed) derivatives ${D\mathrm{op}}^{t}$ of the basic building blocks op.

2.3 CHAD on a higher-order language: a categorical perspective saves the day

So far, this account of CHAD has been smooth sailing: we can simply follow the usual mathematics of (transposed) derivatives of functions ${\mathbb{R}}^n\to {\mathbb{R}}^m$ and implement it in code. A challenge arises when trying to extend the algorithm to more expressive languages with features that do not have an obvious counterpart in multivariate calculus, like higher-order functions.

Vákár and Smeding (2022) and Vákár (2021) solve this problem by observing that we can understand CHAD through the categorical structure of Grothendieck constructions (aka $\Sigma$-types of categories). In particular, they observe that the syntactic category of the target language for CHAD, a language with both cartesian and linear types, forms a locally indexed category ${\mathbf{LSyn}}:{\mathbf{CSyn}}^{op}\to \mathbf{Cat}$. That is, it is a functor to the category of categories and functors for which $\mathrm{obj}\left({\mathbf{LSyn}}(\tau)\right)=\mathrm{obj}\left({\mathbf{LSyn}}(\sigma)\right)$ for all $\tau,\sigma\in\mathrm{obj} \left( {\mathbf{CSyn}}\right)$, and for which ${\mathbf{LSyn}}(\tau\xrightarrow{t}\sigma):{\mathbf{LSyn}}(\sigma)\to{\mathbf{LSyn}}(\tau)$ is the identity on objects. Here, ${\mathbf{CSyn}}$ is the syntactic category whose objects are cartesian types $\tau,\sigma,\rho$ and whose morphisms $\tau\to \sigma$ are programs $x:\tau\vdash t:\sigma$, up to a standard program equivalence. Similarly, ${\mathbf{LSyn}}(\tau)$ is the syntactic category whose objects are linear types $\underline{\alpha},\underline{\sigma},\underline{\gamma}$. Its morphisms $\underline{\alpha}\to\underline{\gamma}$ are programs $x:\tau;\mathsf{v}:\underline{\alpha}\vdash t:\underline{\gamma}$ of type $\underline{\gamma}$ that have a free variable x of cartesian type $\tau$ and a free variable $\mathsf{v}$ of linear type $\underline{\alpha}$. The key observation then is the following.

Theorem B (CHAD from a universal property, Corollary 69). Forward- and reverse-mode CHAD are the unique structure-preserving functors:

\begin{align*} &\overrightarrow{\mathcal{D}}({-}):\mathbf{Syn}\to \Sigma_{{\mathbf{CSyn}}}{\mathbf{LSyn}}\\ &\overleftarrow{\mathcal{D}}({-}):\mathbf{Syn}\to \Sigma_{{\mathbf{CSyn}}}{\mathbf{LSyn}}^{op}\end{align*}

from the syntactic category $\mathbf{Syn}$ of the source language to the (opposite) Grothendieck construction of the target language ${\mathbf{LSyn}}:{\mathbf{CSyn}}^{op}\to \mathbf{Cat}$ that send primitive operations op to their derivative $D\mathrm{op}$ and transposed derivative ${D\mathrm{op}}^{t}$, respectively.

In particular, they prove that this is true for the unambiguous definitions of CHAD for a source language that is the first-order functional language we have considered above, which we can see as the freely generated category $\mathbf{Syn}$ with finite products, generated by the objects ${\mathbf{real}}^n$ and morphisms op. That is, for this limited language, “structure-preserving functor” should be interpreted as “finite product-preserving functor.”
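As a concrete illustration of the first-order case, the following Python sketch (ours, not the paper's reference implementation) models a morphism of $\Sigma_{{\mathbf{CSyn}}}{\mathbf{LSyn}}$ or $\Sigma_{{\mathbf{CSyn}}}{\mathbf{LSyn}}^{op}$ as a function that sends a primal input to its output together with a linear map, and shows how composition in each category realizes the chain rule. It assumes that linear maps are plain Python functions on tuples of floats; the helper names (`compose_fwd`, `compose_rev`, `mul_fwd`, `sin_rev`, and so on) are hypothetical, and tupling and projections are omitted.

```python
import math
from typing import Callable, Tuple

Vec = Tuple[float, ...]
LinMap = Callable[[Vec], Vec]                # stand-in for a morphism of LSyn(tau)
Chad = Callable[[Vec], Tuple[Vec, LinMap]]   # x |-> (primal output, linear part)


def compose_fwd(g: Chad, f: Chad) -> Chad:
    """Composition in Sigma_C L (forward mode): tangents flow through Df, then Dg."""
    def h(x: Vec) -> Tuple[Vec, LinMap]:
        y, df = f(x)
        z, dg = g(y)
        return z, lambda v: dg(df(v))
    return h


def compose_rev(g: Chad, f: Chad) -> Chad:
    """Composition in Sigma_C L^op (reverse mode): cotangents flow through Dg^t, then Df^t."""
    def h(x: Vec) -> Tuple[Vec, LinMap]:
        y, df_t = f(x)
        z, dg_t = g(y)
        return z, lambda w: df_t(dg_t(w))
    return h


# Hypothetical primitive operations op, each paired with D op (forward) or (D op)^t (reverse).
def mul_fwd(x: Vec):  # mul : real^2 -> real
    x1, x2 = x
    return (x1 * x2,), lambda v: (x2 * v[0] + x1 * v[1],)


def mul_rev(x: Vec):
    x1, x2 = x
    return (x1 * x2,), lambda w: (x2 * w[0], x1 * w[0])


def sin_fwd(x: Vec):  # sin : real -> real
    return (math.sin(x[0]),), lambda v: (math.cos(x[0]) * v[0],)


def sin_rev(x: Vec):
    return (math.sin(x[0]),), lambda w: (math.cos(x[0]) * w[0],)


# The program sin(x1 * x2), differentiated compositionally in both modes.
prog_fwd = compose_fwd(sin_fwd, mul_fwd)
prog_rev = compose_rev(sin_rev, mul_rev)
```

For the program $\sin(x_1 x_2)$, `prog_rev((2.0, 3.0))` returns the output together with a cotangent map that sends `(1.0,)` to the gradient $(3\cos 6, 2\cos 6)$. Forward mode composes the linear parts in program order, while reverse mode composes them in the opposite order, which is what the ${}^{op}$ in $\Sigma_{{\mathbf{CSyn}}}{\mathbf{LSyn}}^{op}$ records.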

This leads (Vákár 2021; Vákár and Smeding 2022) to the idea of using Theorem B as a definition of CHAD on more expressive programming languages. In particular, they consider a higher-order functional source language $\mathbf{Syn}$, that is, the freely generated cartesian closed category on the objects ${\mathbf{real}}^n$ and morphisms op, and try to define $\overrightarrow{\mathcal{D}}(-)$ and $\overleftarrow{\mathcal{D}}(-)$ as the (unique) structure-preserving (meaning: cartesian closed) functors to $\Sigma_{{\mathbf{CSyn}}}{\mathbf{LSyn}}$ and $\Sigma_{{\mathbf{CSyn}}}{\mathbf{LSyn}}^{op}$ for a suitable linear target language ${\mathbf{LSyn}}:{\mathbf{CSyn}}^{op}\to \mathbf{Cat}$. The main contribution then is to identify conditions on a locally indexed category $\mathcal{L}:\mathcal{C}^{op}\to \mathbf{Cat}$ that guarantee that $\Sigma_{\mathcal{C}}\mathcal{L}$ and $\Sigma_{\mathcal{C}}\mathcal{L}^{op}$ are cartesian closed, and to take the target language ${\mathbf{LSyn}}:{\mathbf{CSyn}}^{op}\to \mathbf{Cat}$ to be a freely generated such category.

Insight 2. To understand how to perform CHAD on a source language with language feature X (e.g., higher-order functions), we need to understand the categorical semantics of language feature X (e.g., categorical exponentials) in categories of the form $\Sigma_\mathcal{C}\mathcal{L}$ and $\Sigma_\mathcal{C}\mathcal{L}^{op}$. Giving sufficient conditions on $\mathcal{L}$ for such a semantics to exist yields a suitable target language for CHAD, with the definition of the algorithm following from the universal property of the source language.

Furthermore, we observe in these papers that Theorem A again holds for this extended definition of CHAD on higher-order languages. However, to prove this, plain induction no longer suffices, and we instead need to use a logical relations construction over the semantics (in the form of categorical sconing) that relates differentiable curves to their associated primal and (co)tangent curves. This is necessary because the program t may use higher-order constructions such as $\lambda$-abstractions and function applications in its definition, even if the input and output types are plain first-order types that implement some Euclidean space.

Insight 3. To obtain a correctness proof of CHAD on source languages with language feature X, it suffices to give a concrete denotational semantics for the source and target languages as well as a categorical semantics of language feature X in a category of logical relations (a scone) over these concrete semantics. The main technical challenge is to analyze logical relations techniques for language feature X.

Finally, these papers observe that the resulting target language can be implemented as a shallowly embedded DSL in standard functional languages, using a module system to implement the required linear types as abstract types, with a reference Haskell implementation available at https://github.com/VMatthijs/CHAD. In fact, Vytiniotis et al. (2019) had proposed the same CHAD algorithm for higher-order languages, arriving at it from practical considerations rather than abstract categorical observations.

Insight 4. The code generated by CHAD naturally comes equipped with very precise (e.g., linear) types. These types emphasize the connections to its mathematical foundations and provide scaffolding for its correctness proof. However, they are unnecessary for a practical implementation of the algorithm: CHAD can be made to generate standard functional (e.g., Haskell) code; the type safety can even be rescued by implementing the linear types as abstract types.

2.4 CHAD for sum types: a challenge – (co)tangent spaces of varying dimension

A natural approach when extending CHAD to yet more expressive source languages, therefore, is to try to use Theorem B as a definition. In the case of sum types (aka variant types), this means considering their categorical counterpart, distributive coproducts, and seeking conditions on $\mathcal{L}:\mathcal{C}^{op}\to\mathbf{Cat}$ under which $\Sigma_{\mathcal{C}}\mathcal{L}$ and $\Sigma_{\mathcal{C}}\mathcal{L}^{op}$ have distributive coproducts. The difficulty is that these categories tend not to have coproducts if $\mathcal{L}$ is locally indexed. Instead, the desire to have coproducts in $\Sigma_{\mathcal{C}}\mathcal{L}$ and $\Sigma_{\mathcal{C}}\mathcal{L}^{op}$ naturally leads us to consider more general strictly indexed categories $\mathcal{L}:\mathcal{C}^{op}\to\mathbf{Cat}$.

In fact, this is compatible with what we know from differential geometry (Tu 2011): coproducts allow us to construct spaces with multiple connected components, each of which may have a distinct dimension. To make things concrete, the space $\mathcal{T}_{x}({\mathbb{R}}^2 \sqcup {\mathbb{R}}^3)$ of tangent vectors to ${\mathbb{R}}^2 \sqcup {\mathbb{R}}^3$ at a point x is either $\underline{\mathbb{R}}^2$ or $\underline{\mathbb{R}}^3$, depending on whether the base point x is chosen in the left or right component of the coproduct. More generally, a differentiable function $f:X\to Y$ between spaces of varying dimension (which can be formalized as manifolds with multiple connected components) induces functions on the spaces of tangent and cotangent vectors (see footnote 2):

\begin{align*}\mathcal{T}{f}&:\Pi_{x\in X}\Sigma_{y\in Y}(\mathcal{T}_{x} X\multimap \mathcal{T}_{y}Y)\\\mathcal{T}^*{f}&:\Pi_{x\in X}\Sigma_{y\in Y}(\mathcal{T}^*_{y} Y\multimap \mathcal{T}^*_{x}X),\end{align*}

whose first component is f itself and whose second component is the action on (co)tangent vectors that f induces.
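The following Python sketch illustrates $\mathcal{T}f$ and $\mathcal{T}^*f$ for a smooth map $f:{\mathbb{R}}^2\sqcup{\mathbb{R}}^3\to{\mathbb{R}}\sqcup{\mathbb{R}}^2$ of our own choosing. It assumes that a point of a coproduct is encoded as a pair of a component tag (`"inl"` or `"inr"`) and a coordinate tuple; the names `f`, `T_f`, and `T_star_f` are illustrative only. The number of coordinates that the returned linear maps accept and produce depends on the base point, which is exactly the dependency that plain Python types cannot express.

```python
from typing import Callable, Tuple

Vec = Tuple[float, ...]
Point = Tuple[str, Vec]  # ("inl", point of R^k) or ("inr", point of R^m)


def f(x: Point) -> Point:
    """Hypothetical smooth map from the coproduct of R^2 and R^3 to that of R^1 and R^2."""
    tag, xs = x
    if tag == "inl":
        a, b = xs
        return ("inl", (a * b,))
    a, b, c = xs
    return ("inr", (a + b, b * c))


def T_f(x: Point) -> Tuple[Point, Callable[[Vec], Vec]]:
    """Pi_x Sigma_y (T_x X -o T_y Y): f(x) with the pushforward of tangent vectors at x."""
    tag, xs = x
    if tag == "inl":  # tangents: R^2 -> R^1
        a, b = xs
        return f(x), lambda v: (b * v[0] + a * v[1],)
    a, b, c = xs      # tangents: R^3 -> R^2 (Jacobian [[1, 1, 0], [0, c, b]])
    return f(x), lambda v: (v[0] + v[1], c * v[1] + b * v[2])


def T_star_f(x: Point) -> Tuple[Point, Callable[[Vec], Vec]]:
    """Pi_x Sigma_y (T*_y Y -o T*_x X): f(x) with the pullback of cotangent vectors at f(x)."""
    tag, xs = x
    if tag == "inl":  # cotangents: R^1 -> R^2
        a, b = xs
        return f(x), lambda w: (b * w[0], a * w[0])
    a, b, c = xs      # cotangents: R^2 -> R^3 (transposed Jacobian)
    return f(x), lambda w: (w[0], w[0] + c * w[1], b * w[1])
```

At `("inl", (2.0, 5.0))` the pushforward maps 2-dimensional tangents to 1-dimensional ones, whereas at a point of the right component it maps 3-dimensional tangents to 2-dimensional ones; in each component the cotangent map is the transpose of the tangent map.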

If the types $\overrightarrow{\mathcal{D}}(\tau)_2$ and $\overleftarrow{\mathcal{D}}(\tau)_2$ are to represent spaces of tangent and cotangent vectors to the spaces that $\overrightarrow{\mathcal{D}}(\tau)_{1}$ and $\overleftarrow{\mathcal{D}}(\tau)_1$ represent, we would expect them to be types that vary with the particular base point (primal) we choose. This leads to a refined view of CHAD: while $\vdash \overrightarrow{\mathcal{D}}(\tau)_1:\mathrm{type}$ and $\vdash\overleftarrow{\mathcal{D}}(\tau)_1:\mathrm{type}$ can remain (closed/nondependent) cartesian types, ${p}:\overrightarrow{\mathcal{D}}(\tau)_1\vdash \overrightarrow{\mathcal{D}}(\tau)_2:\mathrm{ltype}$ and ${p}:\overleftarrow{\mathcal{D}}(\tau)_1\vdash \overleftarrow{\mathcal{D}}(\tau)_2:\mathrm{ltype}$ are, in general, linear dependent types.

Insight 5. To accommodate sum types in CHAD, it is natural to consider a target language with dependent types: this allows the dimension of the spaces of (co)tangent vectors to vary with the chosen primal. In categorical terms, we need to consider general strictly indexed categories $\mathcal{L}:\mathcal{C}^{op}\to \mathbf{Cat}$ instead of merely locally indexed ones.

The CHAD transformations of a program $\Gamma\vdash t:\tau$ now become typed in the following more precise way:

\[\begin{array}{l} \overrightarrow{\mathcal{D}}(\Gamma)_1\vdash \overrightarrow{\mathcal{D}}_{\overline{\Gamma}}(t):\Sigma{{p}:\overrightarrow{\mathcal{D}}(\tau)_1}.{\overrightarrow{\mathcal{D}}(\Gamma)_2\multimap \overrightarrow{\mathcal{D}}(\tau)_2}\\ \overleftarrow{\mathcal{D}}(\Gamma)_1\vdash \overleftarrow{\mathcal{D}}_{\overline{\Gamma}}(t):\Sigma{{p}:\overleftarrow{\mathcal{D}}(\tau)_1}.{\overleftarrow{\mathcal{D}}(\tau)_2\multimap \overleftarrow{\mathcal{D}}(\Gamma)_2},\end{array}\]

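where the action of $\overrightarrow{\mathcal{D}}({-})_2$ and $\overleftarrow{\mathcal{D}}(-)_2$ on typing contexts $\Gamma=x_1:\tau_1,\ldots,x_n:\tau_n$ has been refined to

\begin{align*} &\overrightarrow{\mathcal{D}}(\Gamma)_2\stackrel{\mathrm{def}}= \overrightarrow{\mathcal{D}}(\tau_1)_2[{}^{x_1}\!/\!_{{p}}]\boldsymbol{\mathop{*}}\cdots\boldsymbol{\mathop{*}}\overrightarrow{\mathcal{D}}(\tau_n)_2[{}^{x_n}\!/\!_{{p}}]\\ &\overleftarrow{\mathcal{D}}(\Gamma)_2\stackrel{\mathrm{def}}= \overleftarrow{\mathcal{D}}(\tau_1)_2[{}^{x_1}\!/\!_{{p}}]\boldsymbol{\mathop{*}}\cdots\boldsymbol{\mathop{*}}\overleftarrow{\mathcal{D}}(\tau_n)_2[{}^{x_n}\!/\!_{{p}}].\end{align*}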

All given definitions remain valid, where we simply reinterpret some tuples as having a $\Sigma$-type rather than the more limited original tuple type.

We prove the following novel results.

Theorem C (Bicartesian closed structure of $\Sigma$-categories, Propositions 17 and 18, Theorems 25, 26, and 39, and Corollaries 35 and 36). For a category $\mathcal{C}$ and a strictly indexed category $\mathcal{L}:\mathcal{C}^{op}\to \mathbf{Cat}$, $\Sigma_\mathcal{C} \mathcal{L}$ and $\Sigma_\mathcal{C} \mathcal{L}^{op}$ have

• (fibered) finite products, if $\mathcal{C}$ has finite products and $\mathcal{L}$ has strictly indexed products and coproducts;

• (fibered) finite coproducts, if $\mathcal{C}$ has finite coproducts and $\mathcal{L}$ is extensive;

• exponentials, if $\mathcal{L}$ is a biadditive model of the dependently typed enriched effect calculus (we intentionally keep this vague here to aid legibility; the point is that these are relatively standard conditions).

Furthermore, the coproducts in $\Sigma_\mathcal{C} \mathcal{L}$ and $\Sigma_\mathcal{C} \mathcal{L}^{op}$ distribute over the products, as long as those in $\mathcal{C}$ do, even in the absence of exponentials. Notably, the exponentials are not generally fibered over $\mathcal{C}$.
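To see why products in $\Sigma_\mathcal{C}\mathcal{L}^{op}$ call for coproducts in the fibers of $\mathcal{L}$, consider tupling $\langle f, g\rangle$. In the Python sketch below (hypothetical names; linear maps are assumed to be plain functions on tuples of floats), forward-mode tupling feeds the same tangent to both branches and pairs the results, whereas reverse-mode tupling must split the output cotangent and add up the two resulting input cotangents. In this finite-dimensional model, that addition is the biproduct structure of real vector spaces.

```python
from typing import Callable, Tuple

Vec = Tuple[float, ...]
LinMap = Callable[[Vec], Vec]
Chad = Callable[[Vec], Tuple[Vec, LinMap]]  # x |-> (primal output, linear part)


def pair_fwd(f: Chad, g: Chad) -> Chad:
    """<f, g> in the forward-mode category: the tangent goes to both branches and
    the two output tangents are concatenated (a product of linear types)."""
    def h(x: Vec) -> Tuple[Vec, LinMap]:
        y1, df = f(x)
        y2, dg = g(x)
        return y1 + y2, lambda v: df(v) + dg(v)  # tuple concatenation, not addition
    return h


def pair_rev(f: Chad, g: Chad) -> Chad:
    """<f, g> in the reverse-mode category: the output cotangent is split and the
    two input cotangents are added using the additive structure of the fiber."""
    def h(x: Vec) -> Tuple[Vec, LinMap]:
        y1, df_t = f(x)
        y2, dg_t = g(x)
        n1 = len(y1)

        def pullback(w: Vec) -> Vec:
            a, b = df_t(w[:n1]), dg_t(w[n1:])
            return tuple(p + q for p, q in zip(a, b))  # elementwise sum

        return y1 + y2, pullback
    return h


# Hypothetical primitives on real^2 with their tangent and transposed (cotangent) maps.
def mul_fwd(x: Vec):
    return (x[0] * x[1],), lambda v: (x[1] * v[0] + x[0] * v[1],)


def mul_rev(x: Vec):
    return (x[0] * x[1],), lambda w: (x[1] * w[0], x[0] * w[0])


def add_fwd(x: Vec):
    return (x[0] + x[1],), lambda v: (v[0] + v[1],)


def add_rev(x: Vec):
    return (x[0] + x[1],), lambda w: (w[0], w[0])
```

For instance, `pair_rev(mul_rev, add_rev)((2.0, 3.0))` returns the output `(6.0, 5.0)` and a pullback that sends the cotangent `(1.0, 1.0)` to `(3.0 + 1.0, 2.0 + 1.0)`; without the final sum, the two contributions to the input cotangent could not be combined.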

The crucial notion here is our (novel) notion of extensivity of an indexed category, which generalizes well-known notions of extensive categories. In particular, we call $\mathcal{L}:\mathcal{C}^{op}\to \mathbf{Cat}$ extensive if the canonical functor $\mathcal{L}(\sqcup_{i=1}^n C_i)\to \prod_{i=1}^n \mathcal{L}(C_i)$ is an equivalence. Furthermore, we note that we need to reestablish the product and exponential structures of $\Sigma_\mathcal{C} \mathcal{L}$ and $\Sigma_\mathcal{C} \mathcal{L}^{op}$ due to the generalization from locally indexed to arbitrary strictly indexed categories $\mathcal{L}$.

Using these results, we construct a suitable target language ${\mathbf{LSyn}}:{\mathbf{CSyn}}^{op}\to\mathbf{Cat}$ for CHAD on a source language with sum types (and tuple and function types), derive the forward and reverse CHAD algorithms for such a language, and reestablish Theorems A and B in this more general context. This target language is a standard dependently typed enriched effect calculus with cartesian sum types and extensive families of linear types (i.e., dependent linear types that can be defined through case distinction). Again, the correctness proof of Theorem A uses the universal property of Theorem B and a logical relations (categorical sconing) construction over the denotational semantics of the source and target languages. This logical relations construction is relatively straightforward and relies on well-known sconing methods for bicartesian closed categories. In particular, we obtain the following formulas for a sum type $\left\{\ell_1\tau_1\mid\cdots\mid \ell_n\tau_n\right\}$ with constructors $\ell_1,\ldots,\ell_n$ that take arguments of types $\tau_1,\ldots,\tau_n$:

\begin{align*} &\overrightarrow{\mathcal{D}}(\left\{\ell_1\tau_1\mid\cdots\mid \ell_n\tau_n\right\})_1 \stackrel{\mathrm{def}}= \left\{\ell_1\overrightarrow{\mathcal{D}}(\tau_1)_1\mid \cdots \mid\ell_n\overrightarrow{\mathcal{D}}(\tau_n)_1\right\}\\ &\overrightarrow{\mathcal{D}}(\left\{\ell_1\tau_1\mid\cdots\mid \ell_n\tau_n\right\})_2\stackrel{\mathrm{def}}= \mathbf{case}\,{p}\,\mathbf{of}\,\{\ell_1{p}\to \overrightarrow{\mathcal{D}}(\tau_1)_2\mid\cdots\mid \ell_n{p}\to\overrightarrow{\mathcal{D}}(\tau_n)_2\}\\ &\overleftarrow{\mathcal{D}}(\left\{\ell_1\tau_1\mid\cdots\mid \ell_n\tau_n\right\})_1 \stackrel{\mathrm{def}}= \left\{\ell_1\overleftarrow{\mathcal{D}}(\tau_1)_1\mid \cdots \mid\ell_n\overleftarrow{\mathcal{D}}(\tau_n)_1\right\}\\ &\overleftarrow{\mathcal{D}}(\left\{\ell_1\tau_1\mid\cdots\mid \ell_n\tau_n\right\})_2\stackrel{\mathrm{def}}= \mathbf{case}\,{p}\,\mathbf{of}\,\{\ell_1{p}\to \overleftarrow{\mathcal{D}}(\tau_1)_2\mid\cdots\mid \ell_n{p}\to\overleftarrow{\mathcal{D}}(\tau_n)_2\},\end{align*}

mirroring our intuition that the (co)tangent bundle to a coproduct of spaces decomposes (extensively) into the (co)tangent bundles to the component spaces.
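The case distinction in these formulas can be mimicked at the level of values. The Python sketch below is a hypothetical model, not the paper's type system: it encodes a few first-order source types as tuples (`("real", n)`, `("unit",)`, `("prod", t1, t2)`, and `("sum", {label: t})`) and computes the linear type $\overrightarrow{\mathcal{D}}(\tau)_2$ with the primal variable p instantiated, returning a closed linear type whose dimension `dim` reports. We assume products are handled componentwise; for these types $\overleftarrow{\mathcal{D}}(\tau)_2$ has the same shape, so one function serves both modes.

```python
from typing import Any, Tuple

# Hypothetical encoding of first-order source types:
#   ("real", n)        real^n, with primal values given as n-tuples of floats
#   ("unit",)          1, with primal value ()
#   ("prod", t1, t2)   t1 * t2, with primal values given as pairs (p1, p2)
#   ("sum", {l: t})    {l1 t1 | ... | ln tn}, with primal values (label, payload)
Type = Tuple[Any, ...]


def tangent_type(t: Type, p: Any) -> Type:
    """The linear type D(t)_2 at the primal p; for sum types it depends on p."""
    kind = t[0]
    if kind == "real":
        return ("real", t[1])
    if kind == "unit":
        return ("unit",)
    if kind == "prod":  # assumed componentwise: D(t1)_2 at fst p, D(t2)_2 at snd p
        return ("prod", tangent_type(t[1], p[0]), tangent_type(t[2], p[1]))
    if kind == "sum":   # case p of {l p -> D(t_l)_2}
        label, payload = p
        return tangent_type(t[1][label], payload)
    raise ValueError(f"unknown type former: {kind!r}")


def dim(lt: Type) -> int:
    """Dimension of a closed linear type built from real^n, unit and products."""
    kind = lt[0]
    if kind == "real":
        return lt[1]
    if kind == "unit":
        return 0
    if kind == "prod":
        return dim(lt[1]) + dim(lt[2])
    raise ValueError(f"not a closed linear type: {kind!r}")


# Example source type: real * {Circle real | Rect real^2}
SHAPE = ("sum", {"Circle": ("real", 1), "Rect": ("real", 2)})
T = ("prod", ("real", 1), SHAPE)
```

With this encoding, the tangent space of `T` at a primal built with `Rect` is 3-dimensional, while at a primal built with `Circle` it is 2-dimensional, matching the picture of a bundle that decomposes over the components of the coproduct.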

2.5 CHAD for (co)inductive types: where do we begin?

If we are to really push forward the dream of differentiable programming, we need to learn how to perform AD on programs that operate on data types. To this effect, we analyze CHAD for inductive and coinductive types. If we want to follow our previous methodology to find suitable definitions and correctness proofs, we first need a good categorical axiomatization of such types. It is well known that inductive types correspond to initial algebras of functors, while coinductive types are precisely terminal coalgebras. The question, however, is what class of functors to consider. That choice makes the vague notion of (co)inductive types precise.

Following Santocanale (2002), we work with the class of $\mu\nu$-polynomials, a relatively standard choice: functors that can be defined inductively through the combination of

• constants for primitive types ${\mathbf{real}}^n$;

• type variables ${\alpha}$;

• unit and tuple types $\mathbf{1}$ and $\tau\boldsymbol{\mathop{*}}\sigma$ of $\mu\nu$-polynomials;

• sum types $\left\{\ell_1\tau_1\mid\cdots\mid \ell_n\tau_n\right\}$ of $\mu\nu$-polynomials;

• initial algebras $\mu{\alpha}.\tau$ of $\mu\nu$-polynomials;

• terminal coalgebras $\nu{\alpha}.\tau$ of $\mu\nu$-polynomials.

Notably, we exclude function types, as the non-fibered nature of exponentials in $\Sigma_\mathcal{C} \mathcal{L}$ and $\Sigma_\mathcal{C} \mathcal{L}^{op}$ would significantly complicate the technical development. While this excludes certain examples like the free state monad (which for state type $\sigma$ would be the initial algebra $\mu{\alpha}.\left\{Get (\sigma\to {\alpha})\mid Put (\sigma\boldsymbol{\mathop{*}} {\alpha})\right\}$), it still includes the vast majority of eager and lazy types that one uses in practice, for example, lists $\mu{\alpha}.\left\{Empty\,\mathbf{1}\mid Cons (\sigma\boldsymbol{\mathop{*}} {\alpha})\right\}$, (finitely branching) labeled trees like $\mu{\alpha}.\left\{Leaf\,\mathbf{1}\mid Node (\sigma\boldsymbol{\mathop{*}} {\alpha}\boldsymbol{\mathop{*}} {\alpha})\right\}$, streams $\nu{\alpha}.\sigma\boldsymbol{\mathop{*}} {\alpha}$, and many more.
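The contrast between the eager (inductive) and lazy (coinductive) examples can be sketched in Python under our own encoding assumptions: a list is either `None` (Empty) or a pair `(head, tail)` (Cons) and is consumed by its fold, the unique algebra morphism out of the initial algebra, while a stream is a thunk that, when forced (unrolled), yields a head and another stream, and is produced by an unfold, the unique coalgebra morphism into the terminal coalgebra. The names `roll_empty`, `roll_cons`, `fold_list`, `unfold_stream`, and `take` are hypothetical, and Python itself enforces neither the finiteness of lists nor the productivity of streams.

```python
from typing import Any, Callable, Optional, Tuple

# Hypothetical encodings:
#   list:   None (Empty) or (head, tail) (Cons); finite and built eagerly
#   stream: a zero-argument function returning (head, stream); forced on demand
ConsList = Optional[Tuple[Any, Any]]
Stream = Callable[[], Tuple[Any, Any]]


def roll_empty() -> ConsList:
    return None


def roll_cons(head: Any, tail: ConsList) -> ConsList:
    return (head, tail)


def fold_list(xs: ConsList, empty: Any, cons: Callable[[Any, Any], Any]) -> Any:
    """Fold for mu a.{Empty 1 | Cons(s * a)}: replace Empty by `empty` and Cons by `cons`."""
    heads = []
    while xs is not None:  # walk the finite spine
        head, xs = xs
        heads.append(head)
    acc = empty
    for head in reversed(heads):  # rebuild from the innermost Cons outwards
        acc = cons(head, acc)
    return acc


def unfold_stream(step: Callable[[Any], Tuple[Any, Any]], seed: Any) -> Stream:
    """Unfold into nu a. s * a: each unroll computes one head and the next seed."""
    def unrolled() -> Tuple[Any, Stream]:
        head, next_seed = step(seed)
        return head, unfold_stream(step, next_seed)
    return unrolled


def take(stream: Stream, n: int) -> list:
    """Observe the first n elements of a (possibly infinite) stream."""
    out = []
    for _ in range(n):
        head, stream = stream()  # unroll once
        out.append(head)
    return out
```

For example, `fold_list(roll_cons(1.0, roll_cons(2.0, roll_empty())), 0.0, lambda h, acc: h + acc)` returns `3.0`, and `take(unfold_stream(lambda n: (n, n + 1), 0), 3)` returns `[0, 1, 2]`: the list is finite and fully built, while the stream of natural numbers is only ever observed up to a finite prefix.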

We characterize conditions on a strictly indexed category $\mathcal{L}:\mathcal{C}^{op}\to\mathbf{Cat}$ that guarantee that $\Sigma_\mathcal{C}\mathcal{L}$ and $\Sigma_\mathcal{C} \mathcal{L}^{op}$ have this precise notion of inductive and coinductive types. The first step is to give a characterization of initial algebras and terminal coalgebras of split fibration endofunctors on $\Sigma_\mathcal{C}\mathcal{L}$ and $\Sigma_\mathcal{C} \mathcal{L}^{op}$. For legibility, we state the results here for simple endofunctors and (co)algebras, but they generalize to parameterized endofunctors and (co)algebras.

Theorem D (Characterization of initial algebras and terminal coalgebras in $\Sigma$-categories, Corollary 49 and Theorem 52). Let E be a split fibration endofunctor on $\Sigma_\mathcal{C}\mathcal{L}$ (resp. $\Sigma_\mathcal{C} \mathcal{L}^{op}$) and let $(\overline{E},e)$ be the corresponding strictly indexed endofunctor on $\mathcal{L}$. Then, E has a (fibered) initial algebra if

• $\overline{E}:\mathcal{C}\to\mathcal{C}$ has an initial algebra $\mathbf{\mathfrak{in}}_{\overline{E}}:\overline{E}(\mu\overline{E})\to \mu\overline{E}$;

• $\mathcal{L}(\mathbf{\mathfrak{in}}_{\overline{E}})^{-1}\, e_{\mu\overline{E}}:\mathcal{L}(\mu\overline{E})\to \mathcal{L}(\mu\overline{E})$ has an initial algebra (resp. terminal coalgebra);

• $\mathcal{L}(f)$ preserves initial algebras (resp. terminal coalgebras) for all morphisms $f\in \mathcal{C}$;

and E has a (fibered) terminal coalgebra if

• $\overline{E}:\mathcal{C}\to\mathcal{C}$ has a terminal coalgebra $\mathbf{\mathfrak{out}}_{\overline{E}}:\nu\overline{E}\to \overline{E}(\nu\overline{E})$;

• $\mathcal{L}(\mathbf{\mathfrak{out}}_{\overline{E}})\, e_{\nu\overline{E}}:\mathcal{L}(\nu\overline{E})\to \mathcal{L}(\nu\overline{E})$ has a terminal coalgebra (resp. initial algebra);

• $\mathcal{L}(f)$ preserves terminal coalgebras (resp. initial algebras) for all morphisms $f\in \mathcal{C}$.

We use this result to give sufficient conditions for (fibered) $\mu\nu$-polynomials (including their fibered initial algebras and terminal coalgebras) to exist in $\Sigma_\mathcal{C}\mathcal{L}$ and $\Sigma_\mathcal{C}\mathcal{L}^{op}$. In particular, we show that, to perform CHAD on a source language $\mathbf{Syn}$ with inductive and coinductive types, it suffices to extend the target language ${\mathbf{LSyn}}:{\mathbf{CSyn}}^{op}\to \mathbf{Cat}$ with both cartesian and linear inductive and coinductive types. Again, an analogue of Theorem B holds.

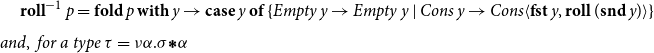

We write $\mathbf{roll}\,{x}$ for the constructor of inductive types (applied to an identifier x), $\mathbf{unroll}\,x$ for the destructor of coinductive types, and ${\tau}.\mathbf{roll}^{-1}\,x\stackrel{\mathrm{def}}= \mathbf{fold}\,x\,\mathbf{with}\,y\to\tau[{}^{y\vdash \mathbf{roll}\,y}\!/\!_{{\alpha}}]$, where we write $\tau[{}^{y\vdash \mathbf{roll}\,y}\!/\!_{{\alpha}}]$ for the functorial action of the parameterized type $\tau$ with type parameter ${\alpha}$ on the term $\mathbf{roll}\,y$ in context y. This yields the following formulas for the spaces of primals and (co)tangent vectors to (co)inductive types:

\begin{align*}
&\overrightarrow{\mathcal{D}}({\alpha})_1\stackrel {\mathrm{def}}= {\alpha} \qquad\qquad & \overrightarrow{\mathcal{D}}({\alpha})_2 \stackrel {\mathrm{def}}= \underline{\alpha}\\
&\overrightarrow{\mathcal{D}}(\mu{\alpha}.\tau)_1\stackrel {\mathrm{def}}= \mu{\alpha}.\overrightarrow{\mathcal{D}}(\tau)_1\qquad\qquad&\overrightarrow{\mathcal{D}}(\mu{\alpha}.\tau)_2\stackrel {\mathrm{def}}= \underline{\mu}\underline{\alpha}.\overrightarrow{\mathcal{D}}(\tau)_2[{}^{{\overrightarrow{\mathcal{D}}(\tau)_1}.\mathbf{roll}^{-1}{p}}\!/\!_{{p}}]\\
&\overrightarrow{\mathcal{D}}(\nu{\alpha}.\tau)_1\stackrel {\mathrm{def}}= \nu{\alpha}.\overrightarrow{\mathcal{D}}(\tau)_1\qquad\qquad&\overrightarrow{\mathcal{D}}(\nu{\alpha}.\tau)_2\stackrel {\mathrm{def}}= \underline{\nu}\underline{\alpha}.\overrightarrow{\mathcal{D}}(\tau)_2[{}^{\mathbf{unroll}\,{p}}\!/\!_{{p}}]\\
&\overleftarrow{\mathcal{D}}({\alpha})_1\stackrel {\mathrm{def}}= {\alpha} \qquad\qquad & \overleftarrow{\mathcal{D}}({\alpha})_2 \stackrel {\mathrm{def}}= \underline{\alpha}\\
&\overleftarrow{\mathcal{D}}(\mu{\alpha}.\tau)_1\stackrel {\mathrm{def}}= \mu{\alpha}.\overleftarrow{\mathcal{D}}(\tau)_1\qquad\qquad&\overleftarrow{\mathcal{D}}(\mu{\alpha}.\tau)_2\stackrel {\mathrm{def}}= \underline{\nu}\underline{\alpha}.\overleftarrow{\mathcal{D}}(\tau)_2[{}^{{\overrightarrow{\mathcal{D}}(\tau)_1}.\mathbf{roll}^{-1}{p}}\!/\!_{{p}}]\\
&\overleftarrow{\mathcal{D}}(\nu{\alpha}.\tau)_1\stackrel {\mathrm{def}}= \nu{\alpha}.\overleftarrow{\mathcal{D}}(\tau)_1\qquad\qquad&\overleftarrow{\mathcal{D}}(\nu{\alpha}.\tau)_2\stackrel {\mathrm{def}}= \underline{\mu}\underline{\alpha}.\overleftarrow{\mathcal{D}}(\tau)_2[{}^{\mathbf{unroll}\,{p}}\!/\!_{{p}}]
\end{align*}
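As an informal illustration outside the formal development, the definition of $\tau.\mathbf{roll}^{-1}$ via $\mathbf{fold}$ can be mimicked in Python. The sketch below assumes a hypothetical encoding of the list functor $\{Empty\,\mathbf{1}\mid Cons(\sigma\boldsymbol{\mathop{*}}\alpha)\}$ as tagged tuples; `fmap` plays the role of the functorial action $\tau[{}^{-}\!/\!_{\alpha}]$, `Roll` the constructor $\mathbf{roll}$, and `fold` the iterator $\mathbf{fold}\,x\,\mathbf{with}\,y\to\ldots$. All of these names are our own.

```python
from dataclasses import dataclass
from typing import Any, Callable, Tuple

# Hypothetical encoding of one layer of the list functor
#   F(alpha) = {Empty 1 | Cons (sigma * alpha)}
# as tagged tuples: ("Empty", ()) or ("Cons", (head, tail)).
Layer = Tuple[str, Any]


def fmap(h: Callable[[Any], Any], layer: Layer) -> Layer:
    """Functorial action of F: apply h to the recursive position only."""
    tag, payload = layer
    if tag == "Empty":
        return ("Empty", payload)
    head, tail = payload
    return ("Cons", (head, h(tail)))


@dataclass(frozen=True)
class Roll:
    """The constructor roll : F(mu F) -> mu F of the inductive type."""
    layer: Layer


def fold(p: Roll, alg: Callable[[Layer], Any]) -> Any:
    """fold p with y -> alg(y): structural recursion over an inductive value."""
    return alg(fmap(lambda t: fold(t, alg), p.layer))


def roll_inv(p: Roll) -> Layer:
    """roll^{-1} p = fold p with y -> F[roll y / alpha], i.e. alg = fmap(Roll)."""
    return fold(p, lambda y: fmap(Roll, y))


def from_list(xs: list) -> Roll:
    """Helper: build the inductive value corresponding to a Python list."""
    p = Roll(("Empty", ()))
    for x in reversed(xs):
        p = Roll(("Cons", (x, p)))
    return p


xs = from_list([1.0, 2.0, 3.0])
# roll^{-1} undoes roll: it exposes the outermost layer again.
assert roll_inv(xs) == xs.layer
```

Note that `roll_inv` is defined only in terms of `fold` and `fmap`, mirroring how $\tau.\mathbf{roll}^{-1}$ is derived rather than taken as primitive.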

Insight 6. Types of primals to (co)inductive types are (co)inductive types of primals; types of tangents to (co)inductive types are linear (co)inductive types of tangents; and types of cotangents to inductive (resp. coinductive) types are linear coinductive (resp. inductive) types of cotangents.

For example, for a type $\tau=\mu{\alpha}.\left\{Empty\,\mathbf{1}\mid Cons (\sigma\boldsymbol{\mathop{*}} {\alpha})\right\}$ of lists of elements of type $\sigma$, we have a cotangent space:

$\mathbf{roll}^{-1}\,{p}=\mathbf{fold}\,{p}\,\mathbf{with}\,y\to\mathbf{case}\,y\,\mathbf{of}\,\{Empty\,y\to Empty\,y\mid Cons\,y\to Cons\langle\mathbf{fst}\,y,\mathbf{roll}\,(\mathbf{snd}\,y)\rangle\}$

and, for a type $\tau=\nu{\alpha}.\sigma\boldsymbol{\mathop{*}} {\alpha}$ of streams, we have a cotangent space:

We demonstrate that the strictly indexed category $\mathbf{FVect}:\mathbf{Set}^{op}\to \mathbf{Cat}$ of families of vector spaces also satisfies our conditions, so that, by Theorem B, it gives a concrete denotational semantics of the target language ${\mathbf{LSyn}}:{\mathbf{CSyn}}^{op}\to\mathbf{Cat}$. To re-establish the correctness result, Theorem A, existing logical relations techniques do not suffice, as far as we are aware. Instead, we prove it by developing a novel theory of categorical logical relations (sconing) for languages with expressive type systems like our AD source language.
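To give some intuition for a semantics in families of vector spaces, the following Python sketch assumes (an illustrative choice of ours, not a definition from this paper) that a list of $n$ reals has tangent and cotangent spaces $\mathbb{R}^n$, so both form a family of vector spaces indexed by the primal. For the hypothetical operation `adjacent_products`, $[x_0,\ldots,x_{n-1}]\mapsto[x_0x_1,\ldots,x_{n-2}x_{n-1}]$, the derivative maps $\mathbb{R}^n$ to $\mathbb{R}^{n-1}$ and its transpose maps back; the identity $\langle Df(x)v,w\rangle=\langle v,Df(x)^{t}w\rangle$ checks that the reverse-mode function really computes the transpose.

```python
from typing import List

Vec = List[int]


def dot(u: Vec, v: Vec) -> int:
    """Standard inner product on R^n (here restricted to integer vectors)."""
    assert len(u) == len(v)
    return sum(a * b for a, b in zip(u, v))


def adjacent_products(xs: Vec) -> Vec:
    """Hypothetical primitive: [x0, ..., x_{n-1}] -> [x0*x1, ..., x_{n-2}*x_{n-1}]."""
    return [xs[i] * xs[i + 1] for i in range(len(xs) - 1)]


def d_adjacent_products(xs: Vec, v: Vec) -> Vec:
    """Forward mode: a tangent v in R^n (n = len xs) to a tangent in R^(n-1)."""
    assert len(v) == len(xs)  # the tangent space depends on the primal xs
    return [xs[i + 1] * v[i] + xs[i] * v[i + 1] for i in range(len(xs) - 1)]


def dt_adjacent_products(xs: Vec, w: Vec) -> Vec:
    """Reverse mode: a cotangent w in R^(n-1) to its transpose image in R^n."""
    assert len(w) == max(len(xs) - 1, 0)  # the cotangent space depends on xs
    out = [0] * len(xs)
    for i, wi in enumerate(w):
        out[i] += xs[i + 1] * wi      # partial of x_i * x_{i+1} w.r.t. x_i
        out[i + 1] += xs[i] * wi      # partial of x_i * x_{i+1} w.r.t. x_{i+1}
    return out


xs, v, w = [1, 2, 3, 4], [1, 0, 2, -1], [3, -1, 2]
assert dot(d_adjacent_products(xs, v), w) == dot(v, dt_adjacent_products(xs, w))
```

Integer inputs keep the check exact; with floating-point inputs one would compare the two inner products up to a tolerance.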

Insight 7. We can obtain powerful logical relations techniques for reasoning about expressive type systems by analyzing when the forgetful functor from a category of logical relations to the underlying category is comonadic and monadic.

In almost all instances, the forgetful functor from a category of logical relations to the underlying category is comonadic, and in many instances, including ours, it is even monadic. This gives us the following logical relations techniques for expressive type systems:

Theorem E (Logical relations for expressive types, Section 11). Let $G:\mathcal{C}\to\mathcal{D}$ be a functor. Then:

-

• If $\mathcal{D}$ has binary products, then the forgetful functor $\mathcal{D}\downarrow G\to \mathcal{D}\times\mathcal{C}$ from the scone (the comma category) is comonadic (Theorem 97).

-

• If $G$ has a left adjoint and $\mathcal{C}$ has binary coproducts, then $\mathcal{D}\downarrow G\to \mathcal{D}\times\mathcal{C}$ is monadic (Corollary 99).

This is relevant because:

-

• comonadic functors create initial algebras (Theorem 109);

-

• monadic functors create terminal coalgebras (Theorem 109);

-

• monadic–comonadic functors create $\mu\nu$-polynomials (Corollary 110);

-

• if $\mathcal{E}$ is monadic–comonadic over $\mathcal{E}'$, then $\mathcal{E}$ is finitely complete and cartesian closed if $\mathcal{E}'$ is (Proposition 103).

As a consequence, we can lift our concrete denotational semantics of all types, including inductive and coinductive types, to our categories of logical relations over the semantics.
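The first item of Theorem E can be made concrete in a small Python sketch. As a simplifying assumption of our own, take $\mathcal{C}=\mathcal{D}$ to be Python values and functions, binary products to be pairs, and $G$ to be an "optional value" functor given by the hypothetical `gmap`. One standard way to see comonadicity (stated here without proof) is that the forgetful functor has a right adjoint $(D,C)\mapsto(D\times GC, C, \pi_2)$, which induces the comonad $(D,C)\mapsto(D\times GC,C)$ on $\mathcal{D}\times\mathcal{C}$. Its second component is the identity, so the code models only the first. A scone object $f:D\to GC$ corresponds to the coalgebra $\langle\mathrm{id},f\rangle$; the assertions check the coalgebra laws on sample inputs, which is a sanity check rather than a proof.

```python
from typing import Any, Callable, Optional, Tuple

# W(D, C) = (D x G C, C); we model only its first component, pairs (d, gc).
W = Tuple[Any, Any]


def gmap(k: Callable[[Any], Any], gc: Optional[Any]) -> Optional[Any]:
    """Hypothetical functor G = 'optional value': map k over a present value."""
    return None if gc is None else k(gc)


def counit(w: W) -> Any:
    """epsilon = (pi_1, id): forget the G C component."""
    d, _gc = w
    return d


def comult(w: W) -> Tuple[W, Any]:
    """delta: (d, gc) -> ((d, gc), gc), duplicating the G C component."""
    d, gc = w
    return ((d, gc), gc)


def wmap(h: Callable[[Any], Any], k: Callable[[Any], Any]) -> Callable[[W], W]:
    """Action of W on a morphism (h, k) : (D, C) -> (D', C')."""
    def go(w: W) -> W:
        d, gc = w
        return (h(d), gmap(k, gc))
    return go


def to_coalgebra(f: Callable[[Any], Any]) -> Callable[[Any], W]:
    """A scone object f : D -> G C gives the coalgebra <id, f> (with id on C)."""
    return lambda d: (d, f(d))


def from_coalgebra(alpha: Callable[[Any], W]) -> Callable[[Any], Any]:
    """A coalgebra alpha satisfying the counit law gives back pi_2 . alpha."""
    return lambda d: alpha(d)[1]


f = lambda n: str(n) if n % 2 == 0 else None  # sample scone object int -> Optional[str]
alpha = to_coalgebra(f)
for n in range(6):
    assert counit(alpha(n)) == n                                    # counit law
    assert comult(alpha(n)) == wmap(alpha, lambda c: c)(alpha(n))   # comultiplication law
    assert from_coalgebra(alpha)(n) == f(n)                         # round trip
```

The comonad needs the pair $D\times GC$, which is where the hypothesis that $\mathcal{D}$ has binary products enters.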

These logical relations techniques suffice to establish the correctness Theorem A. Indeed, as long as derivatives of primitive operations are correctly implemented in the sense that $\unicode{x27E6} D\mathrm{op}\unicode{x27E7}=D\unicode{x27E6} \mathrm{op}\unicode{x27E7}$ and $\unicode{x27E6} {D\mathrm{op}}^{t}\unicode{x27E7}={D\unicode{x27E6} \mathrm{op}\unicode{x27E7}}^{t}$, Theorem E tells us that the unique structure-preserving functors

\begin{align*}&(\unicode{x27E6} -\unicode{x27E7},\unicode{x27E6} \overrightarrow{\mathcal{D}}(-)\unicode{x27E7}):\mathbf{Syn}\to \mathbf{Set}\times \Sigma_\mathbf{Set} \mathbf{FVect}\\ & (\unicode{x27E6} -\unicode{x27E7},\unicode{x27E6} \overleftarrow{\mathcal{D}}(-)\unicode{x27E7}):\mathbf{Syn}\to \mathbf{Set}\times \Sigma_\mathbf{Set}\mathbf{FVect}^{op}\end{align*}


lift to the scones of $\mathrm{Hom}(({\mathbb{R}}^k,({\mathbb{R}}^k,\underline{\mathbb{R}}^k)),-) :\mathbf{Set}\times \Sigma_\mathbf{Set} \mathbf{FVect}\to\mathbf{Set}$ and