Imprinting or programming as a result of early life experience is becoming an accepted scientific phenomenon. Implicit in this is the concept of a ‘stage of developmental plasticity, where specific conditions give rise to later life outcomes’. The most significant aspect is metabolic imprinting, in which maternal undernutrition, obesity and diabetes during gestation and lactation can contribute towards obesity in the offspring(Reference Levin1). Other endpoints that seem to be affected by early life exposure include neurodevelopment and immune modulation.

The concept of fetal growth affecting adult disease was explained by Barker(Reference Barker2, Reference Barker3) in his seminal papers. Programming later evolved to mean alterations in nutrition and growth at specific developmental points, resulting in long-term or even permanent effects(Reference Lucas4).

Observation is the first step and the initial link between health and early diet is often found from epidemiological investigations. One of the most exemplary studies is the Avon Longitudinal Study of Parents and Children (ALSPAC) cohort(Reference Ness5). This has been the source for a host of publications in which early diet is linked to later obesity and to other health endpoints, as outlined in Table 1.

Table 1 Correlations between early exposure and later health outcomes from the Avon longitudinal study of parents and children cohort

Other cohorts include the Helsinki study, which showed a link between prenatal and postnatal factors and type 2 diabetes(Reference Eriksson, Forsén and Tuomilehto6); studies relating diet in pregnancy to the blood pressure of offspring(Reference Campbell, Hall and Barker7) and maternal nutritional status to blood pressure(Reference Godfrey, Forrester and Barker8); and the USA National Children's Study. This latter study is designed to examine the effects of environmental influences on the health and development of 100 000 children across the USA, following them from before birth until age 21. The goal of the study is to improve the health and well-being of children by assessing the impact of exposure on health endpoints. These and other studies are presented in more detail in the section ‘Nutritional epigenomics: how to make sense of what we measure’.

These and other observational studies can be misinterpreted because of confounders; however, they can help to establish associations, which can then be tested by intervention to demonstrate causality. The observational evidence has been the starting point for the scope of this review since it provides a strong suggestion of a measurable effect apparently linked to an earlier exposure. However, while numerous studies have been carried out using animal models – particularly in relation to obesity – human clinical interventions are, for obvious reasons, comparatively rare.
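How a confounder can produce an apparent association may be illustrated with a small stratified calculation. The sketch below is illustrative only: the counts are invented (they are not taken from ALSPAC or any cohort cited here), the confounder is hypothetical, and the names `Stratum`, `crude_risk_ratio` and `mantel_haenszel_risk_ratio` are introduced purely for this example. It compares the crude risk ratio with the Mantel-Haenszel risk ratio pooled across strata of the confounder.

```python
from dataclasses import dataclass


@dataclass
class Stratum:
    """Counts for one level of a hypothetical confounder (invented data)."""
    exposed_cases: int
    exposed_total: int
    unexposed_cases: int
    unexposed_total: int


def crude_risk_ratio(strata):
    """Risk ratio computed after collapsing all strata (ignores the confounder)."""
    exposed_cases = sum(s.exposed_cases for s in strata)
    exposed_total = sum(s.exposed_total for s in strata)
    unexposed_cases = sum(s.unexposed_cases for s in strata)
    unexposed_total = sum(s.unexposed_total for s in strata)
    risk_exposed = exposed_cases / exposed_total
    risk_unexposed = unexposed_cases / unexposed_total
    return risk_exposed / risk_unexposed


def mantel_haenszel_risk_ratio(strata):
    """Mantel-Haenszel pooled risk ratio, adjusting for the stratifying factor."""
    numerator = 0.0
    denominator = 0.0
    for s in strata:
        stratum_size = s.exposed_total + s.unexposed_total
        numerator += s.exposed_cases * s.unexposed_total / stratum_size
        denominator += s.unexposed_cases * s.exposed_total / stratum_size
    return numerator / denominator


# Invented counts: within each stratum the risk is identical in exposed and
# unexposed children, but exposure is much more common where the
# confounder is present and the baseline risk is high.
STRATA = [
    Stratum(exposed_cases=40, exposed_total=80, unexposed_cases=10, unexposed_total=20),
    Stratum(exposed_cases=2, exposed_total=20, unexposed_cases=8, unexposed_total=80),
]

if __name__ == "__main__":
    print("crude risk ratio:", round(crude_risk_ratio(STRATA), 2))
    print("adjusted risk ratio:", round(mantel_haenszel_risk_ratio(STRATA), 2))
```

With these invented counts, the crude risk ratio is about 2.33 whereas the stratum-adjusted value is 1.0, so the apparent effect of the exposure is produced entirely by the uneven distribution of the confounder. In real cohorts the relevant confounders are often unmeasured, which is why intervention studies are needed to test causality.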

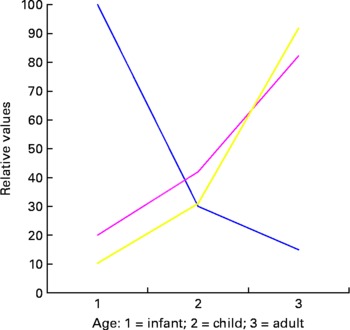

Another crucial element in considering long-term results from early exposure is the timing and duration of exposure. In many cases, the potential long-term effect of a single intervention is greatest in the developing system (infant or fetus), and the size of the effective dose may be relatively low. However, the ability to predict the precise effect of the intervention in terms of long-term outcome is also low. In adults, the opposite is often the case: the dietary intervention may have to be substantial and its effect is immediately measurable, but the long-term effect of a single intervention is low, as shown conceptually in Fig. 1.

Fig. 1 Conceptual figure on the effects of exposure at different developmental ages. Curves shown: predictive power; intervention; long-term effect.

A further complication is that clinical endpoints change with time and often the effects of the initial programming event may be significantly diluted by a lifetime exposure to a range of other factors. This, together with reducing metabolic plasticity and increased differentiation, may be a major factor in the lowering of programming potential as cells and organisms get older (Fig. 2).

Fig. 2 The shrinking of unmodified endpoints and increase in environmentally modified endpoints over time as external factors impact through a lifetime.

In mechanistic terms, it has been hypothesised that fetal developmental plastic responses can cause changes in lean body mass, endocrinology, blood flow and vascular loading. These responses are then modulated or amplified in infancy and childhood and therefore lead to increased susceptibility – particularly to cardiovascular and metabolic disease – in adulthood(Reference Javaid, Crozier and Harvey9). In developmental terms, fetal exposure, as a result of maternal diet, to a range of dietary components including Ca, folate, Mg, Zn and high or low protein has been associated, with varying degrees of confidence, with birth weight. Low birth weight is by itself associated with a range of long-term outcomes, including insulin resistance and type 2 diabetes in later life.

A further addition to the genre was the use of the term ‘metabolic imprinting’ which was adopted by Waterland & Garza(Reference Waterland and Garza10), who suggested that while biological mechanisms exist to ‘memorise’ the metabolic effects of early nutrient exposure, these were not hypothesis driven. They proposed to apply more rigour to the testing of chronic disease outcomes and on the development of biological mechanisms and the testing of hypotheses. Metabolic imprinting was defined as ‘the basic biological phenomena that putatively underlie the relations among nutritional experiences of early life and later diseases’.

The authors also considered that metabolic programming, while being a useful term, did not convey with sufficient clarity the key aspects of imprinting which, as they saw it, was required to encompass both susceptibility limited to a specific developmental window and a persistent effect lasting through adulthood – although it is not clear if the magnitude of the effect should be consistent through adulthood or if a falling off in potency is acceptable.

They also considered that the outcome should be specific and measurable and that a dose–response or threshold relation between a specific exposure and an outcome should be demonstrable. Waterland & Garza(Reference Waterland and Garza10) also distinguished between other types of imprinting (e.g. hormonal and metabolic). The essence of their argument appears to be that ‘imprinting’ has particular characteristics and the term can be used in conjunction with several prefixes dependent upon the target physiological effect but that in each case there should be a mechanistic underpinning for the use of the term.

Following this suggestion, Lucas(Reference Lucas11) raised some questions as to the use of the term ‘imprinting’. His main argument was that programming can encompass a wide range of biological effects, whereas imprinting had a much narrower range. In addition, he felt that the use of a term more usually associated with a quite distinct event (i.e. gene imprinting) would inevitably lead to confusion. Since this early exchange, a plethora of papers have appeared which seem to use the terms imprinting and programming almost interchangeably. This is not a particularly helpful situation for communication to both scientific and non-scientific stakeholders, and a robust and reliable definition is a prerequisite to developing the area.

Epigenetics, on the other hand, has been quite strictly defined in terms of specific molecular events relating to gene expression and provides a mechanistic underpinning for many imprinting/programming events. The development of clear mechanisms to explain the impact of early life exposure on later clinical endpoints would be of great value in predicting the outcomes of specific dietary interventions.

The scope of this review is to lay the foundation for the prioritisation of factors that determine the relative significance of different early exposures in terms of health outcomes. These outcomes should include both mortality and morbidity or quality of life. In this way, we can enumerate the most significant risk factors associated with early life events and define the causality, association and effects. In particular, the aim is to provide a guide for scientists, regulators and policymakers that will enable them to understand what is presently known, prioritise the research to address the gaps and effectively impact upon public health in an understandable and targeted way. Implicit in this analysis is a realisation of the social conditions that pertain to early life exposure and later health and consequences for funding prioritisation.

Enabling technologies and methodology

Biomarkers – what to measure

Biological systems are constantly in a state of flux both due to internal interactions and due to external exposures. In the context of diet and health, biomarkers are factors that reflect biological status at a given time point. For example, dietary stanols and sterols will reduce cholesterol levels in hypercholesterolaemic individuals. High cholesterol is a risk factor for CVD; therefore, blood cholesterol is a biomarker which reflects the increased risk of a disease outcome and may be affected by a specific dietary intervention. Most biomarkers measure biological response at a specific time point and hence the effect of a given intervention at that time point also. Finding relevant, predictive biomarkers related to programming or imprinting is not straightforward. The biologically relevant event remains significant long after the exposure responsible for it has ceased. Finding biomarkers that are not only predictive of a later effect but which, under the best circumstances, remain measurable once the initial exposure has ceased constitutes a major problem. This will become clear when we consider definitions of biomarkers, their validation and how they can best be used.

Biomarkers have conveniently been divided into sub-categories(Reference Branca, Hanley and Pool-Zobel12). The definitions are designed to allow for a simple categorisation but they also tend to describe the analytical methodology used. In addition, they introduce the concepts of surrogate biomarkers and predictivity.

Exposure

A biomarker of exposure can be defined as a chemical entity (or something derived directly from it) which is measurable in an exposed individual and reflects that exposure in a dose-dependent fashion. The simplest exposure biomarkers are the components of interest themselves. It is implicit in this type of measurement that the component is either unchanged by metabolism or can be converted into something that is directly measurable.
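The requirement that an exposure biomarker reflect exposure ‘in a dose-dependent fashion’ can be checked, in its simplest form, by asking whether measured levels rise with dose. The sketch below is a minimal illustration under stated assumptions: the dose and biomarker values are invented, a linear relation is assumed for simplicity (real dose-response relations may be saturating or show a threshold), and the function names are hypothetical.

```python
def least_squares_line(doses, levels):
    """Ordinary least-squares fit of biomarker level against dose.

    Returns (slope, intercept). Assumes a linear relation, which is a
    simplification made for this illustration.
    """
    n = len(doses)
    if n != len(levels) or n < 2:
        raise ValueError("need at least two paired observations")
    mean_dose = sum(doses) / n
    mean_level = sum(levels) / n
    sxx = sum((x - mean_dose) ** 2 for x in doses)
    if sxx == 0:
        raise ValueError("doses must not all be identical")
    sxy = sum((x - mean_dose) * (y - mean_level) for x, y in zip(doses, levels))
    slope = sxy / sxx
    intercept = mean_level - slope * mean_dose
    return slope, intercept


def is_monotonic_increasing(doses, levels):
    """True if the biomarker level never falls as dose increases."""
    ordered_levels = [level for _, level in sorted(zip(doses, levels))]
    return all(a <= b for a, b in zip(ordered_levels, ordered_levels[1:]))


# Invented example values (arbitrary units), not measurements from any study.
DOSES = [0.0, 1.0, 2.0, 3.0, 4.0]
LEVELS = [1.1, 2.9, 5.2, 6.8, 9.0]

if __name__ == "__main__":
    slope, intercept = least_squares_line(DOSES, LEVELS)
    print("slope:", round(slope, 2), "intercept:", round(intercept, 2))
    print("monotonic:", is_monotonic_increasing(DOSES, LEVELS))
```

A positive slope together with a monotonic rise is consistent with dose dependence in this toy data set, but it does not by itself validate a biomarker; analytical and biological validation are still required.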

Since programming implies some alteration in status, exposure biomarkers are only relevant to programming if they are associated with a known and measurable effect in a time period after exposure. The measurement taken early in life may provide useful information concerning the likelihood of a subsequent health endpoint once the exposure has ceased. Observational studies such as ALSPAC in which a specific exposure is correlated with later outcomes are valuable since they can lead to the development of hypothesis-driven research. Many such studies reveal correlations rather than causal associations and this is an inherent weakness of this type of investigation. The utility of biomarkers of exposure in the context of programming is where they are linked to mechanistic or associative knowledge of the consequences of that exposure. The great advantage of developing robust biomarkers of exposure is that they are measurable events at a time when it is possible to change the outcome by dietary manipulation. A typical exposure biomarker could include the levels of dioxins whose presence in the body is reflective of earlier exposure.

Susceptibility

A biomarker of susceptibility has traditionally largely encompassed genetic polymorphisms or variability that give rise to an increased susceptibility to an effect. This can be a direct (genetic) or an indirect effect. The implication is that a biomarker of genetic variability is a distinct and measurable entity in a gene which can be used to predict likely outcomes. Such genes are referred to as ‘imprinted’ genes.

More recently, susceptibility has grown to include epigenetic effects where there is a connection between certain genes, exposure to some environmental (including dietary) factors and later biological events. For example, folate deficiency affects epigenetic events and has been implicated in colon cancer susceptibility. This led to the conclusion that ‘the portfolio of evidence from animal, human and in vitro studies suggests that the effects of folate deficiency and supplementation on DNA methylation are gene and site specific, and appear to depend on cell type, target organ, stage of transformation and the degree and duration of folate depletion’(Reference Kim13). In these terms, susceptibility is not fixed but is measurable at a specific time. It therefore becomes a fluid and dynamic process which is affected by external factors and may change depending on when in the temporal sequence it is measured.

Effect

Biomarkers of effect comprise the most challenging group. In order for a biomarker of effect to be useful in the context of programming, it must fulfil some key criteria.

(1) It should be measurable at a time point when it is able to be altered by an external (dietary) component and that alteration should be reflective of an eventual changed health endpoint. Biomarkers of effect in general may be measured at a time that is distant from the exposure and can also be as a result of cumulative exposure (e.g. DNA damage). However, in order to be relevant to programming, an effect biomarker must be predictive of a future outcome. It should be measurable before and after the exposure event and should predict the biological outcomes.

(2) There must be a dose-related, mechanistic rationale between exposure (and a biomarker of exposure) and the biomarker of effect and to the health endpoint. This means that many biomarkers of effect are measuring surrogate endpoints.

(3) In common with all biomarkers, it must be validated both in terms of analytical methodology and biological integrity.

Many of the biomarkers of effect and exposure and their relationship to eventual health outcomes have been indicated in the first instance by prospective epidemiological studies. These have suggested associations between certain events and later onset of disease. While these are certainly useful in suggesting new potential areas for study, it is important to recognise that such studies are highly prone to being blurred by confounders, in which case the search for a mechanistic underpinning may be futile.

Specificity of human/animal models

Research into developmental programming of adult health and disease has made considerable progress over the past two decades but a clear consensus on the exact nutrients involved and their mechanisms remains to be established. This problem relates in part to the long-term developmental time frame in which changes in metabolism and cardiovascular function occur. At the same time, the discipline has had to contend with the dual challenges of integrating findings from lifelong epidemiological studies, which have largely focussed on birth weight and its relationship (or otherwise) with adult disease, and of incorporating these results into appropriate nutritional intervention studies using animal models. It is now established that there are many potential influences on offspring outcome, for example, maternal body composition, age and parity, genetic constitution, macro- and micronutrient intake and handling, the size, shape and number of offspring, sex and type of lactation.

In this section, we attempt to provide an overview of some of the major problems with both human and animal studies in conjunction with optimal experimental paradigms that may be utilised in future research aimed at elucidating the precise mechanisms by which changes in the maternal diet during reproduction can impact on the life time health of resulting offspring. Particular emphasis will be given to the applicability of the animal model that has been utilised and how similarities in the reproductive process may enable its best use in future examinations of the nutritional programming of adult health and disease.

Human models – historical and contemporary models of developmental programming

The majority of the early work conducted by David Barker and colleagues utilised data from historical cohorts born in the 1930s and benefited from meticulous birth records, often kept by the same individual over long periods of time(Reference Kim13). This enabled clear relationships to be established between the size, shape and placental mass of an infant at birth and hypertension later in life(Reference Barker, Bull and Osmond14). Subsequently, the recruitment of long-term historical records from Finland has enabled longitudinal studies on infant growth to be related to adult insulin sensitivity(Reference Barker, Osmond and Forsen15). More recently, the use of nutritional interventions of preterm formula in randomised controlled studies has emphasised the impact of inappropriate growth in early infancy on later disease risk(Reference Singhal16). What is apparent, however, from more contemporary studies and the rise in both childhood and adult obesity is the complexity of this process and how changes in both activity and dietary intake make the translation of findings from such studies into present lifestyle interventions very difficult. At the same time, the causes of the ongoing epidemic of obesity and the predicted increase in associated renal and CVD are multifactorial(Reference Keith, Redden and Katzmarzyk17) and these may be either exacerbated or reduced by early dietary exposure(Reference Williams, Kurlak and Perkins18).

Critical developmental stage

One consistent theme that is apparent from both historical and contemporary studies is that changes in nutrition at specific stages of pregnancy can have very different outcomes(Reference Symonds, Stephenson and Gardner19). This is not unexpected as different organs have critical and precise developmental stages which may be compromised, or enhanced, and, thereafter, be permanently set for the rest of that individual's life. Importantly, adaptations of this type appear to be dependent not only on the period in which the mother's diet is altered but also on the diet to which she is rehabilitated(Reference Reynolds, Godfrey and Barker20). One fundamental consideration is the self-limitation in food intake between early and mid gestation that occurs commonly as a result of nausea affecting approximately 90 % of women in the UK that may be directly linked to Western diets(Reference Pepper and Roberts21). The extent to which this directly relates to changes in placental function and/or fetal growth remains less clear but there is a need to match global prenatal and postnatal nutritional requirements so as to avoid accelerated growth (during pregnancy and early infant life)(Reference Gardner, Tingey and van Bon22) and the concomitant increased risk of later obesity and metabolic complications(Reference Symonds23). Importantly, however, intergenerational acceleration mechanisms do not appear to make an important contribution to levels of childhood BMI within the population(Reference Davey Smith, Steer and Leary24).

The lactational environment and postnatal development

A further area requiring consideration is the relationship between the maternal diet in late pregnancy, its impact on mammary gland development and milk production and whether the infant is breast-fed or formula fed(Reference Toschke, Martin and von Kries25). A higher macronutrient content of formula feed compared with breast milk, in conjunction with its fixed composition throughout a feed – unlike in breast-fed infants for whom milk composition changes with time – will impact on nutrient supply to the infant. It is, therefore, not only the short-term but also the long-term advantages of breast-feeding in terms of development of appetite regulation that should be considered in this regard(Reference Sievers, Oldigs and Santer26). The type of lactation also impacts on other behavioural aspects, including sleep–wake activity cycles(Reference Lee27), so that extended breast-feeding may not only be beneficial in developing countries but also in developed countries(Reference Heird28). Other confounding factors such as social class and smoking during pregnancy and lactation further determine postnatal diet(Reference Bogen, Hanusa and Whitaker29).

In summary, the relationship between nutrient supply and the key stages of development from the time of conception to weaning is highly complex and requires careful, in-depth consideration. It is necessary to conduct detailed animal experiments in a range of species in order to elucidate the mechanisms involved, be they epigenetic or related processes(Reference Symonds, Stephenson and Gardner30).

Classic animal models of nutritional programming

The main animal models that have been utilised to date to investigate the impact of maternal diet on long-term programming have been rats and sheep(Reference McMillen and Robinson31). These obviously have very different developmental patterns in not only the relationship between placental and fetal growth but also in maturity at birth and milk composition(Reference Prentice and Prentice32). The advantage of using rats is their very short gestational length. However, the type of diet they consume in the wild is very different to that fed to housed laboratory animals in which semi-purified diets are the norm. Such diets provide substantially greater nutrients to pregnant rats than to controls, and thus should be considered ‘pharmacological’ as opposed to ‘physiological’ and are well outside the normal distribution. For example, in the case of high-fat diets, these contain four times as much fat compared with control diets and are at risk of being deficient in micronutrients. Not surprisingly, when fed a diet so rich in fat, maternal food intake is reduced(Reference Taylor, McConnell and Khan33). In addition, rats exhibit coprophagia which has a substantial effect on nutrient flux and the ability to experimentally manipulate the intake of specific nutrients. It should be noted, however, that recent rodent models have been developed to overcome the issue of high fat content at the expense of other essential nutrients.

Appreciable placental growth continues up to term in the rat which is necessary in part to meet the much higher protein demands for fetal growth compared with that seen in human subjects or sheep(Reference Widdowson34). In large mammals, the maximal period of placental growth is early in pregnancy and is normally necessary to meet the increased fetal nutrient requirements in late gestation when fetal growth is exponential(Reference Symonds and Gardner35). Furthermore, rats produce large litters whereas sheep and human subjects normally produce only one (or two) offspring of comparable birth weight per pregnancy.

Methodological considerations and the interpretation of metabolic programming in rat and sheep models

There have been two major problems with rat studies with regard to assessment of the long-term cardiovascular outcomes. First, in many studies, blood pressure has only been measured using the tail-cuff technique in restrained and heated animals during the day when they are normally inactive(Reference Kwong, Wild and Roberts36). The results with this method differ considerably from those obtained from telemetry(Reference D'Angelo, Elmarakby and Pollock37). The tail-cuff method was originally validated and recommended for use only in hypertensive animals(Reference Bunag38). Modest differences in blood pressure recorded in normotensive rats are not always informative, which may explain why, in more recent studies, offspring born to dams fed a low-protein/high-carbohydrate diet through pregnancy show either no difference or a reduction in blood pressure when measured using either telemetry or an indwelling arterial catheter(Reference Fernandez-Twinn, Ekizoglou and Wayman39, Reference Hoppe, Evans and Moritz40). Comparable findings are seen in offspring born to dams in which food intake is reduced by 50 % through pregnancy compared with controls(Reference Brennan, Olson and Symonds41). Second, there appears to be a marked divergence in the long-term outcomes between sexes in rats that is primarily linked to the faster, as well as continued, growth of males compared with females(Reference Symonds and Gardner35).

Despite the fact that sheep are ruminants, they have proved valuable in enabling us to understand the nutritional and endocrine regulation of placento-fetal development. As in human subjects, the primary metabolic substrate for fetal metabolism is glucose, for which GLUT 1 is the main placental regulator(Reference Dandrea, Wilson and Gopalakrishnan42). Glucose is, thus, transported across the placenta by facilitated diffusion determined by its concentration in maternal blood(Reference Edwards, Symonds and Warnes43). In addition, not only does kidney development show a very similar ontogeny between sheep and human subjects, but the distribution of total nephrons across the adult population is also comparable(Reference Symonds, Budge, Mostyn and Watkins44). It is also feasible to obtain very consistent blood pressure recordings in the offspring using arterial cannulation while the animal is standing freely with continual access to its diet(Reference Gardner, Pearce and Dandrea45). At the same time, there is no discernible difference in blood pressure control or glucose regulation between sexes when measured in intact adult sheep(Reference Gardner, Tingey and van Bon22, Reference Symonds, Budge, Mostyn and Watkins44).

In summary, the use of both rats and sheep as models for examining the long-term effects of early nutritional interventions is valid and can produce consistent results. Extrapolation of these to the human situation must be carried out with care and with a clear understanding of the discrepancies of both model systems.

Other models

Preterm infants

Preterm-born babies may represent a human model of the third trimester of pregnancy in which the impact of the environment, including nutrition, can be studied. Although the precise nutritional needs of the fetus to support optimal growth velocity are not known, amino acid and long-chain PUFA (LCPUFA) supplementation, for instance, have been shown to improve early weight gain and body composition, respectively(Reference Valentine, Fernandez and Rogers46–Reference Innis, Adamkin and Hall48). However, exposure to other environmental factors associated with being born preterm, including a high risk of infection and other non-nutritional factors, complicates interpretation further.

Thus, suitable nutritional interventions may now be available that can examine the relevant short- and long-term outcomes in a consistent and validated manner to determine how contemporary diets impact on fat deposition, metabolic homoeostasis and cardiovascular control in animal models. The completion of such studies may enable us to determine the optimum nutrition in terms of quantity and quality. However, as Table 2 demonstrates, nutritional interventions, even those designed to show short-term effects, must control for potential confounders, which underlines the importance of sound intervention design in this growing field.

Table 2 Summary of studies into nutritional programming in which outcome measures are potentially confounded by a mismatch between groups in their composition of offspring from singleton and twin pregnancies

Nutritional epigenomics: how to make sense of what we measure

Slowing down or preventing the alarming progression of obesity worldwide represents a major public health challenge and a major health concern for future generations. The presence of a heritable or familial component of susceptibility to obesity is well established(Reference Rankinen and Bouchard49). However, apart from extremely rare cases of monogenic forms, most cases correspond to a multifactorial disorder(Reference Rankinen, Zuberi and Chagnon50). That said, obesity is a good example of epigenetics as these common forms are associated with a range of genetic and non-genetic familial factors, triggered by the ‘developmental origins of disease’ phenomenon and aggravated by environmental factors, as shown by the rate of discordance between monozygotic twins(Reference Bouchard, Tremblay and Despres51, Reference Fraga, Ballestar and Paz52). Epigenetic misprogramming during development is now widely thought to have a persistent effect on the health of the offspring and may even be transmitted to the next generation(Reference Gallou-Kabani and Junien53).

The term ‘epigenetics’ has been defined as ‘the causal interactions between genes and their products which bring phenotype into being’(Reference Waddington54). It is now used to refer to stably maintained mitotically (and potentially meiotically) heritable patterns of gene expression occurring without changes in DNA sequence. Mechanistically, this is achieved by a range of modifications, including DNA methylation and a complex repertoire of histone modifications (acetylation, methylation, phosphorylation, ADP ribosylation and ubiquitination), leading to chromatin remodelling. These processes add to the information of the underlying genetic code, conferring unique transcriptional instructions. Epigenetic instructions and machinery create a dynamic nuclear environment that specifies transcriptional states and comprises the essential components of heritable cellular memory, a hallmark of differentiation. Despite the sequencing of the human genome, studies of the finely tuned chromatin epigenetic networks, DNA methylation and histone modifications are required to determine how the same DNA sequence generates different cells, lineages, organs and ultimately the phenotype.

The mechanism of epigenetic manipulation

DNA methylation patterns and histone modifications are responsive to the environment throughout life. The epigenetic landscapes are affected by environmental and genetic influences such as embryo culture conditions, DNA methyltransferase 1 overexpression, hyperhomocysteinaemia and folate deficiency, whether acting before or during pregnancy or in the postnatal and post-weaning periods, and these effects persist into adulthood. Transient nutritional stimuli occurring at critical ontogenic stages may have long-lasting influences on expression of various genes by interacting with epigenetic mechanisms and altering the chromatin conformation and transcription factor accessibility.

Several types of sequences associated with specific epigenetic makeup are targets of a host of environmental factors that can trigger transiently or permanently disturbed chromatin architecture with altered epigenetic instructions – either at the somatic or at the germline levels – leading to aberrant patterns of gene expression.

For example,

(1) unique genes, e.g. the glucocorticoid receptor, or, more likely, specific subsets of unique genes belonging to different pathways or systems(Reference Weaver, Cervoni and Champagne55, Reference Champagne, Weaver and Diorio56);

(2) genes present as multiple copies, such as genes coding for ribosomal RNA(Reference Santoro57, Reference Oakes, Smiraglia and Plass58);

(3) whole genome epigenomic changes(Reference Pham, MacLennan and Chiu59–Reference Pogribny, Ross and Wise61).

However, little is yet known about the various replication/DNA synthesis-dependent and -independent epigenetic mechanisms underlying the stochastically, genetically and environmentally triggered epigenetic changes occurring during an individual's lifetime. They may result from replication-dependent, replication-independent or DNA repair events. Most of the epigenetic changes were thought to be coupled to DNA replication. Thus, epigenetic patterns need to be faithfully maintained during each cell cycle. In addition, the maintenance of genome integrity involves specific repair pathways(Reference Polo, Roche and Almouzni62). During the synthesis phase, this is achieved by duplication of chromatin structure in tight coordination with DNA replication. Histone synthesis and deposition onto DNA by chromatin assembly factors ensures efficient coupling with DNA synthesis(Reference Nakatani, Ray-Gallet and Quivy63). However, this faithful maintenance is not always required. Changes in epigenetic patterns are observed during the differentiation processes for several genes involved in development, cellular growth, differentiation, apoptosis or tissue- or sex-specific expression. For example, DNA demethylation modulates the activity of the mouse leptin promoter and of the insulin-sensitive GLUT4(Reference Yokomori, Tawata and Onaya64) during the differentiation of 3T3-L1 cells (a mouse embryonic fibroblast, adipose-like cell line)(Reference Yokomori, Tawata and Onaya64, Reference Yokomori, Tawata and Onaya65).

The evidence for epigenetic systems

Recently, links have been found between circadian rhythms and major components of energy homoeostasis, thermogenesis and hunger–satiety, rest–activity rhythms and the sleep–wake cycle(Reference Staels66, Reference Fontaine and Staels67). The rhythmic, circadian induction of a substantial proportion of genes by nuclear receptors, transcription factors and a network of clock genes, one of which is a histone acetyltransferase, is also controlled by chromatin remodelling. The associated circadian epigenetic patterns must be replication-independent, transient, sensitive to environmental cues and reversible. However, poorly adapted behaviour or lifestyle and desynchronised cues may disturb the modulation of gene expression. This may ultimately lead to persistent, aberrant and unphased ‘locking’ or ‘leakage’ of gene expression and to unadapted physiological, metabolic and behavioural responses of the organism to environmental changes. Thus, epimutations accumulate over time, increasing the ‘epigenetic burden’ and potentially leading to the onset of age- and/or environment-related diseases(Reference Issa68). The lifelong remodelling of our epigenomes by nutritional, metabolic and behavioural factors is the subject of the new field of ‘nutritional epigenomics’.

Trans-generational effects

It is now widely accepted that the developmental basis of adult diseases and the non-Mendelian transmission of acquired traits cannot be attributed solely to genetic mutations or a single aetiology(Reference Whitelaw and Whitelaw69). In addition, there is accumulating evidence that during the periconceptional, fetal and infant phases of life, exposure to environmental compounds or behaviours, placental insufficiency, inadequate maternal nutrition and metabolic disturbances can promote improper ‘epigenetic programming’, leading to susceptibility to various disease states or lesions in the first generation and sometimes in subsequent generations, i.e. transgenerational effects. While developmental programming may imply an altered uterine milieu perpetuating the disease risk through the cycle of mother-to-daughter transmission, with epigenomic alterations at the somatic level, there are also examples of transmission through the germline for both sexes and with sexually dimorphic effects(Reference Gluckman, Hanson and Beedle70, Reference Yang, Schadt and Wang71). An increasing number of animal models, designed to mimic human conditions, clearly involve an epigenetic and/or gene expression-based mechanism, and these have recently been reviewed(Reference Junien and Nathanielsz72).

DNA methylation alterations and/or histone modifications involve different types of sequences either at the somatic or at the germline levels. However, very little information is presently available to evaluate the actual impact, persistence, and dietary and therapeutic reversibility of these environmentally triggered transgenerational effects. It remains difficult to determine the conditions required for the persistence of transgenerational effects over several generations, even in the absence of the original stimulus. It also remains unclear whether the continuation of exposure over several generations leads to ‘locked’ epigenomic patterns. If this were the case, permanently methylated cytosines, with their higher rate of mutation, would give rise to genuine genetic mutations, thereby persisting in the genome. This would have important consequences for adaptation to new environments – coping with the worldwide epidemic of obesity, for instance.

Epigenetic studies in human subjects

Recent studies also suggest that part of the epigenetic component can depend on genetic changes: there is a genetic basis for epigenetic variability between individuals in stochastic events, in susceptibility to environment and diet, and in replication-dependent and replication-independent events. The finding that DNA methylation profiles can be associated with particular alleles is of considerable interest. Only a few studies in human subjects have identified associations between DNA sequence and epigenetic profiles. The present population-based approach to common diseases relates common DNA sequence variants to either disease status or incremental quantitative traits contributing to disease. This purely genetic approach is powerful and general, and Bjornsson et al. (Reference Bjornsson, Danielle Fallin and Feinberg73) have proposed an approach to incorporate epigenetic variation into genetic studies. Indeed, it could be that epigenetic variation (including at the epiallele and epihaplotype level) is a better predictor of disease risk, and of the late onset and progressive nature of complex diseases, than sequence-based approaches alone(Reference Abdolmaleky, Smith and Faraone74, Reference Petronis75).

Future studies in epigenetics

Depending on the nature and intensity of the insult, the critical spatiotemporal windows and the developmental or lifelong processes involved, these epigenetic alterations can lead to permanent changes in tissue and organ structure and function; alternatively, some of the gene- and/or tissue-specific changes can be reversed by means of appropriate epigenetic tools. Given several encouraging trials, prevention and therapy of age- and lifestyle-related diseases by individualised tailoring of optimal epigenetic diets or drugs are conceivable(Reference Egger, Liang and Aparicio76, Reference Ou, Torrisani and Unterberger77). However, these potential interventions will require intense efforts to unravel the complexity of these epigenetic, genetic, stochastic and environmental interactions and to evaluate their potential reversibility with minimal side effects. Given the significant and increasing proportion of women who are overweight and overfed when pregnant, paying attention to the over-nourished fetus is as important as investigating the growth-retarded one(Reference Muhlhausler, Adam and Findlay78). Improving the environment to which an individual is exposed during development may be as important as any other public health effort to enhance population health worldwide(Reference Gluckman, Hanson and Beedle70). It is clear that epigenetic alterations can no longer be ignored in evaluations of the causes of obesity and its associated disorders. There is a need for systematic large-scale epigenetic studies on obesity, employing appropriate strategies and techniques and appropriately chosen environmental factors during critical spatiotemporal windows in development.

Perinatal nutrition and CVD in adults

Background to diet effects

The possible impact of perinatal nutrition (including both in utero nutrition and lactation) on the development of CVD in adulthood is a very complex issue, mainly because the disease evolves very slowly before clinical manifestation. The progression of fatty streaks to coronary atherosclerosis and then to the stenosis that will provoke cardiac ischaemia or infarction may require 50 years. Moreover, the possible evolution of infarct (or hypertension) to cardiac hypertrophy and/or chronic heart failure may also take a long time. This cardiac disease ‘continuum’ has been related to several risk factors, including cholesterol levels, diabetes, obesity, sedentary lifestyle, coagulation, smoking, dietary practices and vascular dysfunction. In this context, it is difficult to evaluate the possible influence of the perinatal environment, including maternal factors (genotype, nutrition, disease state including dyslipidaemia, gestational diabetes and hypertension), fetal predisposition (genotype development) and lactation. It is obviously difficult to differentiate these early putative risk factors from those which develop later independently of the link from childhood to adulthood.

Epidemiological evidence of association

Hypertension

Birth triggers the transition from a low blood pressure system to a high blood pressure system. Intra-uterine undernutrition is known to affect later hypertension both in experimental animals and in human subjects and the mechanism has been investigated(Reference Franco, Akamine and Di Marco79). Intra-uterine undernutrition is associated with impaired nephrogenesis and glomerular hypertrophy. These developmental alterations induce a decreased filtration rate and a decreased plasma flow that contribute to increased blood pressure. Besides this alteration of kidney function, intra-uterine undernutrition also affects endothelial function. The mechanism involves a reduction of superoxide dismutase activity, an increase in NADPH oxidase activity and a decrease in nitric oxide synthase gene expression and activity. As a consequence, nitric oxide production is reduced, whereas oxygen free radical production is increased, resulting in altered relaxation of vascular smooth muscle cells.

The quality of lactation was also shown to affect blood pressure in human subjects. Diastolic and mean blood pressure at age 13–16 years were significantly lower in children previously fed banked breast milk than in children fed either term or preterm infant formulas. Moreover, the authors report that the results remained unchanged after adjustment for present BMI, sex and Na intake(Reference Singhal, Cole and Lucas80). However, this result was not confirmed in large-scale epidemiological studies. The Oxford Nutrition Survey investigated pregnant women recruited in 1942–4 to determine whether the wartime dietary rations were sufficient to prevent deficiencies. More than 50 years later, the offspring were recruited to explore the possible impact of maternal nutrition in pregnancy on CHD risk factors, including blood pressure, but the results provided no evidence to support the hypothesis that birth weight or undernutrition in pregnancy affects hypertension(Reference Huxley and Neil81). Similarly, the Boyd Orr Cohort, a cohort of children born 1937–9 and followed up as 732 adults aged 65 years, provided no evidence of an influence of breast-feeding on blood pressure(Reference Martin, Ebrahim and Griffin82).

Atherosclerosis

The Boyd Orr Cohort, comprising 700 adults from the 1937–1939 cohort (see above), was recently reinvestigated. The authors report that the breast-fed group displayed lower intima-media thickness of carotid arteries and a lower score for carotid and femoral plaques(Reference Martin, Ebrahim and Griffin82). The results remained unchanged after adjustment for socio-economic variables (including smoking and alcohol), and after adjustment for factors on the causal pathway (including blood pressure, adiposity, cholesterol, insulin resistance and C-reactive protein). Atherosclerosis is considered to begin very early in life, as shown in the Fate of Early Lesions in Children Study(Reference Napoli, Glass and Witztum83). This study showed that maternal hypercholesterolaemia during pregnancy induces changes in the fetal aorta that may determine the long-term susceptibility of children to fatty-streak formation and subsequent atherosclerosis. The human fetus displays arterial fatty streaks in utero. Although these fatty streaks regress after birth, they redevelop rapidly, independently of the cholesterol status of the child. The study reports that these fatty streaks are associated with greater arterial wall thickness (aorta and carotids) in the child than in the fetus. Investigations in animals(Reference Palinski, D'Armiento and Witztum84) showed similar results, the offspring of hypercholesterolaemic mothers displaying a significantly higher atherosclerosis lesion score at birth, at 6 months and at 12 months. Interestingly, when the mothers were treated with cholestyramine during pregnancy, the atherosclerosis lesion score in the offspring was significantly lower at birth and at 6 months and fully normalised at 12 months(Reference Huxley and Neil81). However, although several nutrients, including phytosterols, the SFA:PUFA ratio and n-3 PUFA, affect cholesterol transport in adults, the impact of these nutrients in early development has not been considered so far.

Myocardium and coronaropathies

The Helsinki Birth Cohort Study, including more than 4000 men born 1934–44, reported a significant correlation between the ponderal index at birth (term babies only), early growth and the standardised mortality ratios for CHD(Reference Eriksson, Forsen and Tuomilehto85). Low birth weight and low ponderal index were associated with increased CHD. After 1 year of age, rapid gain in weight and BMI increased the risk of CHD in those men with a low ponderal index at birth. Epidemiological studies can yield conflicting results. Investigations in the ‘Nurses' Health Study’ cohort suggested that breast-feeding may be associated with a reduction in risk of ischaemic CVD in adulthood(Reference Rich-Edwards, Stampfer and Manson86). Conversely, investigations of the ‘Caerphilly study’ cohort data provide little evidence of a protective influence of breast-feeding on CVD risk factors, incidence or mortality. Moreover, a possible adverse effect of breast-feeding on CHD incidence was reported, which may be related to the difficulty of differentiating the effects of lactation from the individual risk factors developed after weaning(Reference Martin, Ben-Shlomo and Gunnell87).
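
For readers unfamiliar with the ponderal index used above, the conventional definition (birth weight divided by the cube of birth length) can be written out as follows. The numerical values are hypothetical and chosen only to illustrate the arithmetic; they are not taken from the Helsinki cohort, and the units used in the cited study may differ.

```latex
% Ponderal index (PI): weight W in kg, crown-heel length L in m.
\[
  \mathrm{PI} = \frac{W}{L^{3}}
\]
% Illustrative (hypothetical) newborn: W = 3.4 kg, L = 0.50 m
\[
  \mathrm{PI} = \frac{3.4}{0.50^{3}} = \frac{3.4}{0.125} = 27.2\ \mathrm{kg/m^{3}}
\]
```

Unlike BMI, which scales weight by the square of length, the ponderal index scales by the cube, so a low value at birth indicates thinness relative to length.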

In the perinatal period, the myocardium is subjected to several key changes, some of which will induce a phenotype influencing cardiac function. Mitochondrial oxidative capacity and adrenergic regulation of cardiac function, both of which depend on membrane phospholipid homoeostasis, could be key parameters in the pathology that develops in later life.

Critical developmental stage

The question is then restricted to identifying the developmental stage during which perinatal nutrition has a specific impact on the development of CVD, independently of the known risk factors that develop during independent life. This impact may affect hypertension, atherosclerosis development and localisation, individual sensitivity to ischaemia and preconditioning, occurrence and severity of infarct, and development of cardiac hypertrophy and its evolution into chronic heart failure.

Several organ systems which can subsequently influence cardiovascular function via programming mechanisms have been documented in human subjects. These include the vessels (vascular compliance and endothelial function), the endocrine system (glucose and insulin metabolism), the muscles (glycolysis in exercise and insulin resistance), the kidneys (renin–angiotensin system) and the liver (cholesterol metabolism, fibrinogen and factor VII)(Reference Godfrey and Barker88). However, all these investigations referred specifically to perinatal dietary restriction and leave open the question of dietary quality. Moreover, the data on the heart itself are scarce and the role of metabolic programming that may affect cardiac metabolism and function is unclear.

Mitochondria oxidising capacity

The transition of the cardiomyocyte energy production system from exclusive glucose oxidation to fatty acid (FA) oxidation allows the large increase in cardiac energy production capacity required by independent life. This process is based on a large increase in mitochondrial mass controlled by several key factors, including mitochondrial DNA (always of maternal origin), which mainly encodes the electron transport chain, the transcriptional co-activator PGC-1α(89), which controls mitochondrial biogenesis, the development of FA oxidation pathways(Reference Huss and Kelly90, Reference Huss and Kelly91) and also cardiolipin (CL) synthesis through PPAR. Feeding dam rats a high-fat diet during pregnancy resulted in offspring which, at 6 months, displayed a significant decrease in mitochondria-related mRNAs (mainly cytochrome oxidase subunits, dicarboxylate carrier and mitochondrial genome)(Reference Taylor, McConnell and Khan33). CL is the key phospholipid in the function of the inner mitochondrial membrane, ensuring the cohesion of the electron transport chain and associated enzymes. At the cellular level, cardiac ischaemia is basically a crisis of energy production associated with an unbalanced ratio in substrate oxidation (excessive FA oxidation and decreased glucose oxidation)(Reference Grynberg92). This unbalanced metabolism contributes to the rapid oxidation of CL, whose content in mitochondrial membranes decreases, impairing energy production(Reference Paradies, Petrosillo and Pistolese93). The cardiac capacity to restore CL is partly controlled by the effect of LCPUFA on PPAR, and maternal diet was reported to influence the acyl composition of CL (and hence its sensitivity to oxidation) via both placental FA transfer and breast milk(Reference Berger, Gershwin and German94). However, chronic heart failure is associated with a decreased capacity of the cardiomyocytes to produce energy from FA, resulting in a reduced capacity to meet any increase in energy demand (the term ‘metabolic regression to fetal phenotype’ is often encountered in the literature)(Reference Huss and Kelly91). The efficiency of mitochondrial biogenesis and CL synthesis and the basal mitochondrial mass are key factors in cardiac pathophysiology.

Cardiac function

The perinatal period is also associated with the transition in the neurohumoral regulation of cardiac function to a large predominance of the β-adrenergic system. This system involves the internalisation of the receptor and its recycling to the sarcolemma (clathrin-mediated recycling), in which phosphatidylinositol-3-kinase plays a key role. The use of β-blockers in the treatment of cardiac disease, including coronaropathy and chronic heart failure, underlines the importance of basal β-adrenergic function. The development of this pathway is based on the membrane homoeostasis of phosphatidylinositol, the substrate of phosphatidylinositol-3-kinase. This enzyme is also involved in insulin signalling by triggering the translocation of GLUT4 to the membrane. The early development of the phosphatidylinositol-3-kinase pathway may thus impact on both neurohumoral regulation of cardiac function and insulin control of cardiac metabolism, since insulin, like leptin and adiponectin, contributes to myocardial substrate balance through the regulation of AMP-activated kinase.

Experimental evidence and mechanistic understanding

Several attempts have been made, using animal models, to investigate the cardiac consequences of in utero nutrition. The effect of maternal undernutrition during pregnancy was studied using sheep as a model system. Dong et al. (Reference Dong, Ford and Fang95) reported a change in the expression of insulin-like growth factor 1 (IGF-1), IGF-1R and IGF-2R in fetal myocardium, associated with ventricular enlargement in the fetus. Han et al. (Reference Han, Austin and Nathanielsz96) reported several alterations of gene expression, particularly the upregulation of several proteins that have been linked to cardiac hypertrophy and compensatory growth in several species including human subjects. Other authors reported in the same model that maternal undernutrition decreased immunoreactive type 1 and type 2 angiotensin-II receptors (AT1 and AT2) in the left ventricle of the fetuses without affecting gene transcription of the angiotensin-II receptors, and increased the transcription of mRNA for vascular endothelial growth factor, whereas immunoreactive vascular endothelial growth factor remained unchanged. Taken together, these data suggest a relationship between maternal undernutrition and later cardiac remodelling processes. The rat is another frequently used model. Studies have addressed the link between maternal dietary isoflavones and the sensitivity of offspring to dilatation and chronic heart failure, the influence of litter size on cardiac neurohumoral control, the influence of maternal nutrition on cardiomyocyte length, which affects left ventricle capacity in overload, and the effect of a high-fat diet in mothers on mitochondrial DNA expression.

The role of specific nutrients, notably dietary fat, possibly involved in metabolic imprinting of blood pressure has been investigated. In animal experiments (rats), Khan et al. (Reference Khan, Taylor and Dekou97) reported an increase in systolic and diastolic blood pressure in the adult offspring of dams fed a high-SFA diet during pregnancy. Interestingly, this increase was observed in female offspring but not in males. Investigations of the mechanism showed a significant alteration of endothelial function associated with a modified arterial lipid composition, which could result from a misbalanced SFA:PUFA ratio during early growth(Reference Ghosh, Bitsanis and Ghebremeskel98). Such an effect of LCPUFA was also reported in children. Forsyth et al. compared three groups of infants: those fed an infant formula, those fed the same formula supplemented with arachidonic acid and DHA, and those who were breast-fed (also providing arachidonic acid and DHA). After weaning, the children were allowed to return to a non-controlled diet and were re-examined 6 years later. The results showed a lower diastolic and mean blood pressure in those children who received LCPUFA during lactation, either by breast-feeding or by supplementation(Reference Forsyth, Willatts and Agostoni99). The mechanism is still unknown but could be related to the differential effect of each LCPUFA on blood pressure according to hypertension aetiology, as reported in animal models.

Conclusion and future directions

In conclusion, metabolic programming of the cardiovascular system cannot yet be considered as proven, in spite of several promising results. The range of animal investigations does not yet allow a trend in the relationship between perinatal nutrition and adult cardiac function and/or protection to be considered confirmed. Epidemiological studies remain unconvincing and often controversial. In addition, so far, they cover only the domains of maternal food restriction and breast-feeding. Between these two approaches, there is a strong need for mechanistic investigations to provide science-based information on the influence of maternal diet on offspring heart function and protection from later disease. These investigations will also have to include globally misbalanced dietary habits (low protein and high fat) as well as the influence of specific nutrients such as specific FA, glycaemic index, amino acids, salt and minerals, sterols or phytohormones.

Role of perinatal leptin in obesity risk/incidence in adults

Background to diet effects

The incidence of obesity, defined as a BMI >30 kg/m², is rapidly increasing all over the world(Reference Rodgers, Vaughan and Prentice100). The epidemic now affects young children and accumulating evidence suggests that, in part, the origin of the disease may be influenced by fetal development and early life. Nutritional and hormonal status during pregnancy and early life could interfere irreversibly with the development of the organs involved in the control of food intake and metabolism, particularly the hypothalamic structures responsible for the establishment of ingestive behaviour and the regulation of energy expenditure.
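
As a reminder of how the BMI threshold quoted above is applied, the standard definition (body weight divided by the square of height) is written out below. The worked values are hypothetical and serve only to illustrate the calculation against the 30 kg/m² cut-off used in this section.

```latex
% Body mass index (BMI): weight W in kg, height H in m.
\[
  \mathrm{BMI} = \frac{W}{H^{2}}
\]
% Illustrative (hypothetical) adult: W = 95 kg, H = 1.75 m
\[
  \mathrm{BMI} = \frac{95}{1.75^{2}} = \frac{95}{3.0625} \approx 31.0\ \mathrm{kg/m^{2}} > 30\ \mathrm{kg/m^{2}}
\]
```

This hypothetical adult would therefore fall within the definition of obesity used here.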

The mechanisms responsible for this developmental programming remain poorly documented. While obesity is a multi-factorial problem and is affected by several factors, recent research indicates that the adipokine leptin plays a critical role in this programming(Reference Cottrell and Ozanne101).

Leptin sources and biological functions

Leptin is produced essentially by the adipose tissue and its plasma levels reflect the fat reserves. There are also several extra adipose sources of leptin. The placenta in human subjects (but not in all species) produces leptin and constitutes an appreciable source of leptin for the fetus during pregnancy(Reference Laivuori, Gallaher and Collura102). The mammary gland is also able to produce leptin, particularly during the early phase of lactation(Reference Smith-Kirwin, O'Connor and De Johnston103). In addition, the mammary gland is involved in the transport of leptin from the mother to the milk, which is an additional source of leptin for the newborn(Reference Uysal, Onal and Aral104). The immature gastrointestinal tract may allow leptin to enter the circulation, but it is likely that leptin may have considerable effects locally and play a role in the maturation of the epithelial lining of the gut. There are leptin receptors present in the gastrointestinal tract, which suggests a localised function. Although initially there may be leptin leakage to the circulation because of gastrointestinal immaturity, access of leptin to the circulation may be limited.

Leptin is involved in an extensive number of biological functions and several isoforms of the receptor have been identified in different organs. The best-known effects are those exerted at the hypothalamic level where the long form of the leptin receptor is predominantly expressed and exerts a pivotal role in the regulation of food intake. In peripheral organs, the short form of the leptin receptor is the dominant form expressed and its biological effects include cell proliferation and cell differentiation in adipose tissue, pancreas, liver, kidney, arteries and immune cells.

Leptin and regulation of food intake

At the hypothalamic level, after crossing the blood–brain barrier, leptin interacts with a complex neuronal network integrating a wide range of nervous, nutritional and hormonal signals. Leptin interacts primarily with the arcuate nucleus, where it inhibits the activity of neurons expressing orexigenic peptides (neuropeptide Y and Agouti-related protein) connected to the lateral hypothalamic nucleus, which is recognised as a major controlling factor in hunger. Leptin also stimulates the activity of anorexigenic neurons expressing pro-opiomelanocortin connected to the ventromedial nucleus, recognised as the centre of satiety. The different hypothalamic nuclei are interconnected via a complex neuronal network with paraventricular and dorsomedial nuclei and the integration of all these stimuli determines the food intake behaviour(Reference Friedman and Halaas105).

Epidemiological evidence for association

There is little epidemiological evidence for an association between circulating leptin and obesity. To summarise briefly, there is a rare leptin gene mutation that causes obesity in early childhood. Small-for-gestational-age and preterm infants have lower leptin levels. Family history of obesity has been correlated with high umbilical cord levels of leptin.

Critical developmental stage

Leptin stimulation results in a decrease in food intake, and it was initially hoped that exogenous leptin therapy might induce satiety and weight loss in obese human subjects. Unfortunately, it has been found that obesity is often associated with ‘leptin resistance’, which is progressively established during ingestion of a hypercaloric diet and associated with an increase in serum leptin levels. The mechanisms underlying leptin resistance remain a matter of debate, but the two hypotheses that have received the most attention are a failure of circulating leptin to reach its target cells in the brain and a blockade of leptin signalling by activation of suppressor of cytokine signalling 3 (SOCS3) or specific phosphatases (PTP1B). An alternative hypothesis suggests that leptin resistance may in fact be programmed during fetal and neonatal life and may be the result of an altered development of the neuronal circuitry involved in food intake regulation.

The neuronal network is established during hypothalamic development, which occurs postnatally in rodents. In the mouse, dense neuronal fibres originating from the arcuate nucleus and reaching the lateral and dorsomedial hypothalamus and the paraventricular nucleus are progressively established between days 6 and 16. This neuronal network develops during the same period in which a dramatic increase in blood leptin level occurs, the suggested origin of which is adipose tissue. This change in leptin levels is not related to food intake regulation, since the body weight of the animals increases rapidly during this period. Bouret, in the group of Simerly(Reference Bouret, Draper and Simerly106, Reference Bouret and Simerly107), clearly demonstrated that leptin at this period exerts a potent neurotrophic action. First, these authors observed that ob/ob mice, genetically deficient in leptin, have an altered hypothalamic development characterised by a dramatic decrease in neuronal fibre density in the hypothalamic structures. Secondly, they elegantly demonstrated that leptin administration to these animals during the early postnatal period restored neuronal organisation of hypothalamic circuits in terms of fibre density in the paraventricular nucleus. Finally, hypothalamic connections in the diet-induced obese rat model were shown to be permanently disrupted(Reference Bouret, Gorski and Patterson108).

Experimental evidence and mechanistic understanding

Several animal models have clearly shown that either severe undernutrition during pregnancy or placental deficiency induces intra-uterine growth retardation (IUGR), leading to low birth weight, which is associated with low leptin levels. It is also well established that IUGR newborns show an increased susceptibility to develop obesity and metabolic syndrome when exposed to a high-caloric diet during later life. One possible explanation is that leptin deficiency in IUGR causes improper programming. Supporting this hypothesis, it has been recently demonstrated(Reference Vickers, Gluckman and Coveny109) that neonatal leptin treatment of IUGR pups reverses the developmental programming induced by severe maternal undernutrition and restores a normal adult phenotype. To extend these findings to normal birth weight animals, and taking advantage of the recent development of a specific leptin antagonist(Reference Solomon, Niv-Spector and Gonen-Berger110), we have recently analysed the consequences of blocking the postnatal leptin surge in newborn rats(Reference Attig, Solomon and Taouis111). Leptin mutants (L39A/D40A/F41A/I42), which bind to the leptin receptor with an affinity identical to that of wild-type leptin but are completely devoid of agonistic activity, were administered during early postnatal days 2–13. Three months later, the animals were given a leptin challenge, and those injected with the leptin antagonist early in life were leptin resistant. When a high-energy diet was given to these animals, they showed a higher susceptibility and a greater increase in body weight than control animals. At 8 months, the animals presented a higher adiposity associated with hyperleptinaemia. These data demonstrate that perinatal leptin in a normal situation plays a crucial role in determining the capacity of the animal to respond to leptin later in life and in protecting the newborn against the adverse effects of a hypercaloric diet.

Several studies have documented the evolution of leptin levels during pregnancy in normal and IUGR babies. It has been shown that leptin levels increase during late fetal life and that, at birth, leptin levels are lower in IUGR babies than in normal weight newborns(Reference Jaquet, Leger and Levy-Marchal112). The chronology of the development of the different organs varies between animal species. In contrast to the rodent, the major part of neuronal development occurs before birth in the human(Reference Grayson, Allen and Billes113). However, it is evident that a relative neuronal plasticity remains after birth, and this could be even more so in the case of IUGR, where an impairment in the development of several organs (particularly the kidney) is generally observed. As mentioned earlier, the mammary gland is able to produce leptin and also to transfer leptin from the mother's blood to milk. An interesting possibility is that leptin absorbed by the newborn via the milk may be an important factor participating in the final maturation of different organs such as the intestine and also the hypothalamic structures involved in food intake regulation. Several studies seem to support this hypothesis. Indeed, it has been reported that breast-fed infants have higher serum leptin levels than formula-fed infants(Reference Savino, Nanni and Maccario114) and that breast-fed infants may show a decreased risk of developing obesity(Reference von Kries, Koletzko and Sauerwald115).

Experimentally, it has been demonstrated that the intake of physiological doses of leptin during lactation in rats prevents obesity in later life(Reference Pico, Oliver and Sanchez116). All these facts support the idea that milk leptin may play a favourable role in developmental programming and constitutes a credible candidate to explain, at least partially, the protective effect of breast-feeding against obesity. This is an interesting subject for future investigations. In mice, the leptin responsible for the development of the hypothalamic food intake circuitry is thought to originate from the newborn's own adipose tissue, despite its very small quantity.

Conclusions and future directions

All the data summarised in this paper suggest that leptin constitutes a key hormonal player during the perinatal period in the prevention of unfavourable developmental programming. Additional basic research is necessary to establish the biological mechanisms involved. In addition to classical rodent models, species such as sheep or pigs may be useful as models of the human situation. Indeed, as in human subjects, the major part of neuronal hypothalamic development occurs before birth in these two species, although it should be noted that, in the human, major synaptic proliferation occurs after birth. Epigenetic modulations are probably involved in this developmental process, and the genes implicated remain to be established.

Different strategies could be envisaged to optimise the effects of leptin during developmental programming. Particular attention must be given to nutrition during pregnancy. Development of well-adapted diets associated with optimised maternal leptin levels would be beneficial.

During the postnatal period, several months of breast-feeding must be encouraged, particularly in IUGR babies. Opportunities for research on the optimisation of the postnatal diet during the critical developmental stage could potentially focus on leptin and its addition to infant formula, for instance.

Perinatal nutrition and type 1 diabetes in adults

Background to diet effects

Type 1 diabetes (T1D), a chronic inflammatory disease caused by a selective destruction of the insulin-producing β-cells of the pancreas, is one of the most common and serious chronic diseases in children(Reference Green and Patterson117, Reference Onkamo, Vaananen and Karvonen118). The incidence is increasing by 3 % per year, particularly in young children and in developed countries(Reference Onkamo, Vaananen and Karvonen118).

T1D is preceded by a pre-clinical phase characterised by autoimmunity against pancreatic islets(Reference Eisenbarth119). A genetic susceptibility for developing islet autoimmunity and T1D is well documented and an environmental influence is assumed(Reference Atkinson and Eisenbarth120).

Epidemiological evidence for association

Over the last 15 years, several groups have initiated prospective studies from birth investigating the development of islet autoimmunity and diabetes(Reference Ziegler, Hillebrand and Rabl121–Reference Honeyman, Coulson and Stone124). These studies provide an opportunity to investigate the factors that are associated with the development of islet autoimmunity and progression to T1D. Findings from these studies have significantly contributed to our present understanding of the pathogenesis of childhood diabetes. However, the exact aetiology and pathogenesis of T1D are still unknown.

Genetic factors influencing the development of islet autoimmunity and type 1 diabetes

Children with a first-degree relative with T1D have a more than tenfold higher risk of developing T1D, and the risk increases further if both parents are affected. Genetic variability in the human leucocyte antigen region explains approximately 50 % of the familial clustering(Reference Risch125, Reference Davies, Kawaguchi and Bennett126); other genes have also been identified as providing more modest contributions to risk(Reference Davies, Kawaguchi and Bennett126, Reference Cox, Wapelhorst and Morrison127). The concordance of T1D between monozygotic twins is up to 50 %, whereas between dizygotic twins it is only 10 %(Reference Kyvik, Green and Beck-Nielsen128). Although such differences in the concordance rates between identical and non-identical twins clearly underline the impact of genes on the development of T1D, they also show that genetic susceptibility alone cannot be the ultimate cause of the disease and that environmental factors seem to modify the risk for islet autoimmunity and T1D.

Environmental factors influencing the development of islet autoimmunity

Prospective studies from birth have demonstrated that islet autoimmunity occurs very early in life. Around 4 % of offspring of parents with T1D in the BABYDIAB (genetic risk of developing T1D) study and about 6 % of genetically at-risk infants from the general population in the Finnish Diabetes Prediction and Prevention study have developed islet autoantibodies by age 2(Reference Hummel, Bonifacio and Schmid129, Reference Kimpimaki, Kulmala and Savola130). Children who develop autoantibodies within the first 2 years of life are those who most often develop multiple islet autoantibodies and progress to T1D in childhood(Reference Hummel, Bonifacio and Schmid129). These findings imply that environmental factors encountered before age 2 may be important for the development of islet autoimmunity. Candidate environmental factors suspected of influencing the risk for islet autoimmunity in genetically susceptible individuals are dietary factors and factors associated with maternal diabetes.

Critical developmental stage

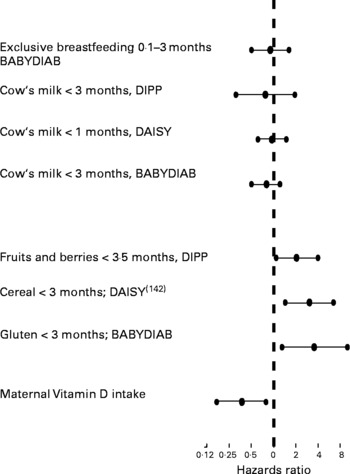

Several dietary factors have been proposed to be associated with the development of islet autoimmunity and T1D, but most of the research in this field has yielded conflicting results. There are only a few prospective case–control, cohort and human-intervention studies that can be used for hypothesis testing. However, the dietary factors that have already been related to the development of islet autoimmunity and T1D were examined in the following prospective studies (Fig. 3). Recently, weight gain in early life was proposed to predict the risk of islet autoimmunity in children with a first-degree relative with T1D(Reference Couper, Beresford and Hirte131).

Fig. 3 Studies of the hazard ratios for risk of T1D

Some investigators have suggested that breast-feeding may protect against T1D(Reference Sadauskaite-Kuehne, Ludvigsson and Padaiga132), whereas early introduction of supplementary milk feeding may promote the development of islet autoantibodies and T1D(Reference Vaarala, Knip and Paronen133). Four prospective studies in at-risk neonates have not demonstrated an increased risk for developing islet autoantibodies in children who were not breast-fed and received cow's milk (CM) proteins early in life(Reference Couper, Steele and Beresford134–Reference Virtanen, Kenward and Erkkola137). However, recent results suggest that an enhanced humoral immune response to various CM proteins in infancy is seen in a subgroup of those children who later progress to T1D. The authors suggest that a dysregulated immune response to oral antigens may be an early event in the pathogenesis of T1D(Reference Luopajarvi, Savilahti and Virtanen138).

Another candidate factor is the early introduction of solid food into an infant's diet. Two recent prospective studies suggested that the risk of developing islet autoimmunity is increased in children who were exposed to cereal proteins, and particularly gluten, early in life(Reference Ziegler, Schmid and Huber139, Reference Norris, Barriga and Klingensmith140). The BABYDIAB study looked at the impact of food supplementation during the first 3 months of life on the development of islet autoimmunity in offspring of parents with T1D. Children who received gluten-containing supplements during the first 3 months of life had a significantly higher risk of developing islet autoimmunity than children who received non-gluten-containing solid food or CM-based supplements, or who were breast-fed only. The Finnish Diabetes Prediction and Prevention study showed that children introduced to fruits and berries before 4 months of life had a significantly higher risk of developing islet autoimmunity than children who received solid food supplements later in life(Reference Virtanen, Kenward and Erkkola137).

Dietary factors that have been proposed to protect against islet autoimmunity are vitamin D and n-3 fatty acids. The prospective Diabetes Autoimmunity Study in the Young showed that maternal dietary intake of vitamin D was significantly associated with a decreased risk of islet autoimmunity appearing in offspring who were at increased risk for T1D (including a dose–response effect). Neither vitamin D intake via supplements nor n-3 and n-6 fatty acid intake via supplements during pregnancy was associated with the appearance of islet autoimmunity in offspring(Reference Fronczak, Barón and Chase141, Reference Zipitis and Akobeng142).

Experimental evidence and mechanistic understanding

There are several ongoing dietary intervention trials in newborns at high risk for T1D:

Trial to reduce type 1 diabetes in the genetically at risk (TRIGR)

To study the impact of CM proteins in an infant's diet on the development of islet autoimmunity and T1D, an interventional trial, the Trial to Reduce Type 1 Diabetes in the Genetically at Risk (TRIGR), is presently ongoing in children who have an increased genetic risk and a first-degree relative with T1D(Reference Sadeharju, Hamalainen and Knip143). The trial has a double-blind, prospective, placebo-controlled intervention protocol, comparing casein hydrolysate with a conventional CM-based formula. TRIGR is an international multicentre study with seventy-eight clinical centres in fifteen countries. The recruitment of families was completed at the end of 2006. Altogether, 2162 children were included in the intervention study and will be followed up until the age of 10 years.

BABYDIET

The Germany-wide BABYDIET study, an interventional trial, has been initiated to investigate whether delaying dietary gluten introduction influences the development of islet autoimmunity in newborns who are at genetically high risk for T1D and have a first-degree relative with T1D(Reference Schmid, Buuck and Knopff144). Children participating in BABYDIET are randomised to one of two dietary intervention groups that introduce gluten-containing cereals either at age 6 months, as recommended by the German National Committee for the Promotion of Breastfeeding, or at age 12 months (intervention group). Recruitment was finished in 2006; altogether, 150 children have been enrolled and will be followed up until the age of 10 years. The first results are expected in 2010.

The nutritional intervention to prevent type 1 diabetes pilot study

The nutritional intervention to prevent type 1 diabetes study has been initiated to investigate whether DHA supplementation during pregnancy and early childhood will prevent the development of islet autoimmunity in children at high genetic risk for T1D and with a family history of T1D. Eligible participants (pregnant women or infants) will be randomised to one of two study groups: a DHA group (intervention) or a control group receiving a placebo substance. During pregnancy and while breast-feeding, infants will receive the study substance indirectly through their mother (either via the placenta or via the breast milk). Infants who are either partially or exclusively formula fed will receive DHA more directly through the study formula. By 6–12 months of age, all the infants will get the supplement added to solid foods. Recruitment of families for the nutritional intervention to prevent type 1 diabetes study is still ongoing.

Maternal transfer of islet autoantibodies

The influence of maternally transmitted islet autoantibodies on the development of islet autoimmunity and T1D has been examined both in animal models and in human subjects. In the non-obese diabetic (NOD) mouse, removal of maternally transmitted Ig prevented spontaneous diabetes in offspring mice, suggesting that maternal antibodies present during gestation, including islet autoantibodies, could be important factors in the pathogenesis of β-cell destruction(Reference Greeley, Katsumata and Yu145). Further studies in mice looking specifically at whether maternal insulin antibodies influence diabetes development reported conflicting findings(Reference Koczwara, Ziegler and Bonifacio146, Reference Melanitou, Devendra and Liu147).